## Bar Chart: Jailbreak Evaluations

### Overview

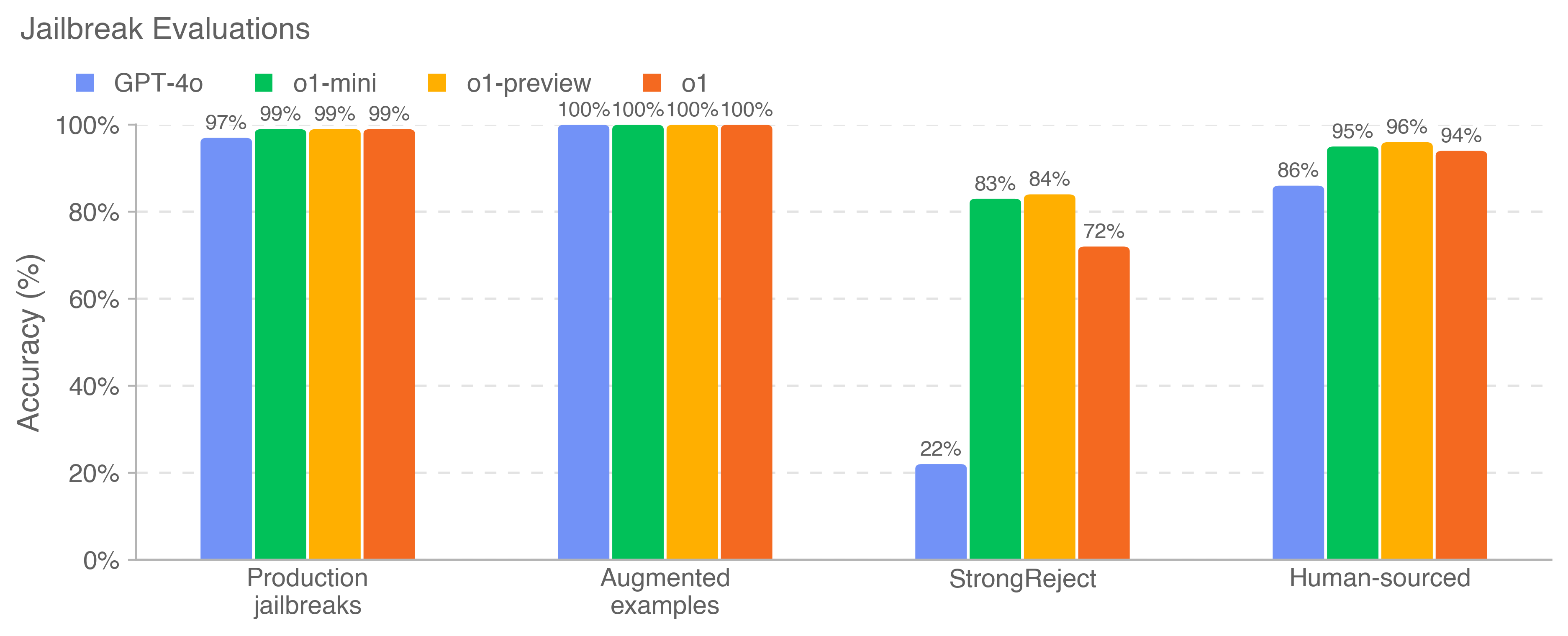

The image is a bar chart comparing the accuracy of four models (GPT-4o, o1-mini, o1-preview, and o1) against four types of jailbreak attempts: Production jailbreaks, Augmented examples, StrongReject, and Human-sourced. The y-axis shows accuracy (%) from 0% to 100%.

### Components/Axes

* **Title:** Jailbreak Evaluations

* **X-axis:** Categories of jailbreak attempts: Production jailbreaks, Augmented examples, StrongReject, Human-sourced.

* **Y-axis:** Accuracy (%), ranging from 0% to 100% in increments of 20%.

* **Legend:** Located at the top of the chart.
    * Blue: GPT-4o
    * Green: o1-mini
    * Yellow: o1-preview
    * Orange: o1

### Detailed Analysis

The chart presents the accuracy of each model against different jailbreak attempts.

* **Production jailbreaks:**
    * GPT-4o (Blue): 97%
    * o1-mini (Green): 99%
    * o1-preview (Yellow): 99%
    * o1 (Orange): 99%

* **Augmented examples:**
    * GPT-4o (Blue): 100%
    * o1-mini (Green): 100%
    * o1-preview (Yellow): 100%
    * o1 (Orange): 100%

* **StrongReject:**
    * GPT-4o (Blue): 22%
    * o1-mini (Green): 83%
    * o1-preview (Yellow): 84%
    * o1 (Orange): 72%

* **Human-sourced:**
    * GPT-4o (Blue): 86%
    * o1-mini (Green): 95%
    * o1-preview (Yellow): 96%
    * o1 (Orange): 94%
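To make the figures above easier to compare, the following minimal sketch encodes the transcribed values and computes each model's unweighted mean accuracy across the four categories. Note that the chart itself does not report a mean; this aggregate, along with the names `ACCURACY`, `MODELS`, and `mean_accuracy_per_model`, is a hypothetical illustration that assumes every category carries equal weight.

```python
# Accuracy values (%) transcribed from the bar chart above.
# The equal-weight mean computed below is an illustrative assumption,
# not a metric reported by the chart.
ACCURACY = {
    "Production jailbreaks": {"GPT-4o": 97, "o1-mini": 99, "o1-preview": 99, "o1": 99},
    "Augmented examples": {"GPT-4o": 100, "o1-mini": 100, "o1-preview": 100, "o1": 100},
    "StrongReject": {"GPT-4o": 22, "o1-mini": 83, "o1-preview": 84, "o1": 72},
    "Human-sourced": {"GPT-4o": 86, "o1-mini": 95, "o1-preview": 96, "o1": 94},
}

MODELS = ["GPT-4o", "o1-mini", "o1-preview", "o1"]


def mean_accuracy_per_model(data):
    """Return each model's unweighted mean accuracy across all categories."""
    return {
        model: sum(scores[model] for scores in data.values()) / len(data)
        for model in MODELS
    }


if __name__ == "__main__":
    # Print one line per model, e.g. "GPT-4o       76.25%".
    for model, mean in mean_accuracy_per_model(ACCURACY).items():
        print(f"{model:<11} {mean:6.2f}%")
```

Under this equal-weight assumption, GPT-4o's mean (76.25%) is pulled down almost entirely by its StrongReject score.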

### Key Observations

* All four models reach 100% accuracy against Augmented examples.

* GPT-4o scores far lower against StrongReject jailbreak attempts (22%) than the other models (72% to 84%).

* All models perform well against Production and Human-sourced jailbreaks, with accuracy of 86% or higher in both categories.

### Interpretation

The data suggests that GPT-4o is considerably more vulnerable to StrongReject jailbreak attempts (22% accuracy) than o1-mini, o1-preview, and o1 (72% to 84%). All four models fully resist Augmented examples. Accuracy against Production and Human-sourced jailbreaks is high across all models (86% to 99%), indicating strong resistance to these attack types. StrongReject is the category where the models differ most; it is also the only category where o1 (72%) trails o1-mini and o1-preview (83% and 84%) by a wide margin.
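One way to check the claim that StrongReject is the main differentiator is to compute, for each category, the gap in percentage points between the best and worst model. The sketch below does this using the values transcribed from the chart. The helper name `spread_per_category` is hypothetical, and max-minus-min is just one simple choice of spread measure.

```python
# Accuracy values (%) transcribed from the bar chart.
ACCURACY = {
    "Production jailbreaks": {"GPT-4o": 97, "o1-mini": 99, "o1-preview": 99, "o1": 99},
    "Augmented examples": {"GPT-4o": 100, "o1-mini": 100, "o1-preview": 100, "o1": 100},
    "StrongReject": {"GPT-4o": 22, "o1-mini": 83, "o1-preview": 84, "o1": 72},
    "Human-sourced": {"GPT-4o": 86, "o1-mini": 95, "o1-preview": 96, "o1": 94},
}


def spread_per_category(data):
    """Return the best-minus-worst accuracy gap (percentage points) per category."""
    return {
        category: max(scores.values()) - min(scores.values())
        for category, scores in data.items()
    }


if __name__ == "__main__":
    spreads = spread_per_category(ACCURACY)
    # List categories from the widest gap to the narrowest.
    for category, gap in sorted(spreads.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{category:<22} {gap:3d} pts")
```

On these values, StrongReject shows a 62-point gap, while the other categories range from 0 points (Augmented examples) to 10 points (Human-sourced).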