## Bar Chart: Average Number of `<thinking>` Tokens by Question Difficulty and Model Size

### Overview

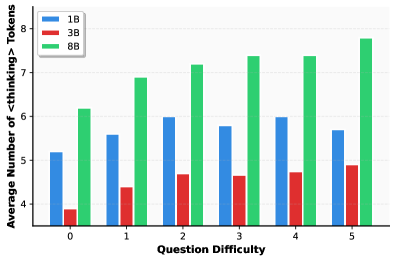

This is a grouped bar chart comparing the average number of `<thinking>` tokens generated by three different model sizes (1B, 3B, and 8B parameters) across six levels of question difficulty (0 through 5). The chart illustrates how token usage varies with both model scale and task complexity.

### Components/Axes

* **Chart Type:** Grouped Bar Chart.

* **X-Axis (Horizontal):** Labeled **"Question Difficulty"**. It has six discrete categories marked with the integers: `0`, `1`, `2`, `3`, `4`, `5`.

* **Y-Axis (Vertical):** Labeled **"Average Number of `<thinking>` Tokens"**. Major tick marks are labeled at every integer from 4 to 8 (4, 5, 6, 7, 8). The 3B bar at difficulty 0 (~3.9) falls just below the lowest labeled tick.

* **Legend:** Located in the **top-left corner** of the chart area. It defines the three data series:
  * **Blue square:** `1B`
  * **Red square:** `3B`
  * **Green square:** `8B`

* **Data Series:** For each difficulty level on the x-axis, there is a cluster of three bars, one for each model size, ordered left-to-right as 1B (blue), 3B (red), 8B (green).

### Detailed Analysis

The following table reconstructs the approximate data points from the chart. Values are visual estimates read against the y-axis scale, so differences of about 0.1 token should not be over-interpreted.

| Question Difficulty | 1B (Blue) Avg. Tokens | 3B (Red) Avg. Tokens | 8B (Green) Avg. Tokens |
| :--- | :--- | :--- | :--- |
| **0** | ~5.2 | ~3.9 | ~6.2 |
| **1** | ~5.6 | ~4.4 | ~6.9 |
| **2** | ~5.7 | ~4.5 | ~7.2 |
| **3** | ~5.8 | ~4.6 | ~7.4 |
| **4** | ~6.0 | ~4.7 | ~7.4 |
| **5** | ~5.7 | ~4.9 | ~7.8 |

**Trend Verification per Data Series:**

* **8B (Green):** The green bars show a clear **upward trend**. Starting at ~6.2 for difficulty 0, the height rises at nearly every step. It levels off between difficulties 3 and 4 (both ~7.4) and reaches its peak of ~7.8 at difficulty 5.

* **1B (Blue):** The blue bars show a **general upward trend with a slight dip at the end**. Values rise from ~5.2 (difficulty 0) to a peak of ~6.0 (difficulty 4), then decrease slightly to ~5.7 at difficulty 5.

* **3B (Red):** The red bars show a **gradual, consistent upward trend**. They start at the lowest value in the chart, ~3.9 (difficulty 0), and rise at every step. The largest jump is between difficulties 0 and 1, and the series ends at ~4.9 (difficulty 5).
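
As a rough cross-check of the trend descriptions above, the sketch below encodes the estimated values from the table and labels each one-level step as up, down, or flat. The dictionary name `AVG_THINKING_TOKENS`, the helper `step_directions`, and the 0.05-token tolerance are illustrative choices, not part of the original analysis. The results are only as accurate as the visual estimates they are computed from.

```python
# Approximate bar heights transcribed from the table above (visual estimates).
AVG_THINKING_TOKENS = {
    "1B": [5.2, 5.6, 5.7, 5.8, 6.0, 5.7],
    "3B": [3.9, 4.4, 4.5, 4.6, 4.7, 4.9],
    "8B": [6.2, 6.9, 7.2, 7.4, 7.4, 7.8],
}

# Assumed tolerance: the estimates are read to ~0.1 token, so steps smaller
# than half of that are treated as flat rather than as real changes.
TOLERANCE = 0.05


def step_directions(values, tol=TOLERANCE):
    """Label each step from difficulty d to d + 1 as 'up', 'down', or 'flat'."""
    labels = []
    for prev, curr in zip(values, values[1:]):
        delta = curr - prev
        if delta > tol:
            labels.append("up")
        elif delta < -tol:
            labels.append("down")
        else:
            labels.append("flat")
    return labels


for model, values in AVG_THINKING_TOKENS.items():
    print(model, step_directions(values))
# 1B ['up', 'up', 'up', 'up', 'down']
# 3B ['up', 'up', 'up', 'up', 'up']
# 8B ['up', 'up', 'up', 'flat', 'up']
```

With these estimates, the only decrease is the 1B step from difficulty 4 to 5. The 8B series is flat between difficulties 3 and 4.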

### Key Observations

1. **Consistent Hierarchy:** At every difficulty level, the 8B model (green) uses the most tokens, followed by the 1B model (blue), with the 3B model (red) using the fewest. This order is maintained without exception.

2. **Scale vs. Token Usage:** Token count is not even monotonic in model size (1B, 3B, 8B). The 3B model consistently uses fewer tokens than the smaller 1B model, while the 8B model uses the most.

3. **Impact of Difficulty:** All three models show an overall increase in average thinking tokens as question difficulty increases from 0 to 5, suggesting more complex problems require more internal processing (as measured by token generation).

4. **Anomaly at Difficulty 5 for 1B:** The 1B model's token count peaks at difficulty 4 and then drops at difficulty 5, breaking its upward trend. This is the only instance where a model's token count decreases at a higher difficulty level.
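
Observations 1 and 2 can also be checked directly from the table. The sketch below ranks the three models at each difficulty level and reports how far the 1B model sits above the 3B model. The values are the visual estimates from the table, and `AVG_THINKING_TOKENS` and `ranking_at` are illustrative names rather than anything from the source.

```python
# Approximate bar heights transcribed from the table above (visual estimates).
AVG_THINKING_TOKENS = {
    "1B": [5.2, 5.6, 5.7, 5.8, 6.0, 5.7],
    "3B": [3.9, 4.4, 4.5, 4.6, 4.7, 4.9],
    "8B": [6.2, 6.9, 7.2, 7.4, 7.4, 7.8],
}


def ranking_at(difficulty):
    """Return model labels ordered from most to fewest average thinking tokens."""
    return sorted(
        AVG_THINKING_TOKENS,
        key=lambda model: AVG_THINKING_TOKENS[model][difficulty],
        reverse=True,
    )


for d in range(6):
    # Gap between the smaller 1B model and the larger 3B model at this level.
    gap = AVG_THINKING_TOKENS["1B"][d] - AVG_THINKING_TOKENS["3B"][d]
    print(d, ranking_at(d), f"1B - 3B gap: {gap:.1f}")
# Every line shows ['8B', '1B', '3B']; the gaps are 1.3, 1.2, 1.2, 1.2, 1.3, 0.8.
```

The ordering holds at all six levels. The 1B-over-3B gap is narrowest at difficulty 5, which is where the 1B series dips.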

### Interpretation

This chart provides insight into the "thinking" behavior of language models of different scales. The data suggests several key points:

* **Processing Effort Scales with Problem Complexity:** The general upward trend for all models indicates that more difficult questions elicit longer internal reasoning chains (more `<thinking>` tokens). This aligns with the expectation that harder problems require more computation.

* **Model Size Does Not Dictate Token Efficiency:** The most striking finding is that the mid-sized 3B model is the most "token-efficient." At every difficulty level it uses substantially fewer thinking tokens than both the smaller 1B model (about 0.8 to 1.3 fewer) and the larger 8B model (about 2.3 to 2.9 fewer). This could reflect differences in training, architecture, or internal reasoning strategy. The 8B model, while using the most tokens, may be engaging in more exhaustive or verbose reasoning.

* **Potential Saturation or Strategy Shift:** The dip in the 1B model's tokens at the highest difficulty (5) could indicate a few possibilities: the model may have hit a limit in its reasoning capacity for very hard problems, it might be employing a different, more direct strategy that requires fewer tokens, or the sample of questions at this difficulty may have properties that lead to shorter outputs.

* **Practical Implications:** For applications where computational cost is a concern, the 3B model appears most efficient in terms of thinking tokens generated, although per-token inference cost also grows with parameter count. This efficiency must be balanced against accuracy, which is not shown here. The 8B model's high token usage suggests it may be capable of deeper reasoning, but at a higher operational cost.
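
The token-efficiency comparison in this section can be summarized with a single figure per model. The sketch below averages each series across the six difficulty levels and then expresses the 3B model's average as a percentage reduction relative to each of the other two models. This calculation weights every difficulty level equally, which is an assumption, because the chart does not report how many questions fall in each level. It also measures thinking tokens only, not accuracy or per-token cost. The names `AVG_THINKING_TOKENS` and `overall` are illustrative.

```python
from statistics import mean

# Approximate bar heights transcribed from the table above (visual estimates).
AVG_THINKING_TOKENS = {
    "1B": [5.2, 5.6, 5.7, 5.8, 6.0, 5.7],
    "3B": [3.9, 4.4, 4.5, 4.6, 4.7, 4.9],
    "8B": [6.2, 6.9, 7.2, 7.4, 7.4, 7.8],
}

# Unweighted mean across the six difficulty levels. This assumes each level
# counts equally; the chart does not say how many questions each level has.
overall = {model: mean(values) for model, values in AVG_THINKING_TOKENS.items()}

for other in ("1B", "8B"):
    reduction = 1 - overall["3B"] / overall[other]
    print(f"3B vs {other}: {reduction:.0%} fewer thinking tokens")
# 3B vs 1B: 21% fewer thinking tokens
# 3B vs 8B: 37% fewer thinking tokens
```

On these estimates, the 3B model averages 4.5 thinking tokens per question, compared with about 5.7 for the 1B model and about 7.15 for the 8B model.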

In summary, the chart reveals that thinking token usage is influenced by both model scale and task difficulty in non-trivial ways, with the 3B model demonstrating a uniquely efficient processing pattern across the tested difficulty spectrum.