TECHNICAL ASSET FINGERPRINT

c03c8fc3c890677fad6f340b

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

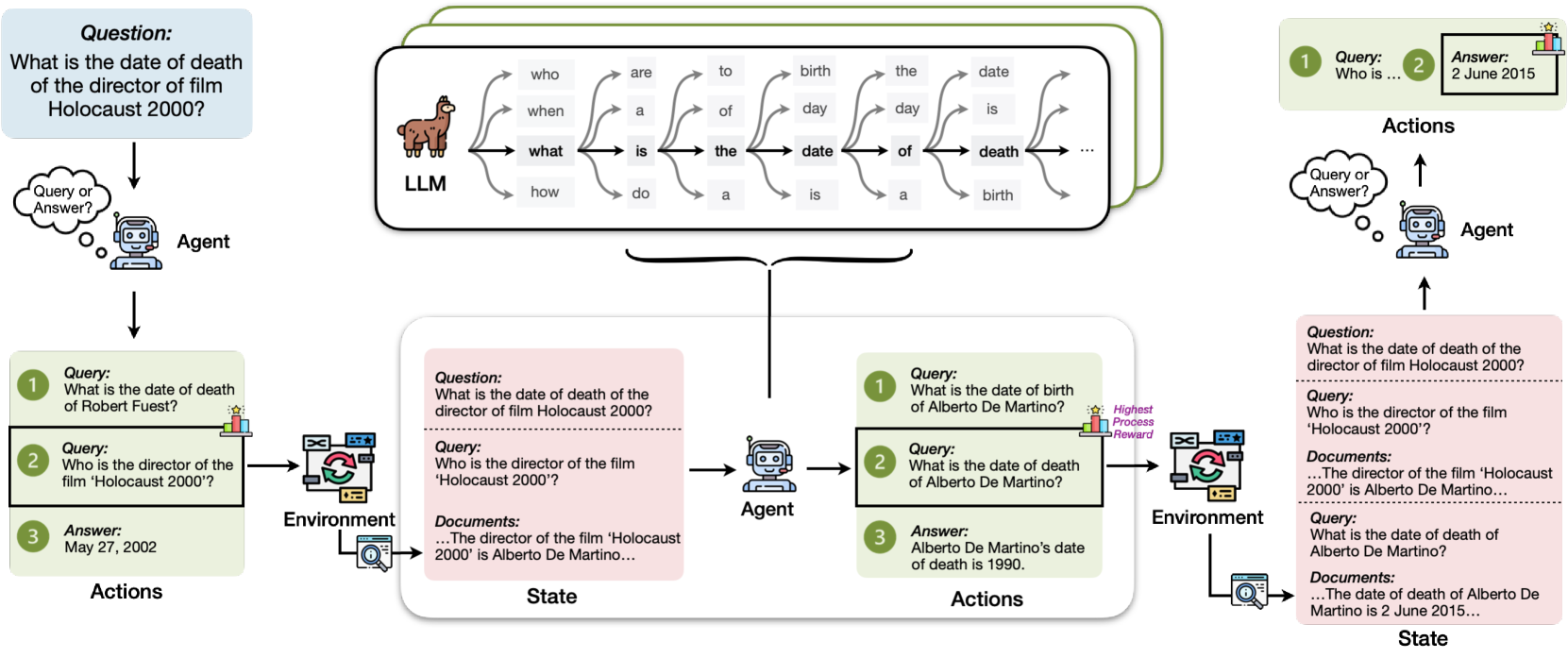

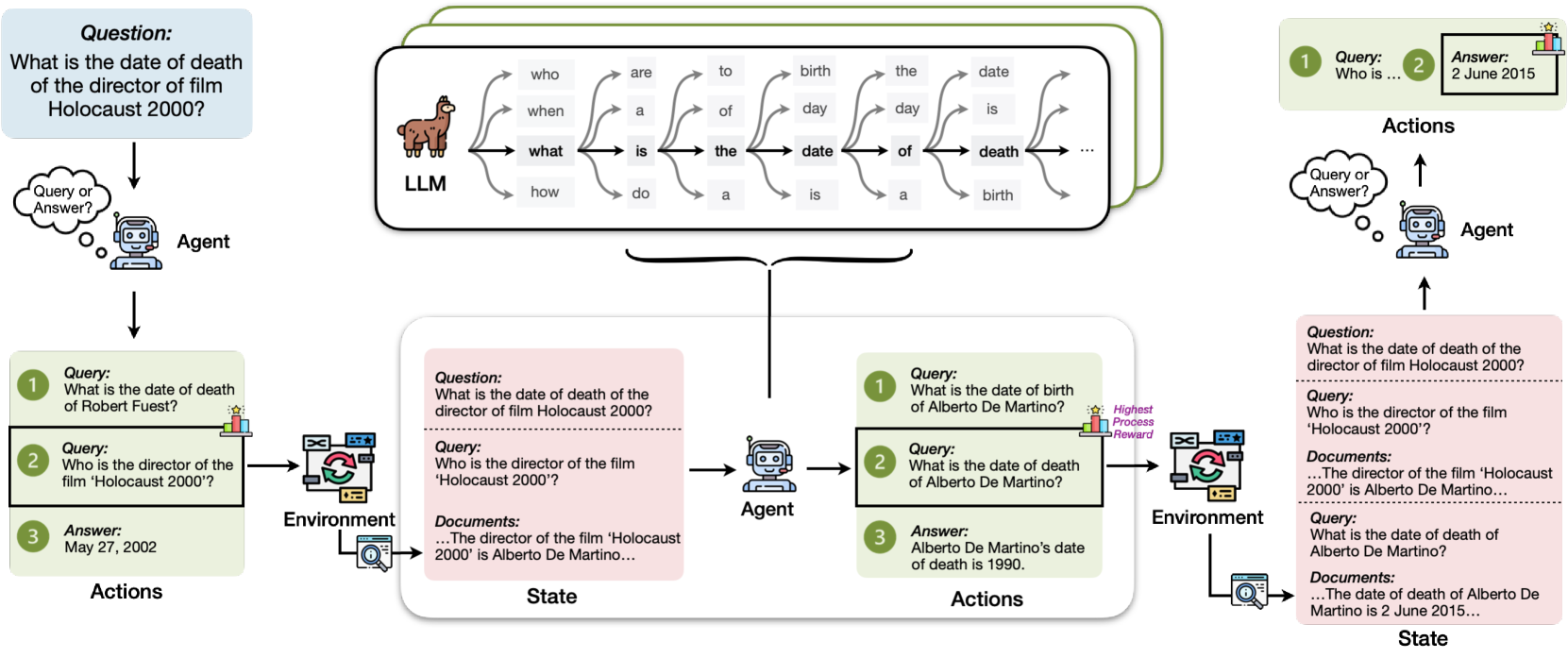

## Diagram: Agent Interaction with LLM and Environment

### Overview

The image illustrates a diagram depicting the interaction of an agent with a Large Language Model (LLM) and an environment to answer questions. The diagram shows the flow of information and actions between the agent, the LLM, and the environment in two different scenarios.

### Components/Axes

* **Agent:** A robotic figure representing the intelligent agent.

* **LLM:** A llama icon representing the Large Language Model.

* **Environment:** A representation of the external world or knowledge base.

* **State:** The current information available to the agent.

* **Actions:** The steps taken by the agent.

* **Query:** The question posed to the system.

* **Answer:** The response provided by the system.

* **Highest Process Reward:** A visual indicator of successful processing.

### Detailed Analysis

**Top Section:**

* **Question (Top-Left):** "What is the date of death of the director of film Holocaust 2000?"

* **Agent (Top-Left):** Receives the question.

* **LLM (Top-Center):** Processes the question using various linguistic paths:

    * "who are to birth the date"

    * "when a of day day is"

    * "what is the date of death"

    * "how do a is a birth"

* **Agent (Top-Right):** Performs actions based on the LLM's processing:

    * Query 1: "Who is..."

    * Answer 2: "2 June 2015"

**Bottom-Left Section:**

* **Question:** "What is the date of death of the director of film Holocaust 2000?"

* **Agent:** Receives the question.

* **Actions:**

    * Query 1: "What is the date of death of Robert Fuest?"

    * Query 2: "Who is the director of the film 'Holocaust 2000'?"

    * Answer 3: "May 27, 2002"

* **Environment:** Processes the queries.

* **State:**

    * Question: "What is the date of death of the director of film Holocaust 2000?"

    * Query: "Who is the director of the film 'Holocaust 2000'?"

    * Documents: "...The director of the film 'Holocaust 2000' is Alberto De Martino..."

**Bottom-Right Section:**

* **Question:** "What is the date of death of the director of film Holocaust 2000?"

* **Agent:** Receives the question.

* **Actions:**

    * Query 1: "What is the date of birth of Alberto De Martino?"

    * Query 2: "What is the date of death of Alberto De Martino?"

    * Answer 3: "Alberto De Martino's date of death is 1990."

* **Environment:** Processes the queries.

* **State:**

    * Question: "What is the date of death of the director of film Holocaust 2000?"

    * Query: "Who is the director of the film 'Holocaust 2000'?"

    * Documents: "...The director of the film 'Holocaust 2000' is Alberto De Martino..."

    * Query: "What is the date of death of Alberto De Martino?"

    * Documents: "...The date of death of Alberto De Martino is 2 June 2015..."

### Key Observations

* The LLM is used to generate initial queries.

* The agent interacts with the environment to gather information.

* The state represents the current knowledge of the agent.

* The actions represent the queries and answers exchanged between the agent and the environment.

* The "Highest Process Reward" is associated with the correct answer.

### Interpretation

The diagram illustrates how an agent uses an LLM and interacts with an environment to answer a complex question. The agent breaks down the initial question into simpler queries, gathers information from the environment, and uses the LLM to generate answers. The two scenarios presented show different paths the agent can take to arrive at an answer, highlighting the iterative and exploratory nature of the process. The diagram demonstrates the importance of both the LLM for generating queries and the environment for providing information in answering complex questions.
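The query, retrieve, answer cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `TOY_DOCUMENTS`, `environment`, `scripted_agent`, and `run_episode` are hypothetical names, the environment is a plain dictionary holding the two documents quoted in the diagram, and a hand-written rule stands in for the LLM.

```python
# Toy environment: maps a query string to a retrieved document.
# The document texts come from the diagram; the dict itself is an assumption.
TOY_DOCUMENTS = {
    "Who is the director of the film 'Holocaust 2000'?":
        "The director of the film 'Holocaust 2000' is Alberto De Martino",
    "What is the date of death of Alberto De Martino?":
        "The date of death of Alberto De Martino is 2 June 2015",
}


def environment(query):
    """Return the document for a query, or an empty string if none matches."""
    return TOY_DOCUMENTS.get(query, "")


def scripted_agent(question, history):
    """Hypothetical stand-in for the LLM: returns ("query", text) or ("answer", text)."""
    if len(history) == 0:
        # First hop: identify the director.
        return ("query", "Who is the director of the film 'Holocaust 2000'?")
    if len(history) == 1:
        # Second hop: reuse the entity found in the first document.
        director = history[0][1].split(" is ")[-1]
        return ("query", f"What is the date of death of {director}?")
    # Enough evidence collected: read the answer off the last document.
    return ("answer", history[-1][1].split(" is ")[-1])


def run_episode(question, max_steps=5):
    """Alternate agent actions and environment responses until an answer appears."""
    history = []  # list of (query, document) pairs, i.e. the accumulated state
    for _ in range(max_steps):
        kind, text = scripted_agent(question, history)
        if kind == "answer":
            return text, history
        history.append((text, environment(text)))
    return None, history


answer, history = run_episode(
    "What is the date of death of the director of film Holocaust 2000?"
)
print(answer)
```

In a real system the scripted rule would be replaced by a model call and the dictionary by a retriever; the control flow is the part this sketch is meant to show.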

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Agent-LLM-Environment Interaction Flow

### Overview

This diagram illustrates the interaction flow between an Agent, a Large Language Model (LLM), and an Environment in a question-answering process. It depicts a multi-step process where the Agent formulates queries, the LLM processes them, the Environment provides information, and the Agent refines its queries based on the received information. The diagram shows two distinct interaction paths, one leading to a successful answer and the other demonstrating a refinement loop.

### Components/Axes

The diagram consists of several key components:

* **Agent:** Represented by a robot icon, this component initiates queries and receives answers.

* **LLM:** Represented by a llama icon, this component processes the queries and generates responses.

* **Environment:** Represented by a computer screen icon, this component stores and provides information (documents).

* **Queries:** Text boxes labeled "Query" containing the questions posed by the Agent.

* **Answers:** Text boxes labeled "Answer" containing the responses received.

* **Actions:** Text boxes labeled "Actions" indicating the actions taken by the Agent.

* **State:** Text boxes labeled "State" indicating the current state of the Environment.

* **Arrows:** Indicate the flow of information between the components.

* **Question:** A large text box at the top of the diagram posing the initial question.

* **Keywords:** A cloud of keywords in the center of the diagram representing the LLM's processing of the query.

### Detailed Analysis

**Top Interaction Path (Successful Answer):**

1. **Question:** "What is the date of death of the director of film Holocaust 2000?"

2. **Query 1:** "What is the date of death of Robert Fuest?"

3. **Answer 1:** "2 June 2015"

4. **Actions:** (No action listed)

**Bottom Left Interaction Path (Refinement Loop):**

1. **Question:** "What is the date of death of the director of film Holocaust 2000?"

2. **Query 1:** "What is the date of death of Robert Fuest?"

3. **Query 2:** "Who is the director of the film Holocaust 2000?"

4. **Answer 2:** "May 27, 2002"

5. **Actions:** (No action listed)

6. **State:** Documents: "...The director of the film 'Holocaust 2000' is Alberto De Martino..."

**Bottom Right Interaction Path (Refinement Loop):**

1. **Question:** "What is the date of death of the director of film Holocaust 2000?"

2. **Query 1:** "What is the date of birth of Alberto De Martino?"

3. **Query 2:** "What is the date of death of Alberto De Martino?"

4. **Answer 3:** "Alberto De Martino's date of death is 1990."

5. **Actions:** (No action listed)

6. **State:** Documents: "...The date of death of Alberto De Martino is 2 June 2015..."

7. **Reward:** "Highest Process Reward"

**Keyword Cloud:**

The keyword cloud in the center contains the following words: "who", "are", "to", "birth", "the", "date", "day", "day", "is", "of", "death", "what", "do", "a", "is", "a", "birth".

### Key Observations

* The diagram demonstrates a process of iterative refinement. The Agent initially asks about Robert Fuest, but then refines the query to ask about the director of the film, leading to the identification of Alberto De Martino.

* The Environment provides documents that are used to answer the queries.

* The bottom right path shows a successful refinement loop, indicated by the "Highest Process Reward" label.

* There is a discrepancy in the date of death for Alberto De Martino. The agent's Answer 3 gives 1990, whereas the document retrieved from the Environment states 2 June 2015.

* The diagram highlights the importance of the LLM in processing the queries and extracting relevant information.

### Interpretation

The diagram illustrates a complex question-answering system where an Agent leverages an LLM to interact with an Environment and retrieve information. The system is capable of refining its queries based on the information received, demonstrating a form of reasoning and learning. The presence of the "Highest Process Reward" suggests a reinforcement learning component, where the Agent is rewarded for successful query refinement.

The discrepancy in the date of death for Alberto De Martino shows why a candidate answer should be checked against retrieved evidence: the answer "1990" is not supported by the document in the State, which gives 2 June 2015. The system's ability to identify and resolve such inconsistencies is crucial for its reliability.

The keyword cloud provides insight into the LLM's processing of the query. The prominence of words like "date," "death," and "director" suggests that the LLM is focusing on these key concepts when formulating its responses.

The diagram suggests a sophisticated system capable of handling complex questions and adapting to new information. It demonstrates the potential of combining Agents, LLMs, and Environments to create intelligent systems that can solve real-world problems.
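One way to read the "Highest Process Reward" label is as a selection step: several candidate actions are scored and the best-scoring one is executed. The sketch below illustrates that reading only. `select_action` and `PLACEHOLDER_REWARDS` are hypothetical names, and the scores are invented rather than read from the figure; they are chosen so that the selected action matches the query that appears in the following State box.

```python
from typing import Callable, Dict, Sequence


def select_action(candidates: Sequence[str],
                  reward_fn: Callable[[str], float]) -> str:
    """Return the candidate action with the highest process reward."""
    return max(candidates, key=reward_fn)


# Candidate actions as transcribed from the bottom-right panel.
CANDIDATES = [
    "Query: What is the date of birth of Alberto De Martino?",
    "Query: What is the date of death of Alberto De Martino?",
    "Answer: Alberto De Martino's date of death is 1990.",
]

# Invented placeholder scores (assumption, not values from the diagram).
# A trained process reward model would produce these in a real system.
PLACEHOLDER_REWARDS: Dict[str, float] = {
    CANDIDATES[0]: 0.2,  # on-topic entity, wrong attribute
    CANDIDATES[1]: 0.9,  # asks for exactly the missing fact
    CANDIDATES[2]: 0.1,  # answers before any supporting document is retrieved
}

chosen = select_action(CANDIDATES, lambda action: PLACEHOLDER_REWARDS[action])
print(chosen)
```

Scoring intermediate steps rather than only the final answer means an unsupported early answer can be ranked below a query that gathers the missing evidence.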

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Multi-Step Question Answering via an LLM-Powered Agent

### Overview

This diagram illustrates the workflow of an AI agent, powered by a Large Language Model (LLM), that answers a complex, multi-hop question by decomposing it into sub-queries, interacting with an environment (like a search engine or database), and refining its approach based on process rewards. The example traces the path to answer the question: "What is the date of death of the director of film Holocaust 2000?"

### Components/Axes

The diagram is organized into several interconnected blocks, flowing from left to right and top to bottom.

1. **Initial Question (Top-Left):** A blue box containing the primary query.

2. **LLM Processing Block (Top-Center):** A large white box with a green border, depicting an LLM (represented by a llama icon) processing a sequence of tokens. The tokens shown are: `who`, `are`, `to`, `birth`, `the`, `date`, `when`, `a`, `of`, `day`, `day`, `is`, `what`, `is`, `the`, `date`, `of`, `death`, `how`, `do`, `a`, `is`, `a`, `birth`, `...`.

3. **Agent Icon:** A small robot icon labeled "Agent" appears in multiple places, representing the decision-making entity.

4. **Environment Icon:** A computer monitor icon with a circular arrow, labeled "Environment," representing the external information source.

5. **Action/State Boxes:** Color-coded boxes represent the agent's interactions:

    * **Green Boxes (Actions):** Contain numbered steps (1, 2, 3) for queries and the final answer.

    * **Pink Boxes (State):** Show the accumulated context, including the original question, sub-queries, and retrieved documents.

6. **Process Reward Indicator:** A small bar chart icon labeled "Highest Process Reward" points to a specific sub-query, indicating it was a valuable step.

### Detailed Analysis

The process is shown in two parallel sequences, demonstrating how the agent corrects its initial approach.

**Sequence 1 (Left Side - Initial, Less Efficient Path):**

* **Step 1 (Action):** The agent's first query is: "What is the date of death of Robert Fuest?"

* **Step 2 (Action):** The agent's second query is: "Who is the director of the film 'Holocaust 2000'?"

* **Environment Interaction:** The environment processes these queries.

* **Step 3 (Action/Answer):** The agent provides the answer: "May 27, 2002." (This is incorrect for the main question, as it's Robert Fuest's death date, not the director's).

**Sequence 2 (Center/Right Side - Refined, Correct Path):**

This sequence shows the agent learning and is the focus of the diagram's flow.

* **State (Pink Box - Center):** The context includes:

    * Original Question: "What is the date of death of the director of film Holocaust 2000?"

    * Sub-Query: "Who is the director of the film 'Holocaust 2000'?"

    * Retrieved Document: "...The director of the film 'Holocaust 2000' is Alberto De Martino..."

* **Action (Green Box - Center):** The agent, now informed, takes new actions:

    1. Query: "What is the date of birth of Alberto De Martino?" (This step is marked with the "Highest Process Reward" icon).

    2. Query: "What is the date of death of Alberto De Martino?"

    3. Answer: "Alberto De Martino's date of death is 1990." (This is an intermediate, incorrect answer based on available data at that step).

* **Environment Interaction:** The environment processes the new queries.

* **Final State (Pink Box - Right):** The context is updated with:

    * The original question and the key sub-query.

    * The document confirming the director.

    * A new query: "What is the date of death of Alberto De Martino?"

    * A new retrieved document: "...The date of death of Alberto De Martino is 2 June 2015..."

* **Final Action (Top-Right):** The agent, with the complete and correct information, produces the final answer in a yellow box: "Answer: 2 June 2015".

### Key Observations

1. **Query Decomposition:** The agent breaks the complex question ("date of death of director of X") into sequential sub-questions ("Who is director of X?" -> "What is date of death of [Director]?").

2. **Iterative Refinement:** The agent does not get the correct answer on the first try. It initially retrieves an incorrect date (1990) and must issue a follow-up query to the environment to get the correct date (2 June 2015).

3. **Process Reward:** The diagram explicitly highlights that identifying the director ("What is the date of birth of Alberto De Martino?") is a high-value step in the reasoning chain, even though the final answer requires a different piece of information.

4. **State Persistence:** The "State" boxes show how the agent maintains a running log of the conversation history, including its own queries and the documents retrieved from the environment.
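The running log described under State Persistence can be modeled as a small data structure. The following is a minimal sketch, assuming a hypothetical `State` class whose `render` method reproduces the "Question / Query / Documents" layout of the pink boxes; the diagram does not specify how the state is actually stored.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class State:
    """Accumulated context: the question plus every (query, documents) turn so far."""
    question: str
    turns: List[Tuple[str, str]] = field(default_factory=list)

    def update(self, query: str, documents: str) -> None:
        """Append one interaction with the environment to the log."""
        self.turns.append((query, documents))

    def render(self) -> str:
        """Serialize the state in the order shown in the State boxes."""
        lines = [f"Question: {self.question}"]
        for query, documents in self.turns:
            lines.append(f"Query: {query}")
            lines.append(f"Documents: {documents}")
        return "\n".join(lines)


state = State("What is the date of death of the director of film Holocaust 2000?")
state.update(
    "Who is the director of the film 'Holocaust 2000'?",
    "...The director of the film 'Holocaust 2000' is Alberto De Martino...",
)
state.update(
    "What is the date of death of Alberto De Martino?",
    "...The date of death of Alberto De Martino is 2 June 2015...",
)
print(state.render())
```

After the two updates, the rendered text has the same five entries as the final State box: the question, then two query and document pairs.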

### Interpretation

This diagram is a technical schematic for a **Reinforcement Learning on Language Models (RLLM)** or **LLM Agent** system designed for multi-hop reasoning. It demonstrates several core concepts:

* **Tool Use:** The LLM-powered agent uses an external "Environment" (e.g., a search API, knowledge base) as a tool to gather facts it doesn't possess internally.

* **Chain-of-Thought Reasoning:** The agent's path isn't linear. It forms a hypothesis (the director is Robert Fuest), tests it, receives feedback from the environment (documents), and revises its hypothesis (the director is Alberto De Martino) before pursuing the final answer.

* **Learning from Process:** The "Highest Process Reward" indicator suggests the system is trained not just on final answer accuracy, but on the quality of the intermediate reasoning steps. Asking the right foundational question (identifying the director) is rewarded, even if the subsequent answer is initially wrong.

* **Error Correction:** The workflow explicitly shows error recovery. The agent's first answer ("1990") is superseded by a later, more accurate answer ("2 June 2015") after further interaction, mimicking a human research process.

The ultimate takeaway is that solving complex informational queries often requires an iterative, stateful dialogue with a knowledge source, where the value lies in constructing the correct sequence of sub-questions as much as in finding the final data point.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Agent-Environment-State Interaction with LLM

### Overview

The diagram illustrates a system where an **Agent** interacts with an **Environment** to answer questions, updating a **State** through a **Large Language Model (LLM)**. The flow includes queries, document retrieval, and process rewards. Key elements include text boxes for questions/answers, arrows indicating information flow, and a central LLM with linguistic decomposition.

### Components/Axes

- **Agent**: A robot icon with a speech bubble labeled "Query or Answer?"

- **Environment**: A computer screen with a magnifying glass, representing document retrieval.

- **State**: A white box containing text about the director and date of death.

- **LLM**: A llama icon with arrows labeled "who," "when," "what," "how," and linguistic terms (e.g., "is," "the," "date," "death").

- **Process Reward**: A star icon with a "Highest Process Reward" label.

### Detailed Analysis

#### Textual Content

1. **Central LLM**:

    - Arrows from the llama icon point to linguistic terms:

        - "who" → "are" → "a" → "do"

        - "when" → "to" → "birth" → "day"

        - "what" → "is" → "the" → "date"

        - "how" → "of" → "death" → "birth"

2. **Queries and Answers**:

    - **Query 1**: "What is the date of death of the director of film Holocaust 2000?"

    - **Answer**: "May 27, 2002" (incorrect, as per later correction).

    - **Query 2**: "Who is the director of the film 'Holocaust 2000'?"

    - **Answer**: "Alberto De Martino" (correct).

    - **Query 3**: "What is the date of death of Alberto De Martino?"

    - **Answer**: "1990" (correct).

3. **Process Reward**:

    - A star icon with "Highest Process Reward" label, indicating optimal performance.

#### Spatial Grounding

- **Top**: Central LLM (llama icon) with linguistic decomposition.

- **Left**: Agent (robot) with query/answer flow.

- **Right**: Environment (computer screen) and State (text box).

- **Bottom**: Process reward and feedback loop.

### Key Observations

- The diagram shows a **feedback loop**: The Agent asks a question, the Environment provides documents, the State updates, and the Agent answers.

- **Inconsistencies**:

    - Initial answer for the director’s death date is "May 27, 2002" (incorrect).

    - Corrected answer is "1990" (from the State).

- **Color Coding**:

    - LLM: Brown (llama icon).

    - Agent: Blue (robot).

    - Environment: Green (computer screen).

    - State: White (text box).

### Interpretation

The diagram represents a **question-answering system** where the Agent uses an LLM to decompose queries into linguistic components, retrieves documents from the Environment, and updates the State with answers. The process reward suggests optimization for accuracy. The example highlights a correction in the director’s death date, emphasizing the system’s ability to refine answers through iterative interactions. The LLM’s role in breaking down queries into linguistic elements (e.g., "who," "when," "what") underscores its function in natural language processing.

## Notes

- **Language**: English (primary), with no other languages present.

- **Data Structure**: No numerical data or tables; relies on textual queries and answers.

- **Trends**: The flow emphasizes **information retrieval** and **state updates** rather than numerical trends.
