## Diagram: LLM Agent System Architecture

### Overview

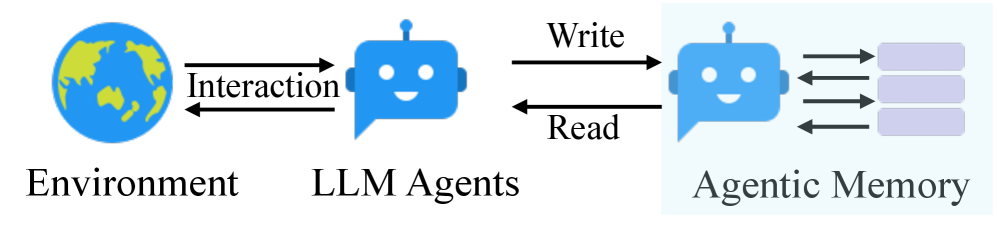

The image is a system architecture diagram illustrating the interaction flow between an environment, Large Language Model (LLM) agents, and a specialized memory system called "Agentic Memory." It depicts a closed-loop process where agents perceive, act, and learn.

### Components

The diagram consists of three primary components arranged horizontally from left to right, connected by labeled arrows indicating data or control flow.

1. **Environment** (Leftmost component):
   * **Icon:** A stylized blue and green globe, representing the external world or operational context.
   * **Label:** "Environment" (text below the icon).

2. **LLM Agents** (Central component):
   * **Icon:** A blue robot head with a smiling face and an antenna, symbolizing the AI agent.
   * **Label:** "LLM Agents" (text below the icon).

3. **Agentic Memory** (Rightmost component):
   * **Icon:** A blue robot head (identical to the LLM Agents icon) positioned to the left of three stacked, light purple rectangular blocks, representing memory storage units.
   * **Label:** "Agentic Memory" (text below the icon cluster).
   * **Spatial Placement:** This entire component is enclosed within a faint, light blue rectangular background, visually grouping the agent icon and memory blocks as a single subsystem.

### Detailed Analysis

The flow of information and actions is defined by the arrows connecting the components:

* **Interaction Flow (Environment ↔ LLM Agents):**
  * A double-headed arrow connects the Environment and LLM Agents.
  * The arrow is labeled with the word **"Interaction"** placed centrally above it.
  * This indicates a bidirectional relationship: the agent perceives the environment and acts upon it.

* **Memory Access Flow (LLM Agents ↔ Agentic Memory):**
  * Two separate, single-headed arrows connect the LLM Agents to the Agentic Memory subsystem.
  * The top arrow points from the LLM Agents to the Agentic Memory and is labeled **"Write"**.
  * The bottom arrow points from the Agentic Memory back to the LLM Agents and is labeled **"Read"**.
  * This signifies that agents can store information (write) into and retrieve information (read) from the dedicated memory system.
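The two flows can be read as a single control loop. The following is a minimal Python sketch under stated assumptions, not the system in the diagram: `ToyEnvironment`, `AgenticMemory`, `LLMAgent`, and `run_episode` are hypothetical names, the environment is a toy counter, and a fixed rule stands in for the LLM call. Each iteration perceives the environment, reads from memory, writes a record back, and acts.

```python
from dataclasses import dataclass, field


@dataclass
class ToyEnvironment:
    """Hypothetical environment: a counter the agent tries to raise to a target."""
    state: int = 0
    target: int = 3

    def observe(self) -> int:
        return self.state

    def step(self, action: int) -> int:
        # Acting changes the environment's state (the "Interaction" arrow).
        self.state += action
        return self.state


@dataclass
class AgenticMemory:
    """Hypothetical external memory, reachable only through read/write."""
    records: list = field(default_factory=list)

    def write(self, record: dict) -> None:
        self.records.append(record)

    def read(self, last_n: int = 3) -> list:
        return self.records[-last_n:]


class LLMAgent:
    """Stand-in for an LLM agent; a fixed rule replaces the model call."""

    def __init__(self, memory: AgenticMemory) -> None:
        self.memory = memory

    def act(self, observation: int, target: int) -> int:
        context = self.memory.read()  # "Read" arrow: memory -> agent
        # Placeholder policy: step toward the target. A real system would
        # put the observation and retrieved context into an LLM prompt.
        action = 1 if observation < target else 0
        # "Write" arrow: agent -> memory
        self.memory.write({"obs": observation, "action": action, "context_size": len(context)})
        return action


def run_episode(env: ToyEnvironment, agent: LLMAgent, max_steps: int = 10) -> int:
    for _ in range(max_steps):
        obs = env.observe()  # perceive
        if obs >= env.target:
            break
        env.step(agent.act(obs, env.target))  # act
    return env.state
```

With the default target of 3, `run_episode` stops after three actions and leaves three records in memory. Keeping memory behind `read`/`write` rather than inside the agent mirrors the separate arrows in the diagram.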

### Key Observations

* **Bidirectional Core Loop:** The primary interaction between the agent and its environment is explicitly bidirectional, forming a perception-action cycle.

* **Dedicated Memory System:** Memory is not an internal component of the LLM Agent but a separate, specialized subsystem ("Agentic Memory") that the agent interfaces with via clear read/write operations.

* **Visual Grouping:** The Agentic Memory component is visually distinct due to its background highlight, emphasizing its role as a cohesive unit separate from the core agent.

* **Repeated Icon:** Reusing the same robot head icon for both "LLM Agents" and "Agentic Memory" suggests a strong conceptual link, possibly indicating that the memory is agent-centric or structured for agent use.
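The "Dedicated Memory System" observation can be illustrated with a minimal Python sketch, assuming memory is exposed only through `write` and `read`. `MemoryStore`, `KeywordMemory`, `RecencyMemory`, and `recall` are hypothetical names; the two backends use deliberately simple retrieval rules (shared-word matching and most recent entries) as stand-ins for whatever indexing a real system would use.

```python
from typing import Protocol


class MemoryStore(Protocol):
    """Hypothetical interface: only the two operations the diagram shows."""

    def write(self, key: str, value: str) -> None: ...
    def read(self, query: str) -> list[str]: ...


class KeywordMemory:
    """One backend: returns values whose key shares a word with the query."""

    def __init__(self) -> None:
        self._items: dict[str, str] = {}

    def write(self, key: str, value: str) -> None:
        self._items[key] = value

    def read(self, query: str) -> list[str]:
        words = set(query.lower().split())
        return [v for k, v in self._items.items() if words & set(k.lower().split())]


class RecencyMemory:
    """Another backend: ignores the query and returns the latest values."""

    def __init__(self, limit: int = 2) -> None:
        self._values: list[str] = []
        self._limit = limit

    def write(self, key: str, value: str) -> None:
        self._values.append(value)

    def read(self, query: str) -> list[str]:
        return self._values[-self._limit:]


def recall(memory: MemoryStore, query: str) -> list[str]:
    # Agent-side code depends only on the interface, not on the backend.
    return memory.read(query)
```

Because `recall` depends only on the interface, either backend can be swapped in without changing agent code, which is the kind of modularity a separate memory subsystem allows.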

### Interpretation

This diagram presents a conceptual model for an advanced AI agent system that separates core reasoning (LLM Agents) from persistent, structured memory (Agentic Memory). The architecture suggests several key principles:

1. **Situated Agency:** The agent is not a disembodied model but is situated within an "Environment," emphasizing the importance of real-world or simulated context for its operations.

2. **Memory as a Service:** By externalizing memory into a dedicated subsystem with explicit read/write channels, the design promotes modularity, scalability, and potentially more sophisticated memory management (e.g., different memory types, indexing, or retrieval strategies) than what might be natively available within an LLM's context window.

3. **Learning and Adaptation:** The "Write" pathway is crucial for learning. It implies the agent can record experiences, facts, or learned strategies into its agentic memory for future use, enabling long-term adaptation and improvement beyond a single interaction session.

4. **Investigative Lens (Peircean):** The diagram is an *iconic* and *indexical* representation. It *iconically* resembles the system's structure (globe=world, robot=agent). It is *indexical* because the arrows point directly to the causal relationships (interaction causes state changes, read/write operations cause data transfer). The *symbolic* labels ("LLM Agents," "Agentic Memory") ground these relationships in specific technical concepts. The model argues that effective agentic AI requires a clear separation between perception/action, reasoning, and memory storage, with well-defined interfaces between them.
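The "Learning and Adaptation" point depends on memory outliving a single session. Below is a minimal Python sketch, assuming a JSON file as the storage backend; `PersistentMemory` and `two_sessions` are hypothetical names, and a real agentic memory would likely add indexing and retrieval on top. The second object in `two_sessions` is a fresh instance, so anything it reads must have been reloaded from disk.

```python
import json
import tempfile
from pathlib import Path


class PersistentMemory:
    """Hypothetical file-backed memory: records written in one session are
    available to a new instance in a later session."""

    def __init__(self, path: Path) -> None:
        self.path = Path(path)
        # Reload whatever earlier sessions wrote; start empty otherwise.
        self.records: list[str] = json.loads(self.path.read_text()) if self.path.exists() else []

    def write(self, lesson: str) -> None:
        self.records.append(lesson)
        # Persist immediately so the lesson outlives this process.
        self.path.write_text(json.dumps(self.records))

    def read(self) -> list[str]:
        return list(self.records)


def two_sessions() -> list[str]:
    """Session 1 writes a lesson; session 2, a fresh object, reads it back."""
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "agentic_memory.json"
        PersistentMemory(path).write("retry a failed tool call once")  # session 1
        return PersistentMemory(path).read()  # session 2
```

`two_sessions()` returns `['retry a failed tool call once']`: the lesson written by the first instance is reloaded by the second, which is the cross-session persistence the "Write" pathway implies.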