## Scatter Plot: Model Performance Comparison (Retrieval vs. Ranking)

### Overview

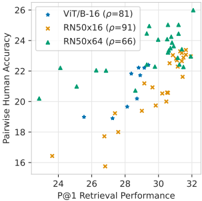

The image is a scatter plot comparing the performance of three different model variants on two distinct metrics: **P@1 Retrieval Performance** (x-axis) and **Pairwise Ranking Accuracy** (y-axis). Each data point represents a specific model configuration, with the model type indicated by marker shape and color. The plot includes a legend in the top-left corner and gridlines for reference.

### Components/Axes

* **Chart Type:** Scatter Plot

* **X-Axis:**
    * **Label:** `P@1 Retrieval Performance`
    * **Scale:** Linear, ranging from approximately 23 to 32.
    * **Major Ticks:** 24, 26, 28, 30, 32.

* **Y-Axis:**
    * **Label:** `Pairwise Ranking Accuracy`
    * **Scale:** Linear, ranging from approximately 16 to 26.
    * **Major Ticks:** 16, 18, 20, 22, 24, 26.

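* **Legend (Top-Left Corner):**
    * **Blue Triangle (▲):** `ViT-B/16 (p=81)`
    * **Orange Cross (×):** `RN50x16 (p=91)`
    * **Green Circle (●):** `RN50x64 (p=66)`
    * *Note: The legend does not define `p=`. RN50x64 is the larger of the two ResNet variants, going by the multiplier in its name, yet it carries a lower value than RN50x16. That makes a parameter count unlikely. The value may be a configuration identifier or another per-series number.*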

* **Grid:** Light gray gridlines are present for both axes.

### Detailed Analysis

The plot contains approximately 30-40 data points. Below is an approximate extraction of the data points, grouped by model series. Values are estimated based on visual position relative to the axes.
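
On a linear axis, estimating a value from a marker's position amounts to interpolating between two labelled ticks. The sketch below illustrates that mapping. The helper name `pixel_to_value` and all pixel coordinates are hypothetical and were not measured from `image-1.png`. The sketch also assumes image y-coordinates grow downward, as in most raster formats.

```python
def pixel_to_value(pixel: float, pixel_a: float, value_a: float,
                   pixel_b: float, value_b: float) -> float:
    """Map a pixel coordinate to a data value on a linear axis.

    (pixel_a, value_a) and (pixel_b, value_b) are two reference ticks whose
    pixel positions were read off the image. Works for either axis direction,
    including image y-axes where pixel values grow downward.
    """
    return value_a + (pixel - pixel_a) * (value_b - value_a) / (pixel_b - pixel_a)


# Hypothetical x ticks: 24 at pixel 100, 32 at pixel 500.
p_at_1 = pixel_to_value(350, 100, 24, 500, 32)
# Hypothetical y ticks: 16 at pixel 400, 26 at pixel 50
# (26 is higher on screen, so it has the smaller pixel value).
accuracy = pixel_to_value(155, 400, 16, 50, 26)
print(p_at_1, accuracy)  # prints 29.0 23.0
```

Any error in reading the tick or marker positions carries straight into the estimates, which is why the values below are only approximate.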

**1. ViT-B/16 (Blue Triangles ▲)**

* **Trend:** This series shows a moderate positive correlation, with points clustered in the mid-to-high range of both metrics. Accuracy rises up to P@1 ≈ 30.5 and then dips slightly for the highest-P@1 points.

* **Approximate Data Points (P@1, Accuracy):**
    * (27.0, 20.0)
    * (27.5, 21.5)
    * (28.0, 22.0)
    * (28.5, 22.5)
    * (29.0, 23.0)
    * (29.5, 23.5)
    * (30.0, 24.0)
    * (30.5, 24.5)
    * (31.0, 24.0)
    * (31.5, 23.5)
    * (31.8, 23.0)
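
The "moderate positive correlation" reading can be checked against the estimated ViT-B/16 points by computing their Pearson correlation directly. This is a minimal sketch on the approximate values listed above, so its result is only as reliable as those visual estimates. It also locates the point after which accuracy starts to dip.

```python
from math import sqrt

# Approximate ViT-B/16 points (P@1, Pairwise Ranking Accuracy) estimated from the plot.
VIT_B16 = [
    (27.0, 20.0), (27.5, 21.5), (28.0, 22.0), (28.5, 22.5), (29.0, 23.0),
    (29.5, 23.5), (30.0, 24.0), (30.5, 24.5), (31.0, 24.0), (31.5, 23.5),
    (31.8, 23.0),
]


def pearson_r(points):
    """Pearson correlation coefficient between the x and y values of `points`."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    syy = sum((y - mean_y) ** 2 for _, y in points)
    return sxy / sqrt(sxx * syy)


r = pearson_r(VIT_B16)
peak = max(VIT_B16, key=lambda p: p[1])  # point with the highest accuracy
print(f"r = {r:.2f}, peak at {peak}")
```

With these estimates the coefficient comes out near 0.8, and the peak is the point at P@1 = 30.5. The downturn after that point is what keeps the correlation below the near-linear RN50 series.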

**2. RN50x16 (Orange Crosses ×)**

* **Trend:** This series shows a strong positive correlation. It has the widest spread, with points at both the lower and higher ends of the performance spectrum.

* **Approximate Data Points (P@1, Accuracy):**
    * (23.5, 16.5)
    * (25.0, 18.0)
    * (26.5, 19.0)
    * (27.0, 19.5)
    * (28.0, 20.0)
    * (28.5, 20.5)
    * (29.0, 21.0)
    * (29.5, 21.5)
    * (30.0, 22.0)
    * (30.5, 22.5)
    * (31.0, 23.0)
    * (31.5, 23.5)
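
The two claims for RN50x16, a strong correlation and the widest spread, can be quantified from the estimated points. This sketch computes the Pearson correlation and the span of each metric. It works only from the approximate values listed above.

```python
from math import sqrt

# Approximate RN50x16 points (P@1, Pairwise Ranking Accuracy) estimated from the plot.
RN50X16 = [
    (23.5, 16.5), (25.0, 18.0), (26.5, 19.0), (27.0, 19.5), (28.0, 20.0),
    (28.5, 20.5), (29.0, 21.0), (29.5, 21.5), (30.0, 22.0), (30.5, 22.5),
    (31.0, 23.0), (31.5, 23.5),
]


def pearson_r(points):
    """Pearson correlation coefficient between the x and y values of `points`."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    syy = sum((y - mean_y) ** 2 for _, y in points)
    return sxy / sqrt(sxx * syy)


def value_range(values):
    """Difference between the largest and smallest value."""
    return max(values) - min(values)


r = pearson_r(RN50X16)
x_span = value_range([x for x, _ in RN50X16])
y_span = value_range([y for _, y in RN50X16])
print(f"r = {r:.3f}, P@1 span = {x_span}, accuracy span = {y_span}")
```

The RN50x16 points span 8.0 on P@1 and 7.0 on accuracy. For comparison, the estimated RN50x64 points span 7.8 and 5.5, and the ViT-B/16 points span 4.8 and 4.5.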

**3. RN50x64 (Green Circles ●)**

* **Trend:** This series shows the strongest performance. At comparable P@1 values it reaches the highest Pairwise Ranking Accuracy, and its points extend furthest into the top-right of the plot. The correlation is positive, though the overall slope appears slightly shallower than that of RN50x16.

* **Approximate Data Points (P@1, Accuracy):**
    * (24.0, 20.0)
    * (25.0, 21.0)
    * (26.0, 21.5)
    * (27.0, 22.0)
    * (28.0, 22.5)
    * (29.0, 23.0)
    * (29.5, 23.5)
    * (30.0, 24.0)
    * (30.5, 24.5)
    * (31.0, 25.0)
    * (31.5, 25.5)
    * (31.8, 25.0)
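
The slope comparison between RN50x64 and RN50x16 can be made concrete by fitting an ordinary least-squares line to each series. This is a minimal sketch on the approximate points listed above. A single straight-line fit summarizes the whole series and does not capture local changes in slope.

```python
# Approximate points (P@1, Pairwise Ranking Accuracy) estimated from the plot.
RN50X64 = [
    (24.0, 20.0), (25.0, 21.0), (26.0, 21.5), (27.0, 22.0), (28.0, 22.5),
    (29.0, 23.0), (29.5, 23.5), (30.0, 24.0), (30.5, 24.5), (31.0, 25.0),
    (31.5, 25.5), (31.8, 25.0),
]
RN50X16 = [
    (23.5, 16.5), (25.0, 18.0), (26.5, 19.0), (27.0, 19.5), (28.0, 20.0),
    (28.5, 20.5), (29.0, 21.0), (29.5, 21.5), (30.0, 22.0), (30.5, 22.5),
    (31.0, 23.0), (31.5, 23.5),
]


def ols_slope(points):
    """Ordinary least-squares slope of accuracy (y) on P@1 (x)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    return sxy / sxx


slope_64 = ols_slope(RN50X64)
slope_16 = ols_slope(RN50X16)
print(f"RN50x64 slope = {slope_64:.2f}, RN50x16 slope = {slope_16:.2f}")
```

On these estimates the RN50x64 slope is about 0.67, against about 0.85 for RN50x16. This is consistent with RN50x64 rising less steeply overall while starting from a higher accuracy.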

### Key Observations

1. **Positive Correlation:** All three model series exhibit a clear positive correlation between P@1 Retrieval Performance and Pairwise Ranking Accuracy. As one metric improves, the other tends to improve as well.

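2. **Performance Hierarchy:** At matched P@1 values, the series separate in a consistent order:
    * **RN50x64 (Green)** has the highest Pairwise Ranking Accuracy, tied with ViT-B/16 between P@1 ≈ 29 and 30.5, and contains the highest point in the plot.
    * **ViT-B/16 (Blue)** generally sits in the middle, matching RN50x64 in the 29 to 30.5 range.
    * **RN50x16 (Orange)** is lowest at nearly every matched P@1 and spans the widest range, from the lowest point in the plot to mid-high performance.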

3. **Lowest Point:** The RN50x16 point at approximately (23.5, 16.5) is the weakest on both metrics in the plot. It lies close to the RN50x16 trend line, however, so it marks the low end of that series rather than a true outlier.

4. **Clustering at High Performance:** At the high end (P@1 > 30), the three series lie somewhat closer together. RN50x64 keeps a slight edge in Pairwise Ranking Accuracy, most visibly above P@1 ≈ 31.
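
Observations 2 and 3 can be checked against all three estimated series together. The sketch below finds the lowest-accuracy point and measures its distance from the least-squares line of its own series. It then ranks the series by accuracy at each P@1 value they share. It operates only on the approximate values extracted above.

```python
# Approximate points (P@1, Pairwise Ranking Accuracy) estimated from the plot.
SERIES = {
    "ViT-B/16": [
        (27.0, 20.0), (27.5, 21.5), (28.0, 22.0), (28.5, 22.5), (29.0, 23.0),
        (29.5, 23.5), (30.0, 24.0), (30.5, 24.5), (31.0, 24.0), (31.5, 23.5),
        (31.8, 23.0),
    ],
    "RN50x16": [
        (23.5, 16.5), (25.0, 18.0), (26.5, 19.0), (27.0, 19.5), (28.0, 20.0),
        (28.5, 20.5), (29.0, 21.0), (29.5, 21.5), (30.0, 22.0), (30.5, 22.5),
        (31.0, 23.0), (31.5, 23.5),
    ],
    "RN50x64": [
        (24.0, 20.0), (25.0, 21.0), (26.0, 21.5), (27.0, 22.0), (28.0, 22.5),
        (29.0, 23.0), (29.5, 23.5), (30.0, 24.0), (30.5, 24.5), (31.0, 25.0),
        (31.5, 25.5), (31.8, 25.0),
    ],
}


def ols_fit(points):
    """Least-squares slope and intercept of accuracy (y) on P@1 (x)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Observation 3: the point with the lowest accuracy, and its distance from
# the straight line fitted to its own series.
low_name, (x_low, y_low) = min(
    ((name, point) for name, points in SERIES.items() for point in points),
    key=lambda item: item[1][1],
)
slope, intercept = ols_fit(SERIES[low_name])
residual = y_low - (slope * x_low + intercept)
print(f"lowest: {low_name} at ({x_low}, {y_low}), residual {residual:+.2f}")

# Observation 2: order the series by accuracy at each P@1 value all three share.
lookup = {name: dict(points) for name, points in SERIES.items()}
shared = sorted(set.intersection(*(set(acc) for acc in lookup.values())))
for x in shared:
    ranked = sorted(lookup, key=lambda name: lookup[name][x], reverse=True)
    print(x, [(name, lookup[name][x]) for name in ranked])
```

On the estimated points, the lowest point falls within a few hundredths of RN50x16's fitted line. RN50x64 has the highest or tied-highest accuracy at every shared P@1 value.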

### Interpretation

This scatter plot visualizes the relationship between two capabilities of vision-language models: **retrieval** (finding the correct image/text match, measured by P@1) and **ranking** (ordering pairs by relevance, measured by Pairwise Ranking Accuracy).
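
The figure does not say how either metric is computed. The sketch below uses common textbook definitions, which are an assumption and not the evaluation behind this plot. P@1 is the fraction of queries whose top-scored candidate is the correct one. Pairwise accuracy is the fraction of item pairs with different gold relevance that the model orders correctly. The function names and toy inputs are hypothetical. Under this pairwise definition a random ordering scores about 50%, so if the y-axis values are percentages, the 16 to 26 range suggests the underlying evaluation uses a different or stricter variant.

```python
from itertools import combinations


def precision_at_1(score_matrix, correct_index):
    """P@1: fraction of queries whose highest-scoring candidate is the correct one.

    score_matrix[q][c] is the model's score for candidate c given query q;
    correct_index[q] is the index of the correct candidate for query q.
    """
    hits = 0
    for scores, gold in zip(score_matrix, correct_index):
        top = max(range(len(scores)), key=scores.__getitem__)
        hits += int(top == gold)
    return hits / len(score_matrix)


def pairwise_accuracy(model_scores, gold_scores):
    """Fraction of item pairs with different gold relevance that the model orders correctly.

    Pairs with equal gold relevance are skipped; a tie in model scores counts as wrong.
    """
    agree = total = 0
    for i, j in combinations(range(len(gold_scores)), 2):
        gold_diff = gold_scores[i] - gold_scores[j]
        if gold_diff == 0:
            continue  # no preferred order to check
        total += 1
        agree += int((model_scores[i] - model_scores[j]) * gold_diff > 0)
    return agree / total


# Toy inputs (hypothetical): two queries, each scored against three candidates.
p1 = precision_at_1([[0.9, 0.1, 0.3], [0.2, 0.4, 0.8]], correct_index=[0, 1])
# Toy inputs (hypothetical): model scores vs. gold relevance for three items.
pa = pairwise_accuracy([0.2, 0.5, 0.9], gold_scores=[1, 3, 2])
print(p1, pa)
```

In the toy example, the first query's top candidate is correct and the second's is not, giving P@1 = 0.5. The model orders two of the three pairs correctly, giving a pairwise accuracy of 2/3.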

* **What the data suggests:** Within each series, configurations that score higher on retrieval also tend to score higher on ranking. This suggests that the two tasks, while different, draw on shared representational strengths. Improving a model's core visual-semantic understanding therefore likely benefits both.

* **Model Comparison:** The `RN50x64` variant is a ResNet-50 scaled further than `RN50x16`, as the multiplier in its name indicates. It shows the best overall performance, which suggests that added model capacity is associated with gains in both retrieval and ranking. The `ViT-B/16` (Vision Transformer) model performs competitively, matching RN50x64 in the mid-range (P@1 ≈ 29 to 30.5).

* **Practical Implication:** Some applications need both accurate retrieval and fine-grained ranking (e.g., search engines, recommendation systems). For these, configurations toward the top-right of the plot (high P@1 and high accuracy) are the natural candidates. Among them, the `RN50x64` points reach the highest Pairwise Ranking Accuracy in this evaluation.

* **Investigative Note:** The `p=` values in the legend are not defined. They do not increase with the scaling factor in the model names (RN50x64 has p=66, while RN50x16 has p=91), which argues against a parameter count. They could instead be a hyperparameter, a checkpoint ID, or a per-series statistic, and clarifying this would be essential for a full technical understanding. The spread within each series (especially RN50x16) suggests that factors beyond the base architecture (e.g., training data, hyperparameters, fine-tuning) substantially affect performance on these metrics.