## Diagram: Neuro-Symbolic Probabilistic Circuit Architecture

### Overview

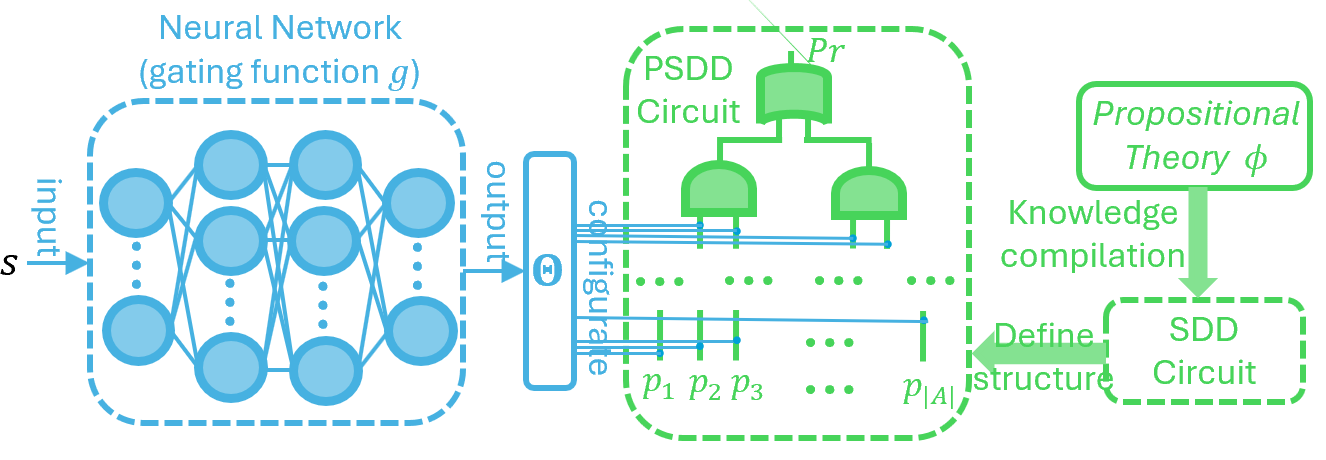

The image is a technical diagram of a hybrid system that couples a neural network with a probabilistic circuit (PSDD). Data flows from left to right: a neural network processes an input and configures the parameters of the PSDD. The structure of the PSDD is set separately, by a propositional theory that is compiled into an SDD on the right side of the diagram.

### Components

The diagram is segmented into three primary regions:

1. **Left Region (Neural Network):**

    * **Label:** "Neural Network (gating function *g*)"

    * **Input:** An arrow labeled "input" points to the network, with the symbol "**S**" at its origin.

    * **Structure:** A multi-layer network of blue circles (nodes) connected by lines, representing a feedforward neural network.

    * **Output:** An arrow labeled "output" exits the network to the right.

2. **Central Region (Probabilistic Circuit):**

    * **Main Container:** A large green dashed box labeled "**PSDD Circuit**".

    * **Internal Structure:** A tree-like hierarchy of green shapes (resembling AND/OR gates). The top node is labeled "**Pr**".

    * **Parameters:** Below the tree, a series of vertical green lines is labeled "**p₁ p₂ p₃ ... p_|A|**", indicating a set of |A| parameters or probabilities.

    * **Configuration Interface:** To the left of the PSDD box, a blue rectangular component receives the neural network's output. It is labeled "**configure**" and contains a symbol "**⨝**" (likely representing a join or configuration operation). Multiple blue lines connect this component to the PSDD circuit's parameter lines.

3. **Right Region (Knowledge Compilation):**

    * **Source Theory:** A green box labeled "**Propositional Theory φ**".

    * **Process:** A downward arrow labeled "**Knowledge compilation**" points to the next component.

    * **Compiled Structure:** A green dashed box labeled "**SDD Circuit**".

    * **Relationship:** A green arrow labeled "**Define structure**" points from the SDD Circuit back to the PSDD Circuit, indicating the SDD defines the PSDD's structure.
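
To make the right-hand pipeline more concrete, the following is a minimal sketch of compiling a propositional theory into a decision structure. It is a simplified stand-in, not an SDD compiler: it uses plain Shannon expansion over a fixed variable order with brute-force enumeration. The toy theory `A or B`, the function names, and the tuple encoding are all assumptions made for illustration and do not come from the diagram.

```python
from itertools import product
from typing import Callable, Dict, Sequence, Tuple, Union

Assignment = Dict[str, bool]
# A circuit is either a constant (True/False) or a decision (var, if_true, if_false).
Circuit = Union[bool, Tuple[str, object, object]]


def compile_theory(phi: Callable[[Assignment], bool],
                   variables: Sequence[str],
                   partial: Union[Assignment, None] = None) -> Circuit:
    """Shannon-expand phi over `variables` in the given order (exponential time)."""
    partial = dict(partial or {})
    if not variables:
        return bool(phi(partial))  # every variable is fixed, so phi is a constant
    var, rest = variables[0], variables[1:]
    if_true = compile_theory(phi, rest, {**partial, var: True})
    if_false = compile_theory(phi, rest, {**partial, var: False})
    if if_true == if_false:
        return if_true  # the variable is irrelevant here, so drop the decision
    return (var, if_true, if_false)


def evaluate(circuit: Circuit, assignment: Assignment) -> bool:
    """Follow decisions until a constant leaf is reached."""
    while isinstance(circuit, tuple):
        var, if_true, if_false = circuit
        circuit = if_true if assignment[var] else if_false
    return circuit


def phi(a: Assignment) -> bool:
    """Hypothetical toy theory: A or B."""
    return a["A"] or a["B"]


VARIABLES = ["A", "B"]
compiled = compile_theory(phi, VARIABLES)
print(compiled)  # ('A', True, ('B', True, False))

# The compiled structure agrees with phi on every assignment.
agrees = all(
    evaluate(compiled, dict(zip(VARIABLES, values))) == phi(dict(zip(VARIABLES, values)))
    for values in product([False, True], repeat=len(VARIABLES))
)
print(agrees)  # True
```

A real SDD compiler would work from a vtree and share sub-circuits rather than enumerate assignments. The sketch only conveys the idea that the logic fixes the shape of the circuit before any probabilities are attached.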

### Detailed Analysis

* **Flow Direction:** The primary data flow is from left to right: Input **S** → Neural Network → Configuration Signal → PSDD Circuit.

* **Structural Flow:** A secondary flow runs from right to left and carries structure rather than data: Propositional Theory **φ** → (via Knowledge Compilation) → SDD Circuit → (defines structure of) → PSDD Circuit.

* **Component Relationships:**

    * The **Neural Network** acts as a "gating function *g*". Its role is to take an input **S** and produce an output that *configures* the parameters (**p₁...p_|A|**) of the PSDD Circuit.

    * The **PSDD Circuit** (Probabilistic Sentential Decision Diagram) is the core probabilistic model. Its internal tree structure (with root **Pr**) is not arbitrary; it is formally defined by the **SDD Circuit** (Sentential Decision Diagram).

    * The **SDD Circuit** is itself derived from a **Propositional Theory φ** through a process of **knowledge compilation**. That is, logical constraints or rules are compiled into a circuit representation (the SDD) that supports efficient reasoning.

* **Key Symbols:**

    * **S**: Input variable or state.

    * **g**: The gating function implemented by the neural network.

    * **Pr**: Likely denotes the root of the probabilistic circuit, representing the overall probability distribution.

    * **p₁...p_|A|**: A set of parameters (e.g., probabilities, weights) within the PSDD that are configured by the neural network.

    * **φ**: A propositional logic theory (a set of logical formulas).

    * **⨝**: Symbol within the "configure" block, suggesting a join, product, or configuration operation between the neural network output and the circuit parameters.
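
The parametric side described above can be sketched as follows. This is a minimal illustration, assuming that *g* is a single linear layer and that its outputs are grouped into normalized distributions (one group per decision node in the circuit). The diagram specifies neither choice, and the weights, group sizes, and function names are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one group of logits."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def gating_function(s: Sequence[float],
                    weights: Sequence[Sequence[float]],
                    biases: Sequence[float],
                    group_sizes: Sequence[int]) -> List[List[float]]:
    """Map input s to circuit parameters, normalized within each group."""
    logits = [sum(w * x for w, x in zip(row, s)) + b
              for row, b in zip(weights, biases)]
    groups, start = [], 0
    for size in group_sizes:
        groups.append(softmax(logits[start:start + size]))
        start += size
    return groups


# Hypothetical numbers: 2 input features, 5 parameters split into groups of 3 and 2.
WEIGHTS = [[0.5, -1.0], [1.5, 0.2], [-0.3, 0.8], [0.0, 1.0], [1.0, 0.0]]
BIASES = [0.0, 0.1, -0.2, 0.3, 0.0]
GROUP_SIZES = [3, 2]

params_a = gating_function([1.0, 2.0], WEIGHTS, BIASES, GROUP_SIZES)
params_b = gating_function([0.0, 0.0], WEIGHTS, BIASES, GROUP_SIZES)
for group in params_a:
    print([round(p, 3) for p in group], "sum =", round(sum(group), 6))
```

Different inputs **S** yield different parameter values, while the number and grouping of the parameters stay fixed, since in this sketch they are determined by the circuit rather than by the network.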

### Key Observations

1. **Hybrid Architecture:** The diagram explicitly combines connectionist (neural network) and symbolic (propositional theory, SDD) AI paradigms.

2. **Two-Part Configuration:** The PSDD circuit is configured in two independent ways:

    * **Structurally:** By the SDD circuit derived from the logical theory.

    * **Parametrically:** By the neural network's output based on input **S**.

3. **Directional Arrows:** The arrow labels distinguish two roles. The neural network's output *configures* the PSDD's parameters, while the SDD *defines the structure* of the PSDD. Structure and parameters are therefore set by separate components.

4. **Visual Grouping:** Dashed boxes (blue for the neural network, green for the PSDD and SDD) are used to group related components, emphasizing the modular design.
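
The separation between structure and parameters can be illustrated with a small evaluator. The sketch below assumes a hand-written circuit for the toy theory `A or B`; the node encoding and the parameter values are hypothetical and are not taken from the diagram.

```python
from itertools import product

# Fixed structure for the toy theory (A or B). Node conventions for this sketch:
#   ("lit", var, value)          -> 1.0 if assignment[var] == value, else 0.0
#   ("free", var, k)             -> params[k] if var is true, else 1 - params[k]
#   ("or", [(k, prime, sub)...]) -> sum over elements of params[k] * prime * sub
STRUCTURE = ("or", [
    (0, ("lit", "A", True), ("free", "B", 2)),     # A is true, B is unconstrained
    (1, ("lit", "A", False), ("lit", "B", True)),  # A is false, so B must be true
])


def evaluate(node, assignment, params):
    """Probability of a complete assignment under the given parameters."""
    kind = node[0]
    if kind == "lit":
        _, var, value = node
        return 1.0 if assignment[var] == value else 0.0
    if kind == "free":
        _, var, k = node
        return params[k] if assignment[var] else 1.0 - params[k]
    _, elements = node
    return sum(
        params[k] * evaluate(prime, assignment, params) * evaluate(sub, assignment, params)
        for k, prime, sub in elements
    )


def distribution(params):
    """Evaluate the fixed structure on all four assignments to (A, B)."""
    return {
        (a, b): evaluate(STRUCTURE, {"A": a, "B": b}, params)
        for a, b in product([True, False], repeat=2)
    }


# Two parameter settings for the same structure; params[0] + params[1] must be 1.
dist_1 = distribution([0.7, 0.3, 0.9])
dist_2 = distribution([0.2, 0.8, 0.5])
for dist in (dist_1, dist_2):
    print({key: round(value, 3) for key, value in dist.items()})
```

Both parameter settings give probability 0 to the assignment `A = False, B = False`, because the structure has no branch that admits it. Only the distribution over the remaining three assignments changes.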

### Interpretation

This diagram represents a **neuro-symbolic probabilistic reasoning system**. Its purpose is to merge the strengths of different AI approaches:

* **Neural Network (Subsymbolic):** Excels at learning patterns and mappings from raw data (input **S**). Here, it serves as a flexible, learnable "configurator" that adjusts the probabilistic model's parameters based on the specific input instance.

* **Symbolic Knowledge (Propositional Theory φ):** Encodes explicit, human-readable rules, constraints, or domain knowledge. Compiling this into an SDD circuit provides a structured, efficient representation of the logical relationships.

* **Probabilistic Circuit (PSDD):** Acts as the unifying inference engine. Its structure is constrained by logic (via the SDD), ensuring adherence to domain rules. Its parameters are dynamically set by the neural network, allowing it to adapt to data.

**The underlying narrative is one of constrained adaptation.** The system does not learn an unconstrained black-box model. Instead, the neural network learns to *instantiate* a probabilistic model whose structure is guaranteed to respect a predefined set of logical rules (the theory **φ**). Such a design could suit tasks that need both data-driven prediction and logical consistency, such as diagnostic systems, planning under uncertainty, or explainable AI. In each case the neural network interprets the context (**S**), and the symbolic circuit keeps the reasoning within the logical constraints.
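
The idea of constrained adaptation can be checked end to end on a toy case. The sketch below assumes a theory **φ** stating that exactly one of three variables is true, for which the circuit collapses to a single decision node with one branch per model of **φ**. The gating function, its weights, and all names are hypothetical.

```python
import math
from itertools import product
from typing import List, Sequence, Tuple

NUM_VARS = 3  # toy theory phi: exactly one of three variables is true


def phi(assignment: Tuple[bool, ...]) -> bool:
    return sum(assignment) == 1


def g(s: Sequence[float]) -> List[float]:
    """Hypothetical gating function: fixed linear scores followed by a softmax."""
    weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
    logits = [sum(w * x for w, x in zip(row, s)) for row in weights]
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def pr(assignment: Tuple[bool, ...], s: Sequence[float]) -> float:
    """Probability of an assignment under the circuit configured by g(s)."""
    if not phi(assignment):
        return 0.0  # the structure has no branch for assignments violating phi
    return g(s)[assignment.index(True)]


def mass_report(s: Sequence[float]) -> Tuple[float, float]:
    """Return (mass on models of phi, mass on violations of phi) for input s."""
    allowed = violating = 0.0
    for assignment in product([False, True], repeat=NUM_VARS):
        p = pr(assignment, s)
        if phi(assignment):
            allowed += p
        else:
            violating += p
    return allowed, violating


for s in ([0.0, 0.0], [2.0, -1.0], [-3.0, 5.0]):
    allowed, violating = mass_report(s)
    print(s, round(allowed, 6), violating)
```

For every input, all probability mass falls on assignments that satisfy **φ**, while the way that mass is divided among them depends on **S**.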