TECHNICAL ASSET FINGERPRINT

c0d7692cbbf8f05eaaf80d67


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

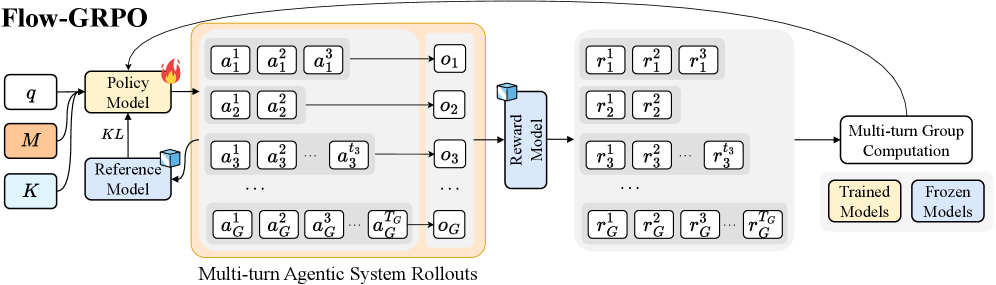

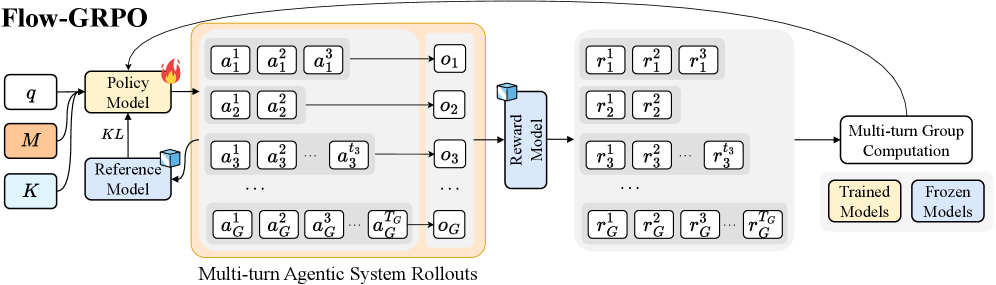

## Diagram: Flow-GRPO Architecture

### Overview

The image is a diagram illustrating the architecture of a system called Flow-GRPO. It depicts the flow of information and processes involved in multi-turn agentic system rollouts, reward modeling, and group computation. The diagram includes components such as Policy Model, Reference Model, Reward Model, and Multi-turn Group Computation, along with representations of actions, observations, and rewards.

### Components/Axes

* **Title:** Flow-GRPO (top-left)

* **Input Parameters (Left):**

    * `q` (white box)

    * `M` (orange box)

    * `K` (light blue box)

* **Models:**

    * `Policy Model` (orange box, top-center): Receives input from `q` and `Reference Model`. Has a fire icon on the top-right.

    * `Reference Model` (light blue box, bottom-center): Receives input from `q`. Sends output to `Policy Model` via `KL`.

    * `Reward Model` (light blue box, center-right): Receives input from the "Multi-turn Agentic System Rollouts".

* **Multi-turn Agentic System Rollouts (Center):**

    * Enclosed in an orange rounded rectangle.

    * Contains multiple rows, each representing a rollout.

    * Each row contains an action sequence `a_i^1`, `a_i^2`, `a_i^3`, ..., `a_i^{t_i}` and an observation `o_i`, where `t_i` is the number of turns in rollout `i`.

    * The index `i` ranges from 1 to G (e.g., `a_1^1`, `a_2^1`, `a_3^1`, ..., `a_G^1`).

* **Rewards (Right):**

    * Enclosed in a light gray rounded rectangle.

    * Contains multiple rows, each corresponding to a rollout.

    * Each row contains a reward sequence `r_i^1`, `r_i^2`, `r_i^3`, ..., `r_i^{t_i}`.

    * The index `i` ranges from 1 to G (e.g., `r_1^1`, `r_2^1`, `r_3^1`, ..., `r_G^1`).

* **Multi-turn Group Computation (Bottom-Right):** A white box with rounded corners. Receives input from the "Rewards" section and sends feedback to the "Policy Model".

* **Legend (Bottom-Right):**

    * `Trained Models` (orange box)

    * `Frozen Models` (light blue box)

### Detailed Analysis or Content Details

* **Flow of Information:**

    * The `Policy Model` receives inputs `q` and feedback from the `Reference Model` (via `KL`).

    * The `Policy Model` generates actions that are part of the "Multi-turn Agentic System Rollouts".

    * The rollouts produce observations `o_i`.

    * The `Reward Model` takes the rollouts as input and generates rewards `r_i^j`.

    * The rewards are used in "Multi-turn Group Computation".

    * The "Multi-turn Group Computation" provides feedback to the `Policy Model`.

* **Action and Reward Sequences:**

    * Actions are represented as `a_i^j`, where `i` is the rollout index and `j` is the time step.

    * Rewards are represented as `r_i^j`, where `i` is the rollout index and `j` is the time step.

* **Models:**

    * The `Policy Model` is marked with a fire icon, possibly indicating active training or optimization.

    * The `Reference Model` provides a baseline or comparison for the `Policy Model`.

    * The `Reward Model` evaluates the performance of the agentic system.

### Key Observations

* The diagram illustrates a closed-loop system: the `Policy Model` generates actions, the `Reward Model` scores the resulting rollouts, and the `Policy Model` is updated based on these rewards.

* The "Multi-turn Agentic System Rollouts" block holds G rollouts (indexed by `i`), each unfolding over multiple time steps.

* The `KL` divergence is used to regulate the `Policy Model` with respect to the `Reference Model`.

* The legend indicates the presence of both trained and frozen models within the system.
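The `KL` link between the two models can be illustrated with a minimal sketch. The diagram only shows the label `KL`, so the discrete form below, the function name `kl_divergence`, and the example distributions are all assumptions made for illustration.

```python
import math

def kl_divergence(policy_probs, reference_probs):
    """KL(policy || reference) for two discrete distributions over the same actions.

    Assumes every reference probability is strictly positive.
    """
    return sum(
        p * math.log(p / q)
        for p, q in zip(policy_probs, reference_probs)
        if p > 0.0  # terms with p == 0 contribute nothing
    )

# Hypothetical next-action distributions over three candidate actions.
reference = [0.5, 0.3, 0.2]
drifted_policy = [0.8, 0.1, 0.1]

kl_same = kl_divergence(reference, reference)          # identical models: no penalty
kl_drifted = kl_divergence(drifted_policy, reference)  # drifted policy: positive penalty
```

A policy identical to the reference incurs zero divergence, and the value grows as the policy drifts away, which is what lets the term act as a regularizer.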

### Interpretation

The Flow-GRPO architecture appears to be a reinforcement learning framework designed for multi-agent systems. The `Policy Model` learns to generate optimal actions through interaction with the environment, guided by a `Reference Model` and evaluated by a `Reward Model`. The "Multi-turn Group Computation" likely involves aggregating rewards across multiple agents and time steps to provide a comprehensive evaluation signal. The use of `KL` divergence suggests a regularization technique to prevent the `Policy Model` from deviating too far from the `Reference Model`. The distinction between trained and frozen models implies a modular design where certain components can be fixed while others are actively learned.
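One plausible reading of the "Multi-turn Group Computation" box is a group-relative normalization of rewards. The sketch below assumes the per-rollout score is the sum of its per-turn rewards and that scores are standardized against the group mean and standard deviation. The diagram confirms neither choice, and `group_advantages`, `eps`, and the sample rewards are hypothetical.

```python
from statistics import mean, pstdev

def group_advantages(reward_rows, eps=1e-8):
    """Standardize each rollout's total reward against the group of G rollouts."""
    returns = [sum(row) for row in reward_rows]  # one scalar per rollout
    mu = mean(returns)
    sigma = pstdev(returns)                      # population std over the group
    return [(ret - mu) / (sigma + eps) for ret in returns]

# Hypothetical rewards for G = 4 rollouts of different lengths.
reward_rows = [
    [1.0, 1.0, 1.0],
    [0.0, 0.0],
    [1.0, 1.0],
    [0.0, 0.0, 0.0],
]
advantages = group_advantages(reward_rows)
```

Rollouts that score above the group average receive a positive advantage and those below receive a negative one, so the update signal depends on relative rather than absolute reward.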


EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Diagram: Flow-GRPO System Architecture

### Overview

This image presents a system architecture diagram titled "Flow-GRPO," illustrating a process for training a policy model within a multi-turn agentic system. The diagram details the interaction between a policy model, a reference model, a reward model, and various inputs and computational steps, including multi-turn rollouts and group computation, with clear distinctions between trained and frozen model components.

### Components/Axes

The diagram is structured as a left-to-right flow with feedback loops.

**Input Components (Left):**

* **q:** A white rounded rectangle, representing an input.

* **M:** An orange rounded rectangle, representing an input.

* **K:** A light blue rounded rectangle, representing an input.

**Model Components:**

* **Policy Model:** An orange rounded rectangle, centrally located on the left side. It has a small red flame icon on its top-right, indicating it is actively being trained or updated.

* **Reference Model:** A light blue rounded rectangle, positioned below the Policy Model. It has a small light blue cube icon on its top-right, indicating it is a frozen or fixed component.

* **Reward Model:** A light blue vertically oriented rounded rectangle, positioned in the middle of the diagram. It also has a small light blue cube icon on its top-left, indicating it is a frozen or fixed component.

**Process Blocks:**

* **Multi-turn Agentic System Rollouts:** A large orange-bordered rounded rectangle, spanning the middle-left section of the diagram. This block contains multiple rows of action sequences and observations.

* Each row represents a sequence of actions `a_i^1, a_i^2, ..., a_i^{T_i}` (where `i` denotes the group/agent and `T_i` denotes the number of turns for that group). These action sequences are enclosed in light grey rounded rectangles.

* Each action sequence leads to an observation `o_i` (e.g., `o_1, o_2, o_3, ..., o_G`), represented by white rounded rectangles.

* **Multi-turn Group Computation:** A white rounded rectangle, positioned on the far right, above the legend.

**Output/Intermediate Data Blocks:**

* A large light grey-bordered rounded rectangle, positioned in the middle-right section of the diagram. This block contains multiple rows of reward sequences.

* Each row represents a sequence of rewards `r_i^1, r_i^2, ..., r_i^{T_i}` (where `i` denotes the group/agent and `T_i` denotes the number of turns for that group). These reward sequences are enclosed in light grey rounded rectangles.

**Legend (Bottom-right):**

* **Trained Models:** An orange rounded rectangle.

* **Frozen Models:** A light blue rounded rectangle.

### Detailed Analysis

**Flow from Inputs to Policy Model:**

* Input `q` feeds into the `Policy Model`.

* Input `M` feeds into the `Policy Model`.

* Input `K` feeds into the `Reference Model`.

* The `Reference Model` feeds into the `Policy Model` with a connection labeled `KL`, suggesting a Kullback-Leibler divergence constraint or regularization.

**Multi-turn Agentic System Rollouts:**

* The `Policy Model` outputs to the `Multi-turn Agentic System Rollouts` block, specifically influencing the generation of actions `a_i^t`.

* The `Reference Model` also outputs to the `Multi-turn Agentic System Rollouts` block, specifically influencing the generation of actions `a_i^t`.

* Within the "Multi-turn Agentic System Rollouts" block:

    * Row 1: `a_1^1, a_1^2, a_1^3` leads to `o_1`.

    * Row 2: `a_2^1, a_2^2` leads to `o_2`.

    * Row 3: `a_3^1, a_3^2, ..., a_3^{t_3}` leads to `o_3`.

    * Ellipses indicate more rows.

    * Last Row: `a_G^1, a_G^2, a_G^3, ..., a_G^{T_G}` leads to `o_G`.

* The `o_i` observations are then fed into the `Reward Model`.

**Reward Generation:**

* The `Reward Model` takes inputs from the `o_i` observations (e.g., an arrow from `o_3` points to the `Reward Model`).

* The `Reward Model` outputs to the light grey-bordered block containing reward sequences `r_i^t`.

    * Row 1: `r_1^1, r_1^2, r_1^3`.

    * Row 2: `r_2^1, r_2^2`.

    * Row 3: `r_3^1, r_3^2, ..., r_3^{t_3}`.

    * Ellipses indicate more rows.

    * Last Row: `r_G^1, r_G^2, r_G^3, ..., r_G^{T_G}`.

**Policy Update Loop:**

* The reward sequences (from the light grey-bordered block) feed into the `Multi-turn Group Computation` block.

* The `Multi-turn Group Computation` block has a feedback loop, with an arrow pointing back to the `Policy Model`. This indicates that the computation based on rewards is used to update the `Policy Model`.

**Model Status (from Legend):**

* **Trained Models (Orange):** `Policy Model`, `M`. The orange border of "Multi-turn Agentic System Rollouts" suggests this process is part of the trained system's operation.

* **Frozen Models (Light Blue):** `Reference Model`, `Reward Model`, `K`. The cube icons on `Reference Model` and `Reward Model` reinforce their frozen status.
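The row layout described above (a variable-length action sequence, one observation, and a matching reward sequence per row) can be sketched as a small data structure. The class name `Rollout`, its fields, and the sample values are hypothetical and only mirror the labels in the diagram.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Rollout:
    actions: List[str]     # a_i^1 ... a_i^{T_i}
    observation: str       # o_i
    rewards: List[float]   # r_i^1 ... r_i^{T_i}

    def is_aligned(self) -> bool:
        """Each action should have exactly one reward."""
        return len(self.actions) == len(self.rewards)

# The first two rows of the diagram, with placeholder reward values.
group = [
    Rollout(["a_1^1", "a_1^2", "a_1^3"], "o_1", [0.0, 0.0, 1.0]),
    Rollout(["a_2^1", "a_2^2"], "o_2", [0.0, 0.0]),
]

turn_counts = [len(r.actions) for r in group]
all_aligned = all(r.is_aligned() for r in group)
```

Storing rows as lists rather than a fixed-width array keeps the differing turn counts explicit.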

### Key Observations

* The `Policy Model` is the primary component undergoing training, indicated by its orange color and flame icon.

* The `Reference Model` and `Reward Model` are fixed or pre-trained components, indicated by their light blue color and cube icons.

* The system involves multi-turn interactions (`t` superscript) and multiple groups/agents (`i` subscript).

* A `KL` divergence term is used to regularize the `Policy Model`'s updates with respect to the `Reference Model`, a common technique in reinforcement learning (e.g., PPO).

* The process is iterative, with rewards from rollouts feeding back to update the policy.

### Interpretation

The Flow-GRPO diagram illustrates a reinforcement learning (RL) framework, likely for training a policy in a multi-agent, multi-turn environment. The core idea is to train a `Policy Model` (which generates actions `a_i^t`) by interacting with an environment (represented by the "Multi-turn Agentic System Rollouts" and subsequent reward generation).

1. **Policy Training:** The `Policy Model` is the trainable component, taking inputs `q` and `M`. It generates actions `a_i^t` for multiple agents/groups over multiple turns.

2. **Reference and Regularization:** A `Reference Model` (frozen) provides a baseline or constraint for the `Policy Model`'s updates, enforced by a `KL` divergence term. This prevents the policy from making drastic changes, promoting stable learning. Input `K` might be related to the reference model's parameters or state.

3. **Rollouts and Observations:** The generated actions `a_i^t` are executed in a simulated or real environment (the "Multi-turn Agentic System Rollouts"), leading to observations `o_i`. The orange border of this block suggests it's an active part of the training process, driven by the trained policy.

4. **Reward Evaluation:** The `Reward Model` (frozen) evaluates the observations `o_i` to produce rewards `r_i^t`. This implies the reward function is fixed and not learned during this process, providing a stable signal for policy improvement.

5. **Policy Update:** The `Multi-turn Group Computation` aggregates or processes these rewards to generate an update signal that is fed back to the `Policy Model`. This completes the RL loop, where the policy learns to maximize cumulative rewards.

"Flow-GRPO" is likely the name of a specific algorithm. "GRPO" most plausibly stands for Group Relative Policy Optimization, a group-based policy optimization method, and "Flow" might imply a continuous or iterative process. The `KL` divergence term suggests the method shares elements with PPO. The distinction between "Trained Models" and "Frozen Models" is crucial because it shows which components adapt and which stay fixed during training. The architecture suggests a controlled training process in which fixed reference and reward models guide the policy's learning.
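Since the interpretation points to PPO-style elements, a PPO-style clipped surrogate with a KL penalty is sketched below. This is the generic form found in PPO-family methods, not a confirmed detail of Flow-GRPO. The function names and the values of `clip_eps` and `beta` are assumptions.

```python
def clipped_surrogate(ratio, advantage, clip_eps=0.2):
    """PPO-style clipped objective for one action (higher is better)."""
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    return min(ratio * advantage, clipped_ratio * advantage)

def regularized_objective(ratio, advantage, kl, beta=0.01, clip_eps=0.2):
    """Clipped surrogate minus a KL penalty toward the reference model."""
    return clipped_surrogate(ratio, advantage, clip_eps) - beta * kl

# ratio = pi_new(a) / pi_old(a) for a sampled action (hypothetical values).
gain_capped = clipped_surrogate(1.5, 1.0)    # large ratio on a good action is capped
loss_kept = clipped_surrogate(0.5, -1.0)     # bad action keeps the more pessimistic value
penalized = regularized_objective(1.0, 2.0, kl=0.5, beta=0.1)
```

The clip bounds how much a single update can exploit a favorable advantage, while the penalty term pulls the policy back toward the frozen reference.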


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Flow-GRPO

### Overview

This diagram illustrates the Flow-GRPO (likely an acronym for a reinforcement learning algorithm) process, depicting the interaction between a Policy Model, a Reference Model, and a Reward Model across multiple turns of agentic system rollouts. The diagram shows how actions are generated, observations are received, and rewards are calculated, with a distinction between trained and frozen models.

### Components/Axes

The diagram consists of the following key components:

* **Policy Model:** Represented by a flame icon, receiving input 'q' and outputting actions (a).

* **Reference Model:** Represented by a cube icon, receiving input 'K' and contributing to the KL divergence calculation.

* **Reward Model:** Represented by a cube icon, receiving observations (o) and outputting rewards (r).

* **Multi-turn Agentic System Rollouts:** A grid-like structure showing the sequence of actions, observations, and rewards across multiple turns (1 to G).

* **Multi-turn Group Computation:** A box indicating the aggregation of results across turns.

* **KL:** Label indicating the Kullback-Leibler divergence calculation.

* **Legend:** Distinguishes between "Trained Models" (light green) and "Frozen Models" (light blue).

* **Inputs:** 'q', 'M', 'K' are labeled as inputs.

* **Outputs:** 'a', 'o', 'r' are labeled as outputs.

### Detailed Analysis or Content Details

The diagram shows a flow from left to right.

1. **Inputs:** The process begins with inputs 'q', 'M', and 'K'. 'q' feeds into the Policy Model. 'K' feeds into the Reference Model. 'M' is connected to the KL divergence calculation.

2. **Policy Model & Actions:** The Policy Model generates a series of actions (`a_1^1`, `a_1^2`, `a_1^3`, ..., `a_G^1`, `a_G^2`, `a_G^3`, ..., `a_G^G`) across G turns. The actions are arranged in a grid of G rows and G columns.

3. **Observations:** These actions lead to a series of observations (`o_1`, `o_2`, `o_3`, ..., `o_G`).

4. **Reward Model & Rewards:** The Reward Model receives the observations and outputs corresponding rewards (`r_1^1`, `r_1^2`, `r_1^3`, ..., `r_G^1`, `r_G^2`, `r_G^3`, ..., `r_G^G`), also arranged in a G x G grid.

5. **Multi-turn Group Computation:** The outputs from the rollouts are then fed into a "Multi-turn Group Computation" block.

6. **Model Status:** The Policy Model and Reference Model are color-coded. The Policy Model is shown as a light green box, indicating it is a "Trained Model". The Reference Model is shown as a light blue box, indicating it is a "Frozen Model". The Reward Model is also light green, indicating it is a "Trained Model".

### Key Observations

* The diagram emphasizes the iterative nature of the process, with multiple turns of agentic rollouts.

* The distinction between trained and frozen models suggests a specific training strategy where some models are updated while others remain fixed.

* The KL divergence calculation likely plays a role in regularizing the Policy Model's behavior relative to the Reference Model.

* The grid structure of actions and rewards indicates a parallel or batched processing of multiple rollouts.

### Interpretation

The Flow-GRPO diagram represents a reinforcement learning framework that leverages a reference model to guide the training of a policy model. The use of a frozen reference model suggests a desire to maintain a certain level of stability or prior knowledge during learning. The multi-turn rollouts and group computation indicate that the algorithm explores a range of possible actions and evaluates their cumulative rewards. The KL divergence term likely encourages the policy to stay close to the reference model, preventing it from deviating too far from established behavior. The diagram highlights a sophisticated approach to reinforcement learning that combines elements of imitation learning (through the reference model) and exploration (through the policy model). The color coding of the models is crucial for understanding the training dynamics of the system. The diagram suggests a system designed for complex, multi-step decision-making tasks.
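In practice the KL term is often estimated from log-probabilities of sampled actions rather than computed over the full distribution. The sketch below uses a common non-negative per-sample estimator (reference-to-policy ratio, minus its log, minus one). Whether Flow-GRPO uses this estimator is an assumption, as are the function name and the example distributions.

```python
import math

def kl_estimate(logp_policy, logp_reference):
    """Per-sample estimate of KL(policy || reference) for an action drawn from the policy."""
    log_ratio = logp_reference - logp_policy
    return math.exp(log_ratio) - log_ratio - 1.0

# Hypothetical distributions over three actions.
policy = [0.8, 0.1, 0.1]
reference = [0.5, 0.3, 0.2]

# Averaging the estimator under the policy recovers the exact KL divergence.
expected_estimate = sum(
    p * kl_estimate(math.log(p), math.log(q)) for p, q in zip(policy, reference)
)
exact_kl = sum(p * math.log(p / q) for p, q in zip(policy, reference))
no_drift = kl_estimate(math.log(0.3), math.log(0.3))
```

Each per-sample value is non-negative, which keeps the penalty well behaved even when only a few actions are sampled.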


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Technical Diagram: Flow-GRPO Architecture

### Overview

The image displays a technical flowchart titled "Flow-GRPO," illustrating a machine learning training pipeline for a multi-turn agentic system. The diagram shows the flow of data from initial inputs through policy and reference models, generating multi-turn rollouts, which are then scored by a reward model to facilitate group-based computation for model updates.

### Components/Axes

The diagram is organized into several distinct, connected regions:

1. **Input Block (Leftmost):**

* Three input boxes labeled: `q`, `M`, and `K`.

* `M` is highlighted with an orange background.

2. **Model Block (Center-Left):**

* **Policy Model:** A yellow box with a flame icon (indicating a "Trained Model").

* **Reference Model:** A blue box with a cube icon (indicating a "Frozen Model").

* A bidirectional arrow labeled `KL` connects the Policy Model and Reference Model.

3. **Multi-turn Agentic System Rollouts (Center):**

* A large, light-orange container with this title at the bottom.

* It contains multiple rows, each representing a rollout sequence for an agent or group (indexed by `G`).

* Each row consists of a series of action boxes (`a`) leading to an observation box (`o`).

* **Row Structure:**

* Row 1: `a₁¹`, `a₁²`, `a₁³` → `o₁`

* Row 2: `a₂¹`, `a₂²` → `o₂`

* Row 3: `a₃¹`, `a₃²`, ..., `a₃^{t₃}` → `o₃`

* Ellipsis (`...`) indicating intermediate rows.

* Final Row: `a_G¹`, `a_G²`, `a_G³`, ..., `a_G^{t_G}` → `o_G`

4. **Reward Model Block (Center-Right):**

* A blue box labeled "Reward Model" with a cube icon (Frozen Model).

* It receives input from all observation boxes (`o₁` through `o_G`).

5. **Reward Output Block (Right of Reward Model):**

* A large, light-gray container.

* It contains multiple rows of reward values (`r`), corresponding to the actions in the rollouts.

* **Row Structure:**

* Row 1: `r₁¹`, `r₁²`, `r₁³`

* Row 2: `r₂¹`, `r₂²`

* Row 3: `r₃¹`, `r₃²`, ..., `r₃^{t₃}`

* Ellipsis (`...`).

* Final Row: `r_G¹`, `r_G²`, `r_G³`, ..., `r_G^{t_G}`

6. **Computation & Output Block (Rightmost):**

* A box labeled "Multi-turn Group Computation".

* An arrow points from the Reward Output Block to this computation box.

* Two output boxes emerge from the computation:

* "Trained Models" (yellow background).

* "Frozen Models" (blue background).

7. **Legend (Bottom-Right):**

* A small box explaining the iconography:

* Yellow box with flame icon: "Trained Models"

* Blue box with cube icon: "Frozen Models"

8. **Global Flow Arrow:**

* A large, curved arrow originates from the "Multi-turn Group Computation" box and points back to the "Policy Model," indicating an iterative training loop.

### Detailed Analysis

* **Data Flow:** The process begins with inputs `q`, `M`, `K`. The Policy Model (trained) and Reference Model (frozen) interact via a KL divergence constraint. The Policy Model generates multi-turn action sequences (`a`) for `G` different agents or groups, resulting in observations (`o`).

* **Rollout Structure:** The number of actions per rollout varies. Agent 1 has 3 actions, Agent 2 has 2 actions, Agent 3 has `t₃` actions, and the G-th agent has `t_G` actions. This indicates a flexible, multi-turn environment.

* **Reward Assignment:** The frozen Reward Model evaluates each observation (`o`) and assigns a reward (`r`) to *each individual action* within the sequence. The reward structure mirrors the action structure exactly (e.g., `a₁¹` receives reward `r₁¹`).

* **Group Computation:** Rewards from all agents and all turns are aggregated in the "Multi-turn Group Computation" step. This step's output is used to update the "Trained Models" (presumably the Policy Model) while keeping "Frozen Models" (Reference, Reward) unchanged.

* **Training Loop:** The final arrow back to the Policy Model confirms this is an iterative reinforcement learning or optimization process, where group-based reward signals are used to refine the policy.
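One way the reward rows could come to mirror the action rows exactly is by broadcasting a single outcome score for `o_i` to every turn of rollout `i`. The sketch below assumes that mechanism. The diagram does not state how per-turn rewards are derived, and the function name and sample values are hypothetical.

```python
def broadcast_outcome_reward(num_turns, outcome_score):
    """Assign the same outcome score to every turn of one rollout."""
    return [outcome_score] * num_turns

# Hypothetical group: three rollouts with 3, 2, and 4 turns.
turn_counts = [3, 2, 4]
outcome_scores = [1.0, 0.0, 1.0]  # one score per final observation o_i

reward_rows = [
    broadcast_outcome_reward(turns, score)
    for turns, score in zip(turn_counts, outcome_scores)
]
```

Under this assumption every action in a successful rollout shares the credit, and the reward block has the same ragged shape as the action block.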

### Key Observations

1. **Variable-Length Sequences:** The system explicitly handles sequences of different lengths (`t₃`, `t_G`), which is crucial for realistic multi-turn interactions.

2. **Granular Reward Signal:** Rewards are provided at the action-level, not just at the end of a sequence. This allows for fine-grained credit assignment across multiple turns.

3. **Model State Dichotomy:** The diagram strictly separates "Trained" (Policy) and "Frozen" (Reference, Reward) components, a common practice for stabilizing reinforcement learning pipelines such as RLHF.

4. **Group-Based Learning:** The title "Group Computation" and the indexing by `G` suggest the algorithm processes multiple rollouts (from different agents or attempts) simultaneously to compute a more robust training signal.
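Variable-length rollouts raise the question of how per-turn terms are averaged. The sketch below contrasts two options: averaging within each rollout first and then across the group, versus averaging over all turns at once. Which one Flow-GRPO uses is not shown in the diagram, and the function names and loss values are hypothetical.

```python
def group_mean_loss(per_turn_losses):
    """Average within each rollout, then across rollouts (each rollout weighs equally)."""
    per_rollout = [sum(row) / len(row) for row in per_turn_losses]
    return sum(per_rollout) / len(per_rollout)

def flat_mean_loss(per_turn_losses):
    """Average over all turns at once (longer rollouts weigh more)."""
    flat = [value for row in per_turn_losses for value in row]
    return sum(flat) / len(flat)

# Hypothetical per-turn losses: one 4-turn rollout and one 1-turn rollout.
losses = [[1.0, 1.0, 1.0, 1.0], [0.0]]

group_mean = group_mean_loss(losses)  # (1.0 + 0.0) / 2
flat_mean = flat_mean_loss(losses)    # 4.0 / 5
```

The two-level average keeps long rollouts from dominating the update, at the cost of giving each turn in a long rollout less individual weight.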

### Interpretation

This diagram outlines the **Flow-GRPO** (likely "Flow-based Group Relative Policy Optimization") algorithm, a method for training agentic AI systems that operate over multiple turns. The core innovation appears to be the integration of **group-based computation** with **multi-turn, action-level rewards**.

* **Purpose:** To train a policy model (`Policy Model`) to perform well in sequential decision-making tasks by comparing the relative performance of multiple rollouts (a "group") generated under the same conditions.

* **Mechanism:** The KL divergence constraint between the Policy and Reference models prevents the policy from deviating too drastically during updates, ensuring stability. The Reward Model provides dense feedback. The "Group Computation" likely calculates advantages or normalized rewards across the group of rollouts, which are then used to update the policy via a gradient step (indicated by the return arrow).

* **Significance:** This approach addresses key challenges in training multi-turn agents: credit assignment over long horizons and obtaining stable, comparative learning signals. By evaluating groups of trajectories together, it can mitigate noise and learn more robustly than methods relying on single rollouts. The architecture is modular, allowing different frozen models (Reference, Reward) to be plugged in.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Flowchart: Flow-GRPO Architecture

### Overview

The diagram illustrates a multi-stage reinforcement learning framework called Flow-GRPO, depicting the flow of information between policy models, reference models, reward models, and group computation systems. The architecture emphasizes iterative agent-environment interactions and model-based reward aggregation.

### Components/Axes

1. **Input Components** (Left):

- `q`: Query/input state

- `M`: Policy Model parameters

- `K`: Reference Model parameters

- Color coding: Orange (`M`), Blue (`K`)

2. **Core Components**:

- **Policy Model**:

- Inputs: `q`, `M`, `K`

- Outputs: Action sequence `a₁¹, a₁², ..., a₁ᴺ` (per turn)

- **Reference Model**:

- Connected via KL divergence to Policy Model

- **Multi-turn Agentic System Rollouts**:

- Action sequences: `a₁¹, a₂¹, ..., aᴺ¹` (turn 1), `a₁², a₂², ..., aᴺ²` (turn 2), ..., `a₁ᴺ, a₂ᴺ, ..., aᴺᴺ` (turn N)

- Observation sequence: `o₁, o₂, ..., oᴺ`

- **Reward Model**:

- Inputs: Action-observation pairs

- Outputs: Reward sequence `r₁¹, r₁², ..., r₁ᴺ` (per agent)

- **Multi-turn Group Computation**:

- Inputs: Trained Models (`r₁¹, r₂¹, ..., rᴺ¹`) and Frozen Models (`r₁ᴺ, r₂ᴺ, ..., rᴺᴺ`)

- Output: Aggregated group rewards

3. **Legend**:

- Yellow: Trained Models

- Blue: Frozen Models

### Detailed Analysis

- **Policy Model**: Generates action sequences (`aᴺᴺ`) through iterative turns, with each turn's actions (`aᴺᴺ`) influencing subsequent observations (`oᴺ`).

- **Reference Model**: Acts as a knowledge distillation component, connected to the Policy Model via KL divergence (indicated by the flame icon).

- **Multi-turn Rollouts**: Show temporal progression through action-observation pairs, with subscript indices denoting the agent/episode and superscript indices denoting the turn number.

- **Reward Model**: Processes rollout data to compute per-agent rewards (`rᴺᴺ`), with separate tracks for trained and frozen model outputs.

- **Group Computation**: Combines trained (`rᴺ¹`) and frozen (`rᴺᴺ`) model rewards through an unspecified aggregation mechanism.

### Key Observations

1. **Temporal Structure**: The system processes N discrete turns, with each turn's actions (`aᴺᴺ`) and observations (`oᴺ`) forming a Markovian chain.

2. **Model Diversity**: Maintains separate trained and frozen model tracks, suggesting ensemble learning or robustness testing.

3. **Reward Aggregation**: Final computation combines multiple reward streams, implying a meta-learning or distillation objective.

4. **Knowledge Distillation**: The KL divergence connection between Policy and Reference Models indicates parameter sharing or guidance mechanisms.

### Interpretation

This architecture represents a sophisticated RL framework where:

1. **Policy Development**: The Policy Model evolves through iterative interactions with the environment, guided by a Reference Model.

2. **Reward Engineering**: The Reward Model extracts value signals from raw interactions, with separate evaluation paths for different model versions.

3. **Ensemble Learning**: The final group computation likely combines diverse model perspectives, potentially for uncertainty reduction or performance improvement.

The diagram suggests a research direction focused on improving RL stability through:

- Model diversity (trained vs frozen)

- Temporal credit assignment across multiple turns

- Knowledge distillation between models

- Group-level reward aggregation for collective decision-making
