# Technical Data Extraction: Normalized Latency Comparison

## 1. Image Overview

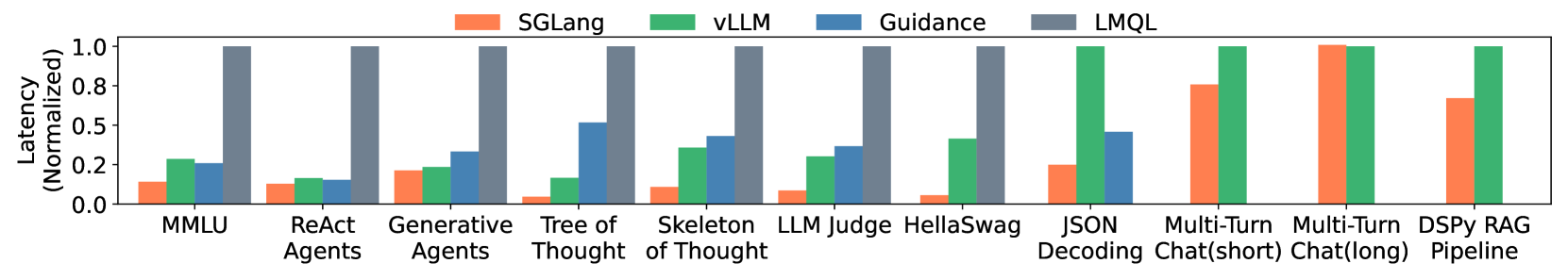

This image is a grouped bar chart comparing the normalized latency of four different Large Language Model (LLM) inference and programming frameworks across eleven distinct benchmarks/tasks.

## 2. Component Isolation

### Header (Legend)

The legend is positioned at the top center of the image.

* **SGLang** (Orange/Coral): `[x: ~30%, y: top]`

* **vLLM** (Green): `[x: ~45%, y: top]`

* **Guidance** (Blue): `[x: ~60%, y: top]`

* **LMQL** (Grey): `[x: ~75%, y: top]`

### Main Chart Area

* **Y-Axis Title:** Latency (Normalized)

* **Y-Axis Markers:** 0.0, 0.2, 0.5, 0.8, 1.0

* **X-Axis Categories (Benchmarks):**

1. MMLU

2. ReAct Agents

3. Generative Agents

4. Tree of Thought

5. Skeleton of Thought

6. LLM Judge

7. HellaSwag

8. JSON Decoding

9. Multi-Turn Chat(short)

10. Multi-Turn Chat(long)

11. DSPy RAG Pipeline

## 3. Data Extraction and Trend Analysis

The chart uses per-task normalized values: for each task, the slowest framework with a reported result sits at 1.0. Lower bars indicate lower latency, that is, better performance.
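The normalization described above can be sketched as follows. This is a minimal illustration that assumes the chart divides each raw latency by the slowest framework's latency for the same task; the function name `normalize_latencies` and the raw values are hypothetical and are not taken from the chart.

```python
def normalize_latencies(raw_latencies: dict[str, float]) -> dict[str, float]:
    """Scale latencies for one task so the slowest framework maps to 1.0."""
    if not raw_latencies:
        raise ValueError("need at least one measurement")
    slowest = max(raw_latencies.values())
    if slowest <= 0:
        raise ValueError("latencies must be positive")
    return {name: value / slowest for name, value in raw_latencies.items()}


# Hypothetical raw latencies in seconds for a single task (illustrative only).
example = normalize_latencies({"framework_a": 2.0, "framework_b": 8.0})
print(example)  # {'framework_a': 0.25, 'framework_b': 1.0}
```

Because normalization is done per task, bar heights are comparable within a benchmark but not across benchmarks.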

### Trend Verification by Series

* **SGLang (Orange):** Lowest latency in ten of the eleven benchmarks. The exception is "Multi-Turn Chat(long)", where it is marginally slower than vLLM (1.0 vs. ~0.98). Its largest leads are in "Tree of Thought" and "HellaSwag" (both ~0.05).

* **vLLM (Green):** Higher latency than SGLang in every benchmark except "Multi-Turn Chat(long)". It is slightly above Guidance in "MMLU" and "ReAct Agents" and below it in the next four benchmarks. It is the baseline (1.0) for "JSON Decoding", "Multi-Turn Chat(short)", and "DSPy RAG Pipeline".

* **Guidance (Blue):** Mid-range latency where present. It is absent from "HellaSwag" and from the final three benchmarks.

* **LMQL (Grey):** Consistently the highest latency (1.0) for the first seven benchmarks, after which it is absent from the data.

### Reconstructed Data Table (Approximate Values)

| Benchmark | SGLang (Orange) | vLLM (Green) | Guidance (Blue) | LMQL (Grey) |
| :--- | :---: | :---: | :---: | :---: |
| **MMLU** | ~0.15 | ~0.28 | ~0.25 | 1.0 |
| **ReAct Agents** | ~0.12 | ~0.18 | ~0.15 | 1.0 |
| **Generative Agents** | ~0.20 | ~0.22 | ~0.35 | 1.0 |
| **Tree of Thought** | ~0.05 | ~0.18 | ~0.52 | 1.0 |
| **Skeleton of Thought** | ~0.10 | ~0.35 | ~0.42 | 1.0 |
| **LLM Judge** | ~0.08 | ~0.30 | ~0.38 | 1.0 |
| **HellaSwag** | ~0.05 | ~0.42 | N/A | 1.0 |
| **JSON Decoding** | ~0.25 | 1.0 | ~0.45 | N/A |
| **Multi-Turn Chat(short)** | ~0.80 | 1.0 | N/A | N/A |
| **Multi-Turn Chat(long)** | 1.0 | ~0.98 | N/A | N/A |
| **DSPy RAG Pipeline** | ~0.70 | 1.0 | N/A | N/A |
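For programmatic checks, the table can be encoded as a small data structure. The sketch below uses the approximate values from the table, with `None` standing in for N/A; the names `LATENCY`, `baseline_framework`, and `missing_frameworks` are illustrative choices, not part of any framework's API.

```python
from typing import Optional

FRAMEWORKS = ("SGLang", "vLLM", "Guidance", "LMQL")

# Approximate normalized latencies read off the chart; None marks a missing bar.
LATENCY: dict[str, tuple[Optional[float], ...]] = {
    "MMLU": (0.15, 0.28, 0.25, 1.0),
    "ReAct Agents": (0.12, 0.18, 0.15, 1.0),
    "Generative Agents": (0.20, 0.22, 0.35, 1.0),
    "Tree of Thought": (0.05, 0.18, 0.52, 1.0),
    "Skeleton of Thought": (0.10, 0.35, 0.42, 1.0),
    "LLM Judge": (0.08, 0.30, 0.38, 1.0),
    "HellaSwag": (0.05, 0.42, None, 1.0),
    "JSON Decoding": (0.25, 1.0, 0.45, None),
    "Multi-Turn Chat(short)": (0.80, 1.0, None, None),
    "Multi-Turn Chat(long)": (1.0, 0.98, None, None),
    "DSPy RAG Pipeline": (0.70, 1.0, None, None),
}


def baseline_framework(benchmark: str) -> str:
    """Return the framework with the highest (slowest) value for a benchmark."""
    present = [
        (value, name)
        for value, name in zip(LATENCY[benchmark], FRAMEWORKS)
        if value is not None
    ]
    return max(present, key=lambda pair: pair[0])[1]


def missing_frameworks(benchmark: str) -> list[str]:
    """Return the frameworks with no bar for a benchmark."""
    return [
        name
        for value, name in zip(LATENCY[benchmark], FRAMEWORKS)
        if value is None
    ]


for benchmark in LATENCY:
    print(benchmark, baseline_framework(benchmark), missing_frameworks(benchmark))
```

Encoding missing bars as `None` rather than `0.0` keeps them out of any minimum or maximum computation.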

## 4. Key Observations

* **Framework Availability:** LMQL has no data points for the last four benchmarks (JSON Decoding through DSPy RAG Pipeline). Guidance has none for HellaSwag or for the last three benchmarks, but it does have a value for JSON Decoding.

* **SGLang Performance:** In the first seven tasks, SGLang's latency is roughly 5x to 20x lower than that of the baseline (LMQL), going by the approximate values in the reconstructed table.

* **Scaling:** In "Multi-Turn Chat(long)", SGLang and vLLM perform almost identically, with SGLang slightly higher, whereas in "DSPy RAG Pipeline", SGLang regains a lead over vLLM.
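The speedup figures follow from dividing the baseline's normalized latency by SGLang's. The sketch below works this out from the approximate table values for the seven LMQL-baselined tasks; the helper name `speedup` is illustrative, and the results inherit the reading error of the chart values.

```python
def speedup(reference: float, candidate: float) -> float:
    """How many times lower the candidate's latency is than the reference's."""
    return reference / candidate


# (SGLang, LMQL) approximate normalized latencies from the reconstructed table.
SGLANG_VS_LMQL = {
    "MMLU": (0.15, 1.0),
    "ReAct Agents": (0.12, 1.0),
    "Generative Agents": (0.20, 1.0),
    "Tree of Thought": (0.05, 1.0),
    "Skeleton of Thought": (0.10, 1.0),
    "LLM Judge": (0.08, 1.0),
    "HellaSwag": (0.05, 1.0),
}

speedups = {
    name: speedup(lmql, sglang) for name, (sglang, lmql) in SGLANG_VS_LMQL.items()
}

for name, factor in speedups.items():
    print(f"{name}: ~{factor:.1f}x")
```

Since the inputs are visual estimates, small absolute errors on very short bars (such as ~0.05) translate into large swings in the computed factor, so the upper end of the range is the least reliable.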