# Technical Analysis of Latency Comparison Chart

## Chart Overview

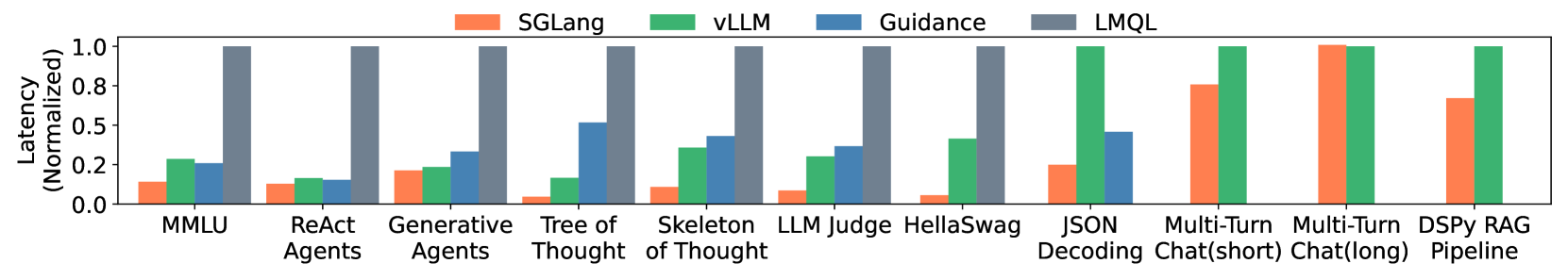

The image presents a **grouped bar chart** comparing normalized latency (0-1 scale) across the 11 AI system tasks listed on the x-axis, using four frameworks: **SGLang**, **vLLM**, **Guidance**, and **LMQL**.

---

## Key Components

### Legend

- **Colors & Labels**:
  - Orange: SGLang
  - Green: vLLM
  - Blue: Guidance
  - Gray: LMQL
- **Placement**: Top center of the chart.

### Axes

- **X-Axis**: Tasks (categorical)
  - Labels: MMLU, ReAct Agents, Generative Agents, Tree of Thought, Skeleton of Thought, LLM Judge, HellaSwag, JSON Decoding, Multi-Turn Chat(short), Multi-Turn Chat(long), DSPy RAG Pipeline
- **Y-Axis**: Latency (normalized), 0.0 to 1.0
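The chart does not state how latency was normalized. A common convention, assumed here, is to divide each framework's latency on a task by the largest latency on that task, so the slowest framework scores 1.0. The sketch below illustrates that assumption only; the function name `normalize_by_max` and the raw timings are hypothetical, not taken from the chart.

```python
from typing import Optional


def normalize_by_max(latencies: dict[str, Optional[float]]) -> dict[str, Optional[float]]:
    """Scale one task's latencies so the slowest framework maps to 1.0.

    Assumption: the chart normalizes per task by the maximum latency.
    Missing measurements (None) are passed through unchanged.
    """
    measured = [v for v in latencies.values() if v is not None]
    if not measured:
        return dict(latencies)  # nothing to normalize against
    peak = max(measured)
    return {name: (v / peak if v is not None else None) for name, v in latencies.items()}


# Hypothetical raw latencies in seconds for a single task (not read from the chart).
raw = {"SGLang": 2.0, "vLLM": 4.0, "Guidance": 5.0, "LMQL": 20.0}
print(normalize_by_max(raw))
```

Under this convention a value of ~1.0 means "slowest measured framework on that task", not an absolute time, so bars are only comparable within a task group.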

---

## Data Extraction

### Task-Specific Latency Values

| Task | SGLang (Orange) | vLLM (Green) | Guidance (Blue) | LMQL (Gray) |
|-------------------------------|-----------------|--------------|-----------------|-------------|
| MMLU | ~0.1 | ~0.2 | ~0.2 | ~1.0 |
| ReAct Agents | ~0.1 | ~0.15 | ~0.15 | ~1.0 |
| Generative Agents | ~0.15 | ~0.2 | ~0.3 | ~1.0 |
| Tree of Thought | ~0.05 | ~0.15 | ~0.1 | ~1.0 |
| Skeleton of Thought | ~0.1 | ~0.25 | ~0.45 | ~1.0 |
| LLM Judge | ~0.05 | ~0.2 | ~0.3 | ~1.0 |
| HellaSwag | ~0.1 | ~0.35 | ~0.4 | ~1.0 |
| JSON Decoding | ~0.2 | ~0.4 | ~0.5 | ~1.0 |
| Multi-Turn Chat(short) | ~0.8 | ~1.0 | ~0.5 | ~1.0 |
| Multi-Turn Chat(long) | ~1.0 | ~1.0 | - | - |
| DSPy RAG Pipeline | ~0.7 | ~1.0 | - | - |
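To cross-check the per-task rankings and per-framework ranges, the table can be encoded as data. This is a minimal sketch: the numbers are the approximate chart readings from the table above, `None` stands for a missing bar, and the helper names `extremes` and `value_range` are hypothetical.

```python
from typing import Optional

FRAMEWORKS = ("SGLang", "vLLM", "Guidance", "LMQL")

# Approximate values read from the chart; None marks a missing bar.
LATENCY: dict[str, tuple[Optional[float], ...]] = {
    "MMLU": (0.1, 0.2, 0.2, 1.0),
    "ReAct Agents": (0.1, 0.15, 0.15, 1.0),
    "Generative Agents": (0.15, 0.2, 0.3, 1.0),
    "Tree of Thought": (0.05, 0.15, 0.1, 1.0),
    "Skeleton of Thought": (0.1, 0.25, 0.45, 1.0),
    "LLM Judge": (0.05, 0.2, 0.3, 1.0),
    "HellaSwag": (0.1, 0.35, 0.4, 1.0),
    "JSON Decoding": (0.2, 0.4, 0.5, 1.0),
    "Multi-Turn Chat(short)": (0.8, 1.0, 0.5, 1.0),
    "Multi-Turn Chat(long)": (1.0, 1.0, None, None),
    "DSPy RAG Pipeline": (0.7, 1.0, None, None),
}


def extremes(values: tuple[Optional[float], ...]) -> tuple[list[str], list[str]]:
    """Return (fastest, slowest) framework names for one task; ties keep every name."""
    measured = {name: v for name, v in zip(FRAMEWORKS, values) if v is not None}
    low, high = min(measured.values()), max(measured.values())
    fastest = [name for name, v in measured.items() if v == low]
    slowest = [name for name, v in measured.items() if v == high]
    return fastest, slowest


def value_range(framework: str) -> tuple[float, float]:
    """Min and max normalized latency of one framework over the tasks it was measured on."""
    i = FRAMEWORKS.index(framework)
    measured = [row[i] for row in LATENCY.values() if row[i] is not None]
    return min(measured), max(measured)


for task, row in LATENCY.items():
    fastest, slowest = extremes(row)
    print(f"{task}: fastest={fastest}, slowest={slowest}")

for name in FRAMEWORKS:
    print(name, value_range(name))
```

Ties are reported as lists rather than broken arbitrarily, because several readings (e.g., Multi-Turn Chat(long)) are indistinguishable at the precision the chart allows.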

---

## Trend Verification

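1. **LMQL (Gray)**:
   - Highest latency (~1.0) in every task where it has data (9 of 11), tied with vLLM on Multi-Turn Chat(short).
   - No bars for Multi-Turn Chat(long) or DSPy RAG Pipeline.
2. **SGLang (Orange)**:
   - Lowest latency in 9 of 11 tasks (e.g., Tree of Thought: ~0.05).
   - Exceptions: Multi-Turn Chat(short), where Guidance is lower (~0.5 vs. ~0.8), and Multi-Turn Chat(long), where it ties vLLM at ~1.0.
3. **vLLM (Green)**:
   - Intermediate latency in most tasks, ranging from ~0.15 to ~1.0.
   - Reaches ~1.0 in Multi-Turn Chat(short), Multi-Turn Chat(long), and DSPy RAG Pipeline; it is the slowest measured framework on DSPy RAG Pipeline.
4. **Guidance (Blue)**:
   - No bars for Multi-Turn Chat(long) or DSPy RAG Pipeline.
   - Peaks at ~0.5 in JSON Decoding and Multi-Turn Chat(short).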

---

## Spatial Grounding

- **Legend**: Top center of the chart.

- **Bars**: One group per x-axis task, with one bar per framework, colored as in the legend.
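In a grouped bar chart, each task occupies one integer x-axis slot and the framework bars are offset symmetrically around it. The sketch below shows that offset arithmetic, assuming four equal-width bars per group; the function name `bar_positions` and the width of 0.2 are illustrative choices, not taken from the chart's source. Positions computed this way are what one would pass as the `x` argument to a plotting call such as matplotlib's `Axes.bar`.

```python
def bar_positions(n_groups: int, n_series: int, width: float) -> list[list[float]]:
    """x-centres of each series' bars, grouped around integer category positions.

    Series i in group g sits at g + (i - (n_series - 1) / 2) * width, so each
    group of bars is centred on its category tick.
    """
    offsets = [(i - (n_series - 1) / 2) * width for i in range(n_series)]
    return [[g + off for g in range(n_groups)] for off in offsets]


# 11 tasks, 4 frameworks in legend order, bar width 0.2 (assumed).
positions = bar_positions(n_groups=11, n_series=4, width=0.2)
for name, xs in zip(("SGLang", "vLLM", "Guidance", "LMQL"), positions):
    print(name, [round(x, 2) for x in xs[:3]])
```

Centring the offsets keeps each task label directly under the middle of its bar group regardless of how many frameworks are plotted.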

---

## Critical Observations

- **LMQL Latency**: Slowest framework in all 9 tasks where it was measured (tied with vLLM on Multi-Turn Chat(short)).

- **SGLang Efficiency**: Lowest latency in 9 of 11 tasks (e.g., Tree of Thought: ~0.05).

- **Missing Data**: Guidance and LMQL both lack values for Multi-Turn Chat(long) and DSPy RAG Pipeline.

---

## Conclusion

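The chart shows LMQL as the highest-latency framework wherever it was measured and SGLang as the lowest-latency framework in 9 of 11 tasks. vLLM and Guidance generally fall in between; Guidance beats SGLang only on Multi-Turn Chat(short). Neither Guidance nor LMQL has data for Multi-Turn Chat(long) or DSPy RAG Pipeline.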