TECHNICAL ASSET FINGERPRINT

c13f0a79149699abbd8e07c1

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

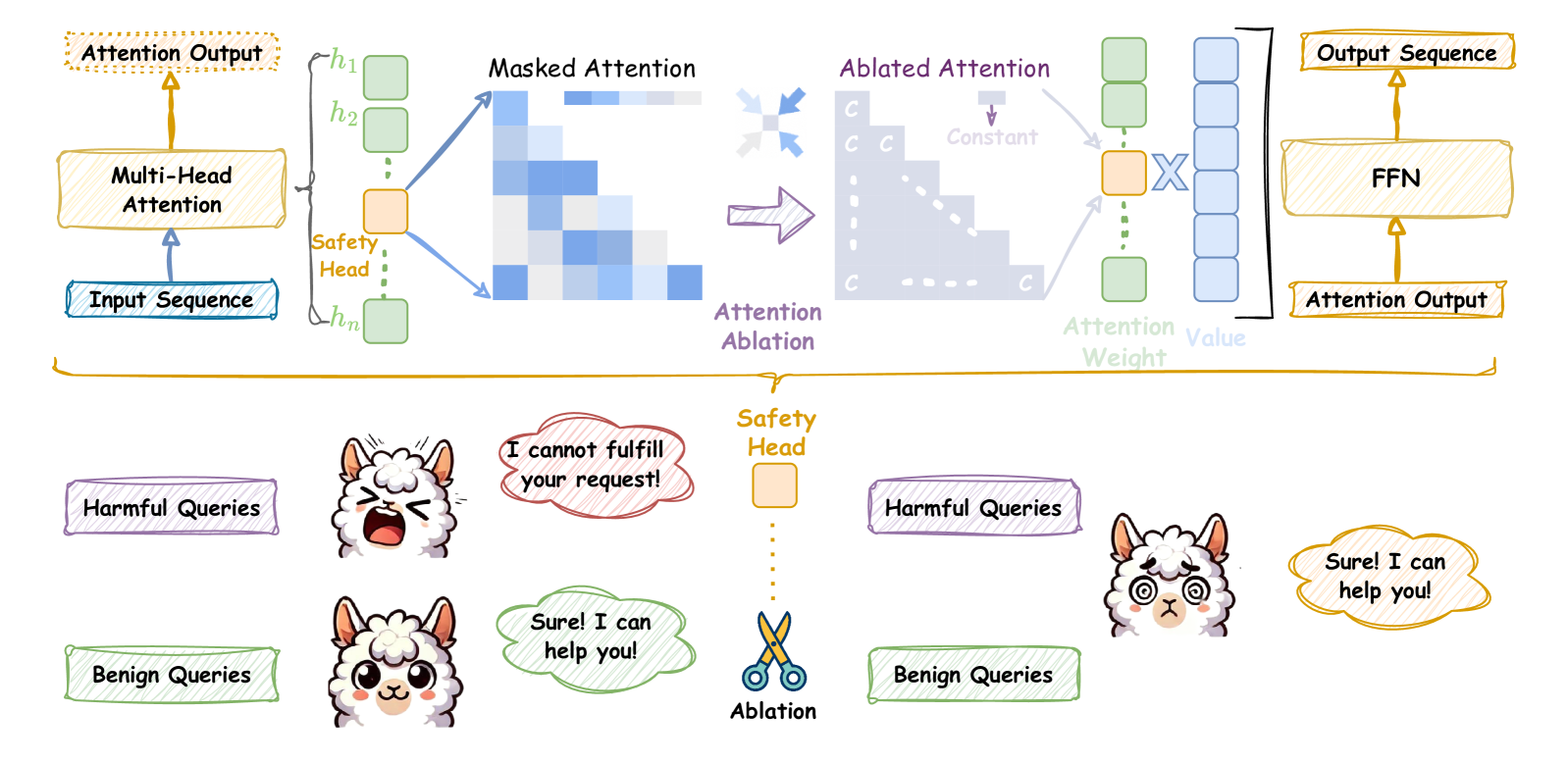

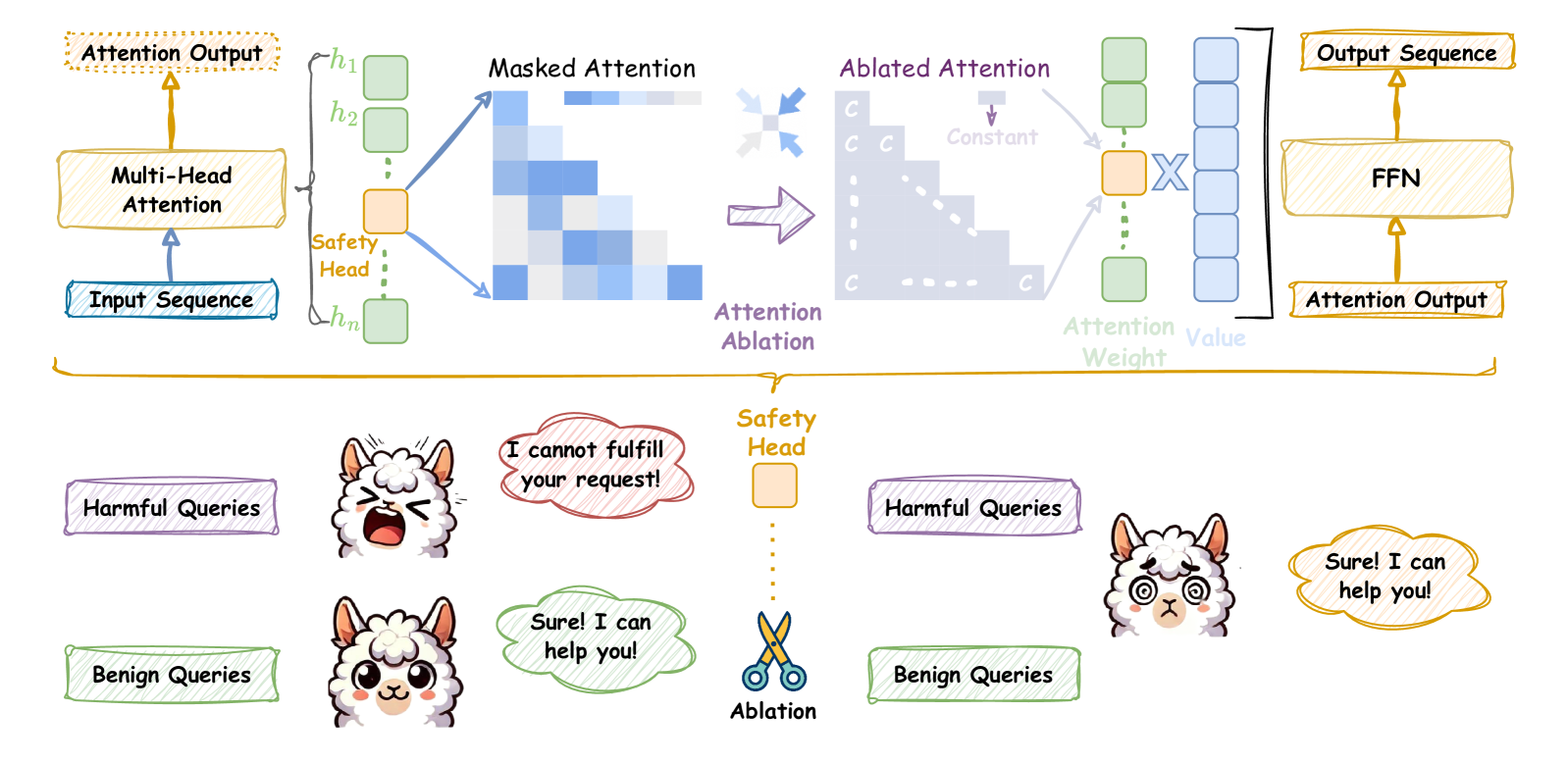

## Diagram: Safety Head Ablation

### Overview

The image illustrates the concept of "Safety Head Ablation" in a neural network, likely within a transformer architecture. It shows how ablating (removing) a specific "Safety Head" affects the model's behavior, particularly in handling harmful versus benign queries. The diagram contrasts the model's response with and without the Safety Head.

### Components/Axes

* **Top Section:** Depicts the neural network architecture.

    * **Input Sequence:** The initial input to the model (bottom-left).

    * **Multi-Head Attention:** A standard attention mechanism, processing the input sequence.

    * **Attention Output:** The output of the multi-head attention layer.

    * **h1, h2, ..., hn:** Represent individual attention heads, with the "Safety Head" highlighted in orange.

    * **Masked Attention:** Visualizes the attention pattern with masking.

    * **Ablated Attention:** Shows the attention pattern after ablating the Safety Head, where the attention weights are set to a constant value 'C'.

    * **Attention Ablation:** Label indicating the transition from Masked Attention to Ablated Attention.

    * **Attention Weight:** Indicates the weights assigned to the values.

    * **Value:** Represents the values being attended to.

    * **Output Sequence:** The final output of the attention mechanism.

    * **FFN:** Feed-Forward Network, processing the output sequence.

    * **Attention Output:** The final output of the FFN.

* **Bottom Section:** Illustrates the model's responses to harmful and benign queries with and without the Safety Head.

    * **Harmful Queries:** Labeled input representing potentially harmful prompts.

    * **Benign Queries:** Labeled input representing safe prompts.

    * **Safety Head:** Represented as an orange box, with dotted lines indicating its ablation.

    * **Ablation:** Represented by a pair of scissors, symbolizing the removal of the Safety Head.

    * **Speech Bubbles:** Contain the model's responses to the queries.

### Detailed Analysis

* **Neural Network Architecture (Top Section):**

    * The input sequence flows into a multi-head attention mechanism.

    * The multi-head attention consists of multiple attention heads (h1 to hn), one of which is designated as the "Safety Head".

    * The "Safety Head" is connected to a "Masked Attention" visualization, showing a triangular attention pattern.

    * "Attention Ablation" is performed, resulting in "Ablated Attention" where the attention weights from the Safety Head are replaced with a constant value 'C'.

    * The ablated attention is then used to compute the "Attention Weight" and "Value", leading to the "Output Sequence" and final "Attention Output" via an FFN.

* **Query Response (Bottom Section):**

    * **Before Ablation:**

        * A "Harmful Query" results in the model saying "I cannot fulfill your request!".

        * A "Benign Query" results in the model saying "Sure! I can help you!".

    * **After Ablation:**

        * A "Harmful Query" now results in the model saying "Sure! I can help you!".

        * A "Benign Query" still results in the model saying "Sure! I can help you!".
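
The before/after comparison above can be tabulated in a few lines. This is only an illustrative sketch: the four response strings are the ones quoted in the description, while the prefix-based refusal check (`REFUSAL_PREFIXES`, `is_refusal`) is a hypothetical heuristic introduced here, not something shown in the diagram.

```python
# Responses as quoted in the description of the bottom section.
RESPONSES = {
    ("harmful", "before"): "I cannot fulfill your request!",
    ("benign", "before"): "Sure! I can help you!",
    ("harmful", "after"): "Sure! I can help you!",
    ("benign", "after"): "Sure! I can help you!",
}

# Hypothetical heuristic: treat a response as a refusal if it starts
# with one of these prefixes. A real evaluation would need something
# more robust than string matching.
REFUSAL_PREFIXES = ("I cannot", "I can't", "Sorry")


def is_refusal(response: str) -> bool:
    """Return True if the response looks like a refusal."""
    return response.strip().startswith(REFUSAL_PREFIXES)


def refused_kinds(stage: str) -> list[str]:
    """List the query kinds that are refused at a given stage."""
    return sorted(
        kind
        for (kind, s), response in RESPONSES.items()
        if s == stage and is_refusal(response)
    )


print("refused before ablation:", refused_kinds("before"))
print("refused after ablation:", refused_kinds("after"))
```

Under this heuristic, only the harmful query is refused before ablation and nothing is refused afterwards, which matches the contrast the speech bubbles draw.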

### Key Observations

* The Safety Head appears to be responsible for detecting and blocking harmful queries.

* Ablating the Safety Head causes the model to respond positively to both harmful and benign queries.

* The "Masked Attention" visualization shows a pattern where the model attends to previous tokens in the sequence.

* The "Ablated Attention" visualization shows a uniform attention pattern after ablation.
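
The two attention patterns described above can be sketched with NumPy. This is a minimal illustration under stated assumptions, not the method from the underlying paper: the function names are hypothetical, the value of the constant is arbitrary, and it is assumed that positions hidden by the causal mask stay at zero after ablation.

```python
import numpy as np


def causal_attention_weights(q, k):
    """Softmax attention weights with a causal (lower-triangular) mask."""
    seq_len, d = q.shape
    scores = q @ k.T / np.sqrt(d)
    mask = np.tril(np.ones((seq_len, seq_len), dtype=bool))
    # Future positions get -inf so they receive zero weight after softmax.
    scores = np.where(mask, scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)
    exp = np.exp(scores)
    return exp / exp.sum(axis=-1, keepdims=True)


def ablate_to_constant(weights, c):
    """Replace every causally visible weight with the constant c.

    Assumption: masked (future) positions remain zero.
    """
    mask = np.tril(np.ones_like(weights, dtype=bool))
    return np.where(mask, c, 0.0)


rng = np.random.default_rng(0)
q = rng.normal(size=(4, 8))  # 4 tokens, head dimension 8
k = rng.normal(size=(4, 8))

masked = causal_attention_weights(q, k)      # triangular, input-dependent
ablated = ablate_to_constant(masked, c=0.25)  # triangular, uniform

print(np.round(masked, 2))
print(ablated)
```

The masked matrix varies with the queries and keys, while the ablated matrix is the same for any input, which is what makes the head uninformative.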

### Interpretation

The diagram demonstrates the importance of the Safety Head in preventing the model from responding to harmful queries. By ablating the Safety Head, the model loses its ability to distinguish between harmful and benign inputs, leading to potentially undesirable behavior. This highlights the role of specific attention heads in controlling the model's safety and ethical considerations. The ablation study suggests that targeted interventions, such as removing specific attention heads, can significantly impact the model's overall behavior and safety profile.
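
One generic way to probe which heads matter is to ablate each head in turn and measure how much the layer output moves. The sketch below does this on a toy, randomly initialised attention layer; every name (`multi_head_attention`, `ablation_effects`), every shape, and the choice of `c = 0.0` are assumptions made for illustration, and the output-norm metric stands in for whatever safety metric a real study would use.

```python
import numpy as np


def multi_head_attention(x, wq, wk, wv, wo, ablate_head=None, c=0.0):
    """Toy causal multi-head attention; optionally ablate one head.

    Ablation replaces that head's visible attention weights with c.
    """
    n_heads = wq.shape[0]
    seq_len = x.shape[0]
    mask = np.tril(np.ones((seq_len, seq_len), dtype=bool))
    head_outputs = []
    for h in range(n_heads):
        q, k, v = x @ wq[h], x @ wk[h], x @ wv[h]
        scores = np.where(mask, q @ k.T / np.sqrt(q.shape[-1]), -np.inf)
        scores = scores - scores.max(axis=-1, keepdims=True)
        weights = np.exp(scores)
        weights = weights / weights.sum(axis=-1, keepdims=True)
        if h == ablate_head:
            weights = np.where(mask, c, 0.0)
        head_outputs.append(weights @ v)
    return np.concatenate(head_outputs, axis=-1) @ wo


def ablation_effects(x, wq, wk, wv, wo, c=0.0):
    """L2 change in the layer output when each head is ablated alone."""
    base = multi_head_attention(x, wq, wk, wv, wo)
    effects = []
    for h in range(wq.shape[0]):
        out = multi_head_attention(x, wq, wk, wv, wo, ablate_head=h, c=c)
        effects.append(float(np.linalg.norm(out - base)))
    return effects


rng = np.random.default_rng(0)
seq_len, d_model, n_heads, d_head = 5, 16, 4, 4
x = rng.normal(size=(seq_len, d_model))
wq = rng.normal(size=(n_heads, d_model, d_head))
wk = rng.normal(size=(n_heads, d_model, d_head))
wv = rng.normal(size=(n_heads, d_model, d_head))
wo = rng.normal(size=(n_heads * d_head, d_model))

effects = ablation_effects(x, wq, wk, wv, wo)
print("most influential head (toy model):", int(np.argmax(effects)))
```

In a trained model, the head whose ablation most changes responses to harmful prompts would be the candidate for what the diagram calls the Safety Head.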

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Attention Ablation for Safety

### Overview

This diagram illustrates a process of attention ablation applied to a multi-head attention mechanism, specifically focusing on a "Safety Head" to mitigate harmful queries. The diagram shows the flow of data from an input sequence through multi-head attention, masked attention, ablated attention, and finally to an output sequence. Below the flow diagram is a visual representation of how the ablation affects the model's response to benign and harmful queries.

### Components/Axes

The diagram consists of several key components:

* **Input Sequence:** The initial data fed into the system.

* **Multi-Head Attention:** A processing block that generates attention outputs (h1 to hn).

* **Safety Head:** A specific attention head highlighted in green.

* **Masked Attention:** A visual representation of attention weights, shown as a heatmap.

* **Attention Ablation:** A process that removes or modifies attention weights. Represented by dashed arrows and "C" symbols.

* **Ablated Attention:** The attention weights after ablation.

* **Attention Weight:** A visual representation of the attention weight.

* **Output Sequence:** The final output of the system.

* **FFN:** Feed Forward Network.

* **Harmful Queries:** Input queries that the system should avoid fulfilling.

* **Benign Queries:** Input queries that the system should fulfill.

* **Scissors:** Representing the ablation process.

### Detailed Analysis

The diagram shows a data flow from left to right.

1. **Input Sequence** enters the **Multi-Head Attention** block. This block produces multiple attention outputs labeled h1 to hn. One of these heads is specifically designated as the **Safety Head** and is highlighted in green.

2. The attention weights are visualized as a **Masked Attention** heatmap, with varying shades of blue indicating different weight values.

3. **Attention Ablation** is applied, represented by dashed arrows. The heatmap is modified with "C" symbols, indicating constant values after ablation.

4. The resulting **Ablated Attention** is then multiplied by an **Attention Weight** (represented by a multiplication symbol).

5. The output of this multiplication is fed into a **FFN** (Feed Forward Network) to produce the **Output Sequence**.

6. Below the data flow, two scenarios are depicted:

    * **Harmful Queries:** A llama character receives a harmful query and responds with "I cannot fulfill your request!" in a speech bubble.

    * **Benign Queries:** A llama character receives a benign query and responds with "Sure! I can help you!" in a speech bubble.

7. The **Ablation** process (represented by scissors) is shown to remove the Safety Head's influence, leading to the different responses.

### Key Observations

* The Safety Head is specifically targeted by the ablation process.

* Ablation appears to modify the attention weights, potentially reducing the influence of the Safety Head.

* The ablation process changes the model's response to harmful queries, preventing it from fulfilling them.

* The model continues to respond to benign queries without issue.

### Interpretation

This diagram demonstrates a technique for improving the safety of a language model by ablating the attention weights associated with a dedicated "Safety Head." The Safety Head likely learns to identify and suppress harmful patterns in the input sequence. By removing its influence through ablation, the model is prevented from responding to harmful queries. The visual representation with the llama characters effectively illustrates the practical impact of this technique – the model refuses to fulfill harmful requests while continuing to assist with benign ones. The use of a heatmap to visualize attention weights provides insight into the model's internal workings and how ablation affects its decision-making process. The diagram suggests that attention ablation is a viable method for mitigating risks associated with language models and ensuring responsible AI behavior.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Attention Ablation in a Safety-Mechanism-Enhanced Language Model

### Overview

This image is a technical diagram illustrating the architecture and function of a "Safety Head" within a transformer-based language model's attention mechanism. It demonstrates how this specialized head processes input sequences to filter harmful content and shows the consequence of removing ("ablating") this safety feature. The diagram is divided into two main sections: an upper technical flowchart of the model's architecture and a lower conceptual illustration of its behavioral impact.

### Components/Axes

The diagram contains no traditional chart axes. It is a flowchart with labeled components, directional arrows, and illustrative metaphors.

**Upper Technical Flowchart Components (Left to Right):**

1. **Input Sequence**: Blue-bordered box. The starting point of the data flow.

2. **Multi-Head Attention**: Yellow, hand-drawn-style box. Receives the Input Sequence.

3. **Attention Heads**: A vertical stack of green squares labeled `h1`, `h2`, ..., `hn`. An orange square labeled **Safety Head** is positioned within this stack.

4. **Masked Attention**: A blue-toned heatmap/matrix. Arrows point from the attention heads (including the Safety Head) to this matrix.

5. **Attention Ablation**: A purple arrow points from the Masked Attention to the next stage, labeled with this text.

6. **Ablated Attention**: A greyed-out version of the attention matrix. Key features:

    * The diagonal is replaced with a constant value `c`.

    * A label "Constant" with a downward arrow points to this `c`.

    * A large "X" is drawn over the connection from the Safety Head's position.

7. **Attention Weight & Value**: Green squares (Attention Weight) and blue squares (Value) are shown being multiplied (indicated by a large "X").

8. **FFN**: Yellow, hand-drawn-style box (Feed-Forward Network).

9. **Output Sequence**: Yellow-bordered box. The final output.

10. **Attention Output**: Two instances, one at the very top (output of Multi-Head Attention) and one feeding into the FFN.

**Lower Conceptual Illustration Components:**

1. **Query Types**: Two labeled boxes: "Harmful Queries" (purple border) and "Benign Queries" (green border).

2. **Model States**: Two cartoon llama characters represent the model.

    * **Left Llama (With Safety Head)**: Reacts differently to query types.

        * To Harmful Queries: Speech bubble says, "I cannot fulfill your request!" (red, agitated bubble).

        * To Benign Queries: Speech bubble says, "Sure! I can help you!" (green, calm bubble).

    * **Right Llama (After Ablation)**: Has swirly, "hypnotized" eyes.

        * To Harmful Queries: Speech bubble says, "Sure! I can help you!" (yellow, compliant bubble).

        * To Benign Queries: No specific response shown, but implied to still be compliant.

3. **Safety Head & Ablation Metaphor**:

    * An orange square labeled **Safety Head** is shown with a dotted line leading to a pair of scissors.

    * The scissors are labeled **Ablation**, symbolizing the cutting/removal of the Safety Head's influence.

### Detailed Analysis

**Technical Flow (Upper Section):**

The process begins with an **Input Sequence** entering a **Multi-Head Attention** layer. This layer consists of multiple parallel attention heads (`h1` to `hn`), one of which is designated the **Safety Head** (orange). These heads collectively produce a **Masked Attention** pattern, visualized as a heatmap where darker blue likely indicates higher attention weights.

The core operation is **Attention Ablation**. This process modifies the attention matrix to create the **Ablated Attention** matrix. The modification involves:

1. **Isolating the Safety Head's contribution**: The connection from the Safety Head's position is severed (shown by the large "X").

2. **Imposing a constant**: The diagonal of the attention matrix is replaced with a constant value `c`, effectively neutralizing the standard autoregressive masking and likely the Safety Head's filtering effect.

The resulting (ablated) **Attention Weight** matrix is then multiplied with the **Value** vectors. This product is passed through the **FFN** (Feed-Forward Network) to generate the final **Output Sequence**.
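
To see why a constant attention pattern neutralizes a head, the weight-times-value step can be written out. The notation below ($q_i$, $k_j$, $v_j$, $d$, $A_{ij}$) is introduced here for illustration and does not appear in the diagram; the derivation also assumes that every causally visible weight of the ablated head is set to `c`, which is one reading of the figure.

$$
o_i = \sum_{j \le i} A_{ij}\, v_j,
\qquad
A_{ij} = \frac{\exp\!\left(q_i^\top k_j / \sqrt{d}\right)}{\sum_{j' \le i} \exp\!\left(q_i^\top k_{j'} / \sqrt{d}\right)}
$$

$$
\tilde{A}_{ij} = c \ \ (j \le i)
\quad\Longrightarrow\quad
\tilde{o}_i = c \sum_{j \le i} v_j
$$

The ablated output $\tilde{o}_i$ no longer depends on $q_i$ or $k_j$, so under this assumption the head can only pass along an unweighted sum of values and cannot select tokens based on their content.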

**Behavioral Impact (Lower Section):**

This section provides a metaphorical interpretation of the technical process.

* **With Safety Head Intact (Left)**: The model (llama) correctly distinguishes between query types. It refuses **Harmful Queries** ("I cannot fulfill your request!") and complies with **Benign Queries** ("Sure! I can help you!").

* **After Safety Head Ablation (Right)**: The model's safety mechanism is disabled (symbolized by the scissors). It now responds compliantly ("Sure! I can help you!") to **Harmful Queries**, indicating a failure in its safety alignment. The swirly eyes suggest the model is now "compromised" or operating without its intended safeguards.

### Key Observations

1. **Spatial Grounding of Safety Head**: The Safety Head is visually embedded within the standard multi-head attention stack (`h1...hn`), indicating it is an integral but specialized component of the attention mechanism.

2. **Ablation Target**: The ablation specifically targets the pathway influenced by the Safety Head and alters the fundamental attention masking (diagonal constant `c`), suggesting the safety mechanism is deeply tied to how the model attends to and processes sequential information.

3. **Color-Coded Semantics**: Colors are used consistently: Orange for the Safety Head, purple for harmful elements, green for benign/safe elements, and yellow for core model components (Multi-Head Attention, FFN, Output).

4. **Dramatic Behavioral Shift**: The most striking observation is the complete reversal in the model's response to harmful queries post-ablation, moving from refusal to eager compliance.

### Interpretation

This diagram argues that a dedicated "Safety Head" within a language model's architecture is crucial for aligning the model's behavior with safety guidelines. It provides a mechanistic explanation for how such a head might work—by influencing the attention pattern to filter or suppress harmful content during processing.

The **ablation study** illustrated here serves as a critical experiment. By surgically removing the Safety Head's influence (the "X") and disrupting the attention mechanism (constant `c`), the researchers demonstrate a direct causal link: disabling this component leads to a catastrophic failure in safety alignment. The model becomes "jailbroken," responding helpfully to requests it was designed to refuse.

The underlying point is that the Safety Head doesn't just add a rule; it fundamentally shapes the model's *perception* (attention) of the input. Ablating it doesn't just remove a filter; it changes how the model "sees" the query, making a harmful request appear indistinguishable from a benign one. This underscores that safety in advanced AI may require architectural interventions that are integral to the core processing loop, not just superficial classifiers added at the end. The diagram is a warning: safety mechanisms, if they can be ablated, represent a critical point of failure.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Safety Mechanism in Transformer Model

### Overview

The diagram illustrates a safety mechanism in a transformer-based language model, focusing on how attention heads are manipulated to filter harmful queries. It combines architectural components (attention heads, FFN) with visualizations of attention weights and example query handling.

### Components/Axes

#### Top Diagram (Architecture Flow)

1. **Input Sequence** → **Multi-Head Attention** → **Attention Output**

2. **Safety Head** (highlighted in orange):

    - Applies **Masked Attention** (visualized as a heatmap with triangular pattern)

    - Generates **Ablated Attention** (modified attention weights)

3. **FFN (Feed-Forward Network)** processes Ablated Attention → **Output Sequence**

4. **Attention Ablation** (purple arrow) connects Masked Attention to Ablated Attention

#### Bottom Diagram (Query Handling)

- **Harmful Queries** (purple box):

    - Response shown: "I cannot fulfill your request!"

    - Outcome: Blocked (red cloud)

- **Benign Queries** (green box):

    - Response shown: "Sure! I can help you!"

    - Outcome: Allowed (green cloud)

- **Safety Head** (central orange box with scissors icon):

    - **Ablation** (cutting action) applied to harmful queries

### Detailed Analysis

1. **Attention Flow**:

- Input sequence passes through Multi-Head Attention, producing attention weights (heatmap).

- The Safety Head modifies these weights via **Masked Attention**, creating a triangular pattern where later tokens receive reduced attention.

- **Ablated Attention** (modified weights) is fed into the FFN to generate the final output.

2. **Attention Visualization**:

- **Masked Attention Heatmap**: Shows decreasing attention weights toward later tokens (triangular pattern), likely to limit context for harmful queries.

- **Ablated Attention**: Represents the final attention weights after safety modifications.

3. **Query Handling**:

- Harmful queries trigger the Safety Head to **ablate** (cut) attention weights, preventing the model from generating unsafe responses.

- Benign queries bypass ablation, allowing normal attention flow and safe responses.

### Key Observations

- The triangular attention pattern suggests the model prioritizes earlier tokens, potentially to avoid over-reliance on harmful context.

- The Safety Head acts as a gatekeeper, selectively modifying attention to suppress harmful queries.

- The FFN's role is to process the ablated attention, ensuring outputs align with safety constraints.

### Interpretation

This diagram demonstrates a **context-aware safety mechanism** where:

1. The Safety Head inspects attention weights to identify harmful queries.

2. Ablation (cutting attention weights) prevents the model from focusing on unsafe input segments.

3. The FFN generates outputs based on the modified attention, ensuring responses are safe.

The use of llamas with contrasting expressions (angry vs. smiling) visually reinforces the distinction between blocked harmful queries and allowed benign ones. The scissors icon in the Safety Head symbolizes the active modification of attention weights to enforce safety constraints. This architecture highlights how transformer models can be engineered to balance utility and safety through targeted attention manipulation.
