
## Diagram: Attention Ablation for Safety

### Overview

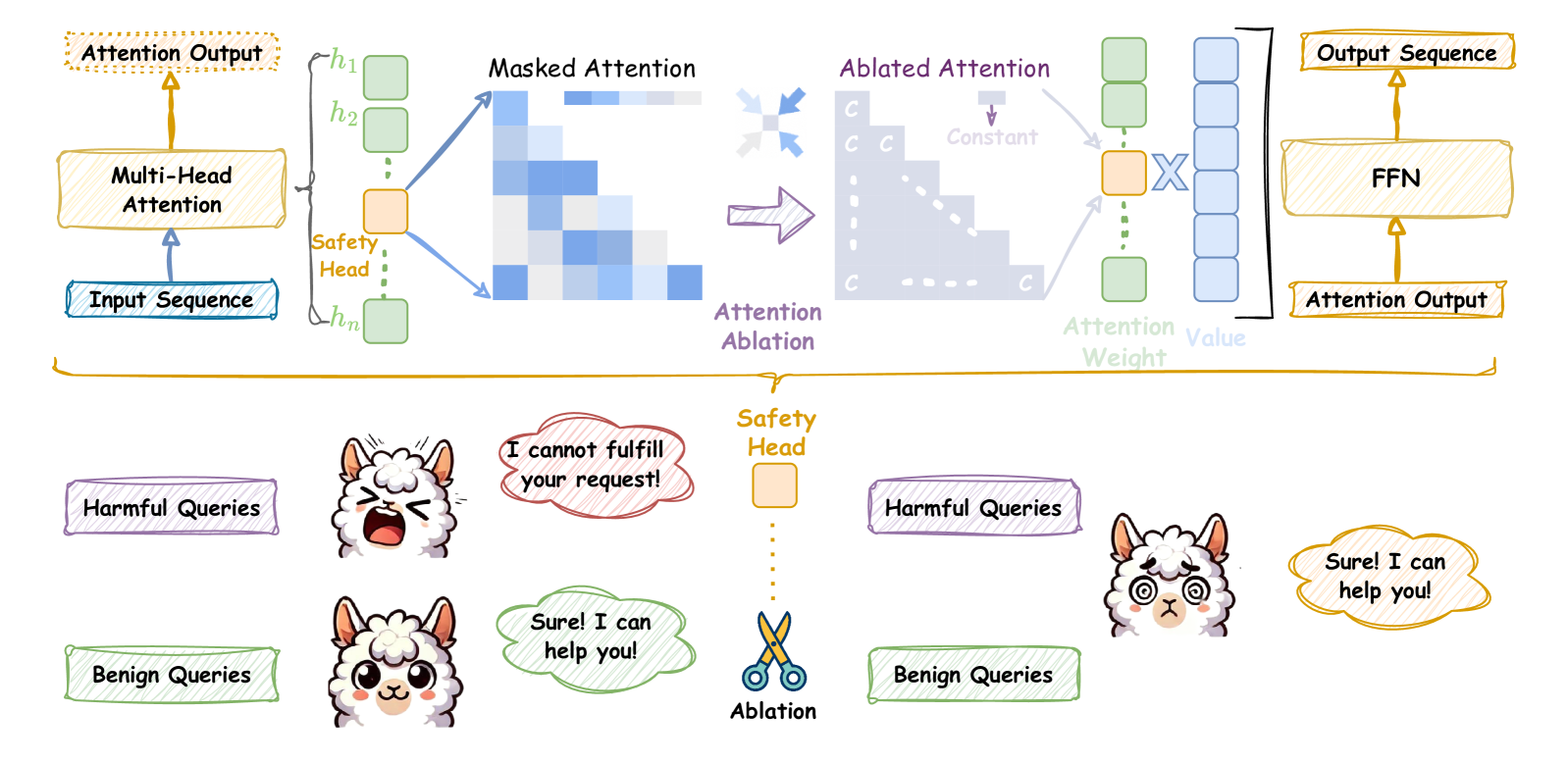

This diagram illustrates attention ablation applied to a multi-head attention mechanism, focusing on a designated "Safety Head" and its role in how the model handles harmful queries. The upper part traces the data flow from an input sequence through multi-head attention, masked attention, and ablated attention to an output sequence. Below the flow, illustrations show how the ablation relates to the model's responses to benign and harmful queries.

### Components

The diagram consists of several key components:

* **Input Sequence:** The initial data fed into the system.

* **Multi-Head Attention:** The block that computes one output per attention head, labeled h1 to hn.

* **Safety Head:** A specific attention head highlighted in green.

* **Masked Attention:** The attention weights after masking, drawn as a heatmap.

* **Attention Ablation:** The step that removes or overrides attention weights, drawn with dashed arrows and "C" symbols.

* **Ablated Attention:** The attention weights after ablation.

* **Attention Weight:** The element the ablated attention is multiplied with, marked by a multiplication symbol.

* **Output Sequence:** The final output of the system.

* **FFN:** The feed-forward network that turns the attention result into the output sequence.

* **Harmful Queries:** Input queries that the system should avoid fulfilling.

* **Benign Queries:** Input queries that the system should fulfill.

* **Scissors:** A symbol for the ablation process.
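
To make the Multi-Head Attention and Masked Attention components concrete, here is a minimal NumPy sketch of a standard multi-head attention layer that returns the per-head outputs h1 to hn together with each head's masked attention weights. It assumes a causal (lower-triangular) mask, random projection weights, and array indices 0 to n-1 standing for heads h1 to hn; none of these details are given by the diagram.

```python
import numpy as np

def softmax(x):
    # Row-wise softmax; subtracting the max keeps exp() from overflowing.
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def multi_head_attention(x, w_q, w_k, w_v, n_heads):
    """Return per-head outputs h_1..h_n and their masked attention weights.

    x: (seq_len, d_model); w_q, w_k, w_v: (d_model, d_model).
    """
    seq_len, d_model = x.shape
    d_head = d_model // n_heads

    def split(m):
        # (seq_len, d_model) -> (n_heads, seq_len, d_head)
        return m.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
    # Causal mask (assumed): query position i may attend only to key positions <= i.
    future = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    weights = softmax(np.where(future, -np.inf, scores))  # (n_heads, seq_len, seq_len)
    heads = weights @ v                                   # (n_heads, seq_len, d_head)
    return heads, weights

rng = np.random.default_rng(0)
d_model, n_heads, seq_len = 16, 4, 5
x = rng.normal(size=(seq_len, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_model)) for _ in range(3))
heads, weights = multi_head_attention(x, w_q, w_k, w_v, n_heads)
print(heads.shape, weights.shape)  # (4, 5, 4) (4, 5, 5)
```

Each slice `weights[i]` is one head's heatmap; with the causal mask, every entry above the diagonal is zero and every row sums to 1.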

### Detailed Analysis

The diagram shows a data flow from left to right.

1. **Input Sequence** enters the **Multi-Head Attention** block. This block produces multiple attention outputs labeled h1 to hn. One of these heads is specifically designated as the **Safety Head** and is highlighted in green.

2. The attention weights are visualized as a **Masked Attention** heatmap, with varying shades of blue indicating different weight values.

3. **Attention Ablation** is applied, shown by dashed arrows. In the ablated heatmap, the weights are replaced by "C" symbols, which appear to denote constant values substituted for the learned weights.

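4. The resulting **Ablated Attention** is then multiplied by an **Attention Weight** element, marked by a multiplication symbol. (In a standard attention layer, the attention weights multiply the value vectors; the diagram's label may refer to that step.)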

5. The product is passed through an **FFN** (feed-forward network) to produce the **Output Sequence**.

6. Below the data flow, two scenarios are depicted:

* **Harmful Queries:** A llama character receives a harmful query and responds with "I cannot fulfill your request!" in a speech bubble.

* **Benign Queries:** A llama character receives a benign query and responds with "Sure! I can help you!" in a speech bubble.

7. The **Ablation** step (represented by scissors) cuts the Safety Head's contribution, and the figure ties this step to the responses shown for the two query types.
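
The flow above can be sketched end to end in NumPy: masked multi-head attention, ablation of one head, multiplication of the weights by the value vectors, and an FFN. This is an illustrative sketch under stated assumptions, not the method behind the diagram. The "C" entries are modeled as uniform attention over the visible positions (one common choice of constant; mean ablation or zeroing the head's output are alternatives), `SAFETY_HEAD = 2` is a hypothetical index, the weights are random, and residual connections and layer norm are left out.

```python
import numpy as np

def softmax(x):
    # Row-wise softmax; subtracting the max keeps exp() from overflowing.
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def causal_mask(seq_len):
    # True above the diagonal, i.e. at future positions a query must not see.
    return np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)

def uniform_attention(seq_len):
    # Constant pattern: query i spreads weight 1/(i+1) evenly over the
    # i+1 positions it may see, discarding whatever the head learned.
    allowed = ~causal_mask(seq_len)
    return allowed / allowed.sum(axis=-1, keepdims=True)

def layer_forward(x, params, n_heads, ablate_head=None):
    """One attention + FFN block; optionally ablate one head's attention weights."""
    w_q, w_k, w_v, w_o, w_1, w_2 = params
    seq_len, d_model = x.shape
    d_head = d_model // n_heads

    def split(m):
        # (seq_len, d_model) -> (n_heads, seq_len, d_head)
        return m.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
    weights = softmax(np.where(causal_mask(seq_len), -np.inf, scores))  # masked attention
    if ablate_head is not None:
        weights[ablate_head] = uniform_attention(seq_len)              # ablated attention
    heads = weights @ v                      # attention weights times value vectors
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    attn_out = concat @ w_o
    # Two-layer ReLU FFN; residual connections and layer norm are omitted for brevity.
    return np.maximum(attn_out @ w_1, 0.0) @ w_2, weights

rng = np.random.default_rng(0)
d_model, n_heads, seq_len, d_ff = 16, 4, 6, 32
SAFETY_HEAD = 2  # hypothetical index of the "Safety Head"
shapes = [(d_model, d_model)] * 4 + [(d_model, d_ff), (d_ff, d_model)]
params = [rng.normal(size=s) / np.sqrt(s[0]) for s in shapes]
x = rng.normal(size=(seq_len, d_model))

out_full, w_full = layer_forward(x, params, n_heads)
out_ablated, w_ablated = layer_forward(x, params, n_heads, ablate_head=SAFETY_HEAD)
```

Only `weights[SAFETY_HEAD]` is overwritten, so the other heads' attention patterns are identical in both runs and any difference between `out_full` and `out_ablated` comes from the ablated head alone.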

### Key Observations

* The Safety Head is specifically targeted by the ablation process.

* Ablation replaces the Safety Head's attention weights, apparently with constant values, removing the pattern the head had learned.

* Harmful queries are paired with a refusal ("I cannot fulfill your request!"), the behavior the Safety Head is presumed to support.

* Benign queries still receive a helpful response ("Sure! I can help you!").
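
One way to make "the influence of the Safety Head" measurable, offered as an assumption rather than anything the diagram specifies, is to ablate each head in turn and record the relative change in the layer's output; a head whose ablation moves the output most is a candidate for closer study. The sketch below uses NumPy, random weights, a causal mask, and uniform-attention ablation, all assumed. On random weights the scores carry no information about safety.

```python
import numpy as np

def softmax(x):
    # Row-wise softmax; subtracting the max keeps exp() from overflowing.
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def attention_output(x, w_q, w_k, w_v, w_o, n_heads, ablate_head=None):
    """Causal multi-head attention output, optionally with one head ablated."""
    seq_len, d_model = x.shape
    d_head = d_model // n_heads

    def split(m):
        # (seq_len, d_model) -> (n_heads, seq_len, d_head)
        return m.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ w_q), split(x @ w_k), split(x @ w_v)
    future = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    weights = softmax(np.where(future, -np.inf, q @ k.transpose(0, 2, 1) / np.sqrt(d_head)))
    if ablate_head is not None:
        # Replace the head's learned pattern with uniform weights over visible positions.
        allowed = ~future
        weights[ablate_head] = allowed / allowed.sum(axis=-1, keepdims=True)
    heads = weights @ v
    return heads.transpose(1, 0, 2).reshape(seq_len, d_model) @ w_o

def ablation_effect(x, mats, n_heads):
    """Relative change in the attention output when each head is ablated in turn."""
    base = attention_output(x, *mats, n_heads)
    return np.array([
        np.linalg.norm(attention_output(x, *mats, n_heads, ablate_head=h) - base)
        / np.linalg.norm(base)
        for h in range(n_heads)
    ])

rng = np.random.default_rng(1)
d_model, n_heads, seq_len = 16, 4, 6
mats = [rng.normal(size=(d_model, d_model)) / np.sqrt(d_model) for _ in range(4)]
x = rng.normal(size=(seq_len, d_model))
effects = ablation_effect(x, mats, n_heads)
print("relative change per ablated head:", effects.round(3))
print("most influential head:", int(effects.argmax()))
```

A study of safety behavior would more plausibly score each head by a behavioral quantity, such as how often the model refuses a set of harmful prompts with that head ablated, rather than by a raw output norm; the norm is used here only to keep the sketch self-contained.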

### Interpretation

This diagram presents attention ablation as a way to study how a dedicated "Safety Head" contributes to the safety behavior of a language model. The Safety Head likely learns to recognize harmful patterns in the input sequence and to steer the model toward refusal. Ablating it, by replacing its attention weights with constant values, isolates that contribution: if refusals depend on the head, removing it should weaken them, while responses to benign queries should change little. The llama illustrations show the behavior associated with the head: the model refuses harmful requests while continuing to assist with benign ones. The heatmaps make the intervention concrete by placing the learned attention pattern next to its ablated, constant counterpart. Taken together, the diagram suggests that targeted attention ablation can localize safety-relevant behavior to individual heads, which in turn can inform efforts to mitigate the risks of language models.