## Diagram: Safety Mechanism in Transformer Model

### Overview

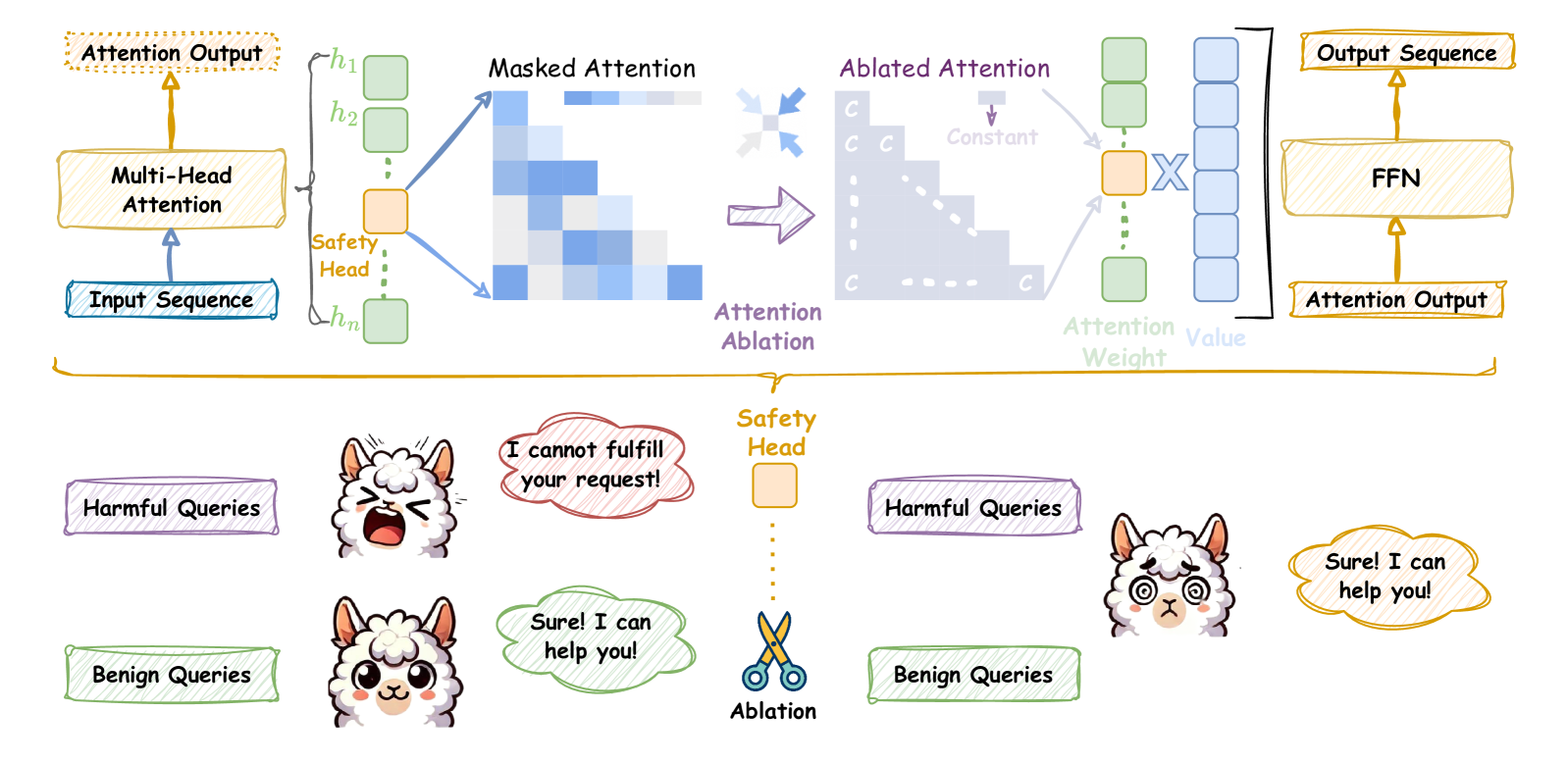

The diagram illustrates a safety mechanism in a transformer-based language model, focusing on how attention heads are manipulated to filter harmful queries. It combines architectural components (attention heads, FFN) with visualizations of attention weights and example query handling.

### Components/Axes

#### Top Diagram (Architecture Flow)

1. **Input Sequence** → **Multi-Head Attention** → **Attention Output**

2. **Safety Head** (highlighted in orange):

   - Applies **Masked Attention** (visualized as a heatmap with a triangular pattern)

   - Generates **Ablated Attention** (modified attention weights)

3. **FFN (Feed-Forward Network)** processes Ablated Attention → **Output Sequence**

4. **Attention Ablation** (purple arrow) connects Masked Attention to Ablated Attention
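
The triangular heatmap in the top diagram resembles the weights produced by causal (masked) self-attention. The following is a minimal NumPy sketch under that reading of the diagram; the function name `causal_attention_weights` and the toy shapes are illustrative assumptions and do not come from the diagram.

```python
import numpy as np

def causal_attention_weights(q, k):
    """Scaled dot-product attention weights with a causal mask (hypothetical helper)."""
    seq_len, d_k = q.shape
    scores = q @ k.T / np.sqrt(d_k)
    # Mask positions j > i so each token attends only to itself and earlier tokens.
    future = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    # Numerically stable softmax over each row; masked entries become exactly 0.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    return weights / weights.sum(axis=-1, keepdims=True)

# Toy inputs: 4 tokens, 8-dimensional queries and keys (assumed sizes).
rng = np.random.default_rng(0)
q = rng.normal(size=(4, 8))
k = rng.normal(size=(4, 8))
weights = causal_attention_weights(q, k)
```

Plotting `weights` as a heatmap gives a lower-triangular pattern: every entry above the diagonal is zero and each row sums to one.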

#### Bottom Diagram (Query Handling)

- **Harmful Queries** (purple box):

  - Example response: "I cannot fulfill your request!"

  - Outcome: Blocked (red cloud)

- **Benign Queries** (green box):

  - Example response: "Sure! I can help you!"

  - Outcome: Allowed (green cloud)

- **Safety Head** (central orange box with scissors icon):

  - **Ablation** (cutting action) applied to harmful queries

### Detailed Analysis

1. **Attention Flow**:

   - The input sequence passes through Multi-Head Attention, producing attention weights (shown as a heatmap).

   - The Safety Head applies **Masked Attention** to these weights, producing a triangular pattern in which attention to later positions is suppressed. This is consistent with a causal mask, under which each token attends only to itself and earlier tokens.

   - **Ablated Attention** (the modified weights) is fed into the FFN to generate the final output.

2. **Attention Visualization**:

   - **Masked Attention Heatmap**: Shows a triangular pattern in which weights on later tokens are suppressed. This resembles a standard causal mask, and the diagram may also intend it to limit the context available for harmful queries.

   - **Ablated Attention**: Represents the final attention weights after safety modifications.

3. **Query Handling**:

   - Harmful queries trigger the Safety Head to **ablate** (cut) attention weights, preventing the model from generating unsafe responses.

   - Benign queries bypass ablation, allowing normal attention flow and safe responses.
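
The ablation step can be sketched as scaling one head's output toward zero before the heads are combined and passed to the FFN. This is only one plausible reading of "cutting" a head, since the diagram does not specify the operation. The helper names, the `scale` parameter, the random weights, and the toy dimensions are all assumptions, and residual connections and layer normalization are omitted for brevity.

```python
import numpy as np

def ablate_head(head_outputs, head_index, scale=0.0):
    """Return a copy of the per-head outputs with one head scaled (hypothetical helper)."""
    ablated = head_outputs.copy()
    ablated[head_index] *= scale
    return ablated

def combine_and_ffn(head_outputs, w_o, w1, w2):
    """Concatenate heads, apply the output projection, then a two-layer ReLU FFN."""
    n_heads, seq_len, d_head = head_outputs.shape
    # (n_heads, seq_len, d_head) -> (seq_len, n_heads * d_head)
    concat = head_outputs.transpose(1, 0, 2).reshape(seq_len, n_heads * d_head)
    attn_out = concat @ w_o
    return np.maximum(attn_out @ w1, 0.0) @ w2

# Toy sizes (assumed): 4 heads, 3 tokens, head width 2, model width 8, FFN width 16.
rng = np.random.default_rng(0)
heads = rng.normal(size=(4, 3, 2))
w_o = rng.normal(size=(8, 8))
w1 = rng.normal(size=(8, 16))
w2 = rng.normal(size=(16, 8))

ablated_heads = ablate_head(heads, head_index=1)  # index 1 stands in for the "safety head"
full_output = combine_and_ffn(heads, w_o, w1, w2)
ablated_output = combine_and_ffn(ablated_heads, w_o, w1, w2)
```

Comparing `full_output` with `ablated_output` shows how much the chosen head contributes to what the FFN sees. A `scale` between 0 and 1 would attenuate the head rather than remove it.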

### Key Observations

- The triangular attention pattern indicates that each position draws on earlier tokens rather than later ones, potentially to avoid over-reliance on harmful context.

- The Safety Head acts as a gatekeeper, selectively modifying attention to suppress harmful queries.

- The FFN processes the ablated attention output, so the final output reflects the safety modification rather than the original attention weights.

### Interpretation

This diagram demonstrates a **context-aware safety mechanism** where:

1. The Safety Head inspects attention weights to identify harmful queries.

2. Ablation (cutting attention weights) prevents the model from focusing on unsafe input segments.

3. The FFN generates outputs from the modified attention, which is intended to keep responses safe.

The use of llamas with contrasting expressions (angry vs. smiling) visually reinforces the distinction between blocked harmful queries and allowed benign ones. The scissors icon in the Safety Head symbolizes the active modification of attention weights to enforce safety constraints. This architecture highlights how transformer models can be engineered to balance utility and safety through targeted attention manipulation.