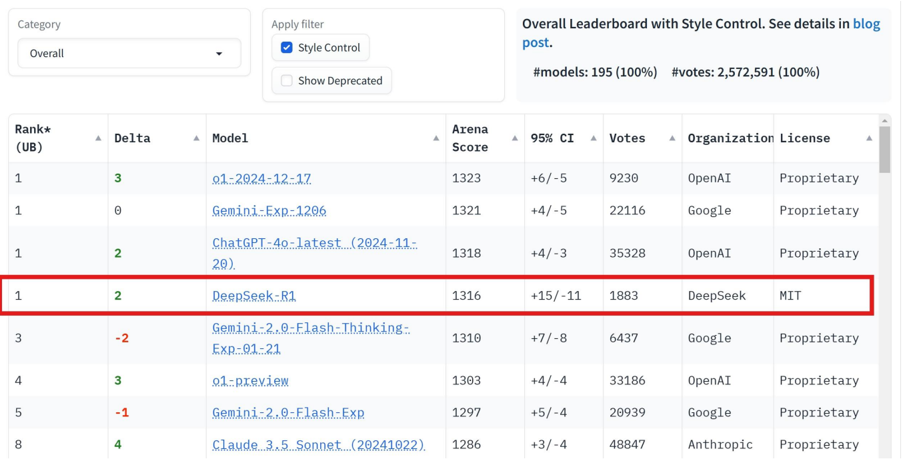

## Table: Overall Leaderboard with Style Control

### Overview

The image displays a ranked leaderboard of AI models with performance metrics, including Arena Scores, confidence intervals, votes, organizational affiliations, and licensing details. The table is filtered under the "Overall" category with "Style Control" enabled. A highlighted row emphasizes the model "DeepSeek-R1" with a red border.

### Components/Axes

- **Columns**:
  - **Rank (UB)**: Numerical ranking (1–8 in the rows shown). "UB" stands for upper bound: a model's rank is one plus the number of models that are statistically better than it, so models with overlapping confidence intervals can share a rank.
  - **Delta**: Change in rank (positive values in green, negative values in red).
  - **Model**: Model name and version (e.g., "o1-2024-12-17", "Gemini-Exp-1206").
  - **Arena Score**: Rating on an Elo-style scale, derived from pairwise human preference votes (e.g., 1323, 1316).
  - **95% CI**: 95% confidence interval around the Arena Score, given as upper/lower offsets (e.g., +6/-5, +15/-11).
  - **Votes**: Number of votes received (e.g., 9,230 and 1,883).
  - **Organization**: Affiliated organization (e.g., OpenAI, Google, DeepSeek).
  - **License**: Licensing type (e.g., Proprietary, MIT).
- **UI Elements**:
  - **Category Dropdown**: Set to "Overall".
  - **Apply Filter**: "Style Control" checked (blue), "Show Deprecated" unchecked (gray).
  - **Footer Note**: "Overall Leaderboard with Style Control. See details in blog post. #models: 195 (100%) #votes: 2,572,591 (100%)".
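To see how a model with a lower Arena Score can still hold a better Rank (UB), here is a minimal Python sketch. It assumes the definition shown on the Chatbot Arena leaderboard: a model's rank is one plus the number of models that are statistically better, where model A is statistically better than model B when A's lower 95% bound exceeds B's upper bound. The three models are hypothetical; `model-c` reuses DeepSeek-R1's score and interval (1316, +15/-11) purely for illustration, and `parse_ci` and `rank_ub` are illustrative helpers, not part of any leaderboard API.

```python
from typing import Dict, Tuple


def parse_ci(score: float, ci: str) -> Tuple[float, float]:
    """Convert a score and a '+upper/-lower' string such as '+15/-11' into (lower, upper) bounds."""
    plus, minus = ci.split("/")
    return score - abs(float(minus)), score + float(plus)


def rank_ub(intervals: Dict[str, Tuple[float, float]]) -> Dict[str, int]:
    """Rank(UB): 1 + number of models whose lower bound is above this model's upper bound."""
    ranks: Dict[str, int] = {}
    for name, (_, upper) in intervals.items():
        statistically_better = sum(
            1
            for other, (other_lower, _) in intervals.items()
            if other != name and other_lower > upper
        )
        ranks[name] = 1 + statistically_better
    return ranks


# Hypothetical models; model-c copies DeepSeek-R1's score and CI from the table.
intervals = {
    "model-a": parse_ci(1334, "+4/-4"),    # bounds 1330..1338
    "model-b": parse_ci(1325, "+3/-3"),    # bounds 1322..1328
    "model-c": parse_ci(1316, "+15/-11"),  # bounds 1305..1331
}

print(rank_ub(intervals))  # {'model-a': 1, 'model-b': 2, 'model-c': 1}
```

Here `model-c` scores 9 points below `model-b` yet ranks higher, because its wide interval keeps `model-a` from being statistically better than it. The same mechanism plausibly explains why DeepSeek-R1 sits at rank 1 while Gemini-Exp-1206 (1321) sits at rank 2, although the intervals of most top models are not given in this description.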

### Detailed Analysis

1. **Rank 1**:
   - **Model**: DeepSeek-R1.
   - **Delta**: +2 (green).
   - **Arena Score**: 1316.
   - **95% CI**: +15/-11.
   - **Votes**: 1,883.
   - **Organization**: DeepSeek.
   - **License**: MIT (open-source).
2. **Other Models**:
   - **Rank 2**: Gemini-Exp-1206 (Delta: 0, Arena Score: 1321, Votes: 22,116, Organization: Google, License: Proprietary).
   - **Rank 3**: Gemini-2.0-Flash-Thinking-Exp-01-21 (Delta: -2, Arena Score: 1310, Votes: 6,437, Organization: Google, License: Proprietary).
   - **Rank 4**: o1-preview (Delta: +3, Arena Score: 1303, Votes: 33,186, Organization: OpenAI, License: Proprietary).
   - **Rank 5**: Gemini-2.0-Flash-Exp (Delta: -1, Arena Score: 1297, Votes: 20,939, Organization: Google, License: Proprietary).
   - **Rank 8**: Claude 3.5 Sonnet (Delta: +4, Arena Score: 1286, Votes: 48,847, Organization: Anthropic, License: Proprietary).
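The itemized rows can be checked mechanically. The Python sketch below encodes only the six models listed in the Detailed Analysis (the `Row` type and its field names are hypothetical choices made for this example) and confirms that the top Rank (UB), the highest Arena Score, and the most votes belong to three different models.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    rank_ub: int
    model: str
    arena_score: int
    votes: int
    organization: str
    license_type: str


# Only the rows itemized in the Detailed Analysis; ranks 6 and 7 are not described.
ROWS = [
    Row(1, "DeepSeek-R1", 1316, 1_883, "DeepSeek", "MIT"),
    Row(2, "Gemini-Exp-1206", 1321, 22_116, "Google", "Proprietary"),
    Row(3, "Gemini-2.0-Flash-Thinking-Exp-01-21", 1310, 6_437, "Google", "Proprietary"),
    Row(4, "o1-preview", 1303, 33_186, "OpenAI", "Proprietary"),
    Row(5, "Gemini-2.0-Flash-Exp", 1297, 20_939, "Google", "Proprietary"),
    Row(8, "Claude 3.5 Sonnet", 1286, 48_847, "Anthropic", "Proprietary"),
]

top_ranked = min(ROWS, key=lambda r: r.rank_ub)      # best (lowest) rank number
top_scored = max(ROWS, key=lambda r: r.arena_score)  # highest point estimate
most_voted = max(ROWS, key=lambda r: r.votes)        # most human votes
per_org = Counter(r.organization for r in ROWS)      # entries per organization

print(top_ranked.model)        # DeepSeek-R1
print(top_scored.model)        # Gemini-Exp-1206
print(most_voted.model)        # Claude 3.5 Sonnet
print(per_org.most_common(1))  # [('Google', 3)]
```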

### Key Observations

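- **DeepSeek-R1** holds rank 1 with an Arena Score of 1316 and a positive Delta (+2), meaning it gained two rank positions. Its score is not the highest shown (Gemini-Exp-1206 has 1321), so its top position reflects the Rank (UB) method, under which a model with a wide confidence interval can rank first without having the highest point estimate.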

- **Google** has the most entries among the detailed models (three Gemini variants), followed by **OpenAI** (o1-preview, plus o1-2024-12-17 cited in the column examples), while **DeepSeek** and **Anthropic** each have one.

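- **Votes** vary widely: Claude 3.5 Sonnet has the most among the listed models (48,847), while DeepSeek-R1 has only 1,883. A higher vote count means more comparisons have been collected for a model, which narrows its confidence interval.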

- **License Types**: Every detailed model except DeepSeek-R1 (MIT) is proprietary, so an openly licensed model ranks at the top of this leaderboard alongside proprietary competitors.
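The link between vote count and interval width can be checked roughly with the two models whose 95% CIs this description reports. The Python sketch below assumes, as a rule of thumb rather than the leaderboard's actual procedure, that interval width shrinks in proportion to 1/√n for n votes; real intervals also depend on which opponents each model faced. The constant names and the `ci_width` helper are hypothetical.

```python
import math

# (votes, CI plus, CI minus) as reported in this description.
DEEPSEEK_R1 = (1_883, 15, 11)
FLASH_THINKING = (6_437, 7, 8)  # Gemini-2.0-Flash-Thinking-Exp-01-21


def ci_width(row: tuple) -> int:
    """Total width of a '+plus/-minus' interval, in Arena Score points."""
    _, plus, minus = row
    return plus + minus


# Observed: how much wider DeepSeek-R1's interval is (26 vs 15 points).
observed_ratio = ci_width(DEEPSEEK_R1) / ci_width(FLASH_THINKING)

# Predicted from the vote counts alone under the 1/sqrt(n) heuristic.
predicted_ratio = math.sqrt(FLASH_THINKING[0] / DEEPSEEK_R1[0])

print(f"observed {observed_ratio:.2f}, predicted {predicted_ratio:.2f}")
```

The two ratios (about 1.73 and 1.85) are close, which is consistent with DeepSeek-R1's wide interval being largely a consequence of its smaller number of votes.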

### Interpretation

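The leaderboard shows close competition among the top models, with DeepSeek-R1 ranked first under style control. Style control adjusts Arena Scores for stylistic features of responses, such as length and Markdown formatting, so that rankings depend more on content than on presentation. The MIT license for DeepSeek-R1 may indicate strategic open-sourcing to foster adoption or collaboration. Claude 3.5 Sonnet (Anthropic) has the most votes among the listed models (48,847) but the lowest Arena Score (1286), which shows that vote volume reflects how many comparisons a model has accumulated rather than how well it performs. The 95% confidence intervals show how uncertain each score is: DeepSeek-R1 has the widest interval reported here (+15/-11), consistent with its small vote count (1,883), compared with +7/-8 for Gemini-2.0-Flash-Thinking-Exp. Where intervals overlap, the order of closely scored models may change as more votes are collected.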
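To put the gaps between scores in perspective, the sketch below converts an Arena Score difference into an expected preference rate. It assumes Arena Scores follow the usual Elo convention (base 10, scale 400), which, to our understanding, Chatbot Arena's Bradley-Terry ratings use; `expected_win_rate` is an illustrative helper, and the resulting probability ignores ties.

```python
def expected_win_rate(score_a: float, score_b: float,
                      scale: float = 400.0, base: float = 10.0) -> float:
    """Probability that model A is preferred over model B under an Elo-style
    (Bradley-Terry) model: P = 1 / (1 + base ** ((score_b - score_a) / scale))."""
    return 1.0 / (1.0 + base ** ((score_b - score_a) / scale))


# Arena Scores from the leaderboard rows described above.
gemini_exp_1206 = 1321
claude_35_sonnet = 1286

p = expected_win_rate(gemini_exp_1206, claude_35_sonnet)
print(f"{p:.3f}")  # 0.550
```

Under this assumption, the 35-point gap between Gemini-Exp-1206 and Claude 3.5 Sonnet corresponds to only about a 55% expected preference rate, which is why score differences of a few points among the top models amount to close competition.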