## Chart Type: Line Chart - Calling Error Rate vs. Training Steps

### Overview

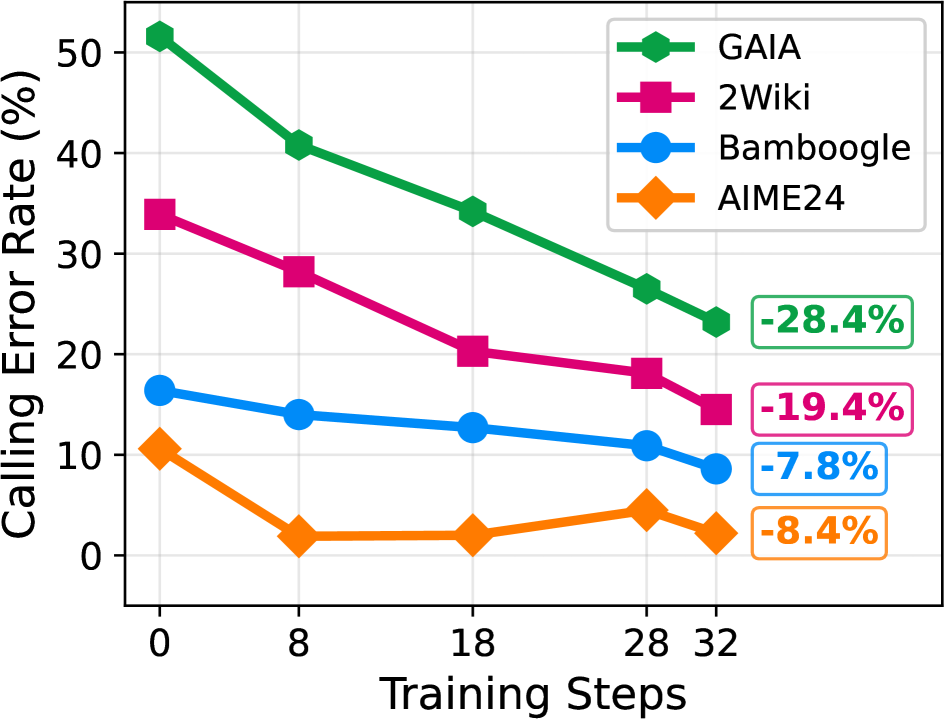

This image displays a 2D line chart of "Calling Error Rate (%)" (Y-axis) as a function of "Training Steps" (X-axis). Four series are compared: GAIA, 2Wiki, Bamboogle, and AIME24, each drawn with a distinct colored line and marker. The chart shows how the calling error rate of each series changes as the number of training steps increases from 0 to 32. A boxed label next to the final data point of each line gives the absolute decrease in error rate between step 0 and step 32, in percentage points.

### Components/Axes

* **X-axis Label**: "Training Steps"

* **X-axis Scale**: Numeric, ranging from 0 to 32.

* **X-axis Markers**: 0, 8, 18, 28, 32.

* **Y-axis Label**: "Calling Error Rate (%)"

* **Y-axis Scale**: Numeric, with tick labels from 0 to 50. The GAIA point at step 0 (~51.5%) lies slightly above the top tick.

* **Y-axis Markers**: 0, 10, 20, 30, 40, 50.

* **Legend**: Located in the top-right quadrant of the plot area.

  * **GAIA**: Green line with filled diamond markers.

  * **2Wiki**: Magenta line with filled square markers.

  * **Bamboogle**: Blue line with filled circle markers.

  * **AIME24**: Orange line with filled diamond markers.

* **Additional Labels (Right side of plot)**:

  * Next to the GAIA line (green): "-28.4%" (in a green box)

  * Next to the 2Wiki line (magenta): "-19.4%" (in a magenta box)

  * Next to the Bamboogle line (blue): "-7.8%" (in a blue box)

  * Next to the AIME24 line (orange): "-8.4%" (in an orange box)

Although the labels are printed with a "%" sign, they are absolute differences in "Calling Error Rate (%)" between 0 and 32 Training Steps, that is, percentage points rather than relative percentages.

### Detailed Analysis

The chart presents four data series, each tracking the Calling Error Rate (%) across five distinct Training Steps (0, 8, 18, 28, 32).

1. **GAIA (Green line with diamond markers)**:

   * **Trend**: This line falls at every step, so the calling error rate decreases steadily as training steps increase. It starts at the highest error rate of the four series and remains the highest throughout.

   * **Data Points**:

      * (Training Steps: 0, Calling Error Rate: ~51.5%)

      * (Training Steps: 8, Calling Error Rate: ~40.5%)

      * (Training Steps: 18, Calling Error Rate: ~33.5%)

      * (Training Steps: 28, Calling Error Rate: ~26.5%)

      * (Training Steps: 32, Calling Error Rate: ~23.1%)

   * **Total Reduction (0 to 32 steps)**: -28.4 percentage points.

2. **2Wiki (Magenta line with square markers)**:

   * **Trend**: This line also exhibits a clear downward trend, showing a reduction in calling error rate with more training steps. It starts as the second-highest error rate and remains so.

   * **Data Points**:

      * (Training Steps: 0, Calling Error Rate: ~34.2%)

      * (Training Steps: 8, Calling Error Rate: ~28.0%)

      * (Training Steps: 18, Calling Error Rate: ~20.5%)

      * (Training Steps: 28, Calling Error Rate: ~18.5%)

      * (Training Steps: 32, Calling Error Rate: ~14.8%)

   * **Total Reduction (0 to 32 steps)**: -19.4 percentage points.

3. **Bamboogle (Blue line with circle markers)**:

   * **Trend**: This line declines gradually. It starts at a moderate error rate, decreases at every step, and ends with the second-lowest error rate.

   * **Data Points**:

      * (Training Steps: 0, Calling Error Rate: ~17.2%)

      * (Training Steps: 8, Calling Error Rate: ~14.0%)

      * (Training Steps: 18, Calling Error Rate: ~13.0%)

      * (Training Steps: 28, Calling Error Rate: ~11.0%)

      * (Training Steps: 32, Calling Error Rate: ~9.4%)

   * **Total Reduction (0 to 32 steps)**: -7.8 percentage points.

4. **AIME24 (Orange line with diamond markers)**:

   * **Trend**: This line shows a sharp initial decrease, then a plateau, followed by a small increase and a final decrease. It has the lowest error rate at every recorded step, including during the increase between steps 18 and 28.

   * **Data Points**:

      * (Training Steps: 0, Calling Error Rate: ~11.2%)

      * (Training Steps: 8, Calling Error Rate: ~2.5%)

      * (Training Steps: 18, Calling Error Rate: ~2.5%)

      * (Training Steps: 28, Calling Error Rate: ~5.0%)

      * (Training Steps: 32, Calling Error Rate: ~2.8%)

   * **Total Reduction (0 to 32 steps)**: -8.4 percentage points.
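The total reductions listed above can be cross-checked against the first and last data points of each series. The following is a minimal sketch, assuming the approximate values read off the chart; the names `STEPS`, `ERROR_RATE`, `CHART_LABELS`, and `absolute_change` are illustrative and do not come from the chart's source.

```python
# Approximate values read off the chart; they are estimates, not source data.
STEPS = [0, 8, 18, 28, 32]
ERROR_RATE = {
    "GAIA": [51.5, 40.5, 33.5, 26.5, 23.1],
    "2Wiki": [34.2, 28.0, 20.5, 18.5, 14.8],
    "Bamboogle": [17.2, 14.0, 13.0, 11.0, 9.4],
    "AIME24": [11.2, 2.5, 2.5, 5.0, 2.8],
}

# Reduction labels printed on the right side of the chart (percentage points).
CHART_LABELS = {"GAIA": -28.4, "2Wiki": -19.4, "Bamboogle": -7.8, "AIME24": -8.4}


def absolute_change(values):
    """Change from the first to the last reading, in percentage points."""
    return round(values[-1] - values[0], 1)


for name, values in ERROR_RATE.items():
    change = absolute_change(values)
    # Allow a small tolerance because the readings are approximate.
    matches = abs(change - CHART_LABELS[name]) < 0.05
    print(f"{name}: {change:+.1f} points (label {CHART_LABELS[name]:+.1f}, match={matches})")
```

With these readings, each computed change agrees with the label printed on the chart, which suggests the endpoint estimates are consistent with the boxed values.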

### Key Observations

* All four series end with a lower "Calling Error Rate (%)" than they started with, and three of the four decrease at every recorded step, indicating that more training reduces calling errors.

* GAIA starts with the highest error rate (~51.5%) and ends with the highest (~23.1%), but also achieves the largest absolute reduction in error rate (-28.4 percentage points).

* AIME24 has the lowest calling error rate at all five recorded training steps, starting at ~11.2% and ending at ~2.8%. Most of its improvement occurs in the first 8 training steps, where it drops from ~11.2% to ~2.5%.

* The reduction in error rate for AIME24 is -8.4 percentage points, which is slightly more than Bamboogle's -7.8 percentage points, despite Bamboogle having a higher initial error rate.

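* The rate of decrease varies among the series. Over the first 8 training steps, GAIA drops by ~11.0 points and 2Wiki by ~6.2 points, while Bamboogle drops by only ~3.2 points and continues to decline gradually. AIME24 drops sharply by ~8.7 points over the same interval and then follows a non-monotonic pattern.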

* A notable anomaly is AIME24's slight increase in error rate between 18 and 28 training steps (from ~2.5% to ~5.0%) before dropping again.
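The observations about rates of decrease and the AIME24 rise can be made concrete by computing the change over each interval between adjacent recorded steps. This is a minimal sketch over the approximate chart readings; `interval_changes` and `rising_intervals` are hypothetical helper names introduced for illustration. Because the recorded steps are unevenly spaced (8, 10, 10, and 4 steps apart), the sketch also reports the change per training step.

```python
# Approximate values read off the chart; they are estimates, not source data.
STEPS = [0, 8, 18, 28, 32]
ERROR_RATE = {
    "GAIA": [51.5, 40.5, 33.5, 26.5, 23.1],
    "2Wiki": [34.2, 28.0, 20.5, 18.5, 14.8],
    "Bamboogle": [17.2, 14.0, 13.0, 11.0, 9.4],
    "AIME24": [11.2, 2.5, 2.5, 5.0, 2.8],
}


def interval_changes(steps, values):
    """Return (start_step, end_step, change, change_per_step) for each adjacent pair."""
    rows = []
    for i in range(len(steps) - 1):
        change = values[i + 1] - values[i]
        width = steps[i + 1] - steps[i]
        rows.append((steps[i], steps[i + 1], round(change, 2), round(change / width, 3)))
    return rows


def rising_intervals(steps, values):
    """Return the (start_step, end_step) pairs over which the error rate increases."""
    return [(a, b) for a, b, change, _ in interval_changes(steps, values) if change > 0]


for name, values in ERROR_RATE.items():
    print(name)
    for start, end, change, per_step in interval_changes(STEPS, values):
        print(f"  {start:>2} -> {end:>2}: {change:+.2f} points ({per_step:+.3f} per step)")
    print(f"  rising intervals: {rising_intervals(STEPS, values)}")
```

Under these readings, AIME24 is the only series with a rising interval (steps 18 to 28). The per-step figures depend on estimated values, so small differences between intervals should not be over-interpreted.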

### Interpretation

The data suggest that calling error rates fall for all four series as training steps increase, while the starting error rate and the size of the improvement vary widely.

GAIA has the highest initial error rate and shows the largest absolute improvement, a drop of 28.4 percentage points, although it still ends with the highest error rate (~23.1%). This is consistent with GAIA starting from the least optimized state and therefore having the most room to improve through training.

AIME24 has the lowest calling error rate at every recorded step and reaches a very low rate (~2.5%) after only 8 training steps. The increase between 18 and 28 training steps (from ~2.5% to ~5.0%) is small in absolute terms. It may reflect measurement noise, temporary overfitting, a change in the training data distribution, or hyperparameter instability at that stage of training; the chart alone cannot distinguish between these. The rate then falls back to ~2.8% at step 32.

2Wiki and Bamboogle fall between GAIA and AIME24 at every recorded step. 2Wiki follows a trend similar to GAIA's at a lower magnitude, dropping by 19.4 percentage points. Bamboogle has the smallest absolute reduction (7.8 percentage points), which may mean its error rate is less sensitive to additional training steps within this range or is already closer to its floor.
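Absolute reductions favor series that start high, so it can help to view the same endpoints as relative reductions, that is, as a share of the starting error rate. The sketch below is an additional perspective under the same approximate readings, not something shown on the chart; `relative_reduction` is a hypothetical helper name.

```python
# Approximate values read off the chart; they are estimates, not source data.
ERROR_RATE = {
    "GAIA": [51.5, 40.5, 33.5, 26.5, 23.1],
    "2Wiki": [34.2, 28.0, 20.5, 18.5, 14.8],
    "Bamboogle": [17.2, 14.0, 13.0, 11.0, 9.4],
    "AIME24": [11.2, 2.5, 2.5, 5.0, 2.8],
}


def relative_reduction(values):
    """Reduction from first to last reading as a percentage of the first reading."""
    first, last = values[0], values[-1]
    return round((first - last) / first * 100, 1)


# Sort from largest to smallest relative reduction.
ranked = sorted(ERROR_RATE, key=lambda name: relative_reduction(ERROR_RATE[name]), reverse=True)
for name in ranked:
    print(f"{name}: {relative_reduction(ERROR_RATE[name]):.1f}% of the initial error rate removed")
```

On this measure the ordering differs from the absolute ranking: AIME24 removes the largest share of its initial error rate and Bamboogle the smallest, with GAIA and 2Wiki close together in between.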

In summary, AIME24 shows the lowest calling error rates and reaches them quickly, while GAIA shows the largest absolute gains from a much higher starting point. The series names match well-known evaluation benchmarks (GAIA, 2WikiMultihopQA, Bamboogle, and AIME 2024), so the lines most likely track calling error rates on four different task sets rather than four competing methods. Read that way, the chart does not rank methods against each other; it indicates that training reduces calling errors on all four task sets, with the largest absolute gains where the initial error rate was highest.