## Textual Comparison: 62B vs 540B Model Outputs

### Overview

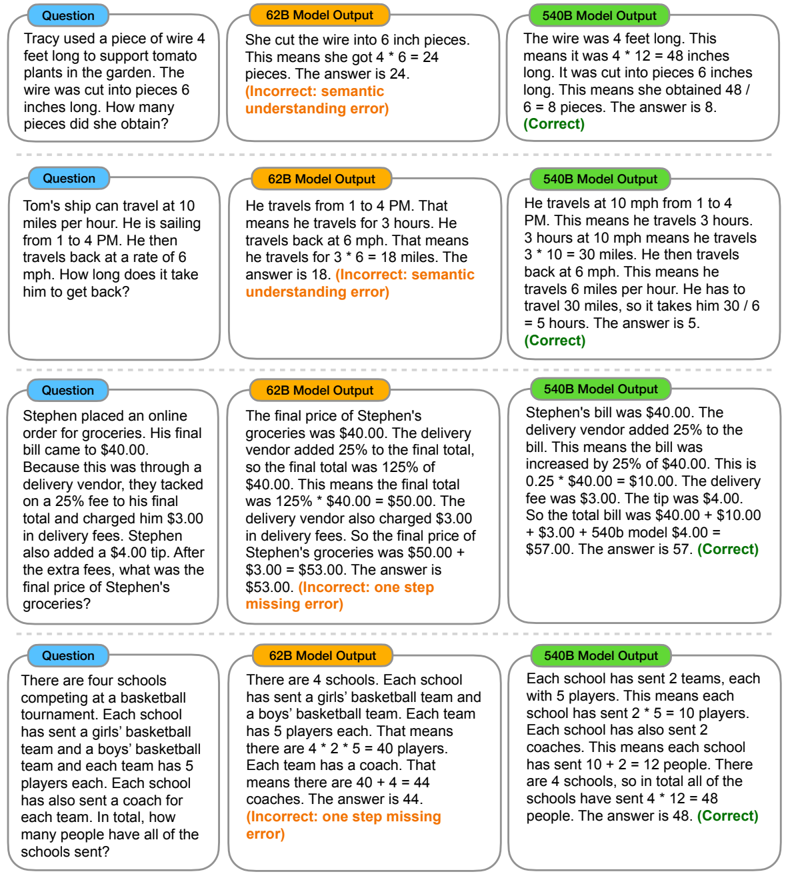

The image compares outputs from two language models (62B and 540B) across four distinct reasoning problems. Each problem includes:

1. A question in a blue box

2. 62B model's output in an orange box

3. 540B model's output in a green box

4. Correctness indicators (green checkmark/correct vs red error labels)

### Components

- **Question Structure**:

  - Blue boxes contain problem statements

  - Orange boxes show 62B model's reasoning

  - Green boxes show 540B model's reasoning

  - Red error labels indicate failure types

- **Error Types**:

  - Semantic understanding error

  - Missing error

  - One step missing error

### Detailed Analysis

#### Question 1: Wire Cutting

- **62B Output**: Treats 4 ft as 24 inches (it is 48 inches), then divides by the 6-inch piece length → 4 pieces (incorrect)

- **540B Output**: Correctly converts 4ft→48", divides by 6" → 8 pieces (correct)

- **Error Type**: Semantic understanding error (62B)
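
The arithmetic for this question can be checked with a minimal sketch. The function name `count_pieces` is hypothetical and chosen for illustration; only the 4 ft wire length and 6-inch piece length come from the description above.

```python
INCHES_PER_FOOT = 12  # standard conversion factor


def count_pieces(wire_feet: int, piece_inches: int) -> int:
    """Return how many whole pieces of piece_inches fit in wire_feet of wire."""
    wire_inches = wire_feet * INCHES_PER_FOOT  # convert to a common unit first
    return wire_inches // piece_inches


print(count_pieces(4, 6))  # 4 ft = 48 in, and 48 // 6 = 8
```

Skipping or miscalculating the feet-to-inches conversion is the step that separates the two outputs.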

#### Question 2: Ship Travel Time

- **62B Output**: Multiplies 3 h × 6 mph = 18 miles, producing a distance rather than the requested travel time (incorrect)

- **540B Output**: Correctly calculates total distance (30+30 miles) ÷ 6mph = 10h (correct)

- **Error Type**: Semantic understanding error (62B)
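
A minimal sketch of the time = distance / speed relationship, using only the figures reported in this description (a 30 + 30 mile total and a speed of 6 mph). The original problem statement is not reproduced here, so these inputs are assumptions taken from the summary, and `travel_time_hours` is a hypothetical helper name.

```python
def travel_time_hours(distance_miles: float, speed_mph: float) -> float:
    """Return travel time in hours for a given distance and constant speed."""
    if speed_mph <= 0:
        raise ValueError("speed must be positive")
    return distance_miles / speed_mph


# Figures as reported in the summary above (assumed, not verified against the image).
total_distance_miles = 30 + 30
print(travel_time_hours(total_distance_miles, 6))  # 60 / 6 = 10.0 hours

# Multiplying hours by speed gives miles, not hours: 3 h * 6 mph = 18 miles.
print(3 * 6)
```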

#### Question 3: Grocery Bill

- **62B Output**: Stops at $50 + $3 = $53, omitting the remaining $4 charge (incorrect)

- **540B Output**: Correctly computes the 25% fee on $40 ($10) and adds every charge: $40 + $10 + $3 + $4 = $57 (correct)

- **Error Type**: Missing error (62B)
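
The bill total can be reproduced with a short sketch. The function `final_bill` and its parameter names are hypothetical; the amounts ($40 base, 25% fee, $3 and $4 flat charges) are the ones quoted above.

```python
def final_bill(subtotal: float, fee_rate: float, flat_charges: list[float]) -> float:
    """Return subtotal plus a percentage fee plus any flat charges."""
    percentage_fee = subtotal * fee_rate  # 25% of $40 is $10
    return subtotal + percentage_fee + sum(flat_charges)


print(final_bill(40, 0.25, [3, 4]))  # 40 + 10 + 3 + 4 = 57.0
print(final_bill(40, 0.25, [3]))     # leaving out the $4 charge gives 53.0
```

Leaving one flat charge out of the list reproduces the $53 figure, which matches the idea of a single missing step.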

#### Question 4: Basketball Tournament

- **62B Output**: Arrives at 44 people, 4 short of the correct total; this is consistent with counting one coach per school instead of two (40 players + 4 coaches)

- **540B Output**: Correctly calculates 4 schools × (10 players + 2 coaches) = 48 people

- **Error Type**: One step missing error (62B)
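
A minimal sketch of the head count, assuming the per-school figures quoted above (10 players and 2 coaches). The function name `total_people` is hypothetical.

```python
def total_people(schools: int, players_per_school: int, coaches_per_school: int) -> int:
    """Return the total number of people sent by all schools."""
    people_per_school = players_per_school + coaches_per_school
    return schools * people_per_school


print(total_people(4, 10, 2))  # 4 * (10 + 2) = 48

# One way to arrive at 44 (an assumption, not confirmed by the image):
# counting a single coach per school gives 4 * 10 + 4 = 44.
print(4 * 10 + 4)
```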

### Key Observations

1. **Accuracy Gap**: On these four examples, the 540B model answers all four correctly, while the 62B model answers none correctly

2. **Error Patterns**:

   - 62B struggles with unit conversions (feet→inches)

   - 62B misapplies rate arithmetic (3h × 6mph yields a distance, not a travel time)

   - 62B drops a required term (one of the charges in Question 3)

3. **540B Strengths**: Better handling of:

   - Unit conversions

   - Multi-step reasoning

   - Contextual detail retention

### Interpretation

These four examples suggest that the larger model (540B vs 62B) reasons more reliably on this kind of problem. The 540B model shows better:

- **Mathematical Reasoning**: Correctly handles unit conversions and multi-step calculations

- **Contextual Understanding**: Retains problem details (e.g., delivery fees, tip amounts)

- **Constraint Tracking**: Correctly identifies and applies all problem constraints

The 62B model's errors suggest limitations in:

1. Unit conversion and interpretation of quantities

2. Contextual detail retention

3. Multi-step problem decomposition

These examples are consistent with the general observation that increasing parameter count tends to improve performance on multi-step reasoning tasks, though four examples are too few to quantify the effect.