## Bar Chart: BioLP-Bench Performance Comparison

### Overview

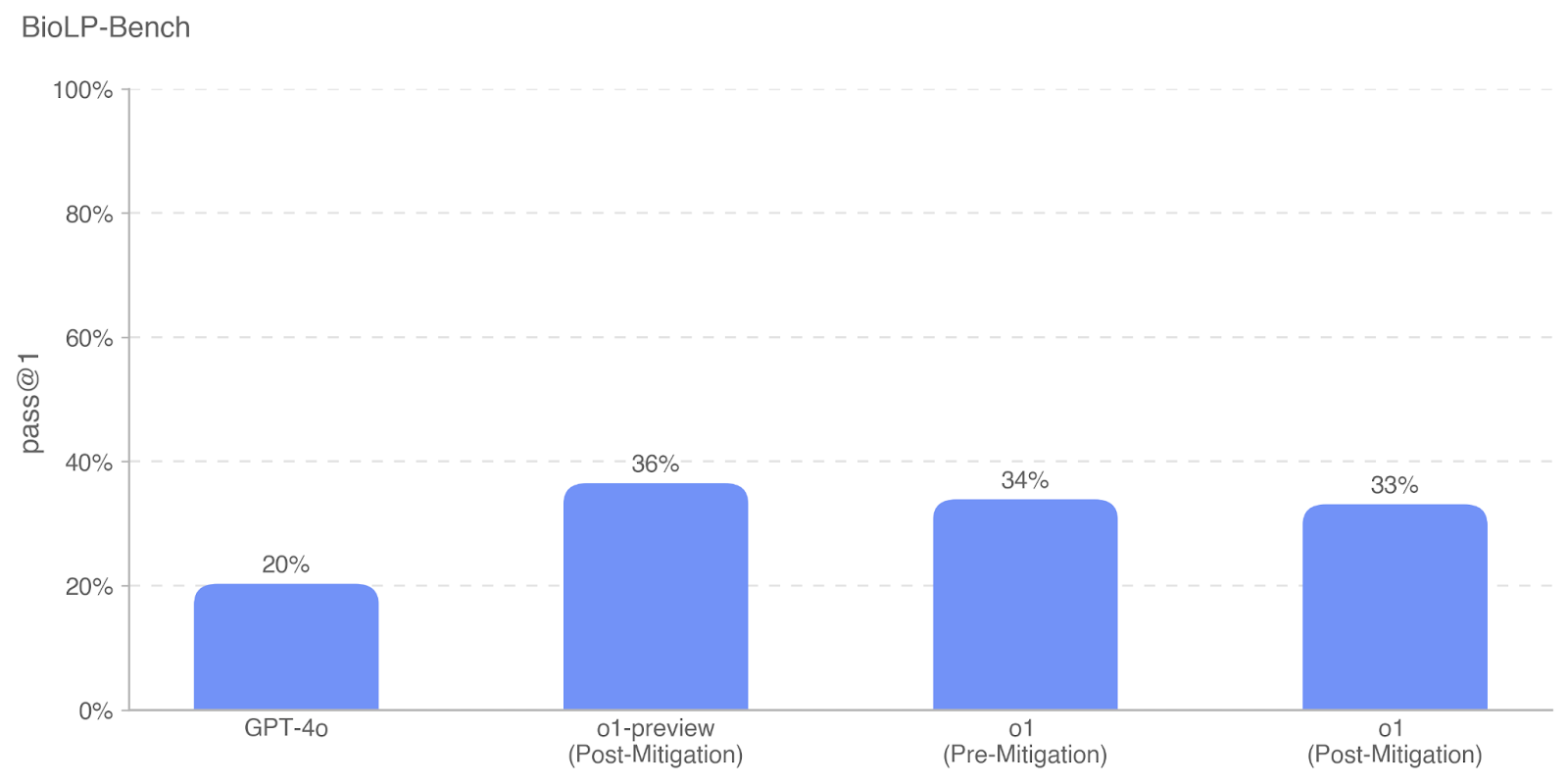

The image displays a vertical bar chart titled "BioLP-Bench," comparing the performance of four different AI models or model variants on a benchmark. The performance metric is "pass @1," measured as a percentage. The chart shows that the "o1-preview (Post-Mitigation)" variant achieves the highest score, while "GPT-4o" has the lowest.

### Components/Axes

* **Chart Title:** "BioLP-Bench" (located at the top-left corner).

* **Y-Axis:**

    * **Label:** "pass @1" (rotated vertically on the left side).

    * **Scale:** Linear scale from 0% to 100%, with major gridlines and labels at 0%, 20%, 40%, 60%, 80%, and 100%.

* **X-Axis:**

    * **Categories (from left to right):**

        1. GPT-4o

        2. o1-preview (Post-Mitigation)

        3. o1 (Pre-Mitigation)

        4. o1 (Post-Mitigation)

* **Data Series:** A single series represented by four solid blue bars. There is no legend, as all bars represent the same metric for different entities.

* **Data Labels:** The exact percentage value is displayed directly above each bar.

### Detailed Analysis

The chart presents the following specific data points:

1. **GPT-4o:** The bar reaches the 20% gridline. The data label confirms a value of **20%**.

2. **o1-preview (Post-Mitigation):** This is the tallest bar. It extends significantly above the 20% line and is labeled **36%**.

3. **o1 (Pre-Mitigation):** This bar is slightly shorter than the o1-preview bar. Its label indicates a value of **34%**.

4. **o1 (Post-Mitigation):** This bar is marginally shorter than the "Pre-Mitigation" o1 bar. Its label shows **33%**.

**Visual Trend Verification:** The performance trend from left to right is: a low starting point (GPT-4o), a sharp increase to the peak (o1-preview), followed by a slight, stepwise decrease across the two o1 variants (Pre-Mitigation to Post-Mitigation).

### Key Observations

* **Performance Gap:** There is a substantial performance gap of 16 percentage points between the lowest-performing model (GPT-4o at 20%) and the highest-performing variant (o1-preview at 36%).

* **Mitigation Impact:** For the "o1" model, the "Post-Mitigation" variant scores slightly lower on "pass @1" than the "Pre-Mitigation" variant: 33% versus 34%, a difference of 1 percentage point.

* **Model Family Superiority:** All three variants labeled "o1" or "o1-preview" outperform "GPT-4o" on this benchmark, by 13 to 16 percentage points.

* **Highest Performer:** The "o1-preview (Post-Mitigation)" variant is the top performer, though it is only 2 percentage points ahead of "o1 (Pre-Mitigation)" and 3 percentage points ahead of "o1 (Post-Mitigation)."
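The gaps quoted in these observations can be checked with a few lines of arithmetic. The sketch below is illustrative only: the `scores` dictionary and the `gap_pp` helper are names introduced here, and the values are transcribed from the chart's data labels rather than taken from any underlying dataset.

```python
# pass@1 values in percent, transcribed from the chart's data labels.
scores = {
    "GPT-4o": 20,
    "o1-preview (Post-Mitigation)": 36,
    "o1 (Pre-Mitigation)": 34,
    "o1 (Post-Mitigation)": 33,
}


def gap_pp(a: str, b: str) -> int:
    """Return score(a) minus score(b), in percentage points (hypothetical helper)."""
    return scores[a] - scores[b]


# Identify the highest- and lowest-scoring bars.
best = max(scores, key=scores.get)
worst = min(scores, key=scores.get)

print(f"best: {best}; worst: {worst}")
print("best vs. worst:", gap_pp(best, worst), "pp")
print("o1 post vs. pre:", gap_pp("o1 (Post-Mitigation)", "o1 (Pre-Mitigation)"), "pp")
print("o1-preview vs. o1 pre:", gap_pp("o1-preview (Post-Mitigation)", "o1 (Pre-Mitigation)"), "pp")
```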

### Interpretation

This chart evaluates AI models on BioLP-Bench, a benchmark built around biological laboratory protocols rather than general biological language processing. The "pass @1" metric is the share of tasks a model solves correctly on its first attempt.

The data indicates that the "o1" model family is substantially more capable on this biological task than "GPT-4o." The only like-for-like comparison of mitigation is between the "Pre-Mitigation" and "Post-Mitigation" versions of "o1." It suggests that the mitigation process, likely aimed at improving safety, reducing harmful outputs, or aligning model behavior, comes with at most a very minor cost in raw performance on this benchmark (a decrease of 1 percentage point, which may be within run-to-run variation; the chart shows no error bars). The "o1-preview (Post-Mitigation)" variant is the top performer, but because the chart shows no pre-mitigation score for o1-preview, its result says nothing about how mitigation affected that model.
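Because the scores are themselves percentages, it helps to keep the absolute difference (in percentage points) separate from the relative change (in percent). A minimal sketch, using only the two "o1" values read from the chart; the variable names are introduced here for illustration:

```python
# pass@1 values in percent for the two o1 variants, as labeled on the chart.
pre_mitigation = 34
post_mitigation = 33

# Absolute difference, expressed in percentage points.
absolute_pp = post_mitigation - pre_mitigation

# Relative change with respect to the pre-mitigation score, in percent.
relative_pct = (post_mitigation - pre_mitigation) / pre_mitigation * 100

print(f"absolute: {absolute_pp} pp")
print(f"relative: {relative_pct:.1f}%")
```

The absolute difference is 1 percentage point, while the relative change is roughly 3% of the pre-mitigation score, so the two figures should not be used interchangeably.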

The chart's primary message is that the "o1" and "o1-preview" models outperform "GPT-4o" on this benchmark, and that for "o1" mitigation has a negligible to slightly negative effect on the measured score.