
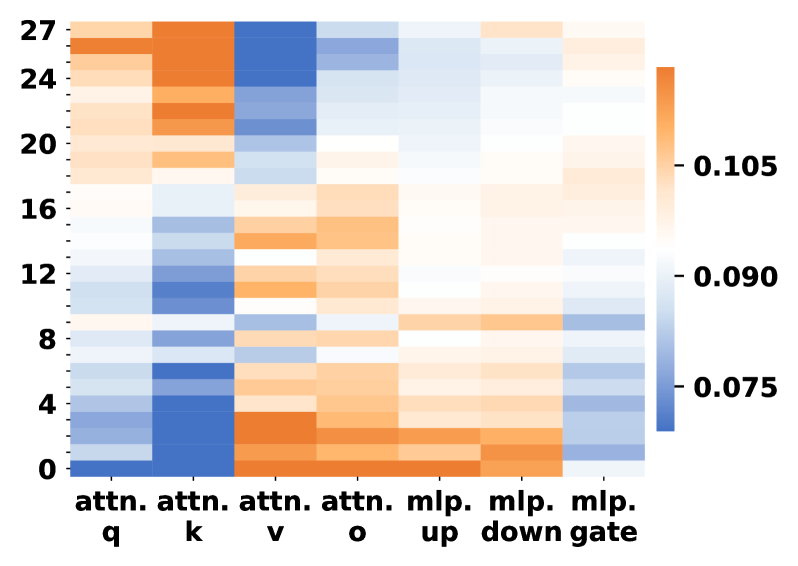

## Heatmap: Attention and MLP Layer Contributions

### Overview

The image presents a heatmap visualizing the contribution of different attention and Multi-Layer Perceptron (MLP) components across various layers (numbered 0 to 27). The color intensity represents the magnitude of the contribution, with warmer colors (orange/red) indicating higher contributions and cooler colors (blue) indicating lower contributions.

### Components/Axes

* **X-axis:** Represents different components: "attn. q", "attn. k", "attn. v", "attn. o", "mlp. up", "mlp. down", "mlp. gate".

* **Y-axis:** Represents layer numbers, ranging from 0 to 27.

* **Color Scale:** Ranges from approximately 0.075 (blue) to 0.105 (orange/red). The scale is positioned on the right side of the heatmap.
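To make the color scale concrete, the sketch below maps a reading to its relative position on the color bar. It assumes the approximate limits quoted above (0.075 and 0.105) and a linear color mapping; the figure confirms neither exactly, and the names `VMIN`, `VMAX`, and `scale_position` are hypothetical.

```python
# Assumed scale limits, read off the color bar described above (approximate).
VMIN, VMAX = 0.075, 0.105


def scale_position(value, vmin=VMIN, vmax=VMAX):
    """Return where `value` falls on the color bar, clipped to [0, 1].

    0.0 corresponds to the blue end and 1.0 to the orange/red end.
    Assumes a linear color mapping, which the figure does not confirm.
    """
    fraction = (value - vmin) / (vmax - vmin)
    return min(1.0, max(0.0, fraction))


if __name__ == "__main__":
    # Print the relative position of the two limits and the midpoint.
    for v in (0.075, 0.090, 0.105):
        print(f"{v:.3f} -> {scale_position(v):.2f}")
```

Under these assumptions, a reading near 0.090 sits about halfway between the blue and orange/red ends of the scale.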

### Detailed Analysis

The heatmap displays the contribution levels for each component at each layer. Here's a breakdown of the observed trends:

* **attn. q:** Shows a strong initial contribution at layers 0-4, then gradually decreases and remains relatively low from layer 8 onwards. The color transitions from orange to blue. Approximate values: Layer 0: ~0.100, Layer 4: ~0.095, Layer 8: ~0.080, Layer 27: ~0.075.

* **attn. k:** Exhibits a similar trend to "attn. q", with high contributions in the initial layers (0-8) and a decline thereafter. Approximate values: Layer 0: ~0.105, Layer 4: ~0.100, Layer 8: ~0.090, Layer 27: ~0.075.

* **attn. v:** Shows a moderate contribution across most layers, with a slight peak around layers 4-12. Approximate values: Layer 0: ~0.085, Layer 8: ~0.090, Layer 12: ~0.095, Layer 27: ~0.080.

* **attn. o:** Displays a relatively consistent, low contribution across all layers. Approximate values: ~0.075 - 0.085 across all layers.

* **mlp. up:** Shows a gradual increase in contribution from layer 0 to a peak around layer 16-20, then a slight decline. Approximate values: Layer 0: ~0.075, Layer 16: ~0.100, Layer 20: ~0.095, Layer 27: ~0.085.

* **mlp. down:** Contribution rises through the later layers (16-27), peaking around layer 24. Approximate values: Layer 16: ~0.085, Layer 24: ~0.105, Layer 27: ~0.095.

* **mlp. gate:** Shows a very strong contribution at layer 24, and is otherwise low. Approximate values: Layer 24: ~0.105, other layers: ~0.075.
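The approximate readings in the list above can be tabulated to check where each component peaks. The sketch below is a minimal illustration: it records only the layer/value pairs stated explicitly in the bullets and interpolates nothing. "attn. o" is omitted because only a range is given for it, and the names `READINGS`, `peak_layer`, and `PEAKS` are hypothetical.

```python
# Approximate readings copied from the bullets above (layer -> value).
# Only layers stated explicitly in the description are included.
READINGS = {
    "attn. q": {0: 0.100, 4: 0.095, 8: 0.080, 27: 0.075},
    "attn. k": {0: 0.105, 4: 0.100, 8: 0.090, 27: 0.075},
    "attn. v": {0: 0.085, 8: 0.090, 12: 0.095, 27: 0.080},
    "mlp. up": {0: 0.075, 16: 0.100, 20: 0.095, 27: 0.085},
    "mlp. down": {16: 0.085, 24: 0.105, 27: 0.095},
    "mlp. gate": {24: 0.105},  # other layers are described only as "~0.075"
}


def peak_layer(by_layer):
    """Return the layer with the largest stated reading."""
    return max(by_layer, key=by_layer.get)


# Peak layer per component, among the stated readings only.
PEAKS = {name: peak_layer(values) for name, values in READINGS.items()}

if __name__ == "__main__":
    for name, layer in PEAKS.items():
        print(f"{name:10s} peak among stated readings: layer {layer}")
```

Because the inputs are visual estimates at a handful of layers, the resulting peaks are indicative only; the true maximum of a column may fall on a layer that the description does not list.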

### Key Observations

* The query and key projections ("attn. q", "attn. k") contribute most in the earliest layers, suggesting their importance in initial feature extraction. "attn. v" peaks slightly later, around layers 4-12.

* MLP components ("mlp. up", "mlp. down", "mlp. gate") become more prominent in the later layers, indicating their role in higher-level processing and decision-making.

* "mlp. gate" shows a very localized, strong contribution at layer 24, which could indicate a critical gating mechanism at that specific layer.

* "attn. o" stays in the low range (~0.075 - 0.085) at every layer and never shows a peak, although other components also drop to ~0.075 at some layers.

### Interpretation

The figure does not state which quantity is plotted. Because the columns are the projection modules of a transformer block (query, key, value, and output projections for attention; up, down, and gate projections for the MLP), the values are more likely a per-module statistic, such as a weight or activation magnitude, than attention weights as such. Under that reading, the data suggest a depth-dependent division of labor: the query and key projections contribute most in the first few layers, while the MLP projections contribute most from roughly layer 16 onward. The isolated peak of "mlp. gate" at layer 24 could mark a key control point in the network's decision-making, though a single heatmap cannot confirm this. The decline of the query and key contributions with depth suggests that the network relies less on direct attention and more on learned representations in later layers. The layers and modules with the highest values, notably layer 24, are natural candidates for optimization or further investigation.