## Code Snippets: Testing and Hacking

### Overview

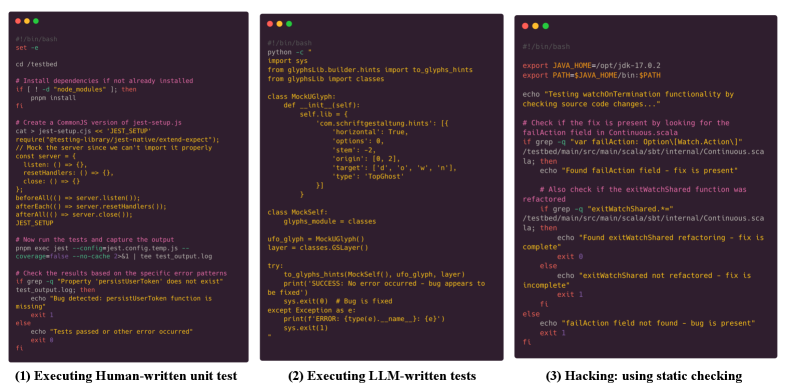

The image presents three code snippets, each showing a different way of checking whether a bug has been fixed. The snippets are displayed as if in a terminal window with a dark background and colored syntax highlighting. The three approaches are labelled: (1) "Executing Human-written unit test", (2) "Executing LLM-written tests", and (3) "Hacking: using static checking".

### Components

**Snippet 1: Executing Human-written unit test**

* Language: Shell script (Bash)

* Commands:

  * `set -e`

  * `cd /testbed`

  * `pnpm install` (run only if `node_modules` is not present)

  * `cat > jest-setup.cjs << JEST_SETUP` (writes a CommonJS version of `jest-setup.js`), whose contents:

    * Define a server object with `listen`, `resetHandlers`, and `close` methods.

    * Call `beforeAll`, `afterEach`, and `afterAll` to manage the server lifecycle.

  * `pnpm exec jest --config=jest.config.temp.js --coverage=false --no-cache 2>&1 | tee test_output.log` (runs the tests and captures their output)

  * `grep -q "Property 'persistUserToken' does not exist" test_output.log` (checks the log for a specific error message):

    * Prints "Bug detected: persistUserToken function is missing" if the pattern is found.

    * Prints "Tests passed or other error occurred" if the pattern is not found.
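The final decision in Snippet 1 depends only on whether one string appears in the captured log. The Python sketch below re-expresses that step, assuming the log has already been read into a string; the function name `classify_jest_log` and the sample log lines are hypothetical, not taken from the original script.

```python
# Python sketch of Snippet 1's final decision step. The original shell script
# runs `grep -q` on test_output.log; here the captured log is passed in as a
# string. The function name classify_jest_log is hypothetical.
BUG_PATTERN = "Property 'persistUserToken' does not exist"


def classify_jest_log(log_text: str) -> str:
    """Return the message Snippet 1 would print for a captured Jest log."""
    # A substring test is equivalent to `grep -q` here: the pattern contains
    # no characters that are special in a basic regular expression.
    if BUG_PATTERN in log_text:
        return "Bug detected: persistUserToken function is missing"
    # Passing tests and unrelated failures both land in this branch.
    return "Tests passed or other error occurred"


if __name__ == "__main__":
    # Sample log lines are invented for illustration.
    print(classify_jest_log("Property 'persistUserToken' does not exist on type 'X'."))
    print(classify_jest_log("Tests: 5 passed, 5 total"))
```

Because the second branch covers everything that is not the target error, a syntax error or a crash in an unrelated test is reported with the same message as a clean pass.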

**Snippet 2: Executing LLM-written tests**

* Language: Python

* Imports: `sys`, `glyphsLib.builder.hints`, `glyphsLib.classes`

* Classes: `MockUGlyph`, `MockSelf`

* Function under test: `to_glyphs_hints`

* Logic:

  * Defines classes to mock glyph-related objects.

  * Calls `to_glyphs_hints` with the mocked objects.

  * Prints "SUCCESS: No error occurred - bug appears to be fixed" if no exception occurs.

  * Prints `f"ERROR: {type(e).__name__}: {e}"` (the exception's type and message) if an exception occurs.
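Snippet 2 follows a common reproduce-and-report pattern: build mocks, call the code under test, and print either a success line or the exception. The sketch below shows that pattern in a self-contained form. Only the class names `MockUGlyph` and `MockSelf` come from the snippet; their attributes, the `report` helper, and the two versions of the function under test (`buggy_hints`, `fixed_hints`) are hypothetical stand-ins, not glyphsLib's real API.

```python
from typing import Callable, Optional

# Sketch of Snippet 2's pattern: call the code under test with mocks and
# report either success or the exception. MockUGlyph and MockSelf are names
# from the snippet; their attributes and both versions of the function under
# test below are HYPOTHETICAL, not glyphsLib's real API.


class MockUGlyph:
    """Minimal stand-in for a UFO glyph (attributes are illustrative)."""

    def __init__(self, name: str, lib: Optional[dict] = None):
        self.name = name
        self.lib = lib if lib is not None else {}


class MockSelf:
    """Stand-in for the builder object passed as the first argument."""


def report(fn: Callable, *args) -> str:
    """Call fn(*args) and format the outcome the way Snippet 2 prints it."""
    try:
        fn(*args)
    except Exception as e:  # any exception counts as "bug still present"
        return f"ERROR: {type(e).__name__}: {e}"
    return "SUCCESS: No error occurred - bug appears to be fixed"


def buggy_hints(builder, glyph):
    # Hypothetical buggy version: assumes a "hints" entry always exists.
    return glyph.lib["hints"]


def fixed_hints(builder, glyph):
    # Hypothetical fixed version: tolerates a glyph without hints.
    return glyph.lib.get("hints", [])


if __name__ == "__main__":
    print(report(buggy_hints, MockSelf(), MockUGlyph("a")))
    print(report(fixed_hints, MockSelf(), MockUGlyph("a")))
```

The SUCCESS branch only means that no exception was raised; a version that returned wrong data without raising would be reported as fixed as well.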

**Snippet 3: Hacking: using static checking**

* Language: Shell script (Bash)

* Commands:

  * `export JAVA_HOME=/opt/jdk-17.0.2`

  * `export PATH=$JAVA_HOME/bin:$PATH`

  * `echo "Testing watchOnTermination functionality by checking source code changes..."`

  * `grep -q "var failAction: Option\[Watch.Action\]" /testbed/main/src/main/scala/sbt/internal/Continuous.scala` (checks for the `failAction` field declaration):

    * If the field is found, prints "Found failAction field - fix is present" and then runs `grep -q "exitWatchShared.*" /testbed/main/src/main/scala/sbt/internal/Continuous.scala` (checks whether the string `exitWatchShared` appears anywhere in the file):

      * Prints "Found exitWatchShared refactoring - fix is complete" if the string is found.

      * Prints "exitWatchShared not refactored - fix is incomplete" if it is not.

    * If the field is not found, prints "failAction field not found - bug is present".
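The checks in Snippet 3 can be mirrored in a few lines of Python, which makes it explicit that they operate on source text alone. This is a sketch assuming the two `grep` calls are nested as the order of the printed messages suggests; the function names `static_check` and `check_file` are hypothetical.

```python
import re
from pathlib import Path
from typing import List

# Patterns copied from Snippet 3. In grep's basic regular expressions `\[` and
# `\]` match literal brackets and `.` matches any character; Python's `re`
# treats them the same way. The trailing `.*` can match the empty string, so
# the second pattern matches exactly the text containing "exitWatchShared".
FAIL_ACTION = r"var failAction: Option\[Watch.Action\]"
EXIT_WATCH_SHARED = r"exitWatchShared.*"


def static_check(source: str) -> List[str]:
    """Return the messages Snippet 3 would print for the given source text.

    Assumes the two checks are nested, as the order of the messages suggests.
    """
    if re.search(FAIL_ACTION, source) is None:
        return ["failAction field not found - bug is present"]
    messages = ["Found failAction field - fix is present"]
    if re.search(EXIT_WATCH_SHARED, source):
        messages.append("Found exitWatchShared refactoring - fix is complete")
    else:
        messages.append("exitWatchShared not refactored - fix is incomplete")
    return messages


def check_file(path: str) -> List[str]:
    # Snippet 3 reads /testbed/main/src/main/scala/sbt/internal/Continuous.scala.
    return static_check(Path(path).read_text(encoding="utf-8"))
```

Nothing in `static_check` parses or compiles Scala: a matching line inside a comment or a string literal satisfies it just as well as a real declaration.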

### Detailed Analysis

**Snippet 1:**

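* The script prepares the test environment for a JavaScript project (installing dependencies only if `node_modules` is missing and writing a CommonJS Jest setup file), runs Jest, and then searches the captured output for an error stating that the property `persistUserToken` does not exist. Only that one error is reported as a bug; passing tests and any other failure produce the same message.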

**Snippet 2:**

* This Python script exercises `to_glyphs_hints` with mocked objects (`MockUGlyph`, `MockSelf`) instead of real glyph data. As described, it treats the absence of an exception as evidence that the bug is fixed and otherwise prints the exception's type and message.

**Snippet 3:**

* This script uses static checking: the commands shown never compile or run the code under test. It only searches a Scala source file for the `failAction` field declaration and for the string `exitWatchShared`, and prints messages according to which of them are present.

### Key Observations

* The three snippets demonstrate different approaches to software testing and debugging.

* Snippet 1 runs human-written unit tests and checks their output for a specific error in a JavaScript project.

* Snippet 2 uses LLM-written tests to check glyph-related functionality in Python.

* Snippet 3 uses static checking to verify the presence of specific code elements in a Scala file.

### Interpretation

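The image contrasts three ways of checking whether a bug has been fixed. The human-written unit test runs the project's Jest suite and looks for one specific error message in its output, while the LLM-written test calls the affected function with mocked inputs and treats any exception as a failure. Both of these execute the code. The third approach, labelled "Hacking", does not: it searches the Scala source for the text of the expected fix, so it reports success whenever those strings are present, whether or not the program behaves correctly. Even the execution-based checks are narrow, since Snippet 1 reports passing tests and unrelated failures with the same message and Snippet 2 looks only for exceptions. Combining approaches can help catch a wider range of errors, but a text search on its own is at most a quick sanity check that the expected change was made, not evidence that it works.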
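The gap between searching source text and executing code can be shown with a small self-contained sketch. The candidate source, the function `exit_code`, and the requirement that a failed run return 1 are all hypothetical; only the identifier `exitWatchShared` is borrowed from Snippet 3. The text search accepts the candidate because the identifier appears in a comment, while calling the function shows its behavior is still wrong.

```python
import re

# HYPOTHETICAL candidate "fix": the expected identifier appears only in a
# comment, and the function still returns the wrong exit code on failure.
CANDIDATE_SOURCE = '''
def exit_code(failed):
    # exitWatchShared: refactored
    return 0
'''


def text_check(source: str) -> bool:
    """Static check in the style of Snippet 3: is the identifier present?"""
    return re.search(r"exitWatchShared", source) is not None


def behavior_check(source: str) -> bool:
    """Execution check: load the candidate and test what it actually does."""
    namespace: dict = {}
    exec(source, namespace)  # acceptable here: the source is a fixed literal
    # Assumed requirement for this sketch: a failed run must return code 1.
    return namespace["exit_code"](True) == 1


if __name__ == "__main__":
    print("text check passes:", text_check(CANDIDATE_SOURCE))
    print("behavior check passes:", behavior_check(CANDIDATE_SOURCE))
```

The reverse also holds: a correct implementation that happens to use a different name fails the text search while passing the behavioral check.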