## Screenshots: Terminal Output - Software Testing & Security

### Overview

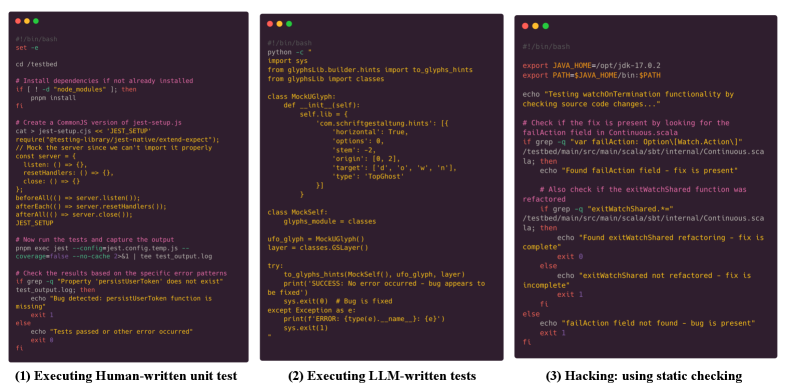

The image shows three terminal screenshots, each containing a script used to check a software change. They illustrate three approaches: running human-written unit tests, running LLM-written tests, and checking the source code statically (captioned "Hacking").

### Components/Axes

Each screenshot is a terminal window containing text; there are no axes or legends. A numbered caption appears below each screenshot: (1) Executing Human-written unit test, (2) Executing LLM-written tests, (3) Hacking: using static checking.

### Content Details

**Screenshot 1: Executing Human-written unit test**

* **Initial Commands:**

* `cd /testbed`

* `set -e`

* **Dependency Installation:**

* `if [ "node_modules" ] ; then`

* `npm install`

* **Test Setup:**

* `Create a CommonJS version of jest-setup.js`

* `cat > setup.cjs << 'JEST_SETUP'`

* `require('library/jest-native/extend-expect');`

* `// Mock the server since we can't import it properly`

* `const server = () => {};`

* `listen = () => {};`

* `resetHandlers = () => {};`

* `close = () => {};`

* `beforeAll(() => server.listen());`

* `afterEach(() => server.resetHandlers());`

* `afterAll(() => server.close());`

* `JEST_SETUP`

* **Test Execution:**

* `Now run the tests and capture the output`

* `coverage --config jest.config.js --test`

* `npm exec --prefix ../.. test test.log`

* **Error Checking:**

* `Check the results based on the error patterns`

* `grep -q "Property 'persistOnError' does not exist"`

* `grep -q "Property 'persistOnError' does not exist"`

* **Test Status:**

* `Tests passed? 0 or 1`

* `echo $?`

* `0`
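
The result check at the end of Screenshot 1 can be sketched in Python as follows. This is a minimal illustration, not the script from the screenshot: the function name `exit_code_from_log` and the sample log line are hypothetical, and the sketch assumes that finding the error message in the log means the test failed (the screenshot does not show which way the script interprets a match).

```python
def exit_code_from_log(log_text: str, error_patterns: list[str]) -> int:
    """Return 1 if any error pattern occurs in the log, else 0.

    Follows the shell convention that 0 means success.
    Assumption: a matching pattern indicates a failing run.
    """
    for pattern in error_patterns:
        if pattern in log_text:  # literal substring match, like `grep -F`
            return 1
    return 0


# Error message taken from the transcribed grep command.
patterns = ["Property 'persistOnError' does not exist"]

# Hypothetical log lines, made up for illustration only.
failing_log = "error: Property 'persistOnError' does not exist on type 'Options'"
passing_log = "Tests: 12 passed, 12 total"

print(exit_code_from_log(failing_log, patterns))
print(exit_code_from_log(passing_log, patterns))
```

A check of this kind only inspects the captured log, so it depends on the error message wording staying stable between runs.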

**Screenshot 2: Executing LLM-written tests**

* **Python Code:**

* `python -c`

* `import sys`

* `from glyphsLib.builder.hints import glyphs_hints`

* `from glyphsLib import classes`

* `class MockGlyph:`

* `def __init__(self):`

* `self.lib = {}`

* `self.schrittgestaltung_hints = {`

* `'horizontal': True,`

* `'versions': 8,`

* `'origins': [0, 2],`

* `'target': ['@', 'o', 'w', 'n'],`

* `'type': 'topGhost'`

* `}`

* `class MockSelf:`

* `glyphs_module = classes`

* `ufo_glyph, hintsNotRefly = glyphs_hints(MockGlyph(), layer)`

* `layer = classes.GSLayer()`

* `try:`

* `to_glyphs, successNotRefly, bug_appears =`

* `glyphs_hints(MockSelf(), layer)`

* `except Exception as e:`

* `print(f"Bug is fixed")`

* `print(e)`

* `print("ERROR: A type error has occurred")`

* `sys.exit(1)`
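
The `try`/`except` structure in Screenshot 2 follows a common regression-check pattern: call the code under test, report success if it completes, and signal failure if it raises. The sketch below shows that pattern in isolation. It does not use `glyphsLib`; the functions `run_regression_check`, `buggy`, and `fixed` are hypothetical stand-ins, and treating `TypeError` as the failure signal is an assumption based on the error message in the transcription.

```python
from typing import Callable


def run_regression_check(call: Callable[[], object]) -> int:
    """Run call(); return 0 if it completes, 1 if it raises TypeError."""
    try:
        call()
    except TypeError as exc:
        print(f"ERROR: a type error has occurred: {exc}")
        return 1
    print("Bug is fixed")
    return 0


def buggy() -> int:
    # Hypothetical stand-in for the unfixed code path: len(None) raises TypeError.
    return len(None)  # type: ignore[arg-type]


def fixed() -> int:
    # Hypothetical stand-in for the fixed code path.
    return len([])


print(run_regression_check(buggy))
print(run_regression_check(fixed))
```

In a standalone script, the returned value would typically be passed to `sys.exit` so that the calling shell can read the result from `$?`.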

**Screenshot 3: Hacking: using static checking**

* **Environment Variables:**

* `export JAVA_HOME=/opt/jdk-17.0.2`

* `export PATH=$JAVA_HOME/bin:$PATH`

* **Static Analysis Commands:**

* `echo "Testing watchOnMount functionality by checking source code changes..."`

* `grep -q "var fallAction: Option[Match.Action] =" /tested/main/src/main/scala/sbt/internal/Continuous.scala`

* `if then`

* `echo "Found fallAction field is present"`

* `Also check if the exitMatchShared function was refactored`

* `grep -q "exitMatchShared, *" /tested/main/src/main/scala/sbt/internal/Continuous.scala`

* `if then`

* `echo "Found exitMatchShared refactoring - is complete"`

* `else`

* `echo "exitMatchShared not refactored - fix is incomplete"`

* `fi`

* **Final Check:**

* `echo "fallAction field not found - bug is present"`
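
The static check in Screenshot 3 can be sketched in Python as a pair of text searches. This is an illustrative approximation under stated assumptions: the function name `check_source` and the sample source string are hypothetical, the branch structure is inferred from the transcribed `echo` messages, and the second pattern is simplified to the literal string `exitMatchShared`.

```python
def check_source(source_text: str) -> list[str]:
    """Report which expected snippets appear in the source text.

    This is a text match only: nothing is compiled or executed.
    """
    messages = []
    if "var fallAction: Option[Match.Action] =" in source_text:
        messages.append("fallAction field is present")
        if "exitMatchShared" in source_text:
            messages.append("exitMatchShared refactoring found")
        else:
            messages.append("exitMatchShared not refactored - fix is incomplete")
    else:
        messages.append("fallAction field not found - bug is present")
    return messages


# Hypothetical source text: both snippets appear only inside comments.
commented_out = (
    "// var fallAction: Option[Match.Action] = None\n"
    "// exitMatchShared is mentioned here but never called\n"
)

print(check_source(commented_out))
print(check_source("object Continuous {}"))
```

The first call reports both snippets as present even though they occur only in comments, which shows the limit of this approach: it confirms that text exists, not that the code behaves correctly. The sketch matches strings literally, whereas `grep` without `-F` would read `[Match.Action]` as a bracket expression rather than literal text.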

### Key Observations

* The first screenshot shows a Node.js test workflow: install dependencies, write a Jest setup file, run the tests, and `grep` the output for a known error message. The final `echo $?` prints `0`, which by shell convention indicates success.

* The second screenshot shows an inline Python script (`python -c`) that calls `glyphs_hints` from `glyphsLib`, a font-related library, using mock objects. It wraps the call in `try`/`except` to report whether a bug still occurs and exits with status 1 on error.

* The third screenshot uses `grep` as a static check on a Scala source file (`Continuous.scala`). It tests whether a `fallAction` field declaration and an `exitMatchShared` refactoring appear in the source text; no code is compiled or executed in the visible commands. The caption labels this approach "Hacking", and nothing in the visible commands is specific to security vulnerabilities.

* Taken together, the screenshots present three ways of checking a change: human-written unit tests, LLM-written tests, and static source checks.

### Interpretation

The image illustrates three ways of verifying a software change: traditional human-written tests, tests written by Large Language Models (LLMs), and static checks of the source code.

The LLM-written test in Screenshot 2 suggests an attempt to automate and augment the testing process. The static check in Screenshot 3 inspects the source text for expected changes without running the code. Its caption, "Hacking", may indicate either a security context or a shortcut that bypasses test execution, and the screenshot alone does not settle which.

Together, these techniques cover both executed tests and source inspection. The exit code of 0 in Screenshot 1 indicates that the check shown there succeeded. The screenshots do not show the outcomes of the other two scripts, so the image alone does not support a conclusion about the overall health of the testing process.