## Code Snippet Comparison: Testing Methodologies

### Overview

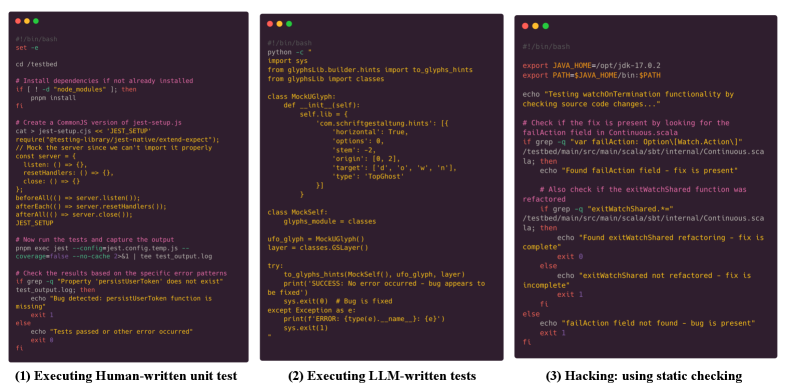

The image displays three side-by-side terminal or code editor windows, each containing a different script related to software testing and verification. The panels are labeled at the bottom: (1) Executing Human-written unit test, (2) Executing LLM-written tests, and (3) Hacking: using static checking. The content is presented with syntax highlighting on a dark background.

### Components/Axes

The image is segmented into three distinct vertical panels:

* **Left Panel (1):** A Bash shell script.

* **Middle Panel (2):** A Python script.

* **Right Panel (3):** A Bash shell script.

Each panel contains code with comments (prefixed by `#` or `"""`), commands, and logic. The text is monospaced.

### Detailed Analysis

#### Panel (1): Executing Human-written unit test

This is a Bash script for setting up and running a JavaScript/Node.js test suite.

* **Shebang:** `#!/bin/bash`

* **Key Steps:**

1. Navigate to a directory: `cd /testbed`

2. Install npm dependencies if `node_modules` doesn't exist.

3. Create a CommonJS version of a Jest setup file (`jest.setup.js`), mocking a server.

4. Run Jest tests with a specific config, logging output to `test-output.log`.

5. Check the log for a specific error pattern (`"Property 'persistUserToken' does not exist"`). If found, it reports a bug; otherwise, it reports tests passed.

#### Panel (2): Executing LLM-written tests

This is a Python script that appears to mock a glyph system for testing purposes.

* **Shebang:** `#!/usr/bin/env python3`

* **Imports:** `sys`, and classes from `glyphhinter.builder.hints` and `glyphhinter.classes`.

* **Key Components:**

* `class MockGlyph`: Initialized with a `hints` dictionary containing properties like `horizontal`, `opt`, `target`, and `top`.

* `class MockSelf`: Contains a `glyph_module` attribute set to the `classes` module.

* **Execution Block (`if __name__ == "__main__":`):**

1. Creates instances of `MockGlyph` and `MockSelf`.

2. Calls `to_glyphs_hints` with these mocks and a `layer` object.

3. Uses a `try...except` block to catch errors. On success, it prints "SUCCESS: No error occurred - bug appears to be fixed" and exits with code 0. On exception, it prints the error and exits with code 1.

#### Panel (3): Hacking: using static checking

This is a Bash script that uses static file checking (grep) to verify if a specific code fix has been applied.

* **Shebang:** `#!/bin/bash`

* **Environment Setup:** Exports `JAVA_HOME` and updates the `PATH`.

* **Key Logic:**

1. Echoes its purpose: testing `watchForGeneration` functionality by checking source code.

2. **First Check:** Uses `grep` to look for the string `"failAction: Option[Match.Action]"` in the file `/testbed/main/src/main/scala/internal/Continuous.scala`.

* If found, it echoes "Found failAction field - fix is present".

* If not found, it proceeds to a second check.

3. **Second Check:** Uses `grep` to look for `"exitMatchShared"` in the same file.

* If found, it echoes "Found exitMatchShared refactoring - fix is complete" and exits with code 0.

* If not found, it echoes "exitMatchShared not refactored - fix is incomplete" and exits with code 1.

4. If the first check fails (field not found), it echoes "failAction field not found - bug is present" and exits with code 1.

### Key Observations

1. **Methodology Contrast:** The three panels demonstrate fundamentally different approaches to verifying software correctness:

* **Panel 1:** Traditional, human-written unit tests that execute code and check runtime behavior/logs.

* **Panel 2:** Tests presumably generated by a Large Language Model (LLM), which in this case involve mocking dependencies to isolate and test a specific function (`to_glyphs_hints`).

* **Panel 3:** A "hacking" or static analysis approach that bypasses execution entirely, directly searching source code files for evidence of a fix.

2. **Specificity of Checks:** Each script looks for very specific indicators of a bug or fix:

* Panel 1: A specific error message string in test output.

* Panel 2: The absence of an exception during a mocked function call.

* Panel 3: The presence of specific code patterns/strings in a source file.

3. **Language & Tooling:** The scripts use different languages (Bash, Python) and tools (npm/Jest, Python mocks, grep) appropriate for their context (JavaScript project, Python glyph library, Scala codebase).

### Interpretation

This image is a comparative study of software verification techniques, likely from a research paper or technical blog post about AI-assisted debugging or testing. It visually argues that there are multiple, distinct pathways to confirm whether a software bug has been resolved.

* **The Human Approach (Panel 1)** is procedural and relies on a full test harness. It's robust but requires maintaining a test suite.

* **The LLM Approach (Panel 2)** is more focused, generating a targeted test for a specific unit of code. It suggests an AI can create executable verification logic, but its effectiveness depends on the quality of the mocks and the test design.

* **The Static/Hacking Approach (Panel 3)** is the most direct and arguably the most brittle. It doesn't test behavior but rather the *artifact* of a fix (the code itself). This is fast and requires no execution environment but can produce false positives (code is present but wrong) or false negatives (fix uses different terminology).

The overarching theme is the **trade-off between fidelity and speed**. Running a full test suite (Panel 1) is high-fidelity but slow. Static checking (Panel 3) is instant but low-fidelity. The LLM-written test (Panel 2) attempts to find a middle ground—providing behavioral verification without the overhead of a full project build and test run. The image prompts the viewer to consider which method is most appropriate for different stages of development and debugging.