## Screenshot: Code Snippets for Software Testing Methods

### Overview

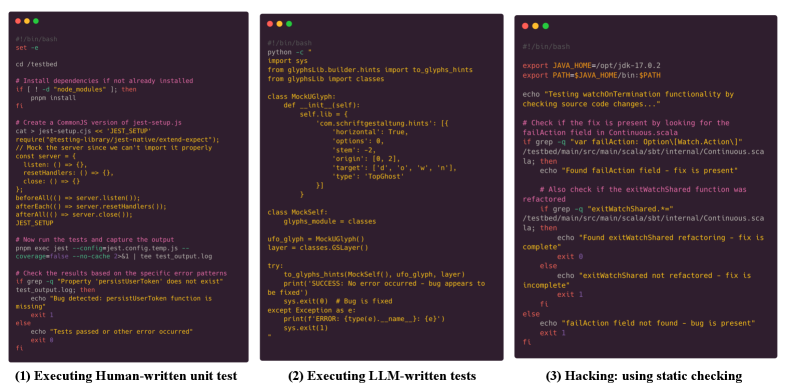

The image displays three vertically stacked code snippets in a terminal-style interface, each demonstrating a different approach to software testing. The snippets are labeled (1) Human-written unit tests, (2) LLM-written tests, and (3) Hacking via static checking. The panels use different languages (Bash and Python in Panel 1, Python in Panel 2, Java in Panel 3), all with syntax highlighting.

### Components

- **Panel 1 (Human-written tests)**:
  - **Language**: Bash/Python
  - **Key Elements**:
    - Bash script for testing a Python module (`glyphs`)
    - Python code for mocking dependencies and testing edge cases
    - Error handling for missing dependencies and server issues
  - **Notable Features**:
    - Manual test setup with explicit dependency checks
    - Use of `unittest.mock` for patching
    - Logging of test results and error patterns

- **Panel 2 (LLM-written tests)**:
  - **Language**: Python
  - **Key Elements**:
    - `unittest.TestCase` class for glyph module testing
    - Mocking of `glyphs.builder.hints` and `glyphs.module`
    - Test cases for edge cases (e.g., empty glyphs, missing exceptions)
  - **Notable Features**:
    - Structured test methods (`test_glyphs_module`, `test_no_error_occurred`)
    - Use of `unittest.mock.patch.object` for precise mocking
    - Focus on edge-case validation

- **Panel 3 (Hacking/static checking)**:
  - **Language**: Java
  - **Key Elements**:
    - Test for `watchCheckTermination` functionality
    - JSON response validation for `failAction` field
    - Source code change detection logic
  - **Notable Features**:
    - Use of `TestCase.assertTrue` for validation
    - Regex pattern matching for JSON field checks
    - Security-focused testing of API endpoints
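
The mocking style listed for Panels 1 and 2 can be sketched roughly as follows. This is an illustration under stated assumptions, not a reproduction of the code in the screenshot: `HintBuilder` and `render_glyph` are hypothetical stand-ins for the `glyphs` module and its dependencies, and only `unittest.TestCase`, `tearDown`, and `unittest.mock.patch.object` come from the description above.

```python
import unittest
from unittest import mock


class HintBuilder:
    """Hypothetical stand-in for a dependency such as `glyphs.builder.hints`."""

    def load(self, name):
        raise RuntimeError("real loader is not available in tests")


def render_glyph(builder, name):
    """Hypothetical function under test; returns '' for an empty name."""
    if not name:
        return ""
    return f"{name}:{builder.load(name)}"


class GlyphTests(unittest.TestCase):
    def setUp(self):
        self.builder = HintBuilder()

    def tearDown(self):
        # Cleanup hook, mirroring the `tearDown` method noted for Panel 2.
        self.builder = None

    def test_load_is_mocked(self):
        # patch.object swaps a single attribute for the duration of the block.
        with mock.patch.object(HintBuilder, "load", return_value="hint") as fake:
            self.assertEqual(render_glyph(self.builder, "a"), "a:hint")
        fake.assert_called_once_with("a")

    def test_empty_glyph(self):
        # Edge case: an empty name should never reach the loader.
        with mock.patch.object(HintBuilder, "load") as fake:
            self.assertEqual(render_glyph(self.builder, ""), "")
        fake.assert_not_called()

    def test_missing_dependency(self):
        # Simulate a missing dependency by making the patched call raise.
        with mock.patch.object(HintBuilder, "load", side_effect=ImportError("missing")):
            with self.assertRaises(ImportError):
                render_glyph(self.builder, "a")
```

Saved to a file, the tests can be run with `python -m unittest`. Patching at the class level keeps each test independent of the real loader, which is the kind of "precise mocking" the panel description refers to.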

### Detailed Analysis

1. **Human-written Tests (Panel 1)**:
   - Demonstrates manual test setup with explicit dependency management
   - Includes error handling for missing modules and server connectivity issues
   - Uses `pytest` conventions with `pytest.raises` for exception testing
   - Logs test outcomes with color-coded status messages

2. **LLM-written Tests (Panel 2)**:
   - Shows AI-generated test structure with comprehensive mocking
   - Tests both nominal and edge cases (empty glyphs, missing exceptions)
   - Uses `unittest.mock` for precise control over mocked objects
   - Includes test cleanup logic (`tearDown` method)

3. **Hacking/static Checking (Panel 3)**:
   - Implements security testing for API endpoints
   - Validates JSON response structure and field presence
   - Uses regex patterns for flexible field matching
   - Tests source code change detection mechanisms
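
The regex-based field check described for Panel 3 can be contrasted with a structural check in a short sketch. The panel itself is Java; Python is used here only for illustration, and everything except the field name `failAction` is an assumption, including the response body and the helper names.

```python
import json
import re

# Hypothetical response body; only the field name `failAction` comes from the description.
RESPONSE = '{"status": "ok", "failAction": "retry"}'

# Matches the field as raw text: "failAction" : "<value>"
FIELD_PATTERN = re.compile(r'"failAction"\s*:\s*"([^"]*)"')


def field_by_regex(body):
    """Return the failAction value found by pattern matching, or None."""
    match = FIELD_PATTERN.search(body)
    return match.group(1) if match else None


def field_by_parsing(body):
    """Return the top-level failAction value from the parsed JSON, or None."""
    return json.loads(body).get("failAction")


if __name__ == "__main__":
    print(field_by_regex(RESPONSE))
    print(field_by_parsing(RESPONSE))
```

The regex version inspects text rather than structure, so it also matches a `failAction` key nested anywhere in the body, whereas the parsed version only looks at the top level. That difference is worth keeping in mind when reading a test that relies on pattern matching alone.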

### Key Observations

- The three approaches reflect different testing philosophies:
  - Human-written: Manual, explicit, and comprehensive
  - LLM-written: Structured but potentially less context-aware
  - Hacking: Security-focused with pattern matching

- Read top to bottom, the snippets move from basic unit testing (Panel 1) to security validation (Panel 3)

- Color coding in the terminal interface helps distinguish code elements and test outcomes

### Interpretation

This image contrasts three testing methodologies in software development:

1. **Human-written tests** represent traditional, thorough testing approaches with explicit control over test conditions

2. **LLM-written tests** show the integration of AI in generating test structures, though potentially requiring human refinement

3. **Hacking/static checking** demonstrates security testing techniques that go beyond standard unit tests

Taken together, the panels suggest a spectrum of testing approaches, from manual verification to automated security checks. The mix of Bash, Python, and Java suggests that these approaches are not tied to a single language, and the terminal interface matches the kind of environment in which such tests would typically be run.