## Line Chart with Shaded Min/Max Bands: Violation rate (Mean Min/Max)

### Overview

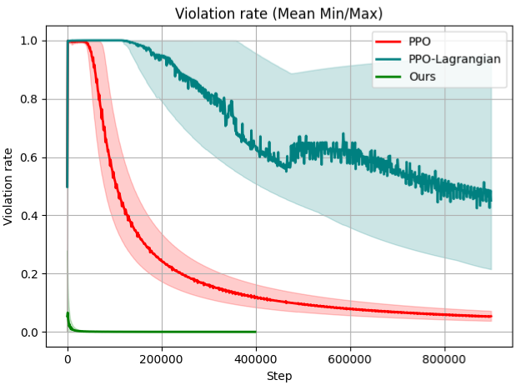

The image is a line chart comparing three methods over the course of training. The performance metric, "Violation rate," is plotted against the training "Step." Each method is drawn as a solid line with a shaded region around it. The chart title ("Mean Min/Max") indicates that the line is the mean and the shaded region spans the minimum to maximum value, most likely across independent training runs. The chart shows how each method's violation rate evolves during training.
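The "Mean Min/Max" aggregation in the title is a common way to summarize several training runs: at each logged step, the line is the mean across runs and the band spans the smallest and largest value. A minimal Python sketch of that aggregation follows. It assumes every run is logged at the same steps, and the `Band` class, the `mean_min_max` function, and the run values are hypothetical illustrations, not data read from this chart.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Band:
    """Summary of several runs at one logged step (hypothetical helper)."""
    step: int
    mean: float  # the solid line
    low: float   # minimum across runs: lower edge of the shaded band
    high: float  # maximum across runs: upper edge of the shaded band

    @property
    def width(self) -> float:
        """Height of the shaded region at this step."""
        return self.high - self.low


def mean_min_max(steps: list[int], runs: list[list[float]]) -> list[Band]:
    """Collapse per-run curves into mean/min/max bands.

    Assumes runs[r][i] is the value of run r at steps[i], i.e. every run
    was logged at exactly the same steps.
    """
    if not runs:
        raise ValueError("need at least one run")
    if any(len(run) != len(steps) for run in runs):
        raise ValueError("every run must have one value per logged step")
    bands = []
    for i, step in enumerate(steps):
        values = [run[i] for run in runs]
        bands.append(Band(step, statistics.fmean(values), min(values), max(values)))
    return bands


# Hypothetical violation rates for three seeds (illustrative only).
steps = [0, 100_000, 200_000]
runs = [
    [1.0, 0.7, 0.3],
    [1.0, 0.5, 0.2],
    [1.0, 0.6, 0.4],
]
for band in mean_min_max(steps, runs):
    print(f"step={band.step:>7,}  mean={band.mean:.2f}  "
          f"min={band.low:.2f}  max={band.high:.2f}  width={band.width:.2f}")
```

Plotting `mean` as the line and filling between `low` and `high` (for example with Matplotlib's `fill_between`) would reproduce the style of this chart; `width` is the height of the shaded region at each step.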

### Components/Axes

* **Chart Title:** "Violation rate (Mean Min/Max)"

* **Y-Axis:**
  * **Label:** "Violation rate"
  * **Scale:** Linear scale from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:**
  * **Label:** "Step"
  * **Scale:** Linear scale from 0 to approximately 900,000, with major tick marks at 0, 200,000, 400,000, 600,000, and 800,000.

* **Legend:**
  * **Position:** Top-right corner of the chart area.
  * **Entries:**
    1. **PPO** - Represented by a solid red line and a light red shaded area.
    2. **PPO-Lagrangian** - Represented by a solid teal (dark cyan) line and a light teal shaded area.
    3. **Ours** - Represented by a solid dark green line and a very narrow, almost invisible, light green shaded area.
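The y-axis runs from 0 to 1, consistent with a violation rate defined as a fraction. The chart does not say how the rate is computed. One common definition in safe RL, assumed here, is the fraction of evaluation episodes whose total constraint cost exceeds a cost limit; the function name `violation_rate`, the `cost_limit` parameter, and the sample costs are hypothetical.

```python
def violation_rate(episode_costs: list[float], cost_limit: float) -> float:
    """Fraction of episodes whose total constraint cost exceeds cost_limit.

    Assumption: an episode counts as a violation when its summed cost is
    strictly greater than the limit. The result always lies in [0, 1],
    matching the y-axis range of the chart.
    """
    if not episode_costs:
        raise ValueError("need at least one episode")
    violations = sum(1 for cost in episode_costs if cost > cost_limit)
    return violations / len(episode_costs)


# Hypothetical batch of 4 evaluation episodes with a cost limit of 25.
print(violation_rate([30.0, 10.0, 26.0, 5.0], cost_limit=25.0))  # 0.5
```

A per-timestep definition (the fraction of environment steps that incur any cost) would be an equally plausible reading of the axis label; only the counting rule would change.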

### Detailed Analysis

**1. PPO (Red Line & Shading):**

* **Trend:** The line starts at a violation rate of approximately 1.0 at step 0. It then follows a steep, smooth, downward curve, decaying rapidly before gradually flattening out.

* **Data Points (Approximate Mean Values):**
  * Step 0: ~1.0
  * Step 100,000: ~0.6
  * Step 200,000: ~0.3
  * Step 400,000: ~0.1
  * Step 800,000: ~0.05

* **Variability (Shaded Region):** The red shaded area is widest in the early-to-mid training phase (approx. steps 50,000 to 300,000), indicating the largest min/max spread between runs during that period. It narrows significantly as training progresses.

**2. PPO-Lagrangian (Teal Line & Shading):**

* **Trend:** The line also starts near 1.0 at step 0. It remains high and relatively flat for the first ~100,000 steps before beginning a noisier, more gradual decline. The line exhibits significant high-frequency fluctuations (jaggedness) throughout its descent.

* **Data Points (Approximate Mean Values):**
  * Step 0: ~1.0
  * Step 200,000: ~0.9
  * Step 400,000: ~0.65
  * Step 600,000: ~0.55
  * Step 800,000: ~0.45

* **Variability (Shaded Region):** The teal shaded area is very broad, especially from step 200,000 onward, indicating a wide min/max range (high run-to-run variability) in this method's violation rate. The upper bound of the shading remains near 1.0 for a large portion of the chart.

**3. Ours (Green Line & Shading):**

* **Trend:** The line starts at a very low violation rate (approximately 0.05) at step 0, drops almost vertically to near 0.0 within the first few thousand steps, and stays essentially flat at that level until the line ends at roughly 400,000 steps.

* **Data Points (Approximate Mean Values):**
  * Step 0: ~0.05
  * Step 10,000: ~0.0
  * Step 100,000: ~0.0
  * Step 400,000: ~0.0

* **Variability (Shaded Region):** The green shaded area is extremely narrow, appearing almost as a thick line. This indicates very little spread between runs and consistently near-zero violation rates.

### Key Observations

1. **Performance Hierarchy:** On this metric, the method labeled "Ours" performs far better than the baselines, reaching and maintaining a near-zero violation rate almost immediately. PPO converges smoothly to a low rate, while PPO-Lagrangian converges more slowly and with much more noise and spread.

2. **Convergence Speed:** "Ours" reaches near zero in under 10,000 steps. PPO's mean falls to about 0.3 by 200,000 steps and to about 0.1 by 400,000 steps. PPO-Lagrangian is still descending at 800,000 steps (about 0.45).

3. **Stability/Variance:** "Ours" is extremely stable (low variance). PPO has moderate variance during learning. PPO-Lagrangian exhibits very high variance and instability throughout training.

4. **Initial Conditions:** "Ours" starts at a much lower violation rate (~0.05) compared to the other two methods, which both start at the maximum rate of 1.0.
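The convergence comparisons above can be made a little more concrete by interpolating between the approximate mean values listed in the Detailed Analysis. The sketch below linearly interpolates to estimate the first step at which each mean curve falls to a given threshold. The threshold of 0.5 and the helper name `first_crossing` are illustrative choices, and because the inputs are eyeballed readings, the outputs are rough estimates only.

```python
from typing import Optional

# Approximate mean values read off the chart (from the Detailed Analysis).
MEAN_READINGS: dict[str, list[tuple[int, float]]] = {
    "PPO": [(0, 1.0), (100_000, 0.6), (200_000, 0.3), (400_000, 0.1), (800_000, 0.05)],
    "PPO-Lagrangian": [(0, 1.0), (200_000, 0.9), (400_000, 0.65), (600_000, 0.55), (800_000, 0.45)],
    "Ours": [(0, 0.05), (10_000, 0.0), (100_000, 0.0), (400_000, 0.0)],
}


def first_crossing(points: list[tuple[int, float]], threshold: float) -> Optional[float]:
    """Estimate the first step at which a piecewise-linear curve falls to threshold.

    points must be (step, value) pairs sorted by step. Returns None if the
    curve never reaches the threshold within the readings.
    """
    if points[0][1] <= threshold:
        return float(points[0][0])  # already at or below threshold at the first reading
    for (s0, v0), (s1, v1) in zip(points, points[1:]):
        if v1 <= threshold < v0:
            # Linear interpolation between the two bracketing readings.
            frac = (v0 - threshold) / (v0 - v1)
            return s0 + frac * (s1 - s0)
    return None


for name, points in MEAN_READINGS.items():
    step = first_crossing(points, threshold=0.5)
    label = "not reached" if step is None else f"~{round(step, -3):,.0f} steps"
    print(f"{name}: mean first reaches 0.5 at {label}")
```

With these readings the estimates come out at roughly 133,000 steps for PPO and 700,000 steps for PPO-Lagrangian, while "Ours" starts below the threshold. Given that the inputs are eyeballed, only the rough ordering and magnitude of these numbers are meaningful.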

### Interpretation

This chart likely comes from a research paper in reinforcement learning (RL), specifically dealing with constrained optimization or safe RL, where the goal is to maximize reward while keeping constraint violations below a threshold.
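The setting described above is commonly formalized as a constrained Markov decision process. In the standard notation below, which the source itself does not define, $J_R$ is the expected discounted return, $J_C$ the expected discounted constraint cost, $d$ the cost threshold, and $\gamma$ the discount factor. Lagrangian methods such as PPO-Lagrangian optimize the relaxation on the second line.

$$
\max_{\pi} \; J_R(\pi) \quad \text{subject to} \quad J_C(\pi) \le d,
\qquad
J_R(\pi) = \mathbb{E}_{\tau \sim \pi}\Big[\sum_{t} \gamma^{t} r_t\Big],
\quad
J_C(\pi) = \mathbb{E}_{\tau \sim \pi}\Big[\sum_{t} \gamma^{t} c_t\Big]
$$

$$
\min_{\lambda \ge 0} \; \max_{\pi} \; \mathcal{L}(\pi, \lambda) = J_R(\pi) - \lambda \big(J_C(\pi) - d\big)
$$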

* **What the data suggests:** The proposed method ("Ours") is highly effective at satisfying constraints (minimizing violation rate) from the very start of training and does so with high reliability. This suggests it may incorporate a more effective mechanism for constraint handling or initialization compared to the baselines.

* **Relationship between elements:** Neither baseline combines early constraint satisfaction with stable learning. Standard PPO reduces violations smoothly but starts from a point of complete violation. PPO-Lagrangian, a common method for constrained RL, converges slowly and unstably in this scenario, as evidenced by its noisy mean line and wide min/max band. The "Ours" method appears to achieve both immediate constraint satisfaction and stability.

* **Notable Anomalies:** The extremely wide shaded region for PPO-Lagrangian is a critical finding. It indicates that while its *average* performance improves, individual training runs or timesteps can still experience very high violation rates (near 1.0), which could be unacceptable in safety-critical applications. The fact that "Ours" starts at a non-zero but very low violation rate might indicate a specific design choice in its problem formulation or initialization.
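PPO-Lagrangian is usually described as PPO whose objective subtracts a cost penalty weighted by a Lagrange multiplier λ, with λ adjusted by projected gradient steps on the constraint violation: it grows while the measured cost exceeds the limit and shrinks toward zero once the cost falls below it. The toy sketch below shows only that multiplier update, with the policy update omitted; the function name `update_multiplier`, the cost sequence, the limit, and the learning rate are hypothetical. Because λ responds to the cost only after it has over- or undershot the limit, this kind of update is often reported to oscillate, which may contribute to the jagged PPO-Lagrangian curve (the chart alone cannot confirm this).

```python
def update_multiplier(lam: float, episode_cost: float, cost_limit: float, lr: float) -> float:
    """One projected dual step on the Lagrange multiplier (hypothetical helper).

    lam increases when the observed cost exceeds the limit and decreases
    otherwise; max(0, ...) keeps it non-negative, as an inequality
    constraint requires.
    """
    return max(0.0, lam + lr * (episode_cost - cost_limit))


# Hypothetical per-iteration costs (illustrative only, not from the chart).
costs = [40.0, 35.0, 20.0, 10.0, 30.0]
lam = 0.0
for cost in costs:
    lam = update_multiplier(lam, cost, cost_limit=25.0, lr=0.1)
    print(f"cost={cost:5.1f}  lambda={lam:.2f}")
```

In this trace λ rises to 2.5 while the cost is above the limit and is still 2.0 at the first iteration where the cost has already dropped below it, so the penalty keeps pushing the policy toward lower cost for a while after the constraint is satisfied.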