TECHNICAL ASSET FINGERPRINT

c30342b697eb9c4d3d30b5fd


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Diagram: RALMs Knowledge Category Quadrant and Refusal Examples

### Overview

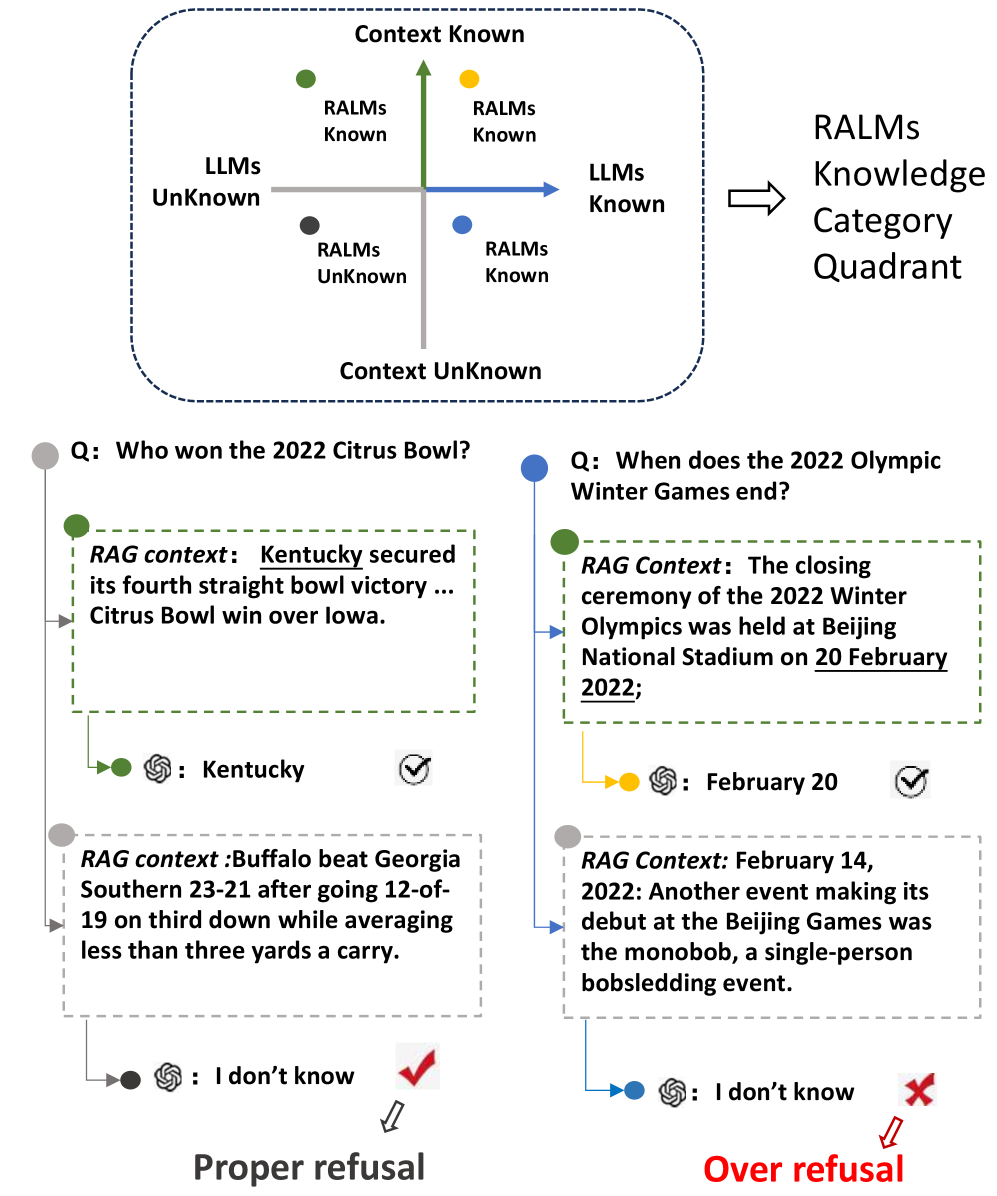

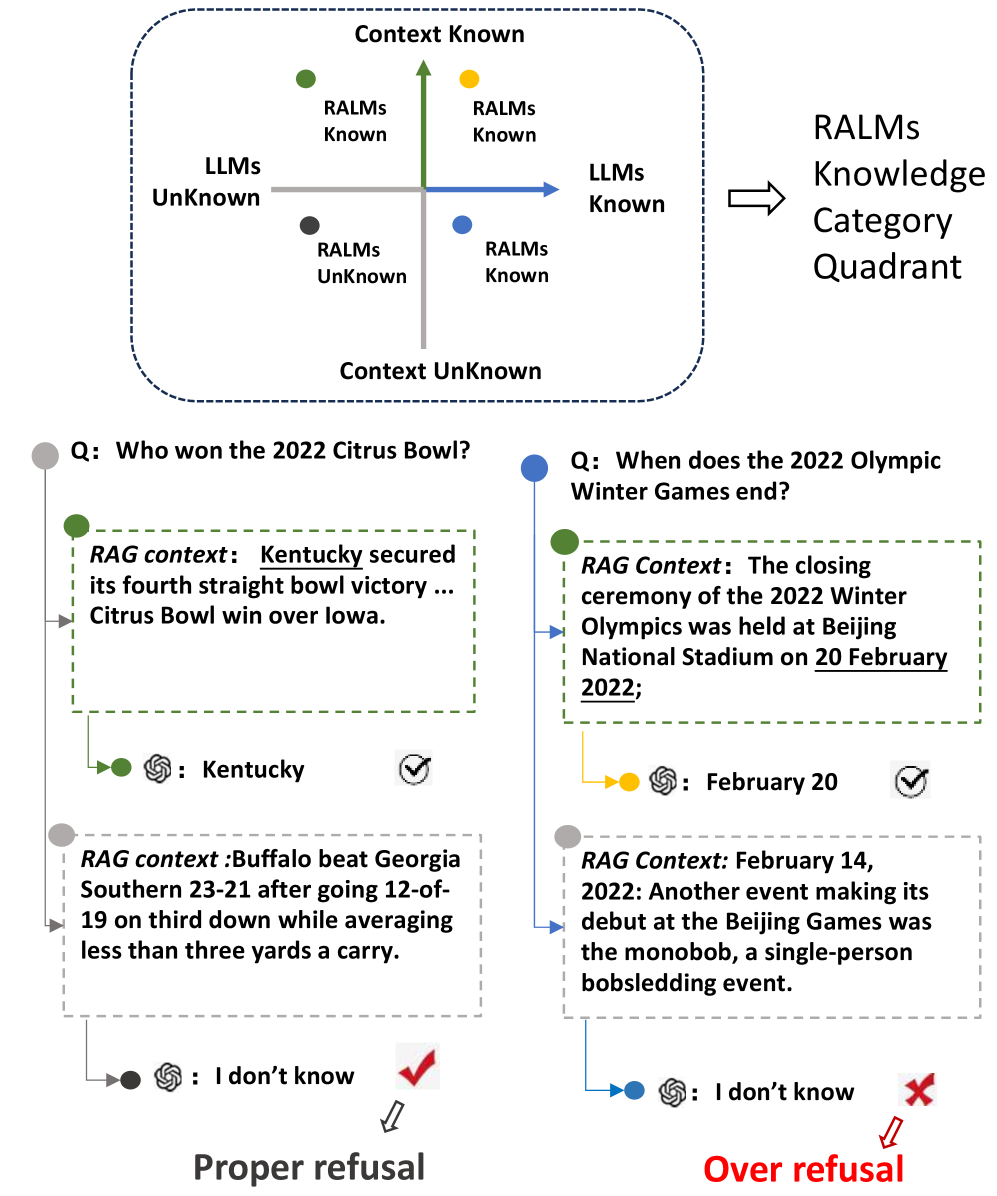

The image presents a quadrant diagram that categorizes knowledge for RALMs (Retrieval-Augmented Language Models) and LLMs (Large Language Models), along with question-answering examples of proper refusal and over refusal.

### Components/Axes

* **Quadrant Diagram:**

* X-axis: LLMs (Unknown to Known)

* Y-axis: Context (Unknown to Known)

* Quadrants:

* Top-left: Context Known, RALMs Known, LLMs Unknown (Green Dot)

* Top-right: Context Known, RALMs Known, LLMs Known (Yellow Dot)

* Bottom-left: Context Unknown, RALMs Unknown, LLMs Unknown (Black Dot)

* Bottom-right: Context Unknown, RALMs Known, LLMs Known (Blue Dot)

* **Refusal Examples:**

* Two question-answer pairs are shown, each with:

* Question (Q:)

* RAG context: (Retrieved context)

* Answer (with a bot icon)

* Checkmark or X mark indicating correctness of the answer

### Detailed Analysis

**1. Quadrant Diagram:**

* The diagram visually represents the state of knowledge for both RALMs and LLMs.

* The top-left quadrant indicates scenarios where the context is known, and RALMs have the necessary information, but LLMs do not.

* The top-right quadrant indicates scenarios where the context is known and both RALMs and LLMs have the necessary information.

* The bottom-left quadrant indicates scenarios where the context is unknown, and neither RALMs nor LLMs have the information.

* The bottom-right quadrant indicates scenarios where the context is unknown, but both RALMs and LLMs have the information.

**2. Refusal Examples:**

* **Example 1 (Proper Refusal):**

* Question: "Who won the 2022 Citrus Bowl?"

* RAG context: "Kentucky secured its fourth straight bowl victory ... Citrus Bowl win over Iowa."

* Correct Answer: Kentucky (Green Dot)

* Alternative RAG context: "Buffalo beat Georgia Southern 23-21 after going 12-of-19 on third down while averaging less than three yards a carry."

* Answer: "I don't know" (Grey Dot)

* Result: Correct refusal (checkmark)

* **Example 2 (Over Refusal):**

* Question: "When does the 2022 Olympic Winter Games end?"

* RAG Context: "The closing ceremony of the 2022 Winter Olympics was held at Beijing National Stadium on 20 February 2022;"

* Correct Answer: "February 20" (Yellow Dot)

* Alternative RAG Context: "February 14, 2022: Another event making its debut at the Beijing Games was the monobob, a single-person bobsledding event."

* Answer: "I don't know" (Grey Dot)

* Result: Incorrect refusal (X mark)

### Key Observations

* The quadrant diagram provides a framework for understanding the interplay between RALMs and LLMs in knowledge representation.

* The refusal examples highlight the importance of accurate context retrieval and the potential for both proper and over refusal in question-answering systems.

* The color-coding (green, yellow, black, blue) in the quadrant diagram corresponds to the knowledge state of RALMs and LLMs.

### Interpretation

The image illustrates a system for categorizing knowledge based on the capabilities of RALMs and LLMs. The quadrant diagram serves as a visual aid for understanding the different scenarios that can arise when these models are used for question answering. The refusal examples demonstrate the challenges of building robust question-answering systems that can accurately determine when they lack the necessary information to provide a correct answer. The "Proper refusal" example shows the system correctly identifying a lack of relevant information and refusing to answer, while the "Over refusal" example shows the system incorrectly refusing to answer despite having access to relevant information. This highlights the need for improved context retrieval and reasoning capabilities in question-answering systems.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: RAG LLM Knowledge Category Quadrant

### Overview

This diagram illustrates a knowledge categorization framework for Retrieval-Augmented Generation (RAG) Large Language Models (LLMs). It depicts a 2x2 quadrant based on "Context Known/Unknown" and "RALMs Known/Unknown", and demonstrates how LLMs respond to questions based on their knowledge and the provided context. The diagram showcases two example question-answering scenarios: one resulting in a "Proper Refusal" and the other in an "Over Refusal".

### Components/Axes

* **Quadrants:** Four quadrants defined by the axes:

* Top-Left: LLMs Unknown, Context Unknown (labeled "LLMs Unknown")

* Top-Right: LLMs Known, Context Unknown (labeled "LLMs Known")

* Bottom-Left: LLMs Unknown, Context Known (labeled "RALMs Unknown")

* Bottom-Right: LLMs Known, Context Known (labeled "RALMs Known")

* **Axes:**

* Vertical Axis: "Context Known" (top) to "Context Unknown" (bottom)

* Horizontal Axis: "RALMs Unknown" (left) to "RALMs Known" (right)

* **Arrows:** Arrows indicate the flow from RALMs Unknown to RALMs Known.

* **Question Blocks:** Two yellow blocks containing questions:

* "Q: Who won the 2022 Citrus Bowl?"

* "Q: When does the 2022 Olympic Winter Games end?"

* **RAG Context Blocks:** Four light blue blocks containing RAG context:

* "RAG context: Kentucky secured its fourth straight bowl victory … Citrus Bowl win over Iowa."

* "RAG context: The closing ceremony of the 2022 Winter Olympics was held at Beijing National Stadium on 20 February 2022;"

* "RAG context: Buffalo beat Georgia Southern 23-21 after going 12-of-19 on third down while averaging less than three yards a carry."

* "RAG context: February 14, 2022: Another event making its debut at the Beijing Games was the monobob, a single-person bobsledding event."

* **Answer Bubbles:** Two green bubbles with checkmarks and two grey "I don't know" bubbles, one marked with an "X" and one with a checkmark:

* ": Kentucky" (with a checkmark)

* ": February 20" (with a checkmark)

* ": I don't know" (with an "X")

* ": I don't know" (with a checkmark)

* **Labels:**

* "RALMs Knowledge Category Quadrant" (top-right)

* "Proper refusal" (bottom-left)

* "Over refusal" (bottom-right)

### Detailed Analysis

The diagram demonstrates two scenarios:

**Scenario 1 (Proper Refusal):**

* **Question:** "Who won the 2022 Citrus Bowl?"

* **RAG Context:** "Kentucky secured its fourth straight bowl victory … Citrus Bowl win over Iowa."

* **Answer:** ": Kentucky" (with a checkmark) - The LLM correctly answers the question based on the provided context.

* **Additional Context:** "Buffalo beat Georgia Southern 23-21 after going 12-of-19 on third down while averaging less than three yards a carry."

* **Refusal:** ": I don't know" (with a checkmark) - The LLM correctly refuses to answer a question outside the scope of the provided context.

**Scenario 2 (Over Refusal):**

* **Question:** "When does the 2022 Olympic Winter Games end?"

* **RAG Context:** "The closing ceremony of the 2022 Winter Olympics was held at Beijing National Stadium on 20 February 2022;"

* **Answer:** ": February 20" (with a checkmark) - The LLM correctly answers the question based on the provided context.

* **Additional Context:** "February 14, 2022: Another event making its debut at the Beijing Games was the monobob, a single-person bobsledding event."

* **Refusal:** ": I don't know" (with an "X") - The LLM incorrectly refuses to answer a question that can be answered from the provided context.

### Key Observations

* The diagram highlights the importance of accurate RAG context for LLM performance.

* The "Proper Refusal" scenario demonstrates the LLM's ability to stay within the bounds of the provided information.

* The "Over Refusal" scenario indicates a potential issue where the LLM incorrectly refuses to answer a valid question based on the available context.

* The quadrants visually represent the different states of knowledge for both the LLM and the RAG system.

### Interpretation

This diagram illustrates a critical aspect of RAG systems: the balance between providing relevant context and avoiding hallucinations or incorrect answers. The quadrants represent the ideal states for LLM operation. The "RALMs Known" quadrant is the goal, where both the LLM and the RAG system have the necessary knowledge to answer the question. The "Proper Refusal" scenario shows the LLM correctly identifying when it lacks the information to answer a question. However, the "Over Refusal" scenario is problematic, as it indicates the LLM is failing to utilize available information. This could be due to issues with the RAG system's retrieval process, the LLM's reasoning capabilities, or a combination of both. The diagram serves as a visual aid for understanding the challenges and potential pitfalls of RAG systems and emphasizes the need for careful evaluation and optimization. The use of checkmarks and "X" symbols clearly indicates the success or failure of the LLM's response in each scenario.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: RALMs Knowledge Category Quadrant and Refusal Behavior Examples

### Overview

The image is a technical diagram illustrating the knowledge categorization of Retrieval-Augmented Language Models (RALMs) and demonstrating two examples of how such models handle questions based on provided context. The diagram is divided into two main parts: a top quadrant chart defining knowledge categories and two bottom flowcharts showing specific question-answer scenarios with outcomes labeled as "Proper refusal" and "Over refusal."

### Components/Axes

**1. Top Quadrant Chart (RALMs Knowledge Category Quadrant):**

* **Axes:**

* **Vertical Axis:** Labeled "Context Known" at the top and "Context UnKnown" at the bottom.

* **Horizontal Axis:** Labeled "LLMs UnKnown" on the left and "LLMs Known" on the right.

* **Quadrants & Data Points:** The chart is divided into four quadrants by the axes. Each quadrant contains a colored dot and a label.

* **Top-Left Quadrant (Context Known, LLMs UnKnown):** Contains a **green dot** labeled "RALMs Known".

* **Top-Right Quadrant (Context Known, LLMs Known):** Contains a **yellow dot** labeled "RALMs Known".

* **Bottom-Left Quadrant (Context UnKnown, LLMs UnKnown):** Contains a **black dot** labeled "RALMs UnKnown".

* **Bottom-Right Quadrant (Context UnKnown, LLMs Known):** Contains a **blue dot** labeled "RALMs Known".

* **Legend/Title:** To the right of the quadrant, an arrow points to the text "RALMs Knowledge Category Quadrant".

**2. Bottom Flowcharts (Two Examples):**

The flowcharts are arranged side-by-side. Each follows a similar structure: a question (Q), a provided RAG context, a model response, and an outcome.

* **Left Flowchart (Proper refusal example):**

* **Question (Q):** "Who won the 2022 Citrus Bowl?" (Associated with a **grey dot**).

* **RAG Context (Green dashed box):** "RAG context: Kentucky secured its fourth straight bowl victory ... Citrus Bowl win over Iowa." (Associated with a **green dot**).

* **Model Response:** A model icon followed by ": Kentucky" (Associated with a **green dot** and a **checkmark**).

* **Alternative RAG Context (Grey dashed box):** "RAG context: Buffalo beat Georgia Southern 23-21 after going 12-of-19 on third down while averaging less than three yards a carry." (Associated with a **grey dot**).

* **Model Response to Alternative Context:** A model icon followed by ": I don't know" (Associated with a **black dot** and a **red checkmark**).

* **Outcome Label:** "Proper refusal" with a downward arrow.

* **Right Flowchart (Over refusal example):**

* **Question (Q):** "When does the 2022 Olympic Winter Games end?" (Associated with a **blue dot**).

* **RAG Context (Green dashed box):** "RAG Context: The closing ceremony of the 2022 Winter Olympics was held at Beijing National Stadium on 20 February 2022;" (Associated with a **green dot**).

* **Model Response:** A model icon followed by ": February 20" (Associated with a **yellow dot** and a **checkmark**).

* **Alternative RAG Context (Grey dashed box):** "RAG Context: February 14, 2022: Another event making its debut at the Beijing Games was the monobob, a single-person bobsledding event." (Associated with a **grey dot**).

* **Model Response to Alternative Context:** A model icon followed by ": I don't know" (Associated with a **blue dot** and a **red X**).

* **Outcome Label:** "Over refusal" in red text with a downward arrow.

### Detailed Analysis

The diagram systematically maps knowledge states and their consequences.

* **Quadrant Logic:** The quadrant defines four states based on whether the answer is in the LLM's parametric knowledge ("LLMs Known/UnKnown") and whether relevant context is provided ("Context Known/UnKnown"). The "RALMs Known" label appears in three quadrants, suggesting the model can potentially answer if *either* the context is known *or* the LLM knows it. Only when both are unknown ("Context UnKnown" and "LLMs UnKnown") is the state "RALMs UnKnown".

* **Example 1 (Citrus Bowl):**

* **Trend/Flow:** The question is about a specific event winner. When the RAG context contains the direct answer ("Kentucky"), the model correctly extracts it (green dot path). When the provided context is about a different game (Buffalo vs. Georgia Southern), it contains no information about the Citrus Bowl. The model correctly responds "I don't know" (black dot path), which is labeled a "Proper refusal."

* **Example 2 (Olympics):**

* **Trend/Flow:** The question is about an event end date. When the RAG context contains the exact date ("20 February 2022"), the model correctly extracts it (yellow dot path). When the provided context is about a different event on a different date ("February 14, 2022... monobob"), it does not contain the answer to the original question. However, the model still responds "I don't know" (blue dot path). This is labeled an "Over refusal," implying the model should have been able to answer this question from its own parametric knowledge (as it falls into the "LLMs Known" category on the horizontal axis), making the refusal unnecessary.
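The quadrant logic described above can be written down as a small lookup. The following is a minimal Python sketch, not code from the diagram's source: the names `Quadrant`, `classify`, and `ralm_known` are hypothetical, and the rule that "RALMs Known" is the logical OR of "Context Known" and "LLMs Known" is an assumption taken from the reading of the quadrant given in this description.

```python
from enum import Enum


class Quadrant(Enum):
    """Dot colours as described for the quadrant chart (member names are illustrative)."""
    CONTEXT_ONLY = "green"   # Context Known, LLMs UnKnown
    BOTH = "yellow"          # Context Known, LLMs Known
    NEITHER = "black"        # Context UnKnown, LLMs UnKnown
    LLM_ONLY = "blue"        # Context UnKnown, LLMs Known


def classify(context_known: bool, llm_known: bool) -> Quadrant:
    """Map the two axes onto one of the four quadrants."""
    if context_known:
        return Quadrant.BOTH if llm_known else Quadrant.CONTEXT_ONLY
    return Quadrant.LLM_ONLY if llm_known else Quadrant.NEITHER


def ralm_known(context_known: bool, llm_known: bool) -> bool:
    """Assumed rule: the RALM can answer if either source holds the answer."""
    return context_known or llm_known
```

Under this reading, `ralm_known` is false only for the black quadrant, which matches the observation that the "RALMs Known" label appears in three of the four quadrants.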

### Key Observations

1. **Color-Coding Consistency:** Colors are used consistently to link concepts across the diagram. Green dots/boxes are associated with correct, context-based answers. Black is associated with the "RALMs UnKnown" state. Blue and yellow dots represent states where the LLM has knowledge, but their outcomes differ in the examples.

2. **Spatial Grounding:** The quadrant is centrally placed at the top. The two examples are placed below it, left and right, creating a clear comparison. Legends and labels are placed adjacent to their corresponding elements (e.g., "Proper refusal" below its flowchart).

3. **Symbolism:** Checkmarks (✓) indicate correct or appropriate responses. A red checkmark is used for the proper refusal. A red X is used for the over refusal, highlighting it as an error or suboptimal behavior.

4. **Textual Content:** All text is in English. The diagram uses technical terms like "RAG context," "LLMs," and "RALMs."

### Interpretation

This diagram serves as a conceptual framework for evaluating the performance of Retrieval-Augmented Language Models. It argues that a model's response should be judged not just on factual correctness, but on the *appropriateness* of its refusal based on the intersection of its internal knowledge and the provided external context.

* **What it demonstrates:** The core message is that an ideal RALM should refuse to answer ("I don't know") only when the answer is absent from both the retrieved context *and* the model's own parametric knowledge (the "RALMs UnKnown" quadrant). Refusing a question that the model *should* be able to answer from its parametric memory, merely because the retrieved context is unhelpful, is an "Over refusal": a failure to utilize its own capabilities.

* **Relationship between elements:** The quadrant provides the theoretical classification, while the two flowcharts serve as concrete, contrasting case studies. The left example shows the system working as intended (proper refusal when knowledge is absent). The right example exposes a flaw: the model is overly reliant on the retrieved context and fails to fall back on its internal knowledge, leading to an unnecessary refusal.

* **Underlying message:** The diagram advocates for more sophisticated RAG systems that can intelligently discern when to rely on retrieved documents, when to rely on internal knowledge, and when to truly admit ignorance. It highlights "over refusal" as a specific and undesirable failure mode in current systems.
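One way to make the difference between the two refusal outcomes concrete is a small labelling function. This is a hedged sketch under stated assumptions, not an evaluation method from the diagram's source: `label_response` is a hypothetical name, the outcome strings other than "proper refusal" and "over refusal" are invented for illustration, and whether the LLM "knows" an answer is passed in as a plain boolean even though measuring it in practice is a separate problem.

```python
def label_response(refused: bool, context_known: bool, llm_known: bool) -> str:
    """Label a model response given where the answer is available.

    Assumption: a question counts as answerable if the retrieved context
    or the LLM's parametric knowledge contains the answer.
    """
    answerable = context_known or llm_known
    if refused:
        return "over refusal" if answerable else "proper refusal"
    # This sketch does not check whether a given answer is actually correct.
    return "answered" if answerable else "unsupported answer"


# The two refusal cases from the flowcharts. The llm_known values are
# assumptions read off the dot colours in this description (black for the
# Citrus Bowl refusal, blue for the Olympics refusal).
citrus_bowl = label_response(refused=True, context_known=False, llm_known=False)
olympics = label_response(refused=True, context_known=False, llm_known=True)
```

Here `citrus_bowl` evaluates to "proper refusal" and `olympics` to "over refusal". The only input that differs between the two calls is `llm_known`, which mirrors the contrast the two flowcharts are drawing.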


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Quadrant Diagram: RALMs Knowledge Category Quadrant

### Overview

The image presents a quadrant diagram titled "RALMs Knowledge Category Quadrant," divided into four regions by two axes:

- **Vertical Axis**: "Context Known" (top) to "Context Unknown" (bottom)

- **Horizontal Axis**: "LLMs Known" (right) to "LLMs Unknown" (left)

Each quadrant contains a colored data point with a label, and examples of question-answer pairs are provided below to illustrate proper vs. over refusal scenarios.

---

### Components/Axes

1. **Axes**:

- **Vertical (Y-axis)**: "Context Known" (top) → "Context Unknown" (bottom)

- **Horizontal (X-axis)**: "LLMs Known" (right) → "LLMs Unknown" (left)

2. **Legend**:

- **Green**: RALMs Known, Context Known

- **Yellow**: RALMs Known, Context Unknown

- **Blue**: RALMs Unknown, Context Known

- **Gray**: RALMs Unknown, Context Unknown

3. **Data Points**:

- **Top-Right (Green)**: RALMs Known, Context Known

- **Top-Left (Yellow)**: RALMs Known, Context Unknown

- **Bottom-Left (Gray)**: RALMs Unknown, Context Unknown

- **Bottom-Right (Blue)**: RALMs Unknown, Context Known

---

### Detailed Analysis

#### Quadrant Labels and Data Points

- **Top-Right (Green)**:

- Label: "RALMs Known, Context Known"

- Position: Intersection of "Context Known" (top) and "LLMs Known" (right).

- **Top-Left (Yellow)**:

- Label: "RALMs Known, Context Unknown"

- Position: Intersection of "Context Unknown" (bottom) and "LLMs Known" (right).

- **Bottom-Left (Gray)**:

- Label: "RALMs Unknown, Context Unknown"

- Position: Intersection of "Context Unknown" (bottom) and "LLMs Unknown" (left).

- **Bottom-Right (Blue)**:

- Label: "RALMs Unknown, Context Known"

- Position: Intersection of "Context Known" (top) and "LLMs Unknown" (left).

#### Question-Answer Examples

1. **Question**: "Who won the 2022 Citrus Bowl?"

- **RAG Context**: Kentucky secured its fourth straight bowl victory...

- **Answer**: "Kentucky" (✅ Checkmark, green data point).

2. **Question**: "When does the 2022 Olympic Winter Games end?"

- **RAG Context**: The closing ceremony... was held on 20 February 2022.

- **Answer**: "February 20" (✅ Checkmark, yellow data point).

3. **RAG Context** (alternative context for question 1): "Buffalo beat Georgia Southern..."

- **Answer**: "I don’t know" (✅ Checkmark, gray data point).

4. **RAG Context** (alternative context for question 2): "February 14, 2022..."

- **Answer**: "I don’t know" (❌ Red X, blue data point).

---

### Key Observations

1. **Quadrant Distribution**:

- Two quadrants (green, yellow) represent scenarios where RALMs have knowledge ("RALMs Known").

- Two quadrants (gray, blue) represent scenarios where RALMs lack knowledge ("RALMs Unknown").

2. **Refusal Logic**:

- **Proper Refusal**: Gray data point ("RALMs Unknown, Context Unknown") with a checkmark.

- **Over Refusal**: Blue data point ("RALMs Unknown, Context Known") with a red X, indicating refusal despite known context.

3. **Color Consistency**:

- All data points match the legend colors (green, yellow, gray, blue).

---

### Interpretation

The quadrant diagram categorizes knowledge for RALMs (Retrieval-Augmented Language Models) along two dimensions: whether the **context** is known and whether the underlying **LLMs** (Large Language Models) know the answer. The examples illustrate how RALMs should respond:

- **Proper Refusal**: When neither context nor LLM knowledge is available (gray quadrant).

- **Over Refusal**: When context is known but the model still refuses (blue quadrant), which is flagged as incorrect.

The diagram emphasizes the importance of leveraging available context to avoid unnecessary refusals, ensuring RALMs provide accurate answers when possible. The red X in the blue quadrant highlights a critical failure mode where models withhold answers despite having sufficient context.
