## Diagram: RAG LLM Knowledge Category Quadrant

### Overview

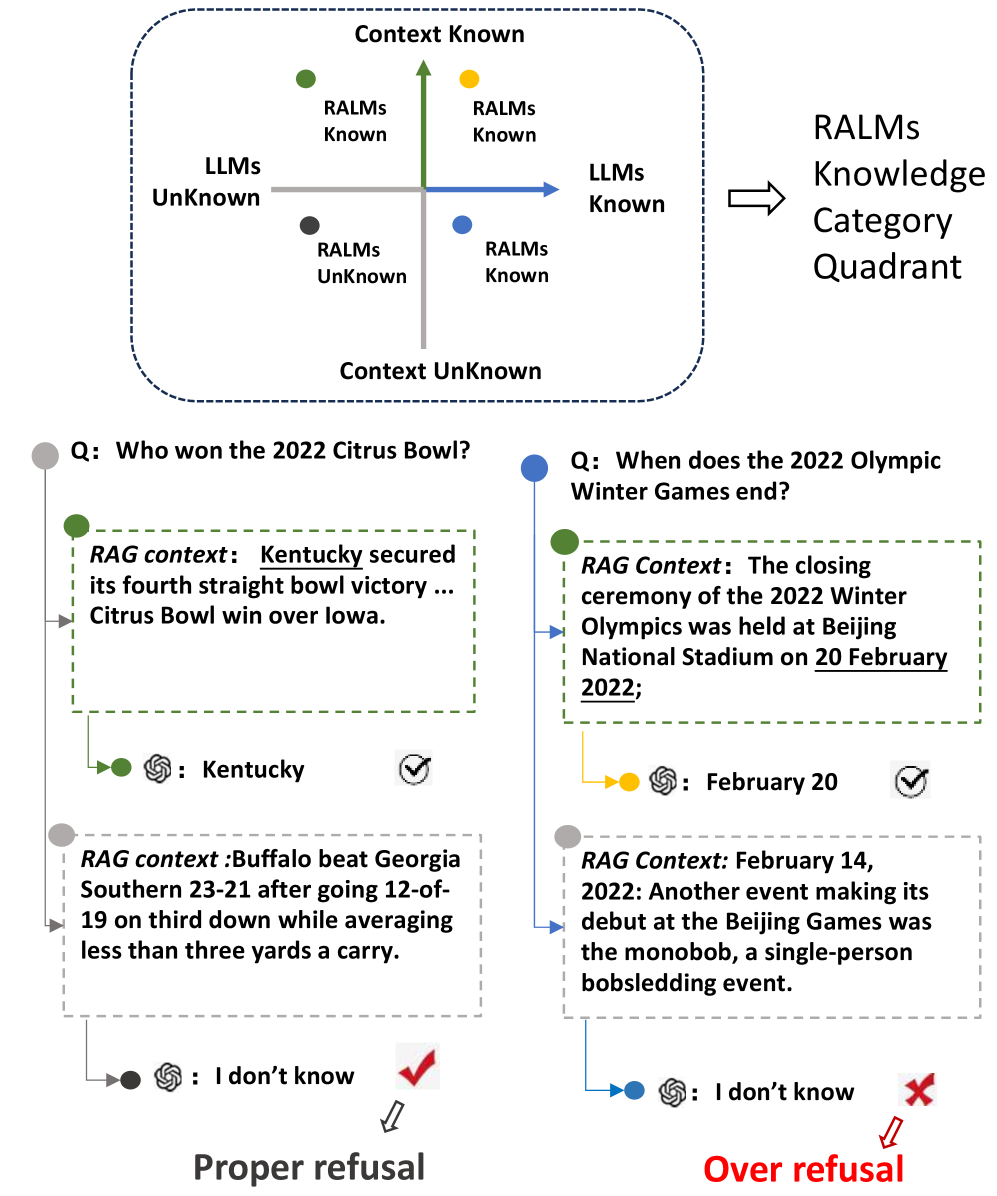

This diagram illustrates a knowledge categorization framework for Retrieval-Augmented Generation (RAG) Large Language Models (LLMs), which the figure labels RALMs (retrieval-augmented language models). It depicts a 2x2 quadrant based on "Context Known/Unknown" and "RALMs Known/Unknown" and shows how an LLM responds to a question depending on its own knowledge and the provided context. Two example questions are shown, each paired with two different RAG contexts: one example ends in a "Proper Refusal" and the other in an "Over Refusal".

### Components/Axes

* **Quadrants:** Four quadrants defined by the axes:

  * Top-Left: LLMs Unknown, Context Unknown (labeled "LLMs Unknown")

  * Top-Right: LLMs Known, Context Unknown (labeled "LLMs Known")

  * Bottom-Left: LLMs Unknown, Context Known (labeled "RALMs Unknown")

  * Bottom-Right: LLMs Known, Context Known (labeled "RALMs Known")

* **Axes:**

  * Vertical Axis: "Context Known" (top) to "Context Unknown" (bottom)

  * Horizontal Axis: "RALMs Unknown" (left) to "RALMs Known" (right)

* **Arrows:** Arrows indicate the flow from RALMs Unknown to RALMs Known.

* **Question Blocks:** Two yellow blocks containing questions:

  * "Q: Who won the 2022 Citrus Bowl?"

  * "Q: When does the 2022 Olympic Winter Games end?"

* **RAG Context Blocks:** Four light blue blocks containing RAG context, two per question:

  * "RAG context: Kentucky secured its fourth straight bowl victory … Citrus Bowl win over Iowa."

  * "RAG context: The closing ceremony of the 2022 Winter Olympics was held at Beijing National Stadium on 20 February 2022;"

  * "RAG context: Buffalo beat Georgia Southern 23-21 after going 12-of-19 on third down while averaging less than three yards a carry."

  * "RAG context: February 14, 2022: Another event making its debut at the Beijing Games was the monobob, a single-person bobsledding event."

* **Answer Bubbles:** Four bubbles: two green bubbles containing answers, each with a checkmark, and two grey "I don't know" bubbles, one with a checkmark and one with an "X":

  * "Kentucky" (with a checkmark)

  * "February 20" (with a checkmark)

  * "I don't know" (with an "X")

  * "I don't know" (with a checkmark)

* **Labels:**

  * "RALMs Knowledge Category Quadrant" (top-right)

  * "Proper refusal" (bottom-left)

  * "Over refusal" (bottom-right)
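The four quadrants listed above can be read as a lookup on two yes/no facts: whether the LLM itself knows the answer and whether the retrieved context contains it. The following is a minimal sketch of that lookup, not code from the diagram's source; the `Quadrant` enum and the `classify` helper are hypothetical names, and both flags are assumed to be supplied by the caller.

```python
from enum import Enum


class Quadrant(Enum):
    """The four cells of the 2x2 grid, named as in the quadrant list."""
    LLM_UNKNOWN_CONTEXT_UNKNOWN = "LLMs Unknown, Context Unknown"
    LLM_KNOWN_CONTEXT_UNKNOWN = "LLMs Known, Context Unknown"
    LLM_UNKNOWN_CONTEXT_KNOWN = "LLMs Unknown, Context Known"
    LLM_KNOWN_CONTEXT_KNOWN = "LLMs Known, Context Known"


def classify(llm_known: bool, context_known: bool) -> Quadrant:
    """Map the two knowledge flags to a quadrant (hypothetical helper)."""
    table = {
        (False, False): Quadrant.LLM_UNKNOWN_CONTEXT_UNKNOWN,
        (True, False): Quadrant.LLM_KNOWN_CONTEXT_UNKNOWN,
        (False, True): Quadrant.LLM_UNKNOWN_CONTEXT_KNOWN,
        (True, True): Quadrant.LLM_KNOWN_CONTEXT_KNOWN,
    }
    return table[(llm_known, context_known)]


# Example: the model knows the answer but the retrieved passage does not.
print(classify(llm_known=True, context_known=False).value)
```

How the two flags would be measured in practice is outside what the diagram shows; the sketch only fixes the naming of the four cells.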

### Detailed Analysis

The diagram demonstrates two scenarios:

**Scenario 1 (Proper Refusal):**

* **Question:** "Who won the 2022 Citrus Bowl?"

* **RAG Context:** "Kentucky secured its fourth straight bowl victory … Citrus Bowl win over Iowa."

* **Answer:** "Kentucky" (with a checkmark). The LLM answers correctly from the provided context.

* **Additional Context:** "Buffalo beat Georgia Southern 23-21 after going 12-of-19 on third down while averaging less than three yards a carry."

* **Refusal:** "I don't know" (with a checkmark). This context does not mention the Citrus Bowl, so the LLM's refusal to answer the same question is marked correct.

**Scenario 2 (Over Refusal):**

* **Question:** "When does the 2022 Olympic Winter Games end?"

* **RAG Context:** "The closing ceremony of the 2022 Winter Olympics was held at Beijing National Stadium on 20 February 2022;"

* **Answer:** "February 20" (with a checkmark). The LLM answers correctly from the provided context.

* **Additional Context:** "February 14, 2022: Another event making its debut at the Beijing Games was the monobob, a single-person bobsledding event."

* **Refusal:** "I don't know" (with an "X"). The diagram marks this refusal as incorrect. This context does not state when the Games end, so under the quadrant framing the refusal counts as an over refusal only if the LLM could have answered from its own knowledge ("LLMs Known, Context Unknown").
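The distinction between the two scenarios can be sketched as a small labeling rule. This is an illustration under stated assumptions rather than the diagram authors' method: it assumes a refusal is "proper" only when neither the LLM nor the context holds the answer, and an "over refusal" whenever at least one of them does. The function name `label_response` and the refusal-matching rule are hypothetical.

```python
REFUSAL_TEXT = "i don't know"


def label_response(response: str, llm_known: bool, context_known: bool) -> str:
    """Label a response as 'answered', 'proper refusal', or 'over refusal'.

    Assumption: the question counts as answerable if either the LLM's own
    knowledge or the retrieved context contains the answer.
    """
    # Normalize whitespace, case, and a trailing period before matching.
    refused = response.strip().lower().rstrip(".") == REFUSAL_TEXT
    if not refused:
        # Correctness of a non-refusal answer is not judged here.
        return "answered"
    answerable = llm_known or context_known
    return "over refusal" if answerable else "proper refusal"


# Flags below are illustrative inputs, not values read off the diagram.
print(label_response("Kentucky", llm_known=False, context_known=True))
print(label_response("I don't know", llm_known=False, context_known=False))
print(label_response("I don't know", llm_known=True, context_known=False))
```

Under this rule the same "I don't know" string receives different labels depending on what knowledge was available, which mirrors the checkmark and "X" on the two grey bubbles.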

### Key Observations

* In both examples, the LLM answers correctly ("Kentucky", "February 20") when the RAG context contains the answer, and responds "I don't know" when it does not.

* The "Proper Refusal" scenario demonstrates the LLM's ability to stay within the bounds of the provided information.

* The "Over Refusal" scenario shows the failure mode: the LLM responds "I don't know" to a question that the diagram treats as answerable.

* The quadrants visually represent the four combinations of what the LLM knows and what the retrieved context contains.

### Interpretation

This diagram illustrates a central tension in RAG systems: the model should decline when neither its own knowledge nor the retrieved context supports an answer, which avoids hallucinations, yet it should not decline questions it can answer. The quadrants enumerate the possible combinations of LLM knowledge and context knowledge; they are not all ideal states. The "RALMs Known" quadrant is the goal, where both the LLM and the retrieved context hold the knowledge needed to answer the question. The "Proper Refusal" scenario shows the LLM correctly recognizing that it lacks the information to answer.

The "Over Refusal" scenario is the problematic one: the LLM says "I don't know" to a question the diagram treats as answerable, so available knowledge goes unused. This could stem from the retrieval step, the LLM's reasoning, or both. The checkmarks and "X" symbols mark which responses the diagram counts as correct and which as incorrect. The diagram is a visual aid for these pitfalls and underlines the need for careful evaluation of when a RAG system refuses.