
## Block Diagram: Memory-Augmented Neural Network Architecture

### Overview

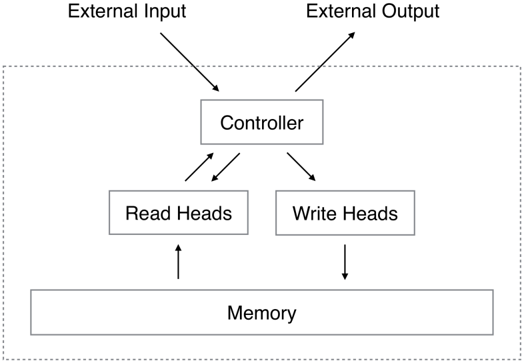

The image is a technical block diagram illustrating the architecture of a memory-augmented neural network, likely a Neural Turing Machine (NTM) or a similar differentiable memory system. It depicts the flow of information between an external environment and the core components of the system: a Controller, memory access mechanisms (Read/Write Heads), and an external Memory bank.

### Components

The diagram is organized into two primary regions:

1. **External Interface (Top):** Two labels outside a dashed boundary.

   * **External Input:** Positioned at the top-left. An arrow points from this label down into the dashed box, indicating data entering the system.

   * **External Output:** Positioned at the top-right. An arrow points from the dashed box up to this label, indicating results leaving the system.

2. **Internal System (Within Dashed Box):** A dashed rectangular boundary encloses the core computational components.

   * **Controller:** A central rectangular box. It is the primary processing unit.

   * **Read Heads:** A rectangular box positioned below and to the left of the Controller.

   * **Write Heads:** A rectangular box positioned below and to the right of the Controller.

   * **Memory:** A wide rectangular box at the bottom of the diagram, spanning the width beneath both heads.

### Detailed Analysis: Component Relationships and Data Flow

The diagram explicitly defines the connections and data flow directions between components using arrows:

1. **Controller ↔ External Environment:**

   * **Input Flow:** An arrow originates from "External Input" and terminates at the "Controller".

   * **Output Flow:** An arrow originates from the "Controller" and terminates at "External Output".

2. **Controller ↔ Memory Access Mechanisms:**

   * **Controller to Read Heads:** A single arrow points from the Controller down to the Read Heads.

   * **Read Heads to Controller:** A single arrow points from the Read Heads up to the Controller. Together with the previous arrow, this forms a **bidirectional** connection: the Controller queries the Read Heads and receives the retrieved data back.

   * **Controller to Write Heads:** A single arrow points from the Controller down to the Write Heads. This is a **unidirectional** command flow; no arrow returns from the Write Heads to the Controller.

3. **Memory Access Mechanisms ↔ Memory:**

   * **Read Heads to Memory:** A single arrow points from the Read Heads down to the Memory. This represents the action of reading data from specific locations in the Memory. The arrow appears to mark which component initiates the access; the retrieved data itself travels back through the Read Heads to the Controller.

   * **Write Heads to Memory:** A single arrow points from the Write Heads down to the Memory. This represents the action of writing or modifying data in the Memory.
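
The connections listed above can be written down as a small directed graph, which makes properties such as "bidirectional" easy to check mechanically. This is only an illustrative sketch: the names `EDGES`, `is_bidirectional`, and `neighbors` are hypothetical helpers introduced here, and the edge list simply transcribes the arrows as described in this section.

```python
# Directed edges as described for the diagram: (source, target).
EDGES = [
    ("External Input", "Controller"),
    ("Controller", "External Output"),
    ("Controller", "Read Heads"),
    ("Read Heads", "Controller"),
    ("Controller", "Write Heads"),
    ("Read Heads", "Memory"),
    ("Write Heads", "Memory"),
]


def is_bidirectional(a, b, edges=EDGES):
    """Return True if there are arrows in both directions between a and b."""
    edge_set = set(edges)
    return (a, b) in edge_set and (b, a) in edge_set


def neighbors(node, edges=EDGES):
    """Return every component linked to `node` by an arrow in either direction."""
    linked = set()
    for src, dst in edges:
        if src == node:
            linked.add(dst)
        elif dst == node:
            linked.add(src)
    return linked


print(is_bidirectional("Controller", "Read Heads"))   # True
print(is_bidirectional("Controller", "Write Heads"))  # False
print(sorted(neighbors("Memory")))  # ['Read Heads', 'Write Heads']
```

In this representation the Controller has no direct edge to Memory: every memory access passes through one of the heads.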

### Key Observations

* **Hierarchical Control:** The Controller is the central hub, mediating all communication between the external world and the internal memory system.

* **Separation of Read/Write Functions:** The architecture explicitly separates the mechanisms for reading from and writing to memory, which is a key feature of systems like the Neural Turing Machine, allowing for more complex, learnable memory operations.

* **Memory as a Shared Resource:** Both Read Heads and Write Heads interact with the same, single "Memory" block, indicating a shared, addressable memory space.

* **Bidirectional vs. Unidirectional Flow:** The connection between the Controller and Read Heads is bidirectional (two arrows), while the connection to Write Heads is unidirectional (one arrow). This suggests the Controller receives retrieved information from Read Heads but only sends write commands to Write Heads.
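
The asymmetry between the read and write paths can be sketched as one time step of a toy system. Everything here is an assumption made for illustration: the function names, the integer addressing rule, the four-slot memory, and the choice to write before reading are not taken from the diagram. Real memory-augmented networks use soft, differentiable addressing rather than integer indices.

```python
def controller(external_input, prev_read):
    """Toy controller: combines the input with the previously read value.

    Returns (external_output, read_address, write_address, write_value).
    The addressing rule is arbitrary and purely illustrative.
    """
    external_output = external_input + prev_read
    read_address = external_input % 4
    write_address = (external_input + 1) % 4
    write_value = external_output
    return external_output, read_address, write_address, write_value


def read_head(memory, address):
    """Read path: returns a value that flows back to the controller."""
    return memory[address]


def write_head(memory, address, value):
    """Write path: modifies memory in place and returns nothing."""
    memory[address] = value


def step(memory, external_input, prev_read):
    """One time step that follows the arrows in the diagram."""
    output, r_addr, w_addr, w_val = controller(external_input, prev_read)
    write_head(memory, w_addr, w_val)     # Controller -> Write Heads -> Memory
    new_read = read_head(memory, r_addr)  # Controller -> Read Heads -> Memory
    return output, new_read               # new_read feeds the next controller call


memory = [0, 0, 0, 0]
prev_read = 0
outputs = []
for x in [1, 2, 3]:
    out, prev_read = step(memory, x, prev_read)
    outputs.append(out)
print(outputs, memory)  # [1, 2, 4] [4, 0, 1, 2]
```

Note that `write_head` returns nothing while `read_head` returns a value that re-enters the controller on the next step, mirroring the one-arrow and two-arrow connections.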

### Interpretation

This diagram illustrates a foundational concept in memory-augmented neural network design: pairing a neural network (the Controller, often an RNN) with an external, differentiable memory matrix. This architecture allows the network to learn algorithms that require storing and recalling information over long sequences, rather than relying only on the fixed-size hidden state of a standard recurrent network.

* **Functional Analogy:** The system can be analogized to a computer's CPU (Controller) with separate load (Read Heads) and store (Write Heads) instructions accessing RAM (Memory).

* **Purpose of Separation:** Decoupling read and write operations enables the network to learn a specialized attention mechanism for each operation: where to look to retrieve information, and where to update it.

* **Implied Learnability:** In the context of machine learning, the operations of the Read and Write Heads (e.g., where to read/write, how much to write) are typically parameterized and learned via gradient descent, allowing the system to discover its own memory management strategies.

* **Scope:** The dashed line clearly demarcates the learnable, internal system from the fixed external input/output streams, emphasizing that the memory and access mechanisms are part of the model being trained.
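
To make "where to read/write, how much to write" concrete, here is a minimal sketch of soft memory access in the style of the Neural Turing Machine: a read is a weighted sum of memory rows, and a write is a weighted erase followed by a weighted add. It assumes the diagram does depict an NTM-like system, which the figure itself does not confirm. The function names and the `sharpness` parameter are hypothetical, and the dot-product similarity is a simplification (the NTM formulation uses cosine similarity).

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def address(memory, key, sharpness=1.0):
    """Content-based weights: softmax of scaled dot products of key and rows."""
    scores = [sharpness * sum(k * m for k, m in zip(key, row)) for row in memory]
    return softmax(scores)


def read(memory, weights):
    """Soft read: weighted sum of memory rows."""
    width = len(memory[0])
    return [sum(w * row[j] for w, row in zip(weights, memory)) for j in range(width)]


def write(memory, weights, erase, add):
    """Soft write: partially erase each row, then add new content to it."""
    return [
        [m * (1.0 - w * e) + w * a for m, e, a in zip(row, erase, add)]
        for w, row in zip(weights, memory)
    ]


memory = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
weights = address(memory, key=[1.0, 0.0], sharpness=5.0)
read_vector = read(memory, weights)
memory = write(memory, weights, erase=[1.0, 1.0], add=[0.5, 0.5])
```

Because every step is built from sums, products, and exponentials, the read vector and the updated memory vary smoothly with the key, erase, and add vectors, which is what lets gradient descent tune them.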