# Technical Document Extraction: Attention Forward Speed Benchmark

## 1. Header Information

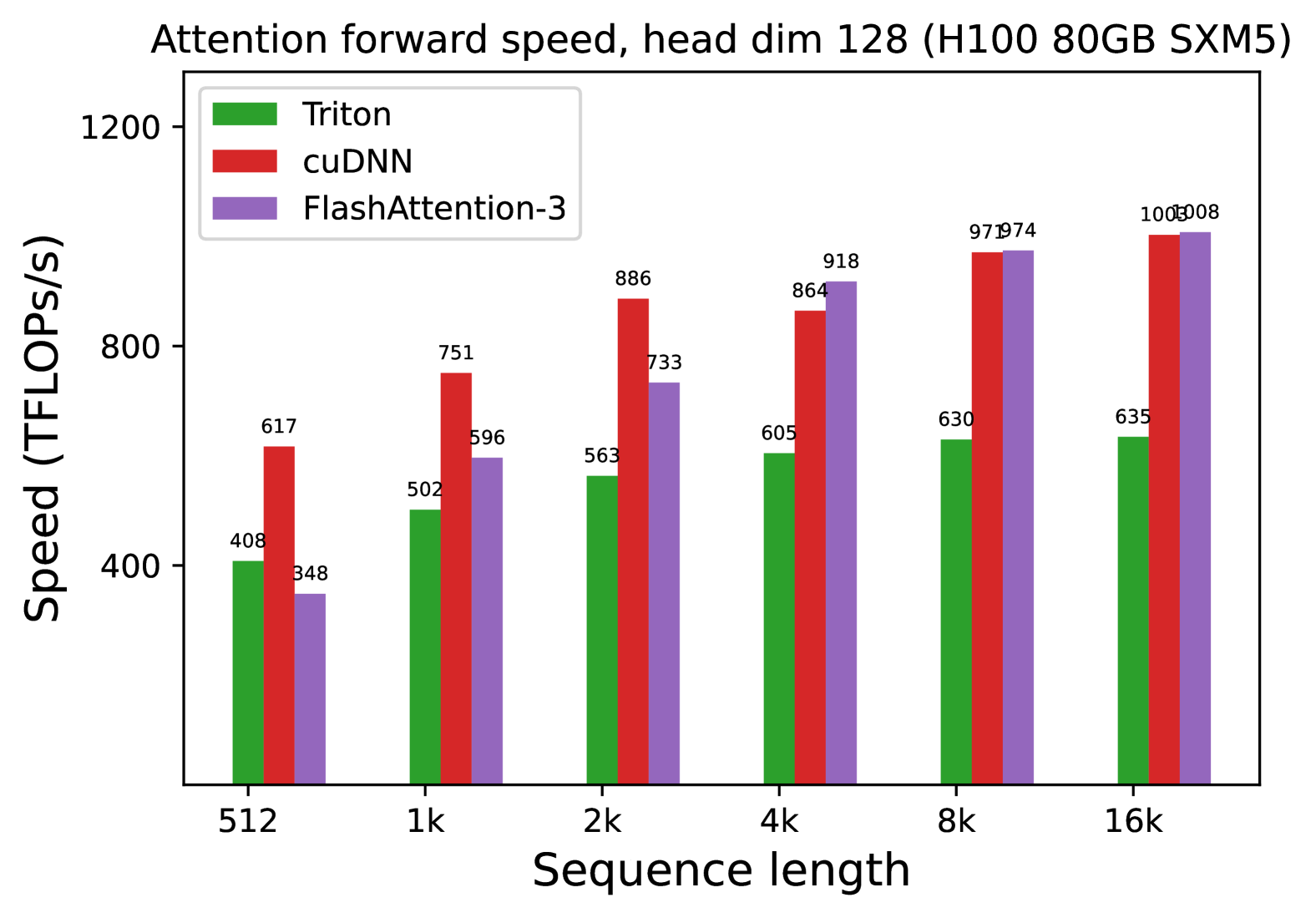

* **Title:** Attention forward speed, head dim 128 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Operation:** Attention forward pass with a head dimension of 128.

## 2. Chart Structure and Metadata

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis Label:** Speed (TFLOPS/s)

* **Y-Axis Scale:** 0 to 1200, with major markers at 400, 800, and 1200.

* **X-Axis Label:** Sequence length

* **X-Axis Categories:** 512, 1k, 2k, 4k, 8k, 16k.

* **Legend Placement:** Top-left.

* **Legend Categories:**

  * **Triton:** Green bar

  * **cuDNN:** Red bar

  * **FlashAttention-3:** Purple bar

## 3. Data Extraction and Trend Analysis

### Trend Verification

* **Triton (Green):** Shows a steady, monotonic upward trend as sequence length increases, starting at 408 and plateauing around 630-635 TFLOPS/s at higher sequence lengths.

* **cuDNN (Red):** Rises sharply from 512 to 2k, dips slightly at 4k (886 to 864), then climbs again to 971 at 8k and 1005 at 16k. It outperforms Triton by a wide margin at every sequence length, and its growth flattens between 8k and 16k.

* **FlashAttention-3 (Purple):** Shows the most aggressive growth curve. It starts as the slowest performer at sequence length 512 (348), surpasses Triton at 1k, overtakes cuDNN at 4k, and stays marginally ahead of cuDNN at 8k and 16k.
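
The trend descriptions above can be checked mechanically against the bar labels. The following is a minimal sketch, assuming the values reported in the reconstructed data table of this section; the names `SEQ_LABELS`, `SPEEDS`, and `find_dips` are illustrative and do not come from the chart.

```python
# Bar-label values in TFLOPS/s, ordered by sequence length.
# These are copied from the chart labels; the variable names are hypothetical.
SEQ_LABELS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEEDS = {
    "Triton": [408, 502, 563, 605, 630, 635],
    "cuDNN": [617, 751, 886, 864, 971, 1005],
    "FlashAttention-3": [348, 596, 733, 918, 974, 1008],
}


def find_dips(values):
    """Return every index i where values[i] is lower than values[i - 1]."""
    return [i for i in range(1, len(values)) if values[i] < values[i - 1]]


for name, values in SPEEDS.items():
    dips = find_dips(values)
    if dips:
        where = ", ".join(f"{SEQ_LABELS[i - 1]} -> {SEQ_LABELS[i]}" for i in dips)
        print(f"{name}: not monotonic, dips at {where}")
    else:
        print(f"{name}: monotonically increasing")
```

With these values, only the cuDNN series reports a dip, between 2k and 4k.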

### Data Table (Reconstructed)

Values are extracted from the labels positioned above each individual bar.

| Sequence Length | Triton (Green) | cuDNN (Red) | FlashAttention-3 (Purple) |
| :--- | :--- | :--- | :--- |
| **512** | 408 | 617 | 348 |
| **1k** | 502 | 751 | 596 |
| **2k** | 563 | 886 | 733 |
| **4k** | 605 | 864 | 918 |
| **8k** | 630 | 971 | 974 |
| **16k** | 635 | 1005 | 1008 |
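
For downstream use, the table can be held as plain rows and queried directly. The sketch below assumes the values shown in the table and picks the fastest implementation at each sequence length; `IMPLS`, `ROWS`, and `fastest_per_length` are hypothetical names introduced for illustration.

```python
# Column order matches the table: Triton, cuDNN, FlashAttention-3.
IMPLS = ("Triton", "cuDNN", "FlashAttention-3")
SEQ_LABELS = ["512", "1k", "2k", "4k", "8k", "16k"]

# One row per sequence length, values in TFLOPS/s as read from the bar labels.
ROWS = [
    (408, 617, 348),
    (502, 751, 596),
    (563, 886, 733),
    (605, 864, 918),
    (630, 971, 974),
    (635, 1005, 1008),
]


def fastest_per_length(rows):
    """Return (implementation, speed) for the highest value in each row."""
    winners = []
    for row in rows:
        best = max(range(len(row)), key=lambda i: row[i])
        winners.append((IMPLS[best], row[best]))
    return winners


for label, (impl, speed) in zip(SEQ_LABELS, fastest_per_length(ROWS)):
    print(f"{label}: {impl} ({speed} TFLOPS/s)")
```

On this data, cuDNN leads at 512, 1k, and 2k, and FlashAttention-3 leads from 4k onward.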

## 4. Component Analysis & Key Findings

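* **Performance Scaling:** All three implementations are substantially faster at long sequence lengths than at short ones, likely because longer sequences allow better GPU utilization and occupancy. The only decrease between adjacent points is cuDNN from 2k (886) to 4k (864).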

* **Crossover Points:**

  * FlashAttention-3 is less efficient than cuDNN and Triton at very short sequences (512).

  * FlashAttention-3 overtakes Triton between 512 and 1k.

  * FlashAttention-3 overtakes cuDNN between 2k and 4k.

* **Peak Performance:** FlashAttention-3 achieves the highest recorded throughput in this set at **1008 TFLOPS/s** at a sequence length of 16k, closely followed by cuDNN at **1005 TFLOPS/s**.

* **Efficiency Gap:** At the 16k sequence length, FlashAttention-3 (1008) and cuDNN (1005) are roughly **58-59% faster** than the Triton implementation (635).
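
The crossover points and the efficiency gap listed above follow from simple arithmetic on the bar labels. This is a minimal sketch, assuming the values from the reconstructed data table; `first_lead` and `percent_faster` are illustrative helpers, not part of any benchmark tooling.

```python
SEQ_LABELS = ["512", "1k", "2k", "4k", "8k", "16k"]

# Bar-label values in TFLOPS/s, ordered by sequence length.
TRITON = [408, 502, 563, 605, 630, 635]
CUDNN = [617, 751, 886, 864, 971, 1005]
FA3 = [348, 596, 733, 918, 974, 1008]


def first_lead(a, b):
    """Return the first index where series a exceeds series b, or None."""
    for i, (x, y) in enumerate(zip(a, b)):
        if x > y:
            return i
    return None


def percent_faster(a, b):
    """Return how much faster a is than b, as a percentage of b."""
    return (a / b - 1.0) * 100.0


# First measured sequence length at which FlashAttention-3 is ahead.
print("FA3 ahead of Triton from:", SEQ_LABELS[first_lead(FA3, TRITON)])
print("FA3 ahead of cuDNN from:", SEQ_LABELS[first_lead(FA3, CUDNN)])

# Gap to Triton at the longest sequence length (16k).
print(f"FA3 vs Triton at 16k: {percent_faster(FA3[-1], TRITON[-1]):.1f}% faster")
print(f"cuDNN vs Triton at 16k: {percent_faster(CUDNN[-1], TRITON[-1]):.1f}% faster")
```

Because the chart samples only six sequence lengths, `first_lead` identifies the first measured point where FlashAttention-3 is ahead; the true crossover lies somewhere between that point and the previous one.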