TECHNICAL ASSET FINGERPRINT

c345601504990eb9605988a1

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

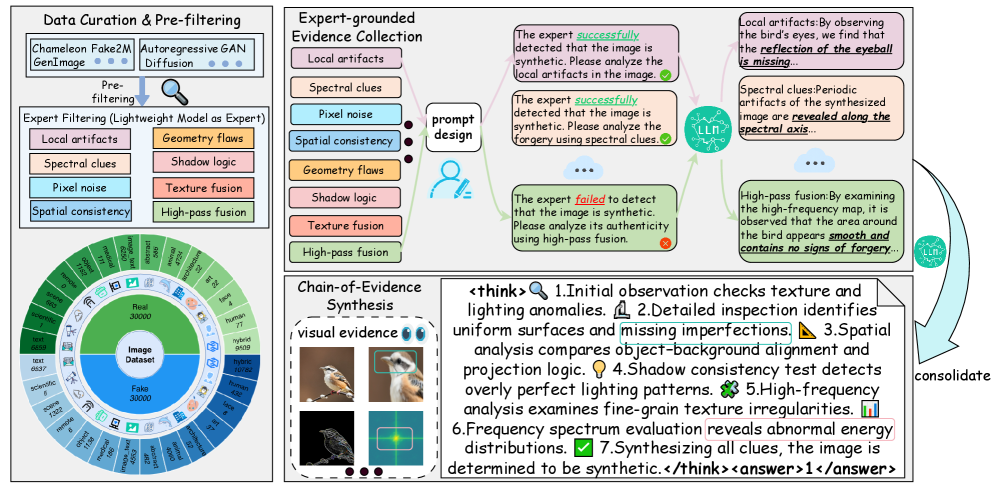

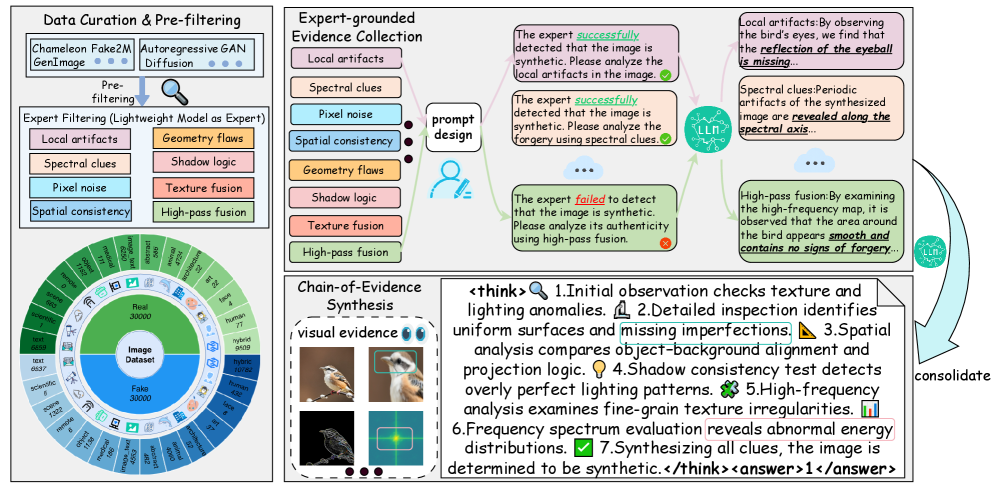

## Diagram: Multi-Stage Image Forgery Detection Framework

### Overview

The image is a technical flowchart and process diagram illustrating a comprehensive framework for detecting synthetic or forged images. It details a pipeline that moves from data curation and expert filtering to evidence collection and final synthesis of a chain-of-evidence for a conclusive judgment. The diagram combines flowcharts, a circular data visualization, annotated example images, and a textual step-by-step analysis process.

### Components/Axes

The diagram is segmented into four primary regions:

1. **Top-Left: Data Curation & Pre-filtering**

* **Flowchart Elements:**

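* Input Sources: two label groups, "Chameleon Fake2M" (apparently the source datasets Chameleon and Fake2M) and "Autoregressive GAN Diffusion" (apparently the generator families Autoregressive, GAN, and Diffusion).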

* Process: "Pre-filtering" leading to "Expert Filtering (Lightweight Model as Expert)".

* Expert Filtering Criteria (listed in two columns):

* Left Column: "Local artifacts", "Spectral clues", "Pixel noise", "Spatial consistency".

* Right Column: "Geometry flaws", "Shadow logic", "Texture fusion", "High-pass fusion".

* **Circular Data Visualization (Sunburst Chart):**

* **Center Label:** "Image Dataset".

* **Inner Ring:** Divided into two halves:

* Top Half (Green): "Real" with count "30000".

* Bottom Half (Blue): "Fake" with count "30000".

* **Outer Ring:** Sub-categories for Real and Fake images, with associated counts. The legend is embedded as labels around the ring.

* **Real Sub-categories (Green segments, clockwise from top):**

* "COCO" (count: 10000)

* "CelebA-HQ" (count: 5000)

* "FFHQ" (count: 5000)

* "LSUN" (count: 5000)

* "MetFaces" (count: 2500)

* "其他" (Other) (count: 2500)

* **Fake Sub-categories (Blue segments, clockwise from bottom):**

* "StyleGAN" (count: 5000)

* "StyleGAN2" (count: 5000)

* "StyleGAN3" (count: 5000)

* "Diffusion" (count: 5000)

* "GigaGAN" (count: 5000)

* "其他" (Other) (count: 5000)

2. **Top-Right: Expert-grounded Evidence Collection**

* **Central Process:** "prompt design" connected to an icon of a person (the expert).

* **Input Clues (List in a box):** Same as the Expert Filtering criteria from the top-left section.

* **Output Examples (Speech Bubbles):** Three examples of expert analysis outcomes.

* **Bubble 1 (Top, Green Checkmark):** "The expert *successfully* detected that the image is synthetic. Please analyze the local artifacts in the image."

* **Bubble 2 (Middle, Green Checkmark):** "The expert *successfully* detected that the image is synthetic. Please analyze the forgery using spectral clues."

* **Bubble 3 (Bottom, Red X):** "The expert *failed* to detect that the image is synthetic. Please analyze its authenticity using high-pass fusion."

* **Detailed Analysis Boxes (Right side):** Three boxes elaborating on specific clue types.

* **Box 1 (Top, Pink):** "Local artifacts: By observing the bird's eyes, we find that the *reflection of the eyeball* is missing."

* **Box 2 (Middle, Orange):** "Spectral clues: Periodic artifacts of the synthesized image are *revealed along the spectral axis*."

* **Box 3 (Bottom, Green):** "High-pass fusion: By examining the high-frequency map, it is observed that the area around the bird appears *smooth and contains no signs of forgery*."

* **LLM Icons:** Two circular icons labeled "LLM" are connected to the analysis boxes, suggesting a Large Language Model assists in generating these analyses.

3. **Bottom-Left: Chain-of-Evidence Synthesis**

* **Title:** "Chain-of-Evidence Synthesis".

* **Subtitle:** "visual evidence" with eye icons.

* **Image Grid:** A 2x2 grid of bird images with annotations.

* Top-left: A bird on a branch (possibly the unannotated input image).

* Top-right: The same bird with a green bounding box around its head.

* Bottom-left: A grayscale, high-frequency filtered version of the bird image.

* Bottom-right: A heatmap or spectral analysis visualization of the bird image, with a bright spot highlighted by a green box.

4. **Bottom-Right: Consolidated Analysis Process**

* **Flow Arrow:** A large curved arrow labeled "consolidate" points from the "Expert-grounded Evidence Collection" section to this box.

* **Text Block:** A detailed, numbered step-by-step analysis process enclosed in `<think>` and `<answer>` tags.

* **`<think>` Content:**

1. "Initial observation checks texture and lighting anomalies."

2. "Detailed inspection identifies uniform surfaces and missing imperfections."

3. "Spatial analysis compares object-background alignment and projection logic."

4. "Shadow consistency test detects overly perfect lighting patterns."

5. "High-frequency analysis examines fine-grain texture irregularities."

6. "Frequency spectrum evaluation reveals abnormal energy distributions."

7. "Synthesizing all clues, the image is determined to be synthetic."

* **`<answer>` Content:** "1" (This likely corresponds to a binary classification, e.g., 1 for "synthetic/fake").
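The `<think>`/`<answer>` layout described above lends itself to simple machine parsing. The following is a minimal sketch, assuming each reasoning step sits on its own line and starts with a number and a period; the function name `parse_chain_of_evidence` is hypothetical, and reading the answer as an integer label follows the description's guess that `1` means synthetic.

```python
import re

def parse_chain_of_evidence(text):
    """Split a <think>/<answer> response into numbered steps and a label."""
    think = re.search(r"<think>(.*?)</think>", text, re.DOTALL)
    answer = re.search(r"<answer>(.*?)</answer>", text, re.DOTALL)
    if think is None or answer is None:
        raise ValueError("expected both <think> and <answer> blocks")
    # Assumption: every step is one line of the form "N. step text".
    steps = re.findall(r"^\s*\d+\.\s*(.+?)\s*$", think.group(1), re.MULTILINE)
    # Assumption: the answer is an integer label (1 is taken to mean synthetic).
    label = int(answer.group(1).strip())
    return steps, label

example = """<think>
1. Initial observation checks texture and lighting anomalies.
2. Frequency spectrum evaluation reveals abnormal energy distributions.
3. Synthesizing all clues, the image is determined to be synthetic.
</think>
<answer>1</answer>"""

steps, label = parse_chain_of_evidence(example)
print(len(steps), label)
```

Keeping the steps as a list makes it straightforward to check that a response contains the expected number of reasoning steps before trusting its label.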

### Detailed Analysis

* **Data Flow:** The process is a staged pipeline. It starts with a curated dataset of 60,000 images (30,000 real and 30,000 fake, drawn from various sources). After pre-filtering, a lightweight "expert" model filters images using eight forgery clue categories. In the evidence-collection stage, prompts built from the expert's outcome (success or failure) and a selected clue are used, apparently with an LLM, to produce specific findings such as missing reflections or spectral artifacts. The visual evidence is then synthesized, and all clues are consolidated into a final 7-step analytical narrative that ends in a binary decision.

* **Language:** The primary language is English. The only non-English text is the Chinese characters "其他" (meaning "Other") found in two segments of the outer ring of the circular dataset chart.

* **Spatial Grounding:**

* The circular dataset chart is in the bottom-left quadrant of the top-left section.

* The "prompt design" expert icon is centrally located in the top-right section.

* The three detailed analysis boxes (Local artifacts, Spectral clues, High-pass fusion) are stacked vertically on the far right of the top-right section.

* The 2x2 image grid is in the bottom-left corner of the entire diagram.

* The consolidated analysis text block occupies the bottom-right quadrant.
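The dataset totals quoted in the data-flow description can be cross-checked against the per-source counts read from the sunburst chart. The sketch below only re-adds the numbers transcribed in this description; the dictionary and function names are illustrative, and "Other" stands for the segments labeled "其他".

```python
# Per-source counts as transcribed from the sunburst chart description.
real_counts = {
    "COCO": 10000, "CelebA-HQ": 5000, "FFHQ": 5000,
    "LSUN": 5000, "MetFaces": 2500, "Other": 2500,
}
fake_counts = {
    "StyleGAN": 5000, "StyleGAN2": 5000, "StyleGAN3": 5000,
    "Diffusion": 5000, "GigaGAN": 5000, "Other": 5000,
}

def summarize(real, fake):
    """Return class totals, the grand total, and the fraction of fake images."""
    real_total = sum(real.values())
    fake_total = sum(fake.values())
    total = real_total + fake_total
    return {
        "real": real_total,
        "fake": fake_total,
        "total": total,
        "fake_fraction": fake_total / total,
    }

summary = summarize(real_counts, fake_counts)
print(summary)
```

The outer-ring counts add up to the inner-ring values of 30,000 per class, so the transcription is at least internally consistent.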

### Key Observations

1. **Multi-Modal Evidence:** The framework relies on diverse evidence types: visual (local artifacts), signal-processing (spectral, high-frequency), and logical (shadow, geometry, spatial consistency).

2. **Expert-LLM Collaboration:** The diagram suggests a hybrid system where an "expert" model (possibly a vision model) performs detection, and an LLM assists in interpreting clues and generating explanatory text.

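3. **Failure Case Included:** The diagram explicitly includes a failure case (the red X bubble), in which the expert misses a synthetic image and the paired high-pass analysis finds a smooth region with no signs of forgery. This suggests the framework records expert misses along with the clue that explains them, rather than only its successes.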

4. **Balanced Dataset:** The dataset is balanced (30,000 real and 30,000 fake images) and draws on both real-world sources (for example COCO, CelebA-HQ, FFHQ) and generative-model sources (StyleGAN variants, Diffusion, GigaGAN).

5. **Synthesis is Key:** The final step is not just detection but the synthesis of a coherent "chain-of-evidence" narrative, moving from observation to conclusion.
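The prompts in the speech bubbles appear to combine the expert's outcome with one of the eight clue categories. A minimal sketch of such a prompt builder follows; the single template, the `CLUES` tuple, and the name `build_prompt` are assumptions made for illustration, since the bubbles in the diagram vary slightly in wording.

```python
# The eight clue categories listed under Expert Filtering.
CLUES = (
    "local artifacts", "spectral clues", "pixel noise", "spatial consistency",
    "geometry flaws", "shadow logic", "texture fusion", "high-pass fusion",
)

def build_prompt(expert_correct: bool, clue: str) -> str:
    """Build an evidence-collection prompt from the expert outcome and a clue."""
    if clue not in CLUES:
        raise ValueError(f"unknown clue category: {clue!r}")
    outcome = "successfully detected" if expert_correct else "failed to detect"
    # Hypothetical template; it approximates the bubble wording, not a quote.
    return (
        f"The expert {outcome} that the image is synthetic. "
        f"Please analyze the image using {clue}."
    )

success_prompt = build_prompt(True, "spectral clues")
failure_prompt = build_prompt(False, "high-pass fusion")
print(success_prompt)
print(failure_prompt)
```

Passing the expert's outcome into the prompt is what lets the downstream analysis explain a miss (as in the red X bubble) as well as a hit.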

### Interpretation

This diagram outlines a sophisticated, explainable AI system for image forgery detection. It moves beyond simple binary classification by:

* **Emphasizing Explainability:** Every detection is accompanied by a specific, human-readable reason (e.g., "missing eyeball reflection," "abnormal energy distributions"). This is crucial for trust and debugging.

* **Structured Reasoning:** The 7-step `<think>` process mirrors a forensic investigator's methodology, suggesting the system is designed to mimic and augment human expert reasoning.

* **Robustness Through Diversity:** By checking eight distinct clue categories, the system is less likely to be fooled by forgeries that pass one type of test but fail another. The failure case highlights an ongoing challenge: some forgeries appear "smooth" and lack high-frequency artifacts, so detection has to rely on other, potentially subtler clues.

* **Practical Pipeline:** The flow from a large, curated dataset through pre-filtering to detailed analysis suggests a scalable approach, in which a lightweight model handles initial screening before the more expensive, prompt-based evidence collection is run.
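The "spectral clues" category rests on the fact that periodic patterns show up as isolated peaks in a frequency spectrum. The toy sketch below illustrates this in one dimension with a plain discrete Fourier transform; it is not the framework's detector (a real pipeline would presumably use a 2D FFT on image data), and the function names and threshold are hypothetical.

```python
import cmath

def dft_magnitudes(signal):
    """Magnitude of the discrete Fourier transform, computed directly."""
    n = len(signal)
    return [
        abs(sum(x * cmath.exp(-2j * cmath.pi * k * t / n)
                for t, x in enumerate(signal)))
        for k in range(n)
    ]

def peak_bins(signal, threshold=1e-6):
    """Non-DC frequency bins whose magnitude exceeds the threshold."""
    mags = dft_magnitudes(signal)
    return [k for k, m in enumerate(mags) if k > 0 and m > threshold]

# A row of 16 "pixels" with an artifact repeating every 4 samples.
row = [1.0 if t % 4 == 0 else 0.0 for t in range(16)]
peaks = peak_bins(row)
print(peaks)
```

A signal with a period of 4 samples concentrates its energy at multiples of bin 4, which is the kind of regular spectral peak the description associates with synthesized images.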

The framework's core principle is that a conclusive judgment of forgery should be supported by a consolidated chain of multiple, independent pieces of visual and analytical evidence.
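One way to picture this consolidation step is as a function that turns individual findings into the numbered `<think>` narrative and a binary `<answer>`. The sketch below is an assumption-laden illustration: the `Evidence` class, the `consolidate` function, and the `min_support` voting rule are all hypothetical, because the diagram does not state how the pieces of evidence are weighed.

```python
from dataclasses import dataclass

@dataclass
class Evidence:
    clue: str                 # clue category, e.g. "Spectral clues"
    finding: str              # human-readable finding for this clue
    supports_synthetic: bool  # whether the finding points to a synthetic image

def consolidate(evidence, min_support=2):
    """Assemble findings into a <think> narrative and a binary <answer>."""
    steps = [f"{i}. {e.clue}: {e.finding}" for i, e in enumerate(evidence, start=1)]
    support = sum(e.supports_synthetic for e in evidence)
    # Hypothetical rule: call the image synthetic if enough clues agree.
    label = 1 if support >= min_support else 0
    verdict = "synthetic" if label else "real"
    steps.append(
        f"{len(steps) + 1}. Synthesizing all clues, "
        f"the image is determined to be {verdict}."
    )
    return "<think>\n" + "\n".join(steps) + "\n</think>\n<answer>" + str(label) + "</answer>"

findings = [
    Evidence("Local artifacts", "the reflection of the eyeball is missing.", True),
    Evidence("Spectral clues", "periodic artifacts appear along the spectral axis.", True),
    Evidence("High-pass fusion", "the area around the bird appears smooth.", False),
]
chain = consolidate(findings)
print(chain)
```

With the three findings taken from the diagram's analysis boxes, two clues support a synthetic verdict and one does not, so this particular rule still labels the image as synthetic.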
