EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


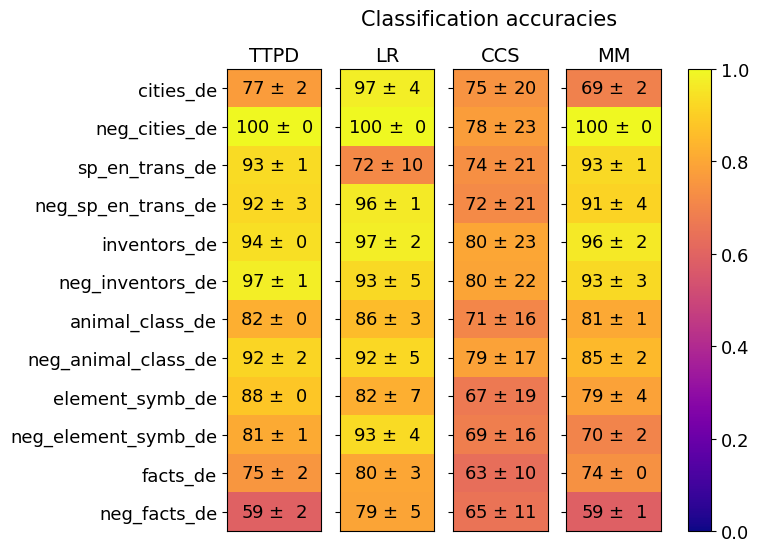

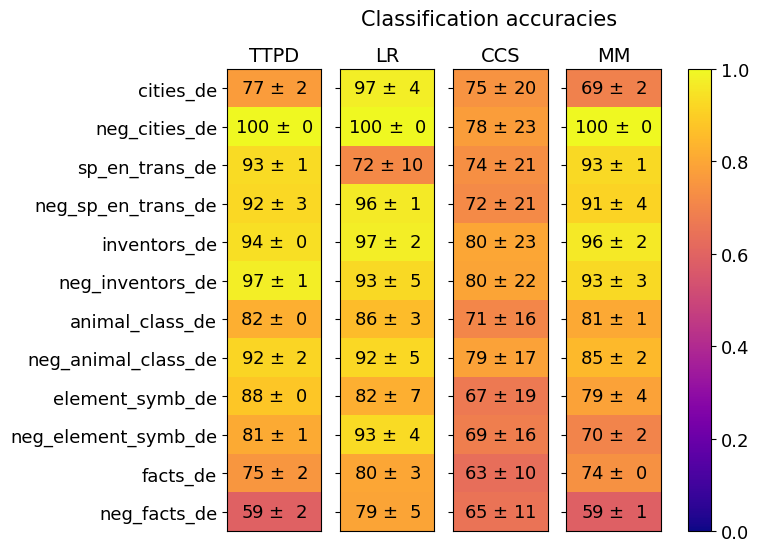

## Heatmap: Classification Accuracies

### Overview

The image is a heatmap displaying classification accuracies for different models (TTPD, LR, CCS, MM) across various categories (e.g., cities_de, neg_cities_de). The heatmap uses a color gradient from blue (low accuracy) to yellow (high accuracy) to represent the accuracy values. Each cell contains the accuracy value and its associated uncertainty (± value).

### Components/Axes

* **Title:** Classification accuracies

* **Columns (Models):** TTPD, LR, CCS, MM

* **Rows (Categories):** cities\_de, neg\_cities\_de, sp\_en\_trans\_de, neg\_sp\_en\_trans\_de, inventors\_de, neg\_inventors\_de, animal\_class\_de, neg\_animal\_class\_de, element\_symb\_de, neg\_element\_symb\_de, facts\_de, neg\_facts\_de

* **Colorbar:** Ranges from 0.0 (blue) to 1.0 (yellow), representing the classification accuracy score.

### Detailed Analysis

The heatmap presents classification accuracies as percentages, with an associated uncertainty value.

Here's a breakdown of the data, organized by category and model:

* **cities\_de:**

* TTPD: 77 ± 2

* LR: 97 ± 4

* CCS: 75 ± 20

* MM: 69 ± 2

* **neg\_cities\_de:**

* TTPD: 100 ± 0

* LR: 100 ± 0

* CCS: 78 ± 23

* MM: 100 ± 0

* **sp\_en\_trans\_de:**

* TTPD: 93 ± 1

* LR: 72 ± 10

* CCS: 74 ± 21

* MM: 93 ± 1

* **neg\_sp\_en\_trans\_de:**

* TTPD: 92 ± 3

* LR: 96 ± 1

* CCS: 72 ± 21

* MM: 91 ± 4

* **inventors\_de:**

* TTPD: 94 ± 0

* LR: 97 ± 2

* CCS: 80 ± 23

* MM: 96 ± 2

* **neg\_inventors\_de:**

* TTPD: 97 ± 1

* LR: 93 ± 5

* CCS: 80 ± 22

* MM: 93 ± 3

* **animal\_class\_de:**

* TTPD: 82 ± 0

* LR: 86 ± 3

* CCS: 71 ± 16

* MM: 81 ± 1

* **neg\_animal\_class\_de:**

* TTPD: 92 ± 2

* LR: 92 ± 5

* CCS: 79 ± 17

* MM: 85 ± 2

* **element\_symb\_de:**

* TTPD: 88 ± 0

* LR: 82 ± 7

* CCS: 67 ± 19

* MM: 79 ± 4

* **neg\_element\_symb\_de:**

* TTPD: 81 ± 1

* LR: 93 ± 4

* CCS: 69 ± 16

* MM: 70 ± 2

* **facts\_de:**

* TTPD: 75 ± 2

* LR: 80 ± 3

* CCS: 63 ± 10

* MM: 74 ± 0

* **neg\_facts\_de:**

* TTPD: 59 ± 2

* LR: 79 ± 5

* CCS: 65 ± 11

* MM: 59 ± 1

### Key Observations

* LR has the highest accuracy, alone or tied, in 9 of the 12 categories; its weakest result is on "sp\_en\_trans\_de" (72 ± 10).

* CCS generally has lower accuracy and higher uncertainty compared to other models.

* TTPD and MM perform similarly, with some variations depending on the category.

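* TTPD and MM score lowest on "neg\_facts\_de" (59 ± 2 and 59 ± 1). LR's lowest score is on "sp\_en\_trans\_de" (72 ± 10), and CCS's is on "facts\_de" (63 ± 10).

* TTPD, LR, and MM all reach 100 ± 0 on "neg\_cities\_de"; CCS reaches only 78 ± 23.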

### Interpretation

The heatmap provides a visual comparison of the classification accuracies of four models across twelve categories. LR has the highest accuracy in most categories, while CCS tends to have lower accuracy and much higher uncertainty (± 10 to ± 23). "neg\_facts\_de" is the hardest category for TTPD and MM (59 each), but LR scores 79 ± 5 on it, and CCS scores slightly lower on "facts\_de" (63 ± 10) than on "neg\_facts\_de" (65 ± 11). TTPD, LR, and MM all reach 100 ± 0 on "neg\_cities\_de", which suggests the category is easy for those three; CCS reaches only 78 ± 23. The uncertainty values show how much each model's performance varies, with CCS showing the highest uncertainty in every category.
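The summary claims above can be checked directly against the transcribed values. The following is a minimal sketch: the names `METHODS`, `ACC`, `mean_per_method`, and `hardest_dataset` are illustrative, and the unweighted mean over categories is an assumption about how "overall" performance should be measured, not something the image states.

```python
# Accuracy means (percent) transcribed from the breakdown above.
# Column order: TTPD, LR, CCS, MM.
METHODS = ["TTPD", "LR", "CCS", "MM"]
ACC = {
    "cities_de":           [77, 97, 75, 69],
    "neg_cities_de":       [100, 100, 78, 100],
    "sp_en_trans_de":      [93, 72, 74, 93],
    "neg_sp_en_trans_de":  [92, 96, 72, 91],
    "inventors_de":        [94, 97, 80, 96],
    "neg_inventors_de":    [97, 93, 80, 93],
    "animal_class_de":     [82, 86, 71, 81],
    "neg_animal_class_de": [92, 92, 79, 85],
    "element_symb_de":     [88, 82, 67, 79],
    "neg_element_symb_de": [81, 93, 69, 70],
    "facts_de":            [75, 80, 63, 74],
    "neg_facts_de":        [59, 79, 65, 59],
}


def mean_per_method(acc):
    """Unweighted mean accuracy of each method over all categories."""
    n = len(acc)
    return {m: sum(row[i] for row in acc.values()) / n
            for i, m in enumerate(METHODS)}


def hardest_dataset(acc):
    """For each method, the category with its lowest mean accuracy."""
    return {m: min(acc, key=lambda d: acc[d][i])
            for i, m in enumerate(METHODS)}


means = mean_per_method(ACC)
hardest = hardest_dataset(ACC)
for m in METHODS:
    print(f"{m}: mean {means[m]:.1f}, hardest {hardest[m]}")
```

Because the cell values are themselves averages with sizeable uncertainties, small differences between these per-method means should be read with caution.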


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Heatmap: Classification Accuracies

### Overview

This image presents a heatmap displaying classification accuracies for various categories across four different models: TTPD, LR, CCS, and MM. The categories represent different types of text data, including cities, negative examples of cities, translations, inventors, animal classes, element symbols, and facts, each in German ("_de"). Accuracy is represented by color, ranging from 0.0 (dark blue) to 1.0 (dark yellow). Each value is also presented with a ± standard deviation.

### Components/Axes

* **X-axis:** Represents the four classification models: TTPD, LR, CCS, and MM.

* **Y-axis:** Represents the categories of text data:

* cities\_de

* neg\_cities\_de

* sp\_en\_trans\_de (Spanish to English translations in German)

* neg\_sp\_en\_trans\_de (Negative examples of Spanish to English translations in German)

* inventors\_de

* neg\_inventors\_de

* animal\_class\_de

* neg\_animal\_class\_de

* element\_symb\_de

* neg\_element\_symb\_de

* facts\_de

* neg\_facts\_de

* **Color Scale (Legend):** Located on the right side of the heatmap, ranging from dark blue (0.0) to dark yellow (1.0), indicating the accuracy level.

* **Title:** "Classification accuracies" positioned at the top-center of the heatmap.

### Detailed Analysis

The heatmap displays accuracy values with standard deviations. The values below are grouped by model.

**TTPD (First Column):**

* cities\_de: 77 ± 2

* neg\_cities\_de: 100 ± 0

* sp\_en\_trans\_de: 93 ± 1

* neg\_sp\_en\_trans\_de: 92 ± 3

* inventors\_de: 94 ± 0

* neg\_inventors\_de: 97 ± 1

* animal\_class\_de: 82 ± 0

* neg\_animal\_class\_de: 92 ± 2

* element\_symb\_de: 88 ± 0

* neg\_element\_symb\_de: 81 ± 1

* facts\_de: 75 ± 2

* neg\_facts\_de: 59 ± 2

**LR (Second Column):**

* cities\_de: 97 ± 4

* neg\_cities\_de: 100 ± 0

* sp\_en\_trans\_de: 72 ± 10

* neg\_sp\_en\_trans\_de: 96 ± 1

* inventors\_de: 97 ± 2

* neg\_inventors\_de: 93 ± 5

* animal\_class\_de: 86 ± 3

* neg\_animal\_class\_de: 92 ± 5

* element\_symb\_de: 82 ± 7

* neg\_element\_symb\_de: 93 ± 4

* facts\_de: 80 ± 3

* neg\_facts\_de: 79 ± 5

**CCS (Third Column):**

* cities\_de: 75 ± 20

* neg\_cities\_de: 78 ± 23

* sp\_en\_trans\_de: 74 ± 21

* neg\_sp\_en\_trans\_de: 72 ± 21

* inventors\_de: 80 ± 23

* neg\_inventors\_de: 80 ± 22

* animal\_class\_de: 71 ± 16

* neg\_animal\_class\_de: 79 ± 17

* element\_symb\_de: 67 ± 19

* neg\_element\_symb\_de: 69 ± 16

* facts\_de: 63 ± 10

* neg\_facts\_de: 65 ± 11

**MM (Fourth Column):**

* cities\_de: 69 ± 2

* neg\_cities\_de: 100 ± 0

* sp\_en\_trans\_de: 93 ± 1

* neg\_sp\_en\_trans\_de: 91 ± 4

* inventors\_de: 96 ± 2

* neg\_inventors\_de: 93 ± 3

* animal\_class\_de: 81 ± 1

* neg\_animal\_class\_de: 85 ± 2

* element\_symb\_de: 79 ± 4

* neg\_element\_symb\_de: 70 ± 2

* facts\_de: 74 ± 0

* neg\_facts\_de: 59 ± 1

### Key Observations

* **Negative Examples:** Accuracy on the "neg\_" categories is mixed rather than uniformly high. TTPD, LR, and MM reach 100 ± 0 on "neg\_cities\_de", but "neg\_facts\_de" ranges from 59 to 79, and CCS stays at or below 80 on every "neg\_" category.

* **LR Performance:** The LR model has the highest accuracy on "cities\_de" (97 ± 4) and shares a perfect 100 ± 0 on "neg\_cities\_de" with TTPD and MM.

* **CCS Performance:** The CCS model shows the lowest accuracy in 9 of the 12 categories and the largest standard deviations throughout (± 10 to ± 23), suggesting high variability in its performance.

* **TTPD and MM:** These models show small standard deviations (at most ± 4) and generally fall between LR and CCS in terms of accuracy.

* **Facts and Translations:** "facts\_de" and "neg\_facts\_de" are among the lowest-scoring categories for every model. On "sp\_en\_trans\_de", only LR (72 ± 10) and CCS (74 ± 21) score low; TTPD and MM both reach 93 ± 1.

### Interpretation

The heatmap demonstrates the performance of four different classification models on twelve German-language ("\_de") categories. The LR model appears to be the most accurate overall, with the highest accuracy in most categories. The CCS model, however, exhibits lower and much more variable performance, indicating it may be less suitable for these classification tasks.

The lower accuracy on "facts\_de" and "neg\_facts\_de" could indicate that these categories are more challenging to classify, potentially due to the complexity of factual information. The drop on "sp\_en\_trans\_de" is specific to LR and CCS, so it more likely reflects those methods than the difficulty of the category. The standard deviations provide a measure of the reliability of the accuracy estimates; larger standard deviations suggest greater uncertainty.

The "neg\_" prefix marks a counterpart to each base category, though the image does not say how these datasets are constructed. Results on them are uneven: three models are perfect on "neg\_cities\_de", while TTPD and MM drop to 59 on "neg\_facts\_de", so strong performance on one "neg\_" category does not carry over to the others.
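One way to examine how the "neg\_" categories compare with their counterparts is to average the difference between each pair for every model. The sketch below assumes each "neg\_X" row pairs with row "X"; the names `METHODS`, `ACC`, and `neg_gap` are illustrative and do not come from the image.

```python
# Accuracy means (percent) transcribed from the lists above.
# Column order: TTPD, LR, CCS, MM.
METHODS = ["TTPD", "LR", "CCS", "MM"]
ACC = {
    "cities_de":           [77, 97, 75, 69],
    "neg_cities_de":       [100, 100, 78, 100],
    "sp_en_trans_de":      [93, 72, 74, 93],
    "neg_sp_en_trans_de":  [92, 96, 72, 91],
    "inventors_de":        [94, 97, 80, 96],
    "neg_inventors_de":    [97, 93, 80, 93],
    "animal_class_de":     [82, 86, 71, 81],
    "neg_animal_class_de": [92, 92, 79, 85],
    "element_symb_de":     [88, 82, 67, 79],
    "neg_element_symb_de": [81, 93, 69, 70],
    "facts_de":            [75, 80, 63, 74],
    "neg_facts_de":        [59, 79, 65, 59],
}


def neg_gap(acc):
    """Mean of (neg_X - X) accuracy per method, in percentage points."""
    base_names = [d for d in acc if not d.startswith("neg_")]
    gaps = {}
    for i, method in enumerate(METHODS):
        diffs = [acc["neg_" + d][i] - acc[d][i] for d in base_names]
        gaps[method] = sum(diffs) / len(diffs)
    return gaps


gaps = neg_gap(ACC)
for method, gap in gaps.items():
    print(f"{method}: {gap:+.1f} points (neg_ minus counterpart)")
```

A positive value means the model scores higher on the "neg\_" variant on average; the per-pair differences vary widely, so the average alone hides a lot.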


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Heatmap Chart: Classification Accuracies

### Overview

This image is a heatmap chart titled "Classification accuracies." It displays the performance (accuracy scores with standard deviations) of four different classification methods (TTPD, LR, CCS, MM) across twelve distinct datasets. The performance is encoded both numerically within each cell and by a color gradient, with yellow indicating higher accuracy (closer to 1.0) and darker purple indicating lower accuracy (closer to 0.0).

### Components/Axes

* **Chart Title:** "Classification accuracies" (top center).

* **Y-Axis (Rows):** Lists twelve dataset names. From top to bottom:

1. `cities_de`

2. `neg_cities_de`

3. `sp_en_trans_de`

4. `neg_sp_en_trans_de`

5. `inventors_de`

6. `neg_inventors_de`

7. `animal_class_de`

8. `neg_animal_class_de`

9. `element_symb_de`

10. `neg_element_symb_de`

11. `facts_de`

12. `neg_facts_de`

* **X-Axis (Columns):** Lists four method abbreviations. From left to right:

1. `TTPD`

2. `LR`

3. `CCS`

4. `MM`

* **Color Scale/Legend:** A vertical bar on the far right of the chart. It maps color to accuracy values from 0.0 (dark purple) to 1.0 (bright yellow). Key markers are at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **Data Cells:** Each cell contains a numerical accuracy score formatted as `mean ± standard deviation`. The background color of the cell corresponds to the `mean` value according to the color scale.
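The cell format described above can be parsed mechanically. This is a minimal sketch: `CELL_PATTERN`, `parse_cell`, and `colorbar_position` are hypothetical helper names, and dividing by 100 assumes the cell values are percentages of the 0.0 to 1.0 color scale, which the chart implies but does not state.

```python
import re

# Matches cells such as "77 ± 2"; "+/-" is accepted as an ASCII fallback.
CELL_PATTERN = re.compile(
    r"^\s*(\d+(?:\.\d+)?)\s*(?:±|\+/-)\s*(\d+(?:\.\d+)?)\s*$"
)


def parse_cell(text):
    """Split a 'mean ± std' cell into two floats (both in percent)."""
    match = CELL_PATTERN.match(text)
    if match is None:
        raise ValueError(f"not a 'mean ± std' cell: {text!r}")
    return float(match.group(1)), float(match.group(2))


def colorbar_position(text):
    """Map the cell's mean onto the 0.0-1.0 color scale (assumes percent)."""
    mean, _std = parse_cell(text)
    return mean / 100.0


print(parse_cell("77 ± 2"))
print(colorbar_position("100 ± 0"))
```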

### Detailed Analysis

Below is the extracted data for each method across all datasets. Values are presented as `Accuracy ± Standard Deviation`.

**Method: TTPD**

* `cities_de`: 77 ± 2

* `neg_cities_de`: 100 ± 0

* `sp_en_trans_de`: 93 ± 1

* `neg_sp_en_trans_de`: 92 ± 3

* `inventors_de`: 94 ± 0

* `neg_inventors_de`: 97 ± 1

* `animal_class_de`: 82 ± 0

* `neg_animal_class_de`: 92 ± 2

* `element_symb_de`: 88 ± 0

* `neg_element_symb_de`: 81 ± 1

* `facts_de`: 75 ± 2

* `neg_facts_de`: 59 ± 2

**Method: LR**

* `cities_de`: 97 ± 4

* `neg_cities_de`: 100 ± 0

* `sp_en_trans_de`: 72 ± 10

* `neg_sp_en_trans_de`: 96 ± 1

* `inventors_de`: 97 ± 2

* `neg_inventors_de`: 93 ± 5

* `animal_class_de`: 86 ± 3

* `neg_animal_class_de`: 92 ± 5

* `element_symb_de`: 82 ± 7

* `neg_element_symb_de`: 93 ± 4

* `facts_de`: 80 ± 3

* `neg_facts_de`: 79 ± 5

**Method: CCS**

* `cities_de`: 75 ± 20

* `neg_cities_de`: 78 ± 23

* `sp_en_trans_de`: 74 ± 21

* `neg_sp_en_trans_de`: 72 ± 21

* `inventors_de`: 80 ± 23

* `neg_inventors_de`: 80 ± 22

* `animal_class_de`: 71 ± 16

* `neg_animal_class_de`: 79 ± 17

* `element_symb_de`: 67 ± 19

* `neg_element_symb_de`: 69 ± 16

* `facts_de`: 63 ± 10

* `neg_facts_de`: 65 ± 11

**Method: MM**

* `cities_de`: 69 ± 2

* `neg_cities_de`: 100 ± 0

* `sp_en_trans_de`: 93 ± 1

* `neg_sp_en_trans_de`: 91 ± 4

* `inventors_de`: 96 ± 2

* `neg_inventors_de`: 93 ± 3

* `animal_class_de`: 81 ± 1

* `neg_animal_class_de`: 85 ± 2

* `element_symb_de`: 79 ± 4

* `neg_element_symb_de`: 70 ± 2

* `facts_de`: 74 ± 0

* `neg_facts_de`: 59 ± 1

### Key Observations

1. **Perfect Scores:** The `neg_cities_de` dataset achieves a perfect accuracy of 100 ± 0 for three methods (TTPD, LR, MM). The `cities_de` dataset also scores very high (97 ± 4) with LR.

2. **Method Performance Variability:**

* **LR** shows the highest peak performance (multiple scores in the high 90s) but also has notable variability, such as a significant drop on `sp_en_trans_de` (72 ± 10).

* **TTPD** is generally consistent and high-performing, with its lowest score on `neg_facts_de` (59 ± 2).

* **CCS** has the lowest mean accuracy on 9 of the 12 datasets and exhibits the highest standard deviations on all of them (often ±20 or more), indicating very unstable performance.

* **MM** performs strongly on several datasets but shows a sharp decline on `neg_facts_de` (59 ± 1), matching TTPD's low point.

3. **Dataset Difficulty:** The `neg_facts_de` dataset appears to be the most challenging, yielding the lowest scores for two of the four methods (TTPD and MM, both at 59). CCS scores lowest on `facts_de` (63 ± 10) and LR on `sp_en_trans_de` (72 ± 10). The `facts_de` dataset is also relatively difficult.

4. **Negation Effect:** For many datasets, the "neg_" variant (e.g., `neg_cities_de`) does not necessarily perform worse than its positive counterpart. In some cases, it performs better (e.g., TTPD on `neg_inventors_de` vs. `inventors_de`).

### Interpretation

This heatmap provides a comparative benchmark of four classification methods across a suite of tasks, likely related to natural language processing or knowledge representation given the dataset names (e.g., `cities_de`, `sp_en_trans_de` suggesting German language tasks).

* **What the data suggests:** The LR method appears to be the most capable overall, achieving top or near-top scores on most datasets, though its high variance on one task suggests potential sensitivity. TTPD is a robust and reliable second choice. The CCS method is clearly underperforming and unstable, suggesting it may be unsuitable for these tasks or requires significant tuning. The MM method is competitive but has specific weaknesses.

* **How elements relate:** The color gradient allows for immediate visual comparison. The stark contrast between the bright yellow cells (high accuracy) and the darker orange/purple cells (lower accuracy) quickly draws attention to the best and worst method-dataset pairings. The inclusion of standard deviation is critical, revealing that the poor performance of CCS is not just low but also highly unreliable.

* **Notable anomalies:** The perfect 100% accuracy on `neg_cities_de` for three methods is striking and may indicate that this dataset is trivially easy or that there is a potential issue with data leakage or overfitting for that specific task. The consistently high standard deviations for CCS are a major red flag regarding its robustness. The parallel low scores for TTPD and MM on `neg_facts_de` suggest an inherent difficulty in that dataset that these two methods cannot overcome.
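The stability observations can be quantified from the extracted cells. The sketch below counts how often a method has the lowest mean on a dataset and averages its reported standard deviations; `METHODS`, `CELLS`, `lowest_count`, and `mean_std` are illustrative names, and averaging standard deviations is only a rough summary, not a statistic given by the chart.

```python
METHODS = ("TTPD", "LR", "CCS", "MM")

# (mean, std) in percent per dataset, in METHODS order, from the lists above.
CELLS = {
    "cities_de":           [(77, 2), (97, 4), (75, 20), (69, 2)],
    "neg_cities_de":       [(100, 0), (100, 0), (78, 23), (100, 0)],
    "sp_en_trans_de":      [(93, 1), (72, 10), (74, 21), (93, 1)],
    "neg_sp_en_trans_de":  [(92, 3), (96, 1), (72, 21), (91, 4)],
    "inventors_de":        [(94, 0), (97, 2), (80, 23), (96, 2)],
    "neg_inventors_de":    [(97, 1), (93, 5), (80, 22), (93, 3)],
    "animal_class_de":     [(82, 0), (86, 3), (71, 16), (81, 1)],
    "neg_animal_class_de": [(92, 2), (92, 5), (79, 17), (85, 2)],
    "element_symb_de":     [(88, 0), (82, 7), (67, 19), (79, 4)],
    "neg_element_symb_de": [(81, 1), (93, 4), (69, 16), (70, 2)],
    "facts_de":            [(75, 2), (80, 3), (63, 10), (74, 0)],
    "neg_facts_de":        [(59, 2), (79, 5), (65, 11), (59, 1)],
}


def lowest_count(method):
    """Datasets on which `method` has the lowest (or tied-lowest) mean."""
    i = METHODS.index(method)
    return sum(
        1 for row in CELLS.values()
        if row[i][0] == min(mean for mean, _std in row)
    )


def mean_std(method):
    """Average of the reported standard deviations for `method`."""
    i = METHODS.index(method)
    return sum(row[i][1] for row in CELLS.values()) / len(CELLS)


for m in METHODS:
    print(f"{m}: lowest on {lowest_count(m)} datasets, mean std {mean_std(m):.2f}")
```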


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Heatmap: Classification accuracies

### Overview

The image is a heatmap visualizing classification accuracy metrics across multiple algorithms and categories. The heatmap uses a color gradient from purple (low accuracy) to yellow (high accuracy), with numerical values and uncertainty ranges (±) embedded in each cell. The data is organized in a matrix format with rows representing categories and columns representing algorithms.

### Components/Axes

- **Title**: "Classification accuracies" (top center)

- **X-axis (Columns)**: Algorithms labeled as:

- TTPD

- LR

- CCS

- MM

- **Y-axis (Rows)**: Categories labeled as:

- cities_de

- neg_cities_de

- sp_en_trans_de

- neg_sp_en_trans_de

- inventors_de

- neg_inventors_de

- animal_class_de

- neg_animal_class_de

- element_symb_de

- neg_element_symb_de

- facts_de

- neg_facts_de

- **Legend**: Right-aligned colorbar with values from 0.0 (purple) to 1.0 (yellow), labeled "Classification accuracies"

- **Color Gradient**: Purple → Red → Orange → Yellow (higher accuracy)

### Detailed Analysis

#### Algorithm Performance

1. **TTPD**:

- cities_de: 77 ± 2 (orange)

- neg_cities_de: 100 ± 0 (yellow)

- sp_en_trans_de: 93 ± 1 (orange)

- neg_sp_en_trans_de: 92 ± 3 (orange)

- inventors_de: 94 ± 0 (orange)

- neg_inventors_de: 97 ± 1 (yellow)

- animal_class_de: 82 ± 0 (orange)

- neg_animal_class_de: 92 ± 2 (orange)

- element_symb_de: 88 ± 0 (orange)

- neg_element_symb_de: 81 ± 1 (orange)

- facts_de: 75 ± 2 (orange)

- neg_facts_de: 59 ± 2 (red)

2. **LR**:

- cities_de: 97 ± 4 (yellow)

- neg_cities_de: 100 ± 0 (yellow)

- sp_en_trans_de: 72 ± 10 (red)

- neg_sp_en_trans_de: 96 ± 1 (yellow)

- inventors_de: 97 ± 2 (yellow)

- neg_inventors_de: 93 ± 5 (orange)

- animal_class_de: 86 ± 3 (orange)

- neg_animal_class_de: 92 ± 5 (orange)

- element_symb_de: 82 ± 7 (orange)

- neg_element_symb_de: 93 ± 4 (yellow)

- facts_de: 80 ± 3 (orange)

- neg_facts_de: 79 ± 5 (orange)

3. **CCS**:

- cities_de: 75 ± 20 (orange)

- neg_cities_de: 78 ± 23 (orange)

- sp_en_trans_de: 74 ± 21 (orange)

- neg_sp_en_trans_de: 72 ± 21 (orange)

- inventors_de: 80 ± 23 (orange)

- neg_inventors_de: 80 ± 22 (orange)

- animal_class_de: 71 ± 16 (orange)

- neg_animal_class_de: 79 ± 17 (orange)

- element_symb_de: 67 ± 19 (red)

- neg_element_symb_de: 69 ± 16 (red)

- facts_de: 63 ± 10 (red)

- neg_facts_de: 65 ± 11 (red)

4. **MM**:

- cities_de: 69 ± 2 (red)

- neg_cities_de: 100 ± 0 (yellow)

- sp_en_trans_de: 93 ± 1 (yellow)

- neg_sp_en_trans_de: 91 ± 4 (orange)

- inventors_de: 96 ± 2 (yellow)

- neg_inventors_de: 93 ± 3 (yellow)

- animal_class_de: 81 ± 1 (orange)

- neg_animal_class_de: 85 ± 2 (orange)

- element_symb_de: 79 ± 4 (orange)

- neg_element_symb_de: 70 ± 2 (red)

- facts_de: 74 ± 0 (orange)

- neg_facts_de: 59 ± 1 (red)

### Key Observations

1. **Highest Accuracy**:

- TTPD, LR, and MM achieve 100% accuracy in neg_cities_de.

- LR and MM also show 93-97% accuracy in inventors_de and neg_inventors_de.

2. **Lowest Accuracy**:

- CCS struggles most with facts_de (63 ± 10), neg_facts_de (65 ± 11), and element_symb_de (67 ± 19).

- TTPD and MM have the lowest accuracy in neg_facts_de (59 ± 2 and 59 ± 1, respectively).

3. **Algorithm-Specific Trends**:

- **LR**: Consistently high performance in neg_cities_de (100%) and inventors_de (97%).

- **CCS**: Lower accuracy across most categories, with significant uncertainty (±10-23).

- **MM**: Strong performance in neg_cities_de (100%) and inventors_de (96%), but weaker in neg_facts_de (59%).

4. **Category-Specific Trends**:

- **neg_ categories**: No uniform trend; accuracy ranges from 59-79% on neg_facts_de to 78-100% on neg_cities_de.

- **Categories without the neg_ prefix**: Accuracy is 69-97% on cities_de and 80-97% on inventors_de.

### Interpretation

The heatmap reveals that **LR has the highest mean accuracy** over the twelve categories (about 88.9%), followed by TTPD (about 85.8%), MM (82.5%), and CCS (about 72.8%). The **neg_ categories** show no consistent trend: neg_facts_de is the lowest-scoring category for TTPD and MM, while neg_cities_de is the highest-scoring category for TTPD, LR, and MM. The **CCS algorithm** exhibits the highest variability (e.g., ±20 in cities_de), indicating potential instability in its performance. The **LR algorithm** scores 79% or higher in every category except sp_en_trans_de (72 ± 10), while **MM** balances strong performance in key areas with moderate results in others. The lowest-accuracy cells fall in the CCS column and in the facts_de and neg_facts_de rows.
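Rankings like the one above are easier to verify with a per-category comparison than with color impressions. The following is a minimal sketch using the transcribed means; `METHODS`, `ACC`, `score_range`, and `head_to_head` are illustrative names, and a "win" here simply means a higher reported mean, ignoring the ± ranges.

```python
METHODS = ("TTPD", "LR", "CCS", "MM")

# Mean accuracy (percent) per category, in METHODS order.
ACC = {
    "cities_de":           (77, 97, 75, 69),
    "neg_cities_de":       (100, 100, 78, 100),
    "sp_en_trans_de":      (93, 72, 74, 93),
    "neg_sp_en_trans_de":  (92, 96, 72, 91),
    "inventors_de":        (94, 97, 80, 96),
    "neg_inventors_de":    (97, 93, 80, 93),
    "animal_class_de":     (82, 86, 71, 81),
    "neg_animal_class_de": (92, 92, 79, 85),
    "element_symb_de":     (88, 82, 67, 79),
    "neg_element_symb_de": (81, 93, 69, 70),
    "facts_de":            (75, 80, 63, 74),
    "neg_facts_de":        (59, 79, 65, 59),
}


def score_range(method):
    """(lowest, highest) mean accuracy of `method` over all categories."""
    i = METHODS.index(method)
    scores = [row[i] for row in ACC.values()]
    return min(scores), max(scores)


def head_to_head(a, b):
    """(wins, ties, losses) of method `a` against method `b` by category."""
    ia, ib = METHODS.index(a), METHODS.index(b)
    wins = sum(1 for row in ACC.values() if row[ia] > row[ib])
    ties = sum(1 for row in ACC.values() if row[ia] == row[ib])
    return wins, ties, len(ACC) - wins - ties


for m in METHODS:
    print(m, score_range(m))
print("TTPD vs MM:", head_to_head("TTPD", "MM"))
```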
