EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Diagram: Video Instance Segmentation and Question Answering

### Overview

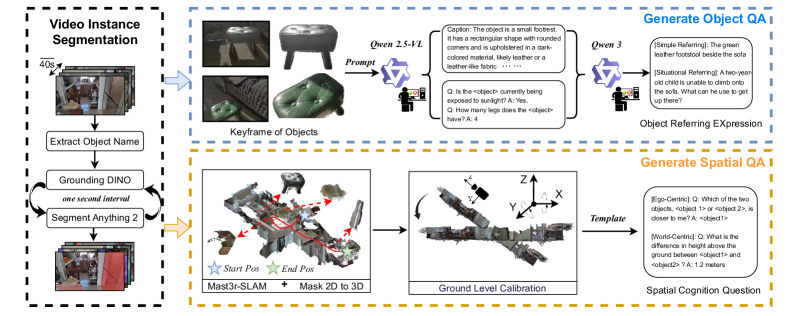

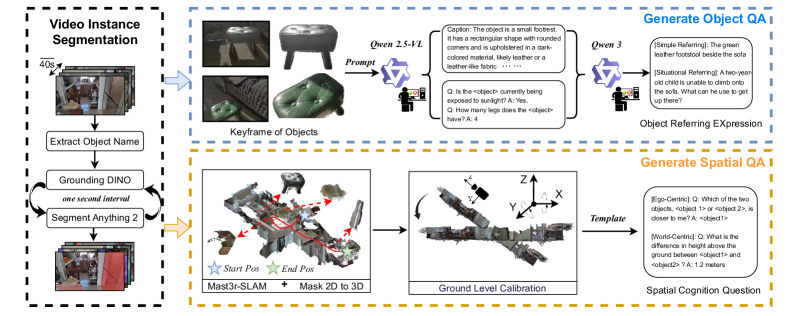

The diagram illustrates a pipeline that segments object instances in a video and then generates object and spatial question-answer (QA) pairs. It traces the steps from video input to questions about objects and their spatial relationships within the video.

### Components/Axes

* **Overall Structure:** The diagram is divided into three main sections, each enclosed in a dashed box: "Video Instance Segmentation" (left), "Generate Object QA" (top-right), and "Generate Spatial QA" (bottom-right).

* **Video Instance Segmentation:**

    * Input: A series of video frames, indicated by a stack of images with a "40s" label suggesting a time interval.

    * Process:

        * "Extract Object Name" - A process to identify and name objects within the video frames.

        * "Grounding DINO" and "Segment Anything 2" - These are looped processes, with "one second interval" indicating the frequency of iteration.

    * Output: Segmented video frames.

* **Generate Object QA:**

    * Input: "Keyframe of Objects" - Images of identified objects.

    * Process:

        * "Qwen 2.5-VL" - A prompt is given to a model.

        * The model generates a "Caption" describing the object (e.g., "The object is a small footrest...") and poses questions (Q) about the object (e.g., "Is the <object> currently being exposed to sunlight?"). Answers (A) are provided.

        * "Qwen 3" - Another model is used to generate object referring expressions.

    * Output: "Object Referring Expression" - Examples include "[Simple Referring]: The green leather footstool beside the sofa" and "[Situational Referring]: A two-year old child is unable to climb onto the sofa. What can he use to get up there?".

* **Generate Spatial QA:**

    * Input: 3D reconstruction of the scene, labeled "Mast3r-SLAM + Mask 2D to 3D". "Start Pos" and "End Pos" are marked with star icons.

    * Process:

        * "Ground Level Calibration" - The 3D scene is calibrated with X, Y, and Z axes indicated.

        * "Template" - A template is used to generate spatial questions.

    * Output: "Spatial Cognition Question" - Examples include "[Ego-Centric]: Q: Which of the two objects, <object 1> or <object 2>, is closer to me? A: <object1>" and "[World-Centric]: Q: What is the difference in height above the ground between <object1> and <object2>? A: 1.2 meters".
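The "Template" step for spatial questions could look roughly like the sketch below. It is only an illustration: the `SceneObject` record, the function names, the coordinates, and the assumption that the Z axis points up from the calibrated ground are all hypothetical and do not come from the diagram. Only the two question wordings are taken from the examples above.

```python
import math
from dataclasses import dataclass


@dataclass
class SceneObject:
    """Hypothetical record: an object name and its 3D centroid in metres."""
    name: str
    centroid: tuple  # (x, y, z), with z assumed to point up from the ground


EGO_TEMPLATE = "Which of the two objects, {a} or {b}, is closer to me?"
WORLD_TEMPLATE = (
    "What is the difference in height above the ground between {a} and {b}?"
)


def ego_centric_qa(obj_a, obj_b, camera_pos):
    """Fill the ego-centric template; the answer is the object nearer the camera."""
    dist_a = math.dist(obj_a.centroid, camera_pos)
    dist_b = math.dist(obj_b.centroid, camera_pos)
    answer = obj_a.name if dist_a <= dist_b else obj_b.name
    return EGO_TEMPLATE.format(a=obj_a.name, b=obj_b.name), answer


def world_centric_qa(obj_a, obj_b):
    """Fill the world-centric template; the answer is the absolute z difference."""
    diff = abs(obj_a.centroid[2] - obj_b.centroid[2])
    return WORLD_TEMPLATE.format(a=obj_a.name, b=obj_b.name), f"{diff:.1f} meters"


# Made-up scene values, chosen only to exercise the two templates.
footstool = SceneObject("footstool", (1.0, 0.5, 0.2))
shelf = SceneObject("shelf", (3.0, 2.0, 1.4))
camera = (0.0, 0.0, 1.5)

print(ego_centric_qa(footstool, shelf, camera))
print(world_centric_qa(footstool, shelf))
```

Because the answers are computed from geometry rather than written by a language model, a template of this kind yields QA pairs that are only as accurate as the reconstruction behind them.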

### Detailed Analysis

* **Video Instance Segmentation:** The process starts with a video input, segments objects within the video using "Grounding DINO" and "Segment Anything 2", and extracts object names.

* **Generate Object QA:** Keyframes of objects are used as input to a model ("Qwen 2.5-VL"), which generates captions and questions about the objects. Another model ("Qwen 3") generates object referring expressions.

* **Generate Spatial QA:** A 3D reconstruction of the scene is used to generate spatial questions about the objects. Ground level calibration is performed, and a template is used to generate the questions.

### Key Observations

* The diagram illustrates a pipeline for generating object and spatial questions from video input.

* The process involves video instance segmentation, object recognition, and 3D reconstruction.

* The generated questions are designed to test spatial cognition and object understanding.

### Interpretation

The diagram presents a system that combines video analysis with question generation. The system aims to understand the content of a video, identify objects, and generate questions that require spatial reasoning and object understanding. This type of system could be used in various applications, such as robotics, virtual reality, and education. The use of models like "Grounding DINO", "Segment Anything 2", "Qwen 2.5-VL", and "Qwen 3" suggests the system leverages recent advances in computer vision and natural language processing. The inclusion of both ego-centric and world-centric spatial questions indicates an attempt to capture different perspectives and levels of spatial understanding.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Visual Representation of a Multi-Stage Object and Spatial QA Pipeline

### Overview

The diagram illustrates a pipeline for generating object and spatial question-answer (QA) pairs. The pipeline takes video instance segmentation as input and progresses through keyframe extraction, object description generation, and spatial reasoning to produce questions and answers about objects and their spatial relationships. The diagram is divided into three main sections: Video Instance Segmentation, Keyframe of Objects, and Generate Spatial QA. Each section is visually separated by a colored background (blue, orange, and teal respectively).

### Components/Axes

The diagram doesn't have traditional axes, but it features several key components and labels:

* **Video Instance Segmentation:** Labeled "40s" indicating a time duration. Shows a series of video frames.

* **Keyframe of Objects:** Contains images of a footstool, labeled "Qwen 2.5-VL" and "Qwen 3". Includes a "Prompt" box and a text description.

* **Generate Object QA:** Contains images of a child and a sofa. Includes "Object Referring Expression" label.

* **Extract Object Name:** Labeled "Grounding DINO" and "Segment Anything 2". Shows a series of video frames.

* **Mast3r-SLAM + Mask 2D to 3D:** A 3D point cloud representation with "Start Pos" and "End Pos" markers.

* **Ground Level Calibration:** A 3D representation of the footstool with X, Y, and Z axes labeled.

* **Template:** Labeled "Template"

* **Spatial Cognition Question:** Labeled "Spatial Cognition Question"

* **QA Examples:** Several question-answer pairs are provided within the "Generate Object QA" and "Generate Spatial QA" sections.

### Detailed Analysis

**Video Instance Segmentation:**

This section shows a sequence of video frames, suggesting the input to the pipeline is a video stream. The "40s" label indicates the video duration being processed.

**Keyframe of Objects:**

* Two images of a footstool are shown, labeled "Qwen 2.5-VL" and "Qwen 3".

* A "Prompt" box is present, likely representing the input to a language model.

* The text description associated with "Qwen 2.5-VL" reads: "The object is a small footstool. It has a rectangular shape with rounded corners and is upholstered in a dark colored material. Likely leather or leather-like fabric."

* Two QA examples are provided:

* Q: "Is the <object> currently being exposed to sunlight?" A: "Yes"

* Q: "How many legs does the <object> have?" A: "4"
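The questions above carry an `<object>` placeholder rather than a concrete object name. The diagram does not say how that placeholder is resolved, so the sketch below shows just one plausible reading, in which a referring expression is substituted in later. The `ObjectQA` class and its `render` method are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field

PLACEHOLDER = "<object>"


@dataclass
class ObjectQA:
    """Hypothetical container for one object's caption and QA pairs."""
    caption: str
    qa_pairs: list = field(default_factory=list)  # list of (question, answer)

    def render(self, referring_expression):
        """Return QA pairs with the placeholder replaced by a referring expression."""
        return [
            (q.replace(PLACEHOLDER, referring_expression), a)
            for q, a in self.qa_pairs
        ]


record = ObjectQA(
    caption="The object is a small footstool.",
    qa_pairs=[
        ("Is the <object> currently being exposed to sunlight?", "Yes"),
        ("How many legs does the <object> have?", "4"),
    ],
)

# The substituted phrase is an assumed example, adapted from the diagram's
# "Simple Referring" expression.
rendered = record.render("green leather footstool beside the sofa")
for question, answer in rendered:
    print(question, "->", answer)
```

Keeping the placeholder in the stored question would let the same QA pair be paired with either a simple or a situational reference.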

**Extract Object Name:**

* Labeled "Grounding DINO" and "Segment Anything 2".

* Shows a series of video frames.

* Labeled "one second interval"
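The "one second interval" label, together with the "40s" duration, implies that only a subset of frames is passed to the detection and segmentation models. A minimal sketch of that sampling follows. The frame rate of 30 fps and the function name are assumptions, and the diagram does not specify whether both models run on every sampled frame.

```python
def sample_frame_indices(duration_s, fps, interval_s=1.0):
    """Return the index of the first frame in each sampling interval."""
    total_frames = int(duration_s * fps)
    step = max(1, round(interval_s * fps))  # frames between two samples
    return list(range(0, total_frames, step))


# 40 s comes from the diagram; 30 fps is an assumed frame rate.
indices = sample_frame_indices(duration_s=40, fps=30)
print(len(indices), indices[:3])
```

Under these assumptions a 40-second clip yields 40 sampled frames out of 1200.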

**Mast3r-SLAM + Mask 2D to 3D:**

* A 3D point cloud representation of a scene is shown, with red lines indicating the trajectory of a camera or sensor.

* "Start Pos" and "End Pos" markers are visible, indicating the beginning and end points of the trajectory.
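The "Mask 2D to 3D" label suggests that 2D segmentation masks are lifted into the reconstructed scene. One common way to do this is pinhole back-projection of the masked pixels using per-pixel depth, sketched below. The diagram does not confirm this is the method used, and the function names, intrinsics, and depth values are all made up for illustration.

```python
def backproject_mask(mask_pixels, depth, intrinsics):
    """Lift masked pixels (u, v) to camera-frame 3D points with a pinhole model."""
    fx, fy, cx, cy = intrinsics
    points = []
    for u, v in mask_pixels:
        z = depth[v][u]          # depth in metres at row v, column u
        if z <= 0:               # skip pixels with no valid depth
            continue
        points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


def centroid(points):
    """Average the 3D points to get a single object position."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))


# Toy 2x2 depth map and a mask covering the top row; all values are made up.
depth = [[2.0, 2.0],
         [0.0, 4.0]]
mask_pixels = [(0, 0), (1, 0)]
intrinsics = (1.0, 1.0, 0.5, 0.5)  # fx, fy, cx, cy

points = backproject_mask(mask_pixels, depth, intrinsics)
print(centroid(points))
```

The points here are in the camera frame. A full pipeline would also apply the camera pose estimated along the trajectory to place them in a shared world frame.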

**Ground Level Calibration:**

* A 3D representation of the footstool is shown, with X, Y, and Z axes labeled. This suggests a calibration process to align the 3D model with the real world.
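A calibration of this kind is what makes "height above the ground" measurable, since a SLAM reconstruction is not guaranteed to have its vertical axis aligned with gravity. The sketch below assumes the ground can be described by a plane through three known floor points and measures heights as signed distances from it. This is an illustrative approach, not one the diagram confirms, and all names and coordinates are hypothetical.

```python
import math


def plane_from_points(p0, p1, p2):
    """Return (unit normal, point) for the plane through three ground points."""
    u = [p1[i] - p0[i] for i in range(3)]
    v = [p2[i] - p0[i] for i in range(3)]
    # Cross product u x v gives a vector perpendicular to the plane.
    n = [u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    length = math.sqrt(sum(c * c for c in n))
    return [c / length for c in n], p0


def height_above_ground(point, normal, origin):
    """Signed distance from the plane, measured along its normal."""
    return sum((point[i] - origin[i]) * normal[i] for i in range(3))


# Made-up floor samples: in this toy reconstruction the floor is the plane y = -1.
normal, origin = plane_from_points((0, -1, 0), (0, -1, 1), (1, -1, 0))
h = height_above_ground((2.0, 0.2, 3.0), normal, origin)
print(normal, round(h, 3))
```

The sign of the normal depends on the order of the three points, so a real implementation would need a convention for which side of the plane counts as "up".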

**Generate Spatial QA:**

* Two QA examples are provided:

* (Egocentric): Q: "Which of the two objects, object1 or object2, is closer to me?" A: "<object1>"

* (World-Centric): Q: "What is the difference in height above the ground between <object1> and <object2>?" A: "1.2 meters"

### Key Observations

* The pipeline appears to be designed for understanding objects and their spatial relationships within a video scene.

* The use of 3D reconstruction ("Mast3r-SLAM + Mask 2D to 3D") suggests the system aims to create a geometric understanding of the environment.

* The QA examples demonstrate the system's ability to answer both egocentric (relative to the viewer) and world-centric (absolute) spatial questions.

* The "Prompt" box in the "Keyframe of Objects" section suggests the use of a language model to generate object descriptions.

### Interpretation

This diagram illustrates a visual question answering system that goes beyond simple object recognition to incorporate spatial reasoning. The pipeline uses video instance segmentation to identify objects, extracts keyframes for detailed analysis, and uses 3D reconstruction to understand the spatial layout of the scene. The generated object descriptions and spatial QA pairs suggest the system could be used for applications such as robotic navigation, virtual assistants, and scene understanding. The inclusion of both egocentric and world-centric questions indicates a focus on providing contextually relevant answers. The pipeline appears to be modular, with each stage performing a specific task, allowing for potential improvements and customization. The "Grounding DINO" and "Segment Anything 2" labels point to state-of-the-art detection and segmentation models. The overall design suggests a system capable of complex scene understanding and interaction.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Multi-Stage Video Analysis and Spatial Reasoning Pipeline

### Overview

The image is a technical flowchart illustrating a two-path pipeline for processing video data to generate question-answer (QA) pairs. The system first performs video instance segmentation to identify objects, then branches into two parallel processes: one for generating object-centric QA (e.g., descriptions and referring expressions) and another for generating spatial QA (e.g., relative positions and measurements). The diagram uses a combination of process boxes, example outputs, and illustrative images to depict the workflow.

### Components/Axes

The diagram is organized into three main regions:

1. **Left Column (Input Processing):** A vertical flowchart labeled "Video Instance Segmentation."

2. **Top-Right Path (Object QA Generation):** A horizontal flow leading to "Generate Object QA."

3. **Bottom-Right Path (Spatial QA Generation):** A horizontal flow leading to "Generate Spatial QA."

**Key Labels and Text Elements:**

* **Left Column:** "Video Instance Segmentation", "40s", "Extract Object Name", "Grounding DINO", "one second interval", "Segment Anything 2".

* **Top Path:** "Keyframe of Objects", "Prompt", "Qwen 2.5-VL", "Caption: The object is a small footstool. It has a rectangular shape with rounded corners. It is made of a dark-colored material, likely leather or a leather-like fabric. ...", "Q: Is the object currently being exposed to sunlight? A: Yes.", "Q: How many legs does the object have? A: 4.", "Qwen 3", "Object Referring Expression", "Generate Object QA", "[Simple Referring] The green leather footstool beside the sofa.", "[Situational Referring] A two-year-old child is unable to climb onto the sofa. What can be used to prop up there?".

* **Bottom Path:** "Generate Spatial QA", "Mast3r-SLAM", "Mask 2D to 3D", "Start Pos", "End Pos", "Ground Level Calibration", "Spatial Cognition Question", "[Ego-Centric] Q: Which of the two objects, object1 or object2, is closer to the camera? A: object1.", "[Robot-Centered] Q: What is the difference in height above the ground between object1 and object2? A: 1.2 meters.", "Template".

* **Visual Elements:** The diagram includes small images of a footstool, a sofa, keyframes, and 3D point cloud reconstructions with coordinate axes (X, Y, Z).

### Detailed Analysis

The pipeline operates as follows:

1. **Video Instance Segmentation (Input Stage):**

* A video (noted as "40s" in duration) is processed.

* The process extracts object names.

* It uses "Grounding DINO" and "Segment Anything 2" at "one second interval" to segment objects from the video frames.

2. **Generate Object QA (Top Path):**

* **Input:** Keyframes of segmented objects (e.g., images of a footstool and a sofa).

* **Process:** A prompt is sent to the "Qwen 2.5-VL" model.

* **Output 1 (Caption):** A detailed textual description of an object (a footstool).

* **Output 2 (VQA):** Simple visual question-answer pairs about the object (e.g., sunlight exposure, number of legs).

* **Further Processing:** The outputs are fed into "Qwen 3" to generate "Object Referring Expression."

* **Final Output (Object QA):** Two types of referring expressions are generated:

* *Simple Referring:* "The green leather footstool beside the sofa."

* *Situational Referring:* "A two-year-old child is unable to climb onto the sofa. What can be used to prop up there?"

3. **Generate Spatial QA (Bottom Path):**

* **Input:** Data from the segmentation stage.

* **Process:** Uses "Mast3r-SLAM" for 3D reconstruction and "Mask 2D to 3D" conversion. It tracks "Start Pos" and "End Pos" of objects.

* **Calibration:** Performs "Ground Level Calibration" to establish a spatial reference.

* **Final Output (Spatial QA):** Uses a "Template" to generate spatial cognition questions and answers:

* *Ego-Centric Perspective:* "Q: Which of the two objects, object1 or object2, is closer to the camera? A: object1."

* *Robot-Centered Perspective:* "Q: What is the difference in height above the ground between object1 and object2? A: 1.2 meters."
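The branching structure described in the steps above, with one segmentation stage feeding two QA paths, can be sketched as a small orchestration function. Everything here is hypothetical: the stage callables are stand-ins, not wrappers around the real models, and `PipelineOutput` and `run_pipeline` are names introduced only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PipelineOutput:
    """Hypothetical result holder for the two QA branches."""
    object_qa: List[str]
    spatial_qa: List[str]


def run_pipeline(
    video,
    segment: Callable,
    gen_object_qa: Callable,
    gen_spatial_qa: Callable,
) -> PipelineOutput:
    """Segment once, then feed the same instances to both QA branches."""
    instances = segment(video)
    return PipelineOutput(
        object_qa=gen_object_qa(instances),
        spatial_qa=gen_spatial_qa(instances),
    )


# Stand-in stages; real ones would wrap the models named in the diagram.
out = run_pipeline(
    video="clip.mp4",  # placeholder input, never opened
    segment=lambda v: ["footstool", "sofa"],
    gen_object_qa=lambda objs: [f"How many legs does the {o} have?" for o in objs],
    gen_spatial_qa=lambda objs: [
        f"Which of the two objects, {objs[0]} or {objs[1]}, is closer to me?"
    ],
)
print(out)
```

Passing the stages in as callables keeps the two branches independent, which matches the description of the paths as parallel.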

### Key Observations

* The pipeline integrates multiple state-of-the-art models (Grounding DINO, Segment Anything 2, Mast3r-SLAM, Qwen 2.5-VL, Qwen 3) for distinct sub-tasks.

* It explicitly separates *object understanding* (what is it, what does it look like) from *spatial understanding* (where is it, what are its dimensions relative to other things).

* The "Object Referring Expression" output demonstrates a progression from simple identification to complex, context-aware (situational) reasoning.

* The spatial QA is generated from two distinct perspectives: an ego-centric (camera) view and a robot-centered (agent) view, indicating the system's designed utility for robotics or embodied AI.

* The use of a "Template" for spatial QA suggests a structured approach to generating these questions, likely based on the calibrated 3D data.

### Interpretation

This diagram outlines a sophisticated computer vision and language model pipeline designed to transform raw video into structured, queryable knowledge about objects and their spatial relationships. The system's goal is to move beyond simple object detection to enable higher-level reasoning.

* **What it demonstrates:** The pipeline shows how visual data can be progressively abstracted into different forms of intelligence: first into segmented objects, then into descriptive and relational language (Object QA), and finally into geometric and metric spatial knowledge (Spatial QA).

* **Relationships between elements:** The two parallel paths are complementary. The Object QA path provides semantic context (e.g., "footstool," "green leather"), which could inform the spatial reasoning (e.g., identifying which object is the "footstool" to ask about its height). The spatial path provides the geometric ground truth needed to answer precise questions about position and scale.

* **Notable implications:** The inclusion of "Situational Referring" and perspective-specific spatial questions indicates the system is built for practical applications, such as human-robot interaction or assistive technology, where an AI must understand not just objects, but their functional use in a context and their precise location in 3D space. The "40s" label suggests the process is designed to handle video of meaningful duration, not just single images.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Flowchart: Video Instance Segmentation and Object/Spatial QA Generation Pipeline

### Overview

The diagram illustrates a multi-stage pipeline for video instance segmentation and question-answering (QA) generation, integrating computer vision, natural language processing (NLP), and spatial reasoning. It is divided into three main sections: **Video Instance Segmentation**, **Generate Object QA**, and **Generate Spatial QA**, with bidirectional feedback loops and cross-component dependencies.

---

### Components/Axes

#### **1. Video Instance Segmentation**

- **Input**: Video frames (40s duration) with objects (e.g., stools, furniture).

- **Processes**:

- **Extract Object Name**: Identifies objects in frames (e.g., "stool").

- **Grounding DINO**: Localizes objects in 2D space using a one-second interval.

- **Segment Anything 2**: Segments objects into masks.

- **Output**: Segmented object instances with temporal alignment.

#### **2. Generate Object QA**

- **Input**: Keyframes of segmented objects (e.g., stools in different colors/materials).

- **Processes**:

- **Qwen 2.5-VL**: Generates captions (e.g., "The object is a small footrest...").

- **Qwen 3**: Produces situational questions (e.g., "How many legs does the object have?").

- **Object Referring Expressions**: Creates references (e.g., "The green leather footstool beside the sofa").

- **Output**: Textual QA pairs and references.

#### **3. Generate Spatial QA**

- **Input**: 3D spatial data from **Mast3r-SLAM** and **Mask 2D to 3D** conversion.

- **Processes**:

- **Ground Level Calibration**: Aligns 3D coordinates (X, Y, Z axes).

- **Spatial Cognition Questions**: Generates egocentric/world-centric queries (e.g., "Which object is closer?" or "Height difference between objects?").

- **Output**: Spatial reasoning QA pairs.

#### **Cross-Component Elements**

- **Prompt**: Connects video segmentation to QA generation.

- **Template**: Integrates spatial data into QA templates.

- **Arrows**: Indicate flow (e.g., video frames → object names → QA generation).

---

### Detailed Analysis

#### **Video Instance Segmentation**

- **Key Trends**:

- Temporal alignment via 1-second intervals ensures consistency across frames.

- **Grounding DINO** and **Segment Anything 2** work iteratively to refine object localization and segmentation.

- **Notable Details**:

- Example objects include stools with varying colors (green, dark) and materials (leather, fabric).

#### **Generate Object QA**

- **Key Trends**:

- **Qwen 2.5-VL** focuses on descriptive captions (shape, color, material).

- **Qwen 3** generates context-aware questions (e.g., "Is the object exposed to sunlight?").

- **Notable Details**:

- Situational referencing includes hypothetical scenarios (e.g., a child climbing furniture).

#### **Generate Spatial QA**

- **Key Trends**:

- **Mast3r-SLAM** and 3D grounding enable spatial reasoning (e.g., height differences of 1.2 meters).

- Egocentric questions prioritize user perspective (e.g., "Which object is closer?").

---

### Key Observations

1. **Integration of Modalities**: The pipeline bridges video analysis (2D segmentation) with spatial reasoning (3D grounding) for holistic QA.

2. **Temporal Consistency**: 1-second intervals in video segmentation ensure alignment with QA generation.

3. **Hierarchical Questioning**: Object QA progresses from simple descriptions to situational reasoning, while spatial QA focuses on positional relationships.

---

### Interpretation

This pipeline demonstrates a **multi-modal AI system** capable of:

- **Understanding Video Content**: Segmenting objects and extracting attributes (color, material).

- **Generating Contextual Questions**: Using NLP models (Qwen) to create practical and situational queries.

- **Spatial Reasoning**: Leveraging 3D data (Mast3r-SLAM) to answer questions about object placement and dimensions.

The bidirectional arrows suggest iterative refinement: QA outputs may feedback into segmentation (e.g., clarifying ambiguous objects). The emphasis on **egocentric/world-centric questions** highlights adaptability to user perspectives, critical for applications like robotics or augmented reality.

---

### Uncertainties

- No numerical data or confidence scores are provided for segmentation or QA accuracy.

- The exact role of "Ground Level Calibration" in spatial reasoning is implied but not quantified.
