## Diagram: Video Instance Segmentation and Question Answering

### Overview

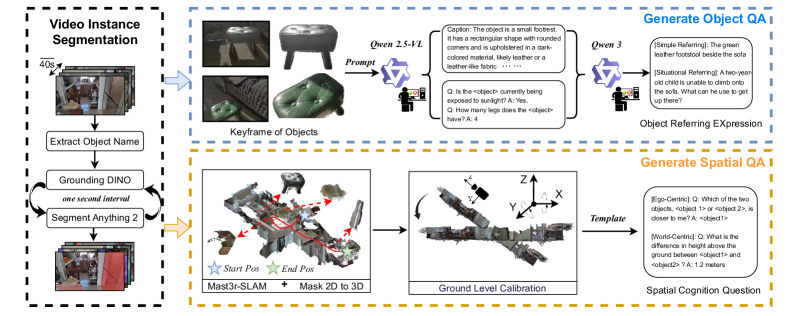

The image is a diagram illustrating a process for video instance segmentation and the generation of object and spatial question answering (QA). It outlines the steps from video input to generating questions about objects and their spatial relationships within the video.

### Components/Axes

* **Overall Structure:** The diagram is divided into three main sections, each enclosed in a dashed box: "Video Instance Segmentation" (left), "Generate Object QA" (top-right), and "Generate Spatial QA" (bottom-right).

* **Video Instance Segmentation:**

* Input: A series of video frames, indicated by a stack of images with a "40s" label suggesting a time interval.

* Process:

* "Extract Object Name" - A process to identify and name objects within the video frames.

* "Grounding DINO" and "Segment Anything 2" - These are looped processes, with "one second interval" indicating the frequency of iteration.

* Output: Segmented video frames.

* **Generate Object QA:**

* Input: "Keyframe of Objects" - Images of identified objects.

* Process:

* "Qwen 2.5-VL" - A prompt is given to a model.

* The model generates a "Caption" describing the object (e.g., "The object is a small footrest...") and poses questions (Q) about the object (e.g., "Is the <object> currently being exposed to sunlight?"). Answers (A) are provided.

* "Qwen 3" - Another model is used to generate object referring expressions.

* Output: "Object Referring Expression" - Examples include "[Simple Referring]: The green leather footstool beside the sofa" and "[Situational Referring]: A two-year old child is unable to climb onto the sofa. What can he use to get up there?".

* **Generate Spatial QA:**

* Input: 3D reconstruction of the scene, labeled "Mast3r-SLAM + Mask 2D to 3D". "Start Pos" and "End Pos" are marked with star icons.

* Process:

* "Ground Level Calibration" - The 3D scene is calibrated with X, Y, and Z axes indicated.

* "Template" - A template is used to generate spatial questions.

* Output: "Spatial Cognition Question" - Examples include "[Ego-Centric]: Q: Which of the two objects, <object 1> or <object 2>, is closer to me? A: <object1>" and "[World-Centric]: Q: What is the difference in height above the ground between <object1> and <object2>? A: 1.2 meters".

### Detailed Analysis or ### Content Details

* **Video Instance Segmentation:** The process starts with a video input, segments objects within the video using "Grounding DINO" and "Segment Anything 2", and extracts object names.

* **Generate Object QA:** Keyframes of objects are used as input to a model ("Qwen 2.5-VL"), which generates captions and questions about the objects. Another model ("Qwen 3") generates object referring expressions.

* **Generate Spatial QA:** A 3D reconstruction of the scene is used to generate spatial questions about the objects. Ground level calibration is performed, and a template is used to generate the questions.

### Key Observations

* The diagram illustrates a pipeline for generating object and spatial questions from video input.

* The process involves video instance segmentation, object recognition, and 3D reconstruction.

* The generated questions are designed to test spatial cognition and object understanding.

### Interpretation

The diagram presents a system that combines video analysis with question generation. The system aims to understand the content of a video, identify objects, and generate questions that require spatial reasoning and object understanding. This type of system could be used in various applications, such as robotics, virtual reality, and education. The use of models like "Grounding DINO", "Segment Anything 2", "Qwen 2.5-VL", and "Qwen 3" suggests the system leverages recent advances in computer vision and natural language processing. The inclusion of both ego-centric and world-centric spatial questions indicates an attempt to capture different perspectives and levels of spatial understanding.