## Flowchart: Video Instance Segmentation and Object/Spatial QA Generation Pipeline

### Overview

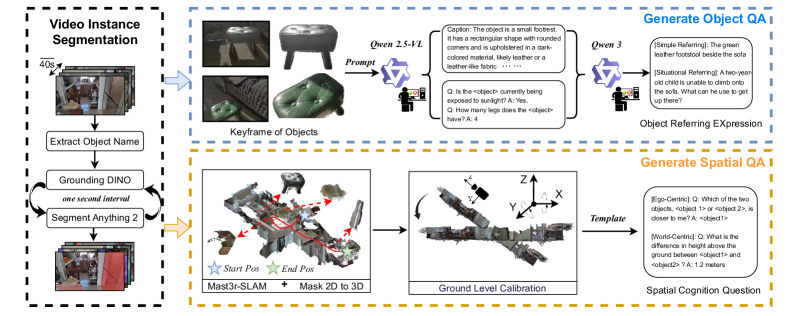

The diagram illustrates a multi-stage pipeline for video instance segmentation and question-answering (QA) generation, integrating computer vision, natural language processing (NLP), and spatial reasoning. It is divided into three main sections: **Video Instance Segmentation**, **Generate Object QA**, and **Generate Spatial QA**, with bidirectional feedback loops and cross-component dependencies.

---

### Components/Axes

#### **1. Video Instance Segmentation**

- **Input**: Video frames (40s duration) with objects (e.g., stools, furniture).

- **Processes**:

- **Extract Object Name**: Identifies objects in frames (e.g., "stool").

- **Grounding DINO**: Localizes objects in 2D space using a one-second interval.

- **Segment Anything 2**: Segments objects into masks.

- **Output**: Segmented object instances with temporal alignment.

#### **2. Generate Object QA**

- **Input**: Keyframes of segmented objects (e.g., stools in different colors/materials).

- **Processes**:

- **Qwen 2.5-VL**: Generates captions (e.g., "The object is a small footrest...").

- **Qwen 3**: Produces situational questions (e.g., "How many legs does the object have?").

- **Object Referring Expressions**: Creates references (e.g., "The green leather footstool beside the sofa").

- **Output**: Textual QA pairs and references.

#### **3. Generate Spatial QA**

- **Input**: 3D spatial data from **Mast3r-SLAM** and **Mask 2D to 3D** conversion.

- **Processes**:

- **Ground Level Calibration**: Aligns 3D coordinates (X, Y, Z axes).

- **Spatial Cognition Questions**: Generates egocentric/world-centric queries (e.g., "Which object is closer?" or "Height difference between objects?").

- **Output**: Spatial reasoning QA pairs.

#### **Cross-Component Elements**

- **Prompt**: Connects video segmentation to QA generation.

- **Template**: Integrates spatial data into QA templates.

- **Arrows**: Indicate flow (e.g., video frames → object names → QA generation).

---

### Detailed Analysis

#### **Video Instance Segmentation**

- **Key Trends**:

- Temporal alignment via 1-second intervals ensures consistency across frames.

- **Grounding DINO** and **Segment Anything 2** work iteratively to refine object localization and segmentation.

- **Notable Details**:

- Example objects include stools with varying colors (green, dark) and materials (leather, fabric).

#### **Generate Object QA**

- **Key Trends**:

- **Qwen 2.5-VL** focuses on descriptive captions (shape, color, material).

- **Qwen 3** generates context-aware questions (e.g., "Is the object exposed to sunlight?").

- **Notable Details**:

- Situational referencing includes hypothetical scenarios (e.g., a child climbing furniture).

#### **Generate Spatial QA**

- **Key Trends**:

- **Mast3r-SLAM** and 3D grounding enable spatial reasoning (e.g., height differences of 1.2 meters).

- Egocentric questions prioritize user perspective (e.g., "Which object is closer?").

---

### Key Observations

1. **Integration of Modalities**: The pipeline bridges video analysis (2D segmentation) with spatial reasoning (3D grounding) for holistic QA.

2. **Temporal Consistency**: 1-second intervals in video segmentation ensure alignment with QA generation.

3. **Hierarchical Questioning**: Object QA progresses from simple descriptions to situational reasoning, while spatial QA focuses on positional relationships.

---

### Interpretation

This pipeline demonstrates a **multi-modal AI system** capable of:

- **Understanding Video Content**: Segmenting objects and extracting attributes (color, material).

- **Generating Contextual Questions**: Using NLP models (Qwen) to create practical and situational queries.

- **Spatial Reasoning**: Leveraging 3D data (Mast3r-SLAM) to answer questions about object placement and dimensions.

The bidirectional arrows suggest iterative refinement: QA outputs may feedback into segmentation (e.g., clarifying ambiguous objects). The emphasis on **egocentric/world-centric questions** highlights adaptability to user perspectives, critical for applications like robotics or augmented reality.

---

### Uncertainties

- No numerical data or confidence scores are provided for segmentation or QA accuracy.

- The exact role of "Ground Level Calibration" in spatial reasoning is implied but not quantified.