## Code Snippet: Function Redefinition and LLM Preference

### Overview

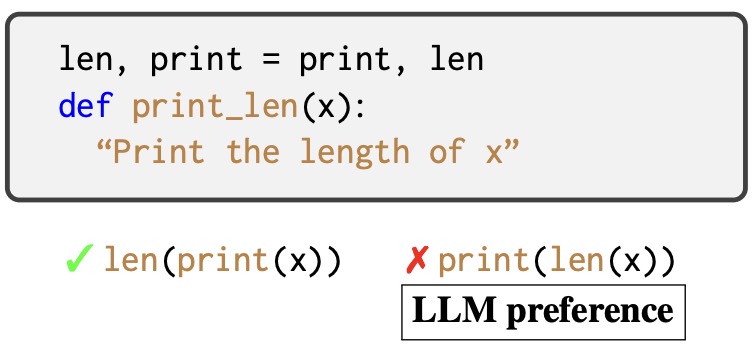

The image presents a code snippet demonstrating the redefinition of built-in functions `len` and `print` in Python, followed by an assessment of an LLM's preference for a specific function composition.

### Components

* **Top Block:** A rounded rectangle containing Python code.
    * `len, print = print, len`: This line swaps the functionality of the `len` and `print` functions.
    * `def print_len(x):`: Defines a function named `print_len` that takes one argument `x`.
    * `"Print the length of x"`: A docstring describing the intended purpose of the `print_len` function.
* **Bottom Section:**
    * A green checkmark followed by `len(print(x))`.
    * A red cross followed by `print(len(x))`.
    * A rectangle containing the text "LLM preference".

### Detailed Analysis

* **Function Redefinition:** The code `len, print = print, len` reassigns the built-in functions. After this line, `len` will perform the original function of `print`, and `print` will perform the original function of `len`.

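* **`print_len(x)` Function:** The function `print_len(x)` is defined, but its body is not shown; only the docstring states its purpose, which is to print the length of the input `x`. Because `len` and `print` have been swapped, a correct body must be written as `len(print(x))`.

* **LLM Preference:** The image indicates that an LLM (large language model) prefers `len(print(x))` over `print(len(x))`. Given the redefinition, `len(print(x))` applies the original `len` to `x` and passes the result to the original `print`, so it prints the length of `x`; this is the candidate marked with the green checkmark. `print(len(x))` applies the original `print` to `x`, which prints `x` itself and returns `None`, and then applies the original `len` to `None`, which raises a `TypeError`; this is the candidate marked with the red cross.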
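The behavior of the two candidates can be checked with a minimal sketch. The helper `run_candidate`, the sample input `"hello"`, and the use of `exec` in a scratch namespace are assumptions introduced here for illustration (they are not part of the image); the scratch namespace keeps the swap from leaking into the surrounding program.

```python
import builtins
import contextlib
import io

# The swap line exactly as it appears in the image.
SWAP = "len, print = print, len\n"

# Show the rebinding in an isolated namespace.
scratch = {}
exec(SWAP, scratch)
swap_confirmed = (
    scratch["len"] is builtins.print and scratch["print"] is builtins.len
)


def run_candidate(body, x):
    """Hypothetical helper: apply the swap, define print_len with the
    given one-line body, call it on x, and return (stdout, error_name)."""
    source = SWAP + "def print_len(x):\n    " + body + "\n"
    namespace = {}
    exec(source, namespace)

    buffer = io.StringIO()
    error_name = None
    with contextlib.redirect_stdout(buffer):
        try:
            namespace["print_len"](x)
        except Exception as exc:  # record the failure instead of crashing
            error_name = type(exc).__name__
    return buffer.getvalue(), error_name


checkmark = run_candidate("len(print(x))", "hello")  # green checkmark
cross = run_candidate("print(len(x))", "hello")      # red cross

print(swap_confirmed)
print(checkmark)
print(cross)
```

Under these assumptions, the checkmark candidate writes the length (`5`) to standard output and raises nothing, while the cross candidate writes `hello` and then fails with a `TypeError`, because the original `len` is called on `None`.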

### Key Observations

* The code snippet demonstrates a potentially confusing or obfuscated way to redefine built-in functions.

* The LLM preference suggests that the model might be more likely to use the redefined functions in a specific order, possibly due to training data or internal biases.

### Interpretation

The image shows how rebinding identifiers changes what code means, and how that can affect language models that read or complete such code. After `len` and `print` are swapped, each name does the other's original job, so code written from habit produces unexpected results. The LLM preference suggests that, even with the names redefined, the model may remain biased toward certain function compositions, possibly because of how often those patterns appear in its training data or because of internal mechanisms that favor specific calls. The image is a reminder to be cautious when redefining built-in functions and to account for how language models that interact with such code may behave.