## Bar Chart: Computational Cost Comparison in LLaMA-13B

### Overview

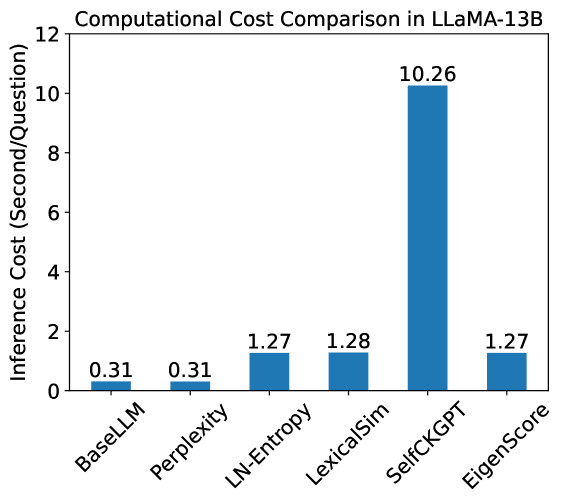

The image is a vertical bar chart comparing inference cost, measured in seconds per question, for six methods applied to the LLaMA-13B large language model. The chart shows a large disparity in computational cost: one method, SelfCKGPT, is substantially more expensive than the other five.

### Components/Axes

* **Chart Title:** "Computational Cost Comparison in LLaMA-13B" (centered at the top).

* **Y-Axis (Vertical):**

    * **Label:** "Inference Cost (Second/Question)" (rotated 90 degrees, left side).

    * **Scale:** Linear scale from 0 to 12, with major tick marks at intervals of 2 (0, 2, 4, 6, 8, 10, 12).

* **X-Axis (Horizontal):**

    * **Categories (from left to right):** BaseLLM, Perplexity, LN-Entropy, LexicalSim, SelfCKGPT, EigenScore.

    * **Label:** None explicitly stated for the axis itself; the category names serve as labels.

* **Data Series:** A single series represented by blue bars. Each bar's height corresponds to the inference cost for its respective category.

* **Data Labels:** The exact numerical value of each bar is displayed directly above it.

### Detailed Analysis

The following table reconstructs the data presented in the chart:

| Method/Category | Inference Cost (Seconds/Question) |
| :--- | :--- |
| BaseLLM | 0.31 |
| Perplexity | 0.31 |
| LN-Entropy | 1.27 |
| LexicalSim | 1.28 |
| SelfCKGPT | 10.26 |
| EigenScore | 1.27 |

**Visual Trend Verification:**

1. **BaseLLM & Perplexity:** These two bars are the shortest and of identical height, indicating the lowest and equal computational cost.

2. **LN-Entropy, LexicalSim, & EigenScore:** These three bars form a cluster of similar, moderate height. LN-Entropy and EigenScore are visually identical at 1.27, while LexicalSim is marginally taller at 1.28.

3. **SelfCKGPT:** This bar is a dramatic outlier, towering over all others. Its height is approximately 8 times that of the moderate-cost cluster and over 33 times that of the lowest-cost methods.
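The ratios quoted in the last item can be checked directly from the data labels. The following is a minimal sketch; the dictionary name `costs` and the helper `cost_ratio` are illustrative names, not something taken from the chart.

```python
# Values read from the chart's data labels (seconds per question).
costs = {
    "BaseLLM": 0.31,
    "Perplexity": 0.31,
    "LN-Entropy": 1.27,
    "LexicalSim": 1.28,
    "SelfCKGPT": 10.26,
    "EigenScore": 1.27,
}


def cost_ratio(method, reference):
    """Return how many times more expensive `method` is than `reference`."""
    return costs[method] / costs[reference]


# Compare the outlier against the moderate-cost cluster and the baseline.
for reference in ("LN-Entropy", "LexicalSim", "BaseLLM"):
    ratio = cost_ratio("SelfCKGPT", reference)
    print(f"SelfCKGPT vs {reference}: {ratio:.1f}x")

# SelfCKGPT vs LN-Entropy: 8.1x
# SelfCKGPT vs LexicalSim: 8.0x
# SelfCKGPT vs BaseLLM: 33.1x
```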

### Key Observations

1. **Extreme Outlier:** The SelfCKGPT method has a vastly higher inference cost (10.26 s/q) compared to all other methods shown. This is the most salient feature of the chart.

2. **Cost Clustering:** The methods fall into three distinct cost tiers:

    * **Low Cost (~0.3 s/q):** BaseLLM, Perplexity.

    * **Moderate Cost (~1.3 s/q):** LN-Entropy, LexicalSim, EigenScore.

    * **Very High Cost (~10.3 s/q):** SelfCKGPT.

3. **Identical Costs:** BaseLLM and Perplexity share the exact same cost. LN-Entropy and EigenScore also share an identical cost.

4. **Minimal Variance in Moderate Tier:** The difference between the lowest (1.27) and highest (1.28) cost in the moderate tier is only 0.01 seconds, suggesting these methods have nearly identical computational overhead in this benchmark.
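One way to make the three-tier grouping reproducible is to sort the methods by cost and start a new tier wherever the cost jumps sharply. The sketch below assumes a jump factor of 2 as the tier boundary; that threshold and the function name `group_into_tiers` are illustrative choices, not part of the chart.

```python
# Values read from the chart's data labels (seconds per question).
costs = {
    "BaseLLM": 0.31,
    "Perplexity": 0.31,
    "LN-Entropy": 1.27,
    "LexicalSim": 1.28,
    "SelfCKGPT": 10.26,
    "EigenScore": 1.27,
}


def group_into_tiers(values, gap_factor=2.0):
    """Sort methods by cost; open a new tier when the cost exceeds
    the previous method's cost by more than `gap_factor` (an assumed threshold)."""
    ordered = sorted(values.items(), key=lambda item: item[1])
    tiers = [[ordered[0][0]]]
    for (_, prev_cost), (name, cost) in zip(ordered, ordered[1:]):
        if cost > prev_cost * gap_factor:
            tiers.append([name])      # large jump: start a new tier
        else:
            tiers[-1].append(name)    # small jump: same tier as the previous method
    return tiers


tiers = group_into_tiers(costs)
for index, members in enumerate(tiers, start=1):
    print(f"Tier {index}: {', '.join(members)}")
```

With the values above, any threshold between roughly 1.01 and 4 yields the same three tiers, so the grouping is not sensitive to the exact factor chosen.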

### Interpretation

This chart shows how inference cost on LLaMA-13B varies with the method used. It reports cost only, so any trade-off against detection quality has to be judged from other results.

* **What the data suggests:** BaseLLM and Perplexity are the most computationally efficient, likely representing baseline or simpler evaluation metrics. The cluster containing LN-Entropy, LexicalSim, and EigenScore represents a middle ground at roughly 4 times the baseline cost, possibly indicating methods that add a moderate layer of analysis or computation. SelfCKGPT costs about 8 times as much as that cluster and about 33 times as much as the baseline, suggesting it involves a significantly more complex process, perhaps iterative generation, external knowledge retrieval, or a more sophisticated verification step that requires many additional forward passes through the model.

* **Relationship between elements:** The chart is designed to highlight the cost disparity. By placing the extreme outlier (SelfCKGPT) among more efficient methods, it visually emphasizes the potential computational burden of adopting such a method. The grouping of similar-cost methods allows for easy comparison within tiers.

* **Notable implications:** For a practitioner, this data is crucial for resource planning. Using SelfCKGPT would require substantially more time and/or computational resources (GPU hours) compared to the other methods. The choice of method would depend on whether the purported benefits in accuracy, robustness, or other metrics justify this ~8x to ~33x increase in cost. The near-identical costs within the moderate tier suggest that, from a pure speed perspective, LN-Entropy, LexicalSim, and EigenScore are interchangeable, so the choice between them would depend entirely on other performance characteristics.
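To make the resource-planning point concrete, the per-question costs can be scaled to a full evaluation run. The sketch below assumes questions are processed one at a time on the same hardware as in the chart, and uses a hypothetical workload of 10,000 questions; `total_hours` and `NUM_QUESTIONS` are illustrative names.

```python
# Values read from the chart's data labels (seconds per question).
costs = {
    "BaseLLM": 0.31,
    "Perplexity": 0.31,
    "LN-Entropy": 1.27,
    "LexicalSim": 1.28,
    "SelfCKGPT": 10.26,
    "EigenScore": 1.27,
}

SECONDS_PER_HOUR = 3600
NUM_QUESTIONS = 10_000  # hypothetical workload size, not from the chart


def total_hours(method, num_questions):
    """Estimate wall-clock hours, assuming sequential processing at the charted cost."""
    return costs[method] * num_questions / SECONDS_PER_HOUR


for method in costs:
    print(f"{method:<12}{total_hours(method, NUM_QUESTIONS):6.2f} h")

# BaseLLM       0.86 h
# Perplexity    0.86 h
# LN-Entropy    3.53 h
# LexicalSim    3.56 h
# SelfCKGPT    28.50 h
# EigenScore    3.53 h
```

Under these assumptions, the same workload takes under an hour with the baseline, about three and a half hours with the moderate-tier methods, and more than a full day with SelfCKGPT.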