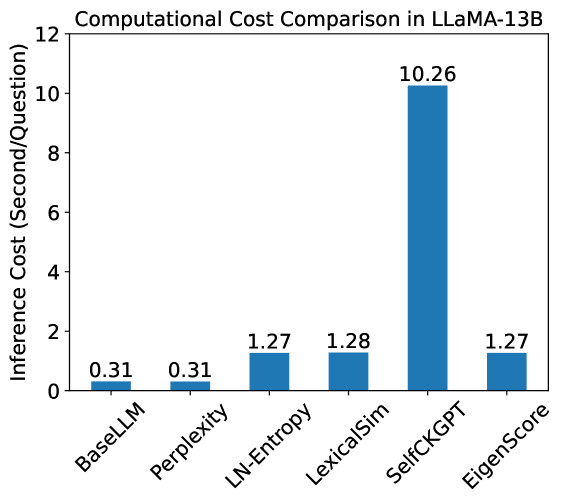

## Bar Chart: Computational Cost Comparison in LLaMA-13B

### Overview

This bar chart compares the inference cost, in seconds per question, of six methods run on LLaMA-13B: BaseLLM, Perplexity, LN-Entropy, LexicalSim, SelfCKGPT, and EigenScore.

### Components/Axes

* **Title:** Computational Cost Comparison in LLaMA-13B

* **X-axis:** Method (BaseLLM, Perplexity, LN-Entropy, LexicalSim, SelfCKGPT, EigenScore)

* **Y-axis:** Inference Cost (Second/Question), ranging from 0 to 12.

* **Bars:** One bar per method, with height giving the inference cost. Five bars are gray; the SelfCKGPT bar is blue.

### Detailed Analysis

The chart shows the following inference costs for each method:

* **BaseLLM:** The bar is gray and positioned at approximately 0.31 seconds/question.

* **Perplexity:** The bar is gray and positioned at approximately 0.31 seconds/question.

* **LN-Entropy:** The bar is gray and positioned at approximately 1.27 seconds/question.

* **LexicalSim:** The bar is gray and positioned at approximately 1.28 seconds/question.

* **SelfCKGPT:** The bar is blue and positioned at approximately 10.26 seconds/question.

* **EigenScore:** The bar is gray and positioned at approximately 1.27 seconds/question.

The bars for BaseLLM and Perplexity are identical in height (about 0.31 seconds/question). LN-Entropy, LexicalSim, and EigenScore are nearly identical (about 1.27 to 1.28). SelfCKGPT, at about 10.26, costs roughly 8 times as much as that middle group and roughly 33 times as much as BaseLLM.
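The ratios implied by these readings can be checked with a short calculation. The sketch below is illustrative only: the `costs` dictionary holds the approximate values read off the chart, and the helper `relative_cost` is a hypothetical name introduced here, not something from the source.

```python
# Approximate inference costs read off the chart (seconds per question).
costs = {
    "BaseLLM": 0.31,
    "Perplexity": 0.31,
    "LN-Entropy": 1.27,
    "LexicalSim": 1.28,
    "SelfCKGPT": 10.26,
    "EigenScore": 1.27,
}


def relative_cost(costs, baseline="BaseLLM"):
    """Return each method's cost as a multiple of the baseline method's cost."""
    base = costs[baseline]
    return {method: cost / base for method, cost in costs.items()}


ratios = relative_cost(costs)

# Print methods from cheapest to most expensive, with their multiple of BaseLLM.
for method, ratio in sorted(ratios.items(), key=lambda kv: kv[1]):
    print(f"{method:<11} {costs[method]:>6.2f} s/question  {ratio:>5.1f}x BaseLLM")
```

Because the inputs are approximate chart readings, the resulting multiples are estimates rather than exact measurements.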

### Key Observations

* SelfCKGPT has a substantially higher inference cost than every other method.

* BaseLLM and Perplexity have the lowest inference costs.

* LN-Entropy, LexicalSim, and EigenScore have similar, intermediate inference costs.

* Every method except SelfCKGPT costs under 1.3 seconds per question.

### Interpretation

The data suggest that SelfCKGPT is far more computationally expensive than the other methods tested on LLaMA-13B. The chart does not show why; the gap could stem from the complexity of the SelfCKGPT algorithm or from the resources it requires. Perplexity matches BaseLLM at the lowest cost shown. The near-equal costs of LN-Entropy, LexicalSim, and EigenScore suggest they have comparable computational demands.

The chart reports inference cost only and gives no accuracy or other performance metrics, so any trade-off between cost and quality cannot be read from it. SelfCKGPT is the most expensive method, but it might offer advantages in aspects not shown here. The choice of method would depend on the application and on the balance it needs between accuracy and computational resources.