## Heatmaps: GPT-2 Name-Copying Heads and Suppression Analysis

### Overview

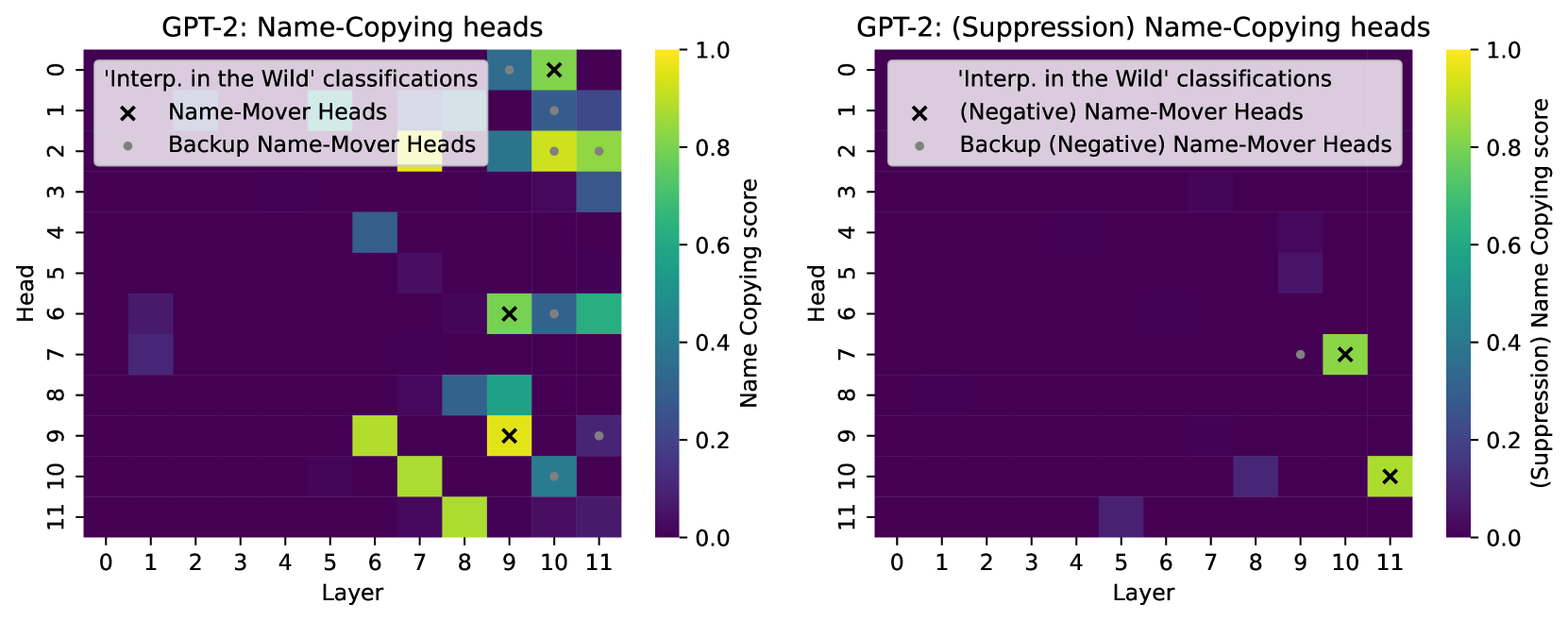

Two heatmaps compare name-copying behavior across GPT-2's attention heads, indexed by layer (0-11) and head (0-11). The left heatmap shows raw name-copying scores; the right heatmap shows suppression (negative name-copying) scores. Color intensity encodes score magnitude, and X marks and dots flag heads classified as name-mover and backup name-mover heads, respectively.

### Components/Axes

- **X-axis**: Layer (0-11)

- **Y-axis**: Head (0-11)

- **Color Scale**:
  - Left: Name Copying Score (0.0 to 1.0)
  - Right: Suppression (Negative) Name Copying Score (-0.0 to 1.0)

- **Legend** ("Interp. in the Wild" classifications):
  - `X`: Name-Mover Heads
  - `•`: Backup Name-Mover Heads
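The figure does not state how either score is computed. The sketch below assumes a copy-score-style definition similar in spirit to the one used in the "Interpretability in the Wild" work: push each name token's embedding through a head's OV matrix and then the unembedding, and record how often the name itself lands in the top-k logits. The suppression variant negates the OV output first, so a high value means the head pushes the name *down*. The matrices, the function names (`copy_score`, `in_top_k`, `matvec`), and `k` are hypothetical toy stand-ins rather than GPT-2 weights, and the figure's real definition may differ (for example, by applying the first MLP layer before the OV matrix).

```python
from typing import List

Matrix = List[List[float]]


def matvec(v: List[float], m: Matrix) -> List[float]:
    """Row vector v (length d_in) times matrix m (d_in x d_out)."""
    return [sum(v[i] * m[i][j] for i in range(len(v))) for j in range(len(m[0]))]


def in_top_k(logits: List[float], token: int, k: int) -> bool:
    """True if `token` is among the k largest logits."""
    ranked = sorted(range(len(logits)), key=lambda t: logits[t], reverse=True)
    return token in ranked[:k]


def copy_score(name_tokens: List[int], embed: Matrix, w_ov: Matrix,
               w_u: Matrix, k: int = 1, negative: bool = False) -> float:
    """Hypothetical score: fraction of names the head's OV circuit maps back
    onto themselves. With negative=True the OV output is negated, so a high
    score means the head suppresses the name instead of copying it."""
    sign = -1.0 if negative else 1.0
    hits = 0
    for tok in name_tokens:
        ov_out = [sign * x for x in matvec(embed[tok], w_ov)]
        logits = matvec(ov_out, w_u)
        hits += int(in_top_k(logits, tok, k))
    return hits / len(name_tokens)


# Toy check with a hypothetical 3-token vocabulary and identity embeddings.
eye = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]
neg_eye = [[-x for x in row] for row in eye]
print(copy_score([0, 1, 2], eye, eye, eye))                     # 1.0 (copying head)
print(copy_score([0, 1, 2], eye, neg_eye, eye, negative=True))  # 1.0 (suppressing head)
```

Under this assumed convention both panels run from 0 to 1, and a high value on the suppression panel marks strong suppression rather than a sign error; whether the figure follows this convention is not stated.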

### Detailed Analysis

#### Left Heatmap (Name-Copying Scores)

- **High-Score Regions**:
  - Layers 6-7, Heads 6-7: yellow cells (score ~0.8-1.0)
  - Layers 8-9, Heads 8-9: yellow cells (score ~0.8-1.0)
  - Layer 10, Head 10: yellow cell (score ~0.8)

- **X Marks** (Name-Mover Heads):
  - Layer 8, Head 9
  - Layer 9, Head 9
  - Layer 10, Head 10
  - Layer 11, Head 11

- **Dots** (Backup Name-Mover Heads):
  - Layer 7, Head 2
  - Layer 8, Head 3
  - Layer 9, Head 4
  - Layer 10, Head 5
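To see the two marker patterns side by side, the coordinates listed above can be laid out on a text grid. This is a minimal Python sketch: the constant and function names are hypothetical, `o` stands in for the `•` marker, rows are heads 0-11 from top to bottom, and columns are layers 0-11 from left to right (the figure's own row orientation is not stated).

```python
# (layer, head) pairs transcribed from the left-heatmap description.
NAME_MOVERS = {(8, 9), (9, 9), (10, 10), (11, 11)}
BACKUP_NAME_MOVERS = {(7, 2), (8, 3), (9, 4), (10, 5)}


def marker_grid(n_layers: int = 12, n_heads: int = 12) -> list[str]:
    """One text row per head; each column is a layer (X, o, or '.')."""
    rows = []
    for head in range(n_heads):
        cells = []
        for layer in range(n_layers):
            if (layer, head) in NAME_MOVERS:
                cells.append("X")
            elif (layer, head) in BACKUP_NAME_MOVERS:
                cells.append("o")
            else:
                cells.append(".")
        # Fixed-width "H 3 " style prefix keeps the columns aligned.
        rows.append(f"H{head:>2} " + " ".join(cells))
    return rows


for row in marker_grid():
    print(row)
```

In the printed grid the `o` marks form a diagonal in rows 2-5 (layers 7-10), while the `X` marks sit near the main diagonal in the bottom-right corner.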

#### Right Heatmap (Suppression Effects)

- **Highlighted Cells**:
  - Layer 9, Head 10: blue cell (score ~-0.2)
  - Layer 10, Head 10: yellow cell (score ~0.8)

- **X Marks** (Negative Name-Mover Heads):
  - Layer 10, Head 10

- **Dots** (Negative Backup Heads):
  - Layer 9, Head 10

### Key Observations

1. **Concentration of High Scores**: High name-copying scores cluster in layers 6-10, and the marked name-mover heads all sit in layers 8-11, with the strongest activity around layers 8-10 (heads 8-10).

2. **Suppression Paradox**: The suppression heatmap shows a single high-score (yellow) cell at Layer 10, Head 10 despite the panel's negative labeling, suggesting a possible measurement inconsistency.

3. **Backup Head Pattern**: Backup name-mover heads lie on a diagonal across layers 7-10, with Head = Layer - 5 (heads 2-5).

4. **Layer-Head Correlation**: Name-mover heads lie close to the diagonal (Head ≈ Layer) in layers 8-11.
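Observations 3 and 4 can be checked against the coordinates listed under Detailed Analysis by computing each marked head's offset from the diagonal (head minus layer). A minimal Python sketch; the names are hypothetical, and the coordinates are transcribed from this description rather than read from the underlying data.

```python
# (layer, head) pairs transcribed from the Detailed Analysis section.
NAME_MOVERS = [(8, 9), (9, 9), (10, 10), (11, 11)]
BACKUP_NAME_MOVERS = [(7, 2), (8, 3), (9, 4), (10, 5)]


def diagonal_offsets(coords: list[tuple[int, int]]) -> list[int]:
    """Offset of each head from the Head = Layer diagonal."""
    return [head - layer for layer, head in coords]


backup_offsets = diagonal_offsets(BACKUP_NAME_MOVERS)
mover_offsets = diagonal_offsets(NAME_MOVERS)

# Backup heads: a single constant offset means Head = Layer + offset exactly.
print("backup offsets:", backup_offsets)      # [-5, -5, -5, -5]
# Name movers: "Head ≈ Layer" taken here to mean within one head of the diagonal.
print("name-mover offsets:", mover_offsets)   # [1, 0, 0, 0]
print("name movers near diagonal:", all(abs(d) <= 1 for d in mover_offsets))  # True
```

A constant offset of -5 for the backup heads corresponds to Head = Layer - 5, and the name movers stay within one head of Head = Layer.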

### Interpretation

The data suggest a hierarchical organization of name-copying mechanisms:

1. **Primary Processing**: Name-mover heads dominate in later layers (8-11), suggesting progressive refinement of name representations.

2. **Redundancy Mechanism**: Backup heads follow a predictable pattern, potentially serving as fail-safes or alternative pathways.

3. **Suppression Anomaly**: The high score at Layer 10, Head 10 in the suppression heatmap appears to contradict the panel's negative labeling, which points to one of:
   - measurement error
   - non-linear suppression effects
   - context-dependent activation

This pattern suggests that GPT-2 uses specialized, layer-dependent mechanisms for name processing, with built-in redundancy and a possible dynamic suppression component that warrants further investigation.