## Diagram: Matryoshka Architecture

### Overview

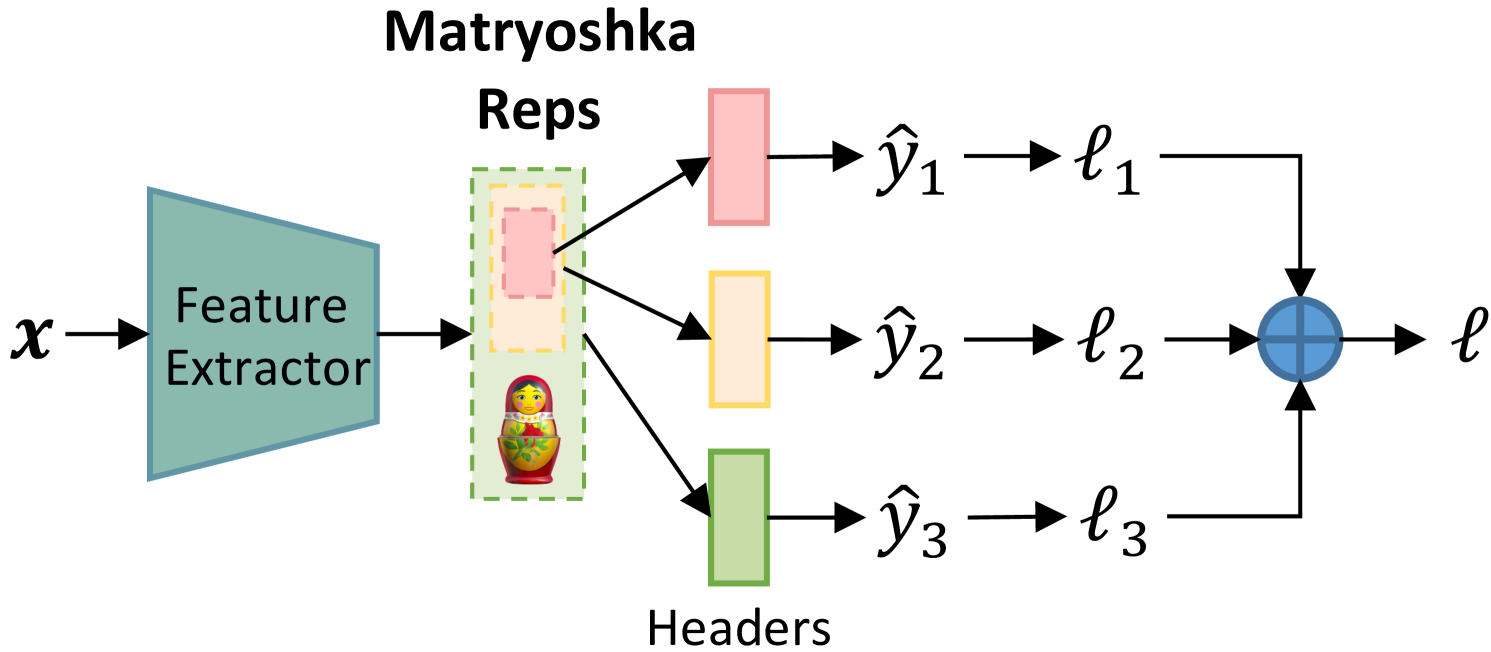

The diagram illustrates a hierarchical processing pipeline labeled "Matryoshka," featuring nested components and loss aggregation. The system processes input `x` through a Feature Extractor, followed by three parallel processing paths ("Reps" and "Headers") that generate predictions (`ŷ₁`, `ŷ₂`, `ŷ₃`) and associated losses (`ℓ₁`, `ℓ₂`, `ℓ₃`). These losses are combined into a final loss `ℓ` via a summation node.
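
The pipeline described above can be written compactly. This is only a notational sketch: the diagram names `x`, `ŷᵢ`, `ℓᵢ`, and `ℓ`, while the symbols $f$ (Feature Extractor), $r_i$ (the $i$-th representation), $h_i$ (the head producing `ŷᵢ`), $L$ (a per-path loss function), and $y$ (a target) are assumptions introduced here for illustration.

$$
z = f(x), \qquad \hat{y}_i = h_i(r_i), \qquad \ell_i = L(\hat{y}_i, y), \qquad \ell = \sum_{i=1}^{3} \ell_i
$$

Here each $r_i$ is assumed to be derived from $z$; the diagram does not state how the three representations relate to one another beyond the nesting suggested by the doll icon.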

### Components

1. **Input**:
   - `x` (input data) → Feature Extractor (blue hexagon)
2. **Reps Section**:
   - Dashed box labeled "Reps" containing a Matryoshka doll icon
   - Three colored rectangles (pink, orange, green) representing nested stages:
     - Pink: `ŷ₁` → `ℓ₁`
     - Orange: `ŷ₂` → `ℓ₂`
     - Green: `ŷ₃` → `ℓ₃`
3. **Headers Section**:
   - Green rectangle labeled "Headers" connected to `ŷ₃`
4. **Loss Aggregation**:
   - Blue circle labeled `ℓ` (final loss) receiving inputs from `ℓ₁`, `ℓ₂`, `ℓ₃`
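
One way to read the components listed above is as a forward pass that produces three predictions from a single feature vector. The sketch below is a minimal illustration under several assumptions that the diagram does not confirm: the nested "Reps" are modeled as prefixes of one feature vector with hypothetical sizes 2, 4, and 8; each prediction gets its own linear head; and the feature extractor is a stand-in random linear map with ReLU. All function names are hypothetical.

```python
import random

def feature_extractor(x, dim=8, seed=0):
    """Hypothetical stand-in: a fixed random linear map followed by ReLU."""
    rng = random.Random(seed)
    weights = [[rng.uniform(-1, 1) for _ in x] for _ in range(dim)]
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in weights]

def nested_reps(z, sizes=(2, 4, 8)):
    """Assumed nesting: each representation is a prefix of the next one."""
    return [z[:m] for m in sizes]

def make_head(in_dim, num_classes, seed):
    """Hypothetical linear head: one weight row per output class."""
    rng = random.Random(seed)
    return [[rng.uniform(-1, 1) for _ in range(in_dim)] for _ in range(num_classes)]

def apply_head(head, rep):
    """Compute one score per class from a representation."""
    return [sum(w * r for w, r in zip(row, rep)) for row in head]

x = [0.5, -1.0, 2.0]                      # example input
z = feature_extractor(x)                  # shared features
reps = nested_reps(z)                     # three nested representations
heads = [make_head(len(r), num_classes=3, seed=i + 1) for i, r in enumerate(reps)]
y_hats = [apply_head(h, r) for h, r in zip(heads, reps)]  # three predictions
```

Under this reading, the smallest representation is fully contained in the larger ones, which is what the doll icon suggests; other readings (for example, sequential refinement stages) are equally compatible with the description.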

### Detailed Analysis

- **Flow Direction**:
  - Input `x` flows rightward through the Feature Extractor.
  - Outputs from Reps (pink/orange/green) and Headers (green) feed into their respective loss functions.
  - Losses are combined at a summation node (a blue circle marked with a cross) labeled `ℓ`.
- **Color Coding**:
  - Pink/orange/green rectangles correspond to nested Reps stages.
  - Green rectangle in Headers section matches the green Reps rectangle, suggesting shared processing.
- **Matryoshka Symbolism**:
  - The doll icon in the Reps box implies hierarchical or recursive processing.

### Key Observations

1. **Parallel Processing**: Three prediction paths (`ŷ₁`, `ŷ₂`, `ŷ₃`) run side by side, each with its own loss term (`ℓ₁`, `ℓ₂`, `ℓ₃`).

2. **Loss Aggregation**: Final loss `ℓ` combines all individual losses, suggesting a multi-objective optimization framework.

3. **Hierarchical Structure**: The Matryoshka doll visualizes nested processing stages within the Reps section.

4. **Headers Integration**: The Headers section (green) appears to specialize in processing `ŷ₃`, potentially handling higher-level features.
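
The loss aggregation noted above can be illustrated with a small example. This is a sketch under assumptions: the diagram does not say which loss function is used, so softmax cross-entropy is chosen here for illustration, the logits are made-up values, and the losses are summed without weights because the diagram shows only a summation node.

```python
import math

def cross_entropy(logits, target):
    """Softmax cross-entropy for one example, stabilized by subtracting the max."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum_exp - logits[target]

# Hypothetical logits standing in for the three predictions.
y_hats = [
    [0.2, 0.1, -0.3],
    [1.0, 0.0, -1.0],
    [2.5, -0.5, -2.0],
]
target = 0  # assumed ground-truth class index

losses = [cross_entropy(y_hat, target) for y_hat in y_hats]  # per-path losses
total_loss = sum(losses)                                     # final aggregated loss
```

Because the total is a plain sum, each path contributes its gradient to whatever parameters it shares with the others; a weighted sum would be a natural variant, but nothing in the diagram indicates that weights are present.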

### Interpretation

This architecture represents a multi-task learning system with:

- **Feature Hierarchy**: The Feature Extractor transforms the input `x` into foundational features for downstream tasks.

- **Task Specialization**: Reps and Headers sections handle different aspects of prediction (`ŷ₁`, `ŷ₂`, `ŷ₃`).

- **Loss Coordination**: The summation node combines the individual losses into `ℓ`, so a single objective drives all three paths. The diagram shows no per-loss weights, so how trade-offs between the losses are balanced is not specified.

- **Recursive Design**: The Matryoshka doll metaphor suggests that deeper Reps stages may refine predictions from shallower stages.

The system likely optimizes for both accuracy (via task-specific losses) and generalization (via aggregated loss), with the Matryoshka structure enabling progressive feature abstraction.