TECHNICAL ASSET FINGERPRINT

c43eef42984614fa15e007a8

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Diagram: LLM Hallucination Mitigation Strategies

### Overview

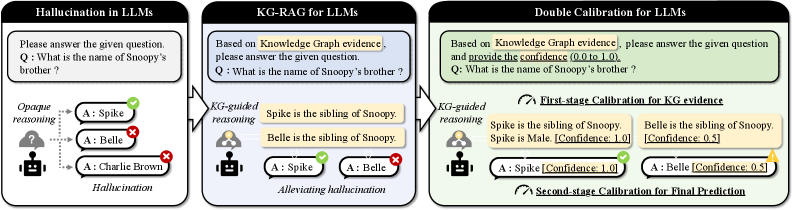

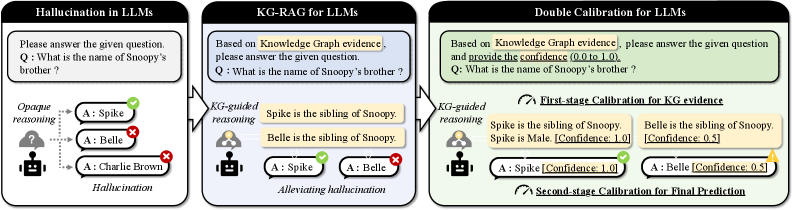

The image presents a diagram illustrating three different approaches to answering a question using Large Language Models (LLMs): Hallucination in LLMs, KG-RAG for LLMs, and Double Calibration for LLMs. It focuses on the question "What is the name of Snoopy's brother?" and demonstrates how different methods affect the accuracy and confidence of the answers.

### Components/Axes

* **Titles:**

* Hallucination in LLMs (Left)

* KG-RAG for LLMs (Center)

* Double Calibration for LLMs (Right)

* **Question:** "What is the name of Snoopy's brother?" (Appears in all three sections)

* **Agent Representation:** A robot icon represents the LLM agent.

* **Knowledge Source:** A human icon represents the knowledge graph.

* **Answer Indicators:** Green checkmarks indicate correct answers, red crosses indicate incorrect answers, and yellow triangles indicate warnings.

* **Confidence Scores:** Confidence scores (0.0 to 1.0) are provided in the "Double Calibration" section.

### Detailed Analysis

**1. Hallucination in LLMs (Left)**

* **Description:** This section demonstrates the baseline scenario where the LLM directly answers the question without external knowledge.

* **Reasoning:** "Opaque reasoning" is indicated by a question mark cloud above the robot.

* **Answers:**

* A: Spike (Green Checkmark) - Correct

* A: Belle (Red Cross) - Incorrect

* A: Charlie Brown (Red Cross) - Incorrect

* **Overall Result:** "Hallucination" is labeled at the bottom, indicating that two of the LLM's three answers (Belle and Charlie Brown) are incorrect.

**2. KG-RAG for LLMs (Center)**

* **Description:** This section shows the Knowledge Graph Retrieval-Augmented Generation (KG-RAG) approach, where the LLM uses a knowledge graph to inform its answer.

* **Knowledge Graph Evidence:**

* "Spike is the sibling of Snoopy."

* "Belle is the sibling of Snoopy."

* **Reasoning:** "KG-guided reasoning" is indicated by a human icon connected to the robot.

* **Answers:**

* A: Spike (Green Checkmark) - Correct

* A: Belle (Red Cross) - Incorrect

* **Overall Result:** "Alleviating hallucination" is labeled at the bottom, indicating the KG-RAG approach reduces hallucination but doesn't eliminate it.

**3. Double Calibration for LLMs (Right)**

* **Description:** This section presents a double calibration approach, where the LLM uses a knowledge graph and provides confidence scores for its answers.

* **Knowledge Graph Evidence:**

* "Spike is the sibling of Snoopy. Spike is Male. [Confidence: 1.0]"

* "Belle is the sibling of Snoopy. [Confidence: 0.5]"

* **Reasoning:** "KG-guided reasoning" is indicated by a human icon connected to the robot.

* **Calibration Stages:**

* "First-stage Calibration for KG evidence"

* "Second-stage Calibration for Final Prediction"

* **Answers:**

* A: Spike [Confidence: 1.0] (Green Checkmark) - Correct

* A: Belle [Confidence: 0.5] (Yellow Triangle) - Incorrect, but with a lower confidence score.

* **Overall Result:** The LLM assigns a higher confidence score to the correct answer (Spike, 1.0) than to the incorrect one (Belle, 0.5). A user can therefore act on the first answer and treat the second as uncertain.
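The answers in this panel follow a fixed `A: <answer> [Confidence: <score>]` pattern. The Python sketch below parses such lines. It assumes the model reproduces exactly the format shown in the diagram. The regular expression and the `parse_answers` helper are illustrative only and are not part of the described method.

```python
import re
from typing import List, Tuple

# Matches answer lines in the format shown in the diagram, e.g. "A: Spike [Confidence: 1.0]".
# That a real model emits exactly this format is an assumption.
ANSWER_RE = re.compile(r"^A:\s*(?P<answer>.+?)\s*\[Confidence:\s*(?P<conf>[0-9]*\.?[0-9]+)\]\s*$")


def parse_answers(text: str) -> List[Tuple[str, float]]:
    """Extract (answer, confidence) pairs, skipping lines that do not match."""
    results = []
    for line in text.splitlines():
        match = ANSWER_RE.match(line.strip())
        if match is None:
            continue
        conf = float(match.group("conf"))
        # The prompt asks for a confidence between 0.0 and 1.0; reject anything outside it.
        if not 0.0 <= conf <= 1.0:
            raise ValueError(f"confidence out of range: {conf}")
        results.append((match.group("answer"), conf))
    return results


output = "A: Spike [Confidence: 1.0]\nA: Belle [Confidence: 0.5]"
print(parse_answers(output))  # [('Spike', 1.0), ('Belle', 0.5)]
```

Lines without a confidence tag, such as the baseline panel's plain `A: Spike`, are skipped rather than guessed.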

### Key Observations

* The baseline LLM (left) hallucinates. It mixes two incorrect answers with the correct one and gives no indication of confidence.

* KG-RAG (center) improves accuracy by grounding the answer in external knowledge, but it still outputs one incorrect answer (Belle).

* Double Calibration (right) not only uses external knowledge but also provides confidence scores, allowing for better identification of potentially incorrect answers.

### Interpretation

The diagram demonstrates the importance of using external knowledge and confidence calibration to mitigate hallucination in LLMs. The Double Calibration approach is presented as the most effective. It still outputs the incorrect answer, but its confidence scores (1.0 for Spike, 0.5 for Belle) let users judge how reliable each response is and filter out likely errors. The diagram highlights the limitations of relying solely on LLMs without external knowledge and calibration mechanisms.
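The filtering described above can be sketched in Python. The sketch uses the per-answer scores from the right panel and a hypothetical acceptance threshold of 0.8; the diagram does not specify any threshold. Answers are split into an accepted group and a flagged group.

```python
from typing import Dict, List, Tuple

# Per-answer confidence scores as reported in the Double Calibration panel.
predictions: Dict[str, float] = {"Spike": 1.0, "Belle": 0.5}


def split_by_confidence(preds: Dict[str, float], threshold: float) -> Tuple[List[str], List[str]]:
    """Return (accepted, flagged): answers at or above the threshold, and the rest."""
    accepted = [a for a, c in preds.items() if c >= threshold]
    flagged = [a for a, c in preds.items() if c < threshold]
    return accepted, flagged


# The 0.8 cut-off is a hypothetical choice; the diagram does not specify one.
accepted, flagged = split_by_confidence(predictions, threshold=0.8)
print(accepted, flagged)  # ['Spike'] ['Belle']
```

A lower threshold trades coverage for risk. At 0.5, for example, both answers would be accepted, including the incorrect one.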

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: LLM Reasoning Processes - Hallucination, KG-RAG, and Double Calibration

### Overview

The image presents a comparative diagram illustrating three different approaches to reasoning in Large Language Models (LLMs): Hallucination in LLMs, Knowledge Graph-Retrieval Augmented Generation (KG-RAG), and Double Calibration. Each approach is depicted as a flow diagram, showing the question, reasoning process, and answer generated. The diagrams highlight how each method handles the question "What is the name of Snoopy's brother?".

### Components/Axes

Each diagram consists of the following components:

* **Question Box:** A light green box containing the question: "Q: What is the name of Snoopy's brother?".

* **Reasoning Block:** A gray block representing the reasoning process.

* **Answer Blocks:** Two light blue blocks representing potential answers, each with a checkmark (correct) or an 'X' (incorrect).

* **Final Output:** A brown box (in the first diagram) or a text label indicating the final output or calibration stage.

* **Icons:** Human icons representing the LLM and knowledge sources.

### Detailed Analysis or Content Details

**1. Hallucination in LLMs (Left Diagram)**

* **Question:** "Q: What is the name of Snoopy's brother?"

* **Reasoning:** "Opaque reasoning" is indicated within the gray block. A question mark icon represents the reasoning process.

* **Answers:**

* A: Spike (marked with a red 'X' - incorrect)

* A: Belle (marked with a red 'X' - incorrect)

* **Final Output:** "Charlie Brown" is presented in a brown box labeled "Hallucination".

**2. KG-RAG for LLMs (Center Diagram)**

* **Question:** "Q: What is the name of Snoopy's brother?"

* **Reasoning:** "KG-guided reasoning" is indicated within the gray block. Two human icons are shown, one with a knowledge graph symbol.

* **Knowledge Graph Evidence:**

* "Spike is the sibling of Snoopy."

* "Belle is the sibling of Snoopy."

* **Answers:**

* A: Spike (marked with a green checkmark - correct)

* A: Belle (marked with a green checkmark - correct)

* **Final Output:** "Alleviating hallucination" is indicated.

**3. Double Calibration for LLMs (Right Diagram)**

* **Question:** "Q: What is the name of Snoopy's brother?"

* **Reasoning:** "KG-guided reasoning" is indicated within the gray block. Two human icons are shown, one with a knowledge graph symbol.

* **First-stage Calibration for KG evidence:**

* "Spike is the sibling of Snoopy." - Spike is Male [Confidence: 1.0] (marked with a green checkmark)

* "Belle is the sibling of Snoopy." - Belle is Female [Confidence: 0.5] (marked with a yellow triangle)

* **Answers:**

* A: Spike [Confidence: 1.0] (marked with a green checkmark)

* A: Belle [Confidence: 0.5] (marked with a yellow triangle)

* **Second-stage Calibration for Final Prediction:** Indicated below the answers.

### Key Observations

* The "Hallucination" diagram demonstrates the LLM generating an incorrect answer ("Charlie Brown") without relying on external knowledge.

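* The "KG-RAG" diagram shows the LLM using a knowledge graph to retrieve both of Snoopy's siblings ("Spike" and "Belle"). Both are marked with checkmarks here, even though the first-stage evidence in the right panel identifies Belle as female.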

* The "Double Calibration" diagram introduces a confidence score for each answer, with "Spike" having a higher confidence (1.0) than "Belle" (0.5). This suggests a mechanism for prioritizing more reliable answers.

* The use of checkmarks, 'X' marks, and confidence scores visually indicates the accuracy and reliability of the answers.

### Interpretation

The diagrams illustrate a progression in LLM reasoning techniques aimed at mitigating the problem of "hallucination" – the generation of factually incorrect or nonsensical information. The first diagram highlights the vulnerability of a standard LLM to generating incorrect responses. The second diagram demonstrates how integrating a knowledge graph (KG-RAG) can significantly improve accuracy by grounding the LLM's reasoning in verifiable facts. The third diagram introduces a further refinement – double calibration – which not only leverages a knowledge graph but also assigns confidence scores to the retrieved information, allowing for a more nuanced and reliable final prediction.

The confidence scores in the "Double Calibration" diagram suggest a probabilistic approach to reasoning. Rather than giving a single answer, the LLM produces a ranked list of possibilities, each with a confidence score. This is a significant step towards building more trustworthy and explainable AI systems. The color-coding (green for correct, red for incorrect, yellow for uncertain) gives a clear visual representation of the system's confidence in its answers. Taken together, the diagrams show a shift from opaque, potentially unreliable reasoning to a more transparent, knowledge-grounded, and calibrated approach.
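The two scores in the right panel sum to 1.5. They are therefore independent per-answer confidences, not a probability distribution over answers. If a distribution is wanted for ranking, one simple option is to sum-normalize the scores, as in the Python sketch below. This normalization is an assumption and is not shown in the diagram.

```python
from typing import Dict

# Per-answer confidences from the Double Calibration panel; they sum to 1.5, not 1.0.
confidences: Dict[str, float] = {"Spike": 1.0, "Belle": 0.5}


def normalize(scores: Dict[str, float]) -> Dict[str, float]:
    """Rescale non-negative scores so they sum to 1 (a simple sum-normalization)."""
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("scores must have a positive sum")
    return {answer: score / total for answer, score in scores.items()}


ranked = sorted(normalize(confidences).items(), key=lambda kv: kv[1], reverse=True)
for answer, prob in ranked:
    print(f"{answer}: {prob:.3f}")
# Spike: 0.667
# Belle: 0.333
```

Normalization preserves the ranking but discards the absolute scale. Spike's raw 1.0 ("certain") becomes 0.667, so the raw scores are the ones to threshold on.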

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Comparison of LLM Hallucination Mitigation Approaches

### Overview

The image is a technical diagram comparing three sequential approaches to addressing the problem of factual hallucinations in Large Language Models (LLMs). It illustrates a progression from a baseline problem to increasingly sophisticated solutions involving Knowledge Graphs (KG) and confidence calibration. The diagram is divided into three distinct panels arranged horizontally from left to right.

### Components/Axes

The diagram consists of three main panels, each with a title, a question box, a reasoning process visualization, and an outcome.

**Panel 1 (Left): "Hallucination in LLMs"**

* **Title:** Hallucination in LLMs

* **Question Box:** Contains the text: "Please answer the given question. Q: What is the name of Snoopy's brother?"

* **Reasoning Process:** Labeled "Opaque reasoning". An icon of a person with a cloud (representing an LLM) generates three possible answers.

* **Answers & Outcomes:**

* `A: Spike` (Marked with a green checkmark ✓)

* `A: Belle` (Marked with a red cross ✗)

* `A: Charlie Brown` (Marked with a red cross ✗)

* **Outcome Label:** "Hallucination"

**Panel 2 (Center): "KG-RAG for LLMs"**

* **Title:** KG-RAG for LLMs

* **Question Box:** Contains the text: "Based on Knowledge Graph evidence, please answer the given question. Q: What is the name of Snoopy's brother?"

* **Reasoning Process:** Labeled "KG-guided reasoning". An icon of a person with a network graph (representing a Knowledge Graph) retrieves evidence.

* **Evidence Retrieved:**

* "Spike is the sibling of Snoopy."

* "Belle is the sibling of Snoopy."

* **Answers & Outcomes:**

* `A: Spike` (Marked with a green checkmark ✓)

* `A: Belle` (Marked with a red cross ✗)

* **Outcome Label:** "Alleviating hallucination"

**Panel 3 (Right): "Double Calibration for LLMs"**

* **Title:** Double Calibration for LLMs

* **Question Box:** Contains the text: "Based on Knowledge Graph evidence, please answer the given question and provide the confidence (0.0 to 1.0). Q: What is the name of Snoopy's brother?"

* **Reasoning Process:** Labeled "KG-guided reasoning". The same Knowledge Graph icon retrieves evidence with added confidence scores.

* **Evidence Retrieved (with Confidence):**

* "Spike is the sibling of Snoopy. Spike is Male [Confidence: 1.0]"

* "Belle is the sibling of Snoopy. [Confidence: 0.5]"

* **Calibration Stages:**

* "First-stage Calibration for KG evidence" (indicated by an arrow pointing to the evidence).

* "Second-stage Calibration for Final Prediction" (indicated by an arrow pointing to the final answers).

* **Answers & Outcomes (with Confidence):**

* `A: Spike [Confidence: 1.0]` (Marked with a green checkmark ✓)

* `A: Belle [Confidence: 0.5]` (Marked with a yellow question mark ?)
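The three question boxes differ only in their instruction text. The Python sketch below collects that wording verbatim and assembles a prompt from it. The `build_prompt` helper, the "Evidence:" header, and the newline layout are hypothetical choices, because the diagram does not show how evidence is inserted into the prompt.

```python
from typing import List, Optional

# Instruction wording copied from the three question boxes in the diagram.
BASELINE = "Please answer the given question."
KG_RAG = "Based on Knowledge Graph evidence, please answer the given question."
DOUBLE_CALIBRATION = (
    "Based on Knowledge Graph evidence, please answer the given question "
    "and provide the confidence (0.0 to 1.0)."
)


def build_prompt(instruction: str, question: str, evidence: Optional[List[str]] = None) -> str:
    """Assemble instruction, optional evidence lines, and the question into one prompt."""
    parts = [instruction]
    if evidence:
        # Hypothetical layout: the diagram does not show where evidence goes.
        parts.append("Evidence:\n" + "\n".join(evidence))
    parts.append(f"Q: {question}")
    return "\n".join(parts)


question = "What is the name of Snoopy's brother?"
evidence = ["Spike is the sibling of Snoopy.", "Belle is the sibling of Snoopy."]
print(build_prompt(DOUBLE_CALIBRATION, question, evidence))
```

Calling `build_prompt(BASELINE, question)` without evidence reproduces the left panel's setting, where the model relies only on its internal knowledge.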

### Detailed Analysis

The diagram presents a clear three-stage evolution:

1. **Baseline Problem (Panel 1):** An LLM, using "opaque reasoning" (internal, ungrounded knowledge), is asked a factual question. It generates multiple answers, one correct ("Spike") and two incorrect ("Belle", "Charlie Brown"). The incorrect outputs are labeled as hallucinations.

2. **First Mitigation (Panel 2):** The approach is augmented with Retrieval-Augmented Generation (RAG) using a Knowledge Graph ("KG-RAG"). The model's reasoning is now "KG-guided." It retrieves explicit evidence from the KG: both "Spike" and "Belle" are listed as siblings of Snoopy. This evidence states only the sibling relation, not gender, so it cannot separate the brother from the sister. The model still outputs both names, and the diagram marks "Belle" as incorrect. This is labeled as "alleviating hallucination."

3. **Advanced Mitigation (Panel 3):** The system introduces "Double Calibration." The process is modified to require confidence scores (0.0 to 1.0).

* **First-stage Calibration:** Applied to the KG evidence itself. The evidence for "Spike" is augmented with the fact "Spike is Male" and given a high confidence of `1.0`. The evidence for "Belle" is given a lower confidence of `0.5`.

* **Second-stage Calibration:** Applied to the final prediction. The model outputs both answers but attaches calibrated confidence scores: `Spike [Confidence: 1.0]` and `Belle [Confidence: 0.5]`. The high-confidence answer is marked correct, while the low-confidence answer is marked with a question mark, indicating uncertainty rather than a definitive error.
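The diagram shows the output of each stage but not how evidence confidence becomes answer confidence. The Python sketch below uses one hypothetical second-stage rule: each candidate takes the maximum confidence among the evidence that supports it. With the panel's evidence scores, this reproduces the final 1.0 and 0.5. The `Evidence` class and the max rule are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Evidence:
    text: str
    entity: str        # candidate answer this evidence supports
    confidence: float  # first-stage score attached to the evidence


def aggregate(evidence: List[Evidence]) -> Dict[str, float]:
    """Second-stage sketch: each candidate takes the max confidence of its supporting evidence.

    The max rule is a hypothetical choice; the diagram does not state the aggregation.
    """
    scores: Dict[str, float] = {}
    for ev in evidence:
        scores[ev.entity] = max(scores.get(ev.entity, 0.0), ev.confidence)
    return scores


kg_evidence = [
    Evidence("Spike is the sibling of Snoopy. Spike is Male", "Spike", 1.0),
    Evidence("Belle is the sibling of Snoopy.", "Belle", 0.5),
]
print(aggregate(kg_evidence))  # {'Spike': 1.0, 'Belle': 0.5}
```

Other aggregations, such as averaging or a noisy-OR over several supporting facts, would fit the same interface. The diagram's single-fact-per-candidate example cannot distinguish between them.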

### Key Observations

* **Progression of Transparency:** The reasoning process evolves from "opaque" to "KG-guided," making the source of information explicit.

* **Introduction of Uncertainty Quantification:** The final panel introduces numerical confidence scores, first for the retrieved evidence and then for the final answers. This allows the system to express doubt.

* **Visual Coding of Correctness:** The first two panels mark answers with ✓/✗. The final panel replaces the ✗ with a ?, reflecting the shift from hard correctness judgments to probabilistic confidence.

* **Evidence Augmentation:** In the calibrated system, the evidence for the correct answer ("Spike") is strengthened with an additional, high-confidence fact ("Spike is Male"), which likely contributes to its higher final confidence score.
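The diagram does not state how the first-stage scores (1.0 for Spike, 0.5 for Belle) are produced. One hypothetical rule is consistent with them. The question asks for a brother, which implies two constraints: sibling of Snoopy, and male. Each candidate's evidence is then scored by the fraction of constraints it confirms. The Python sketch below implements that toy rule; it is an illustration, not the paper's method.

```python
from typing import Dict, Optional

# Hypothetical first-stage rule: a "brother" must be a sibling AND male.
# Evidence confirming both constraints scores 1.0; confirming only one scores 0.5.
# The diagram shows the resulting scores (1.0, 0.5) but not how they were computed.


def score_brother_evidence(facts: Dict[str, Optional[str]]) -> float:
    """Score a candidate from its KG facts: relation to Snoopy and gender (None if unknown)."""
    constraints = [
        facts.get("relation") == "sibling",
        facts.get("gender") == "male",
    ]
    return sum(constraints) / len(constraints)


spike = {"relation": "sibling", "gender": "male"}  # "Spike is the sibling of Snoopy. Spike is Male"
belle = {"relation": "sibling", "gender": None}    # "Belle is the sibling of Snoopy." (no gender)
print(score_brother_evidence(spike), score_brother_evidence(belle))  # 1.0 0.5
```

Under this toy rule, the extra "Spike is Male" fact is exactly what lifts Spike from 0.5 to 1.0, which matches the observation above.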

### Interpretation

This diagram demonstrates a methodological framework for improving the factual reliability of LLMs. It argues that simply retrieving external knowledge (KG-RAG) is insufficient to fully resolve conflicts in retrieved data (e.g., two siblings). The proposed "Double Calibration" method addresses this by:

1. **Quantifying Evidence Reliability:** Assigning confidence scores to knowledge graph facts, allowing the model to weigh more reliable information more heavily.

2. **Quantifying Output Uncertainty:** Requiring the model to express its final answer confidence, enabling downstream systems or users to distinguish between high-certainty facts and low-certainty guesses.

The core insight is that moving from a deterministic "right/wrong" paradigm to a probabilistic "confidence-scored" paradigm allows for more nuanced and trustworthy AI outputs. The system does not remove the incorrect answer. Instead, it explicitly flags that answer with a low confidence score, which is crucial for high-stakes applications. The progression shows a shift from the model being a black-box answer generator to a calibrated reasoner that can communicate its own uncertainty.
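The diagram does not say how calibration quality is measured. A common metric is expected calibration error (ECE). ECE bins predictions by confidence, computes the gap between accuracy and mean confidence in each bin, and averages those gaps weighted by bin size. The Python sketch below uses equal-width bins. Applying it to only the two diagram answers is purely illustrative; a real evaluation needs many questions.

```python
from typing import List


def expected_calibration_error(confidences: List[float], correct: List[bool], n_bins: int = 10) -> float:
    """ECE: weighted mean |accuracy - mean confidence| over equal-width confidence bins."""
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need equal-length, non-empty inputs")
    n = len(confidences)
    ece = 0.0
    for b in range(n_bins):
        lo, hi = b / n_bins, (b + 1) / n_bins
        # Bins are (lo, hi]; a confidence of exactly 0.0 falls into the first bin.
        idx = [i for i, c in enumerate(confidences) if (lo < c <= hi) or (b == 0 and c == 0.0)]
        if not idx:
            continue
        acc = sum(correct[i] for i in idx) / len(idx)
        avg_conf = sum(confidences[i] for i in idx) / len(idx)
        ece += len(idx) / n * abs(acc - avg_conf)
    return ece


# The two diagram answers: Spike (1.0, correct) and Belle (0.5, incorrect).
print(round(expected_calibration_error([1.0, 0.5], [True, False]), 3))  # 0.25
```

Here the correct Spike answer at confidence 1.0 contributes no error. The whole 0.25 comes from Belle, which is wrong at confidence 0.5.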

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Comparison of Methods to Mitigate Hallucination in LLMs

### Overview

The diagram compares three approaches to address hallucination in Large Language Models (LLMs):

1. **Hallucination in LLMs** (left panel): Demonstrates opaque reasoning leading to incorrect answers.

2. **KG-RAG for LLMs** (center panel): Uses knowledge graph (KG) evidence to guide reasoning and reduce hallucination.

3. **Double Calibration for LLMs** (right panel): Combines KG evidence with confidence scores to refine predictions.

### Components/Axes

- **Panels**: Three vertical sections labeled:

- *Hallucination in LLMs*

- *KG-RAG for LLMs*

- *Double Calibration for LLMs*

- **Elements**:

- **Question**: "What is the name of Snoopy’s brother?" in all panels.

- **Answers**:

- *Hallucination in LLMs*: Spike (✓), Belle (✗), Charlie Brown (✗).

- *KG-RAG for LLMs*: Spike (✓), Belle (✗).

- *Double Calibration for LLMs*: Spike (✓, Confidence: 1.0), Belle (✗, Confidence: 0.5).

- **Reasoning Steps**:

- *KG-guided reasoning* (center panel): "Spike is the sibling of Snoopy."

- *Double Calibration*:

- First-stage: Confidence scores for KG evidence (Spike: 1.0, Belle: 0.5).

- Second-stage: Confidence scores for final prediction (Spike: 1.0, Belle: 0.5).

- **Icons**:

- Robot with a question mark (uncertainty) in the first panel.

- Human figures with thought bubbles (reasoning) in the center and right panels.

### Detailed Analysis

#### Hallucination in LLMs

- **Question**: "What is the name of Snoopy’s brother?"

- **Answers**:

- Spike (correct, marked ✓).

- Belle (incorrect, marked ✗).

- Charlie Brown (incorrect, marked ✗).

- **Reasoning**: Opaque, leading to hallucination (incorrect answers).

#### KG-RAG for LLMs

- **Question**: Same as above.

- **KG-guided reasoning**: "Spike is the sibling of Snoopy."

- **Answers**:

- Spike (correct, marked ✓).

- Belle (incorrect, marked ✗).

- **Flow**: KG evidence directly guides the correct answer.

#### Double Calibration for LLMs

- **Question**: Same as above.

- **First-stage Calibration (KG evidence)**:

- "Spike is the sibling of Snoopy." (Confidence: 1.0).

- "Belle is the sibling of Snoopy." (Confidence: 0.5).

- **Second-stage Calibration (Final Prediction)**:

- Spike (Confidence: 1.0).

- Belle (Confidence: 0.5).

- **Additional Context**: Spike is male (Confidence: 1.0).

### Key Observations

1. **Progression of Accuracy**:

- Opaque reasoning (left) produces hallucinations (incorrect answers).

- KG-guided reasoning (center) reduces hallucination by leveraging structured evidence.

- Double calibration (right) further refines predictions using confidence scores.

2. **Confidence Scores**:

- Spike has the highest confidence (1.0) at both calibration stages; confidence scores appear only in the Double Calibration panel.

- Belle's confidence remains at 0.5 from the KG-evidence stage to the final prediction, indicating uncertainty.

3. **Flow Direction**:

- Left to right: Increasing reliance on KG evidence and calibration.

### Interpretation

- **Mechanism of Hallucination**: The left panel shows LLMs generating answers without external validation, leading to errors (e.g., Belle and Charlie Brown).

- **Role of KG-RAG**: The center panel shows how integrating knowledge graphs (e.g., "Spike is the sibling of Snoopy") constrains answers to factual data. This reduces incorrect options, although Belle is still output.

- **Double Calibration**: The right panel introduces confidence scores to quantify uncertainty. By cross-referencing KG evidence (first-stage) and additional attributes (e.g., Spike’s gender), the model achieves higher reliability.

- **Critical Insight**: Combining structured knowledge (KG) with iterative calibration (confidence scoring) mitigates hallucination. The correct answer "Spike" receives confidence 1.0, while the incorrect "Belle" receives only 0.5, so the two can be told apart.

## Notes

- No non-English text is present.

- All labels and values are explicitly stated in the diagram.

- Spatial grounding: Elements are vertically aligned within panels, with reasoning steps positioned below questions and answers.

- No charts/graphs or numerical trends beyond confidence scores (0.0–1.0).