## Diagram: LLM Hallucination Mitigation Strategies

### Overview

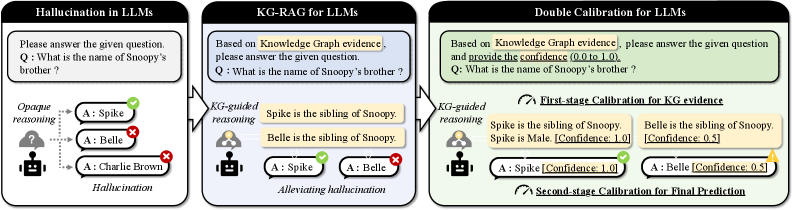

The diagram compares three scenarios for answering a question with a Large Language Model (LLM): an LLM answering on its own (titled "Hallucination in LLMs"), KG-RAG for LLMs, and Double Calibration for LLMs. All three panels use the question "What is the name of Snoopy's brother?" and show how each setup affects the correctness of the answers and, in the third panel, the confidence attached to them.

### Components/Axes

* **Titles:**

    * Hallucination in LLMs (Left)

    * KG-RAG for LLMs (Center)

    * Double Calibration for LLMs (Right)

* **Question:** "What is the name of Snoopy's brother?" (Appears in all three sections)

* **Agent Representation:** A robot icon represents the LLM agent.

* **Knowledge Source:** A human icon represents the knowledge graph.

* **Answer Indicators:** Green checkmarks indicate correct answers, red crosses indicate incorrect answers, and yellow triangles indicate warnings.

* **Confidence Scores:** Confidence scores (0.0 to 1.0) are provided in the "Double Calibration" section.

### Detailed Analysis

**1. Hallucination in LLMs (Left)**

* **Description:** This section demonstrates the baseline scenario where the LLM directly answers the question without external knowledge.

* **Reasoning:** "Opaque reasoning" is indicated by a question mark cloud above the robot.

* **Answers:**

    * A: Spike (Green Checkmark) - Correct

    * A: Belle (Red Cross) - Incorrect

    * A: Charlie Brown (Red Cross) - Incorrect

* **Overall Result:** "Hallucination" is labeled at the bottom: alongside the correct answer, the LLM produces two incorrect ones.

**2. KG-RAG for LLMs (Center)**

* **Description:** This section shows the Knowledge Graph Retrieval-Augmented Generation (KG-RAG) approach, where the LLM uses a knowledge graph to inform its answer.

* **Knowledge Graph Evidence:**

    * "Spike is the sibling of Snoopy."

    * "Belle is the sibling of Snoopy."

* **Reasoning:** "KG-guided reasoning" is indicated by a human icon connected to the robot.

* **Answers:**

    * A: Spike (Green Checkmark) - Correct

    * A: Belle (Red Cross) - Incorrect

* **Overall Result:** "Alleviating hallucination" is labeled at the bottom, indicating the KG-RAG approach reduces hallucination but doesn't eliminate it.
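The KG-RAG flow in this panel can be sketched roughly as follows. This is a minimal illustration, not the method behind the diagram: the triple store, the `retrieve_evidence` and `build_prompt` helpers, and the prompt layout are all hypothetical, and the Charlie Brown triple is added only to show that unrelated relations are filtered out. No LLM is called; the sketch stops at the prompt that would be sent to one.

```python
# Toy knowledge graph as (subject, relation, object) triples.
# The two sibling triples mirror the evidence in the diagram; the third
# triple and the whole data layout are assumptions made for illustration.
TRIPLES = [
    ("Spike", "sibling of", "Snoopy"),
    ("Belle", "sibling of", "Snoopy"),
    ("Charlie Brown", "owner of", "Snoopy"),
]


def retrieve_evidence(entity, relation, triples):
    """Return verbalized triples that match the relation and point at the entity."""
    return [
        f"{subj} is the {rel} {obj}."
        for subj, rel, obj in triples
        if rel == relation and obj == entity
    ]


def build_prompt(question, evidence):
    """Put the retrieved evidence in front of the question (hypothetical layout)."""
    lines = ["Evidence:"]
    lines += [f"- {fact}" for fact in evidence]
    lines += [f"Question: {question}", "Answer:"]
    return "\n".join(lines)


evidence = retrieve_evidence("Snoopy", "sibling of", TRIPLES)
prompt = build_prompt("What is the name of Snoopy's brother?", evidence)
print(prompt)
```

Both sibling facts reach the prompt with equal weight, which is consistent with the panel showing Belle as a surviving incorrect answer.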

**3. Double Calibration for LLMs (Right)**

* **Description:** This section presents a double calibration approach, where the LLM uses a knowledge graph and confidence scores are attached both to the KG evidence and to the final answers.

* **Knowledge Graph Evidence:**

    * "Spike is the sibling of Snoopy. Spike is Male. [Confidence: 1.0]"

    * "Belle is the sibling of Snoopy. [Confidence: 0.5]"

* **Reasoning:** "KG-guided reasoning" is indicated by a human icon connected to the robot.

* **Calibration Stages:**

    * "First-stage Calibration for KG evidence"

    * "Second-stage Calibration for Final Prediction"

* **Answers:**

    * A: Spike [Confidence: 1.0] (Green Checkmark) - Correct

    * A: Belle [Confidence: 0.5] (Yellow Triangle) - Incorrect, but with a lower confidence score.

* **Overall Result:** The LLM assigns a confidence of 1.0 to the correct answer (Spike) and 0.5 to the incorrect one (Belle), so a user or downstream system can treat the low-confidence answer with caution.
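The diagram does not say how either calibration stage computes its scores. The sketch below is one toy way to reproduce the numbers shown, under loudly stated assumptions: "brother" is split by hand into two constraints (sibling of Snoopy, male), stage 1 scores evidence by the fraction of constraints it supports, and stage 2 caps a made-up model confidence by that evidence score. All names and the scoring rules are hypothetical.

```python
# Attributes the KG supports for each candidate, taken from the evidence
# quoted in the diagram. The set-based encoding is an assumption.
EVIDENCE = {
    "Spike": {"sibling of Snoopy", "male"},
    "Belle": {"sibling of Snoopy"},
}

# "brother" decomposed by hand into two constraints (assumption of this sketch).
CONSTRAINTS = {"sibling of Snoopy", "male"}


def evidence_confidence(supported, constraints):
    """Stage 1 (toy rule): fraction of question constraints the evidence supports."""
    return len(supported & constraints) / len(constraints)


def final_confidence(evidence_conf, model_conf):
    """Stage 2 (toy rule): the answer is never more confident than its evidence."""
    return min(evidence_conf, model_conf)


stage1 = {
    name: evidence_confidence(attrs, CONSTRAINTS)
    for name, attrs in EVIDENCE.items()
}

# Self-reported model confidences, invented purely for illustration.
model_conf = {"Spike": 1.0, "Belle": 0.9}

stage2 = {name: final_confidence(stage1[name], model_conf[name]) for name in stage1}
print(stage2)  # {'Spike': 1.0, 'Belle': 0.5}
```

Under these assumptions, Belle's score drops because the evidence supports only one of the two constraints, which matches the 0.5 shown in the panel.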

### Key Observations

* The baseline LLM (left) hallucinates and provides incorrect answers with no indication of confidence.

* KG-RAG (center) improves accuracy by using external knowledge but still returns one incorrect answer (Belle), whose KG evidence establishes only a sibling relation.

* Double Calibration (right) not only uses external knowledge but also provides confidence scores, allowing for better identification of potentially incorrect answers.

### Interpretation

The diagram argues that external knowledge and confidence calibration both help mitigate hallucination in LLMs. It presents Double Calibration as the most effective of the three setups: the incorrect answer (Belle) still appears, but it carries a confidence of 0.5 versus 1.0 for the correct answer (Spike), so users can assess how reliable each response is and filter out low-confidence answers. The left and center panels show the limits of the alternatives: an LLM without external knowledge produces two incorrect answers, and KG-RAG without calibration gives no signal for telling its correct answer from its incorrect one.
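Filtering on confidence could look like the sketch below. It uses the two scores from the right-hand panel; the `Answer` type, the `split_by_confidence` helper, and the 0.8 threshold are hypothetical choices for illustration, not values taken from the diagram.

```python
from typing import NamedTuple


class Answer(NamedTuple):
    text: str
    confidence: float


def split_by_confidence(answers, threshold):
    """Separate answers at or above the threshold from those below it."""
    accepted = [a for a in answers if a.confidence >= threshold]
    flagged = [a for a in answers if a.confidence < threshold]
    return accepted, flagged


# Scores as shown in the Double Calibration panel.
answers = [Answer("Spike", 1.0), Answer("Belle", 0.5)]

# The threshold is an arbitrary illustrative value.
accepted, flagged = split_by_confidence(answers, threshold=0.8)
print([a.text for a in accepted])  # ['Spike']
print([a.text for a in flagged])   # ['Belle']
```

Returning the flagged answers instead of discarding them mirrors the yellow warning triangle in the diagram: the low-confidence answer stays visible but is marked for caution.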