
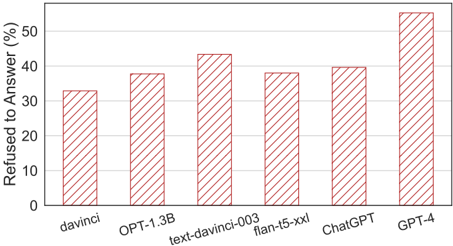

## Bar Chart: AI Model Refusal Rates

### Overview

The image is a vertical bar chart comparing the percentage of queries that six AI models refused to answer. The chart uses a single data series, drawn as red, diagonally hatched bars on a white background with light gray gridlines.

### Components/Axes

* **Chart Type:** Vertical bar chart.
* **Y-Axis (Vertical):**
  * **Label:** "Refused to Answer (%)"
  * **Scale:** Linear, from 0 to 50, with major tick marks and labels at intervals of 10 (0, 10, 20, 30, 40, 50).
* **X-Axis (Horizontal):**
  * **Label:** None. The axis carries a categorical label under each bar.
  * **Categories (left to right):** `davinci`, `OPT-1.3B`, `text-davinci-003`, `flan-t5-xxl`, `ChatGPT`, `GPT-4`.
* **Legend:** None. The single data series is implied by the uniform bar style.
* **Visual Style:** All bars are filled with a red diagonal hatching pattern (`///`). The layout is simple and clean, with horizontal gridlines extending from the y-axis ticks.

### Detailed Analysis

The following table reconstructs the data presented in the chart. Values are approximate, estimated from the bar heights relative to the y-axis scale.

| Model (X-Axis Label) | Approximate Refusal Rate (%) | Bar Position and Height |
| :--- | :--- | :--- |
| **davinci** | ~33 | The leftmost bar. Its top sits slightly above the 30% gridline. |
| **OPT-1.3B** | ~38 | The second bar from the left. Its top is closer to the 40% gridline than to the 30% gridline. |
| **text-davinci-003** | ~43 | The third bar. Its top is clearly above the 40% gridline. |
| **flan-t5-xxl** | ~38 | The fourth bar. Its height appears visually identical to the `OPT-1.3B` bar. |
| **ChatGPT** | ~40 | The fifth bar. Its top aligns almost exactly with the 40% gridline. |
| **GPT-4** | ~55 | The rightmost and tallest bar. Its top extends well above the 50% gridline, indicating a value beyond the labeled scale. |

**Trend Verification:** The data series does not follow a strict monotonic trend. The refusal rate increases from `davinci` to `text-davinci-003`, then dips for `flan-t5-xxl`, rises slightly for `ChatGPT`, and finally jumps sharply for `GPT-4`.
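The trend check can be made explicit by computing the change between each pair of adjacent bars. This is a minimal sketch that assumes the approximate values from the table above (visual estimates, not published figures); the function names are hypothetical.

```python
# Approximate refusal rates (%) read off the chart, in left-to-right bar order.
# These are visual estimates from the table above, not published figures.
RATES = [
    ("davinci", 33),
    ("OPT-1.3B", 38),
    ("text-davinci-003", 43),
    ("flan-t5-xxl", 38),
    ("ChatGPT", 40),
    ("GPT-4", 55),
]


def step_changes(rates):
    """Return (left_model, right_model, change) for each pair of adjacent bars."""
    return [
        (left_name, right_name, right_val - left_val)
        for (left_name, left_val), (right_name, right_val) in zip(rates, rates[1:])
    ]


def is_non_decreasing(rates):
    """True if no bar is shorter than the bar immediately to its left."""
    return all(change >= 0 for _, _, change in step_changes(rates))


if __name__ == "__main__":
    for left, right, change in step_changes(RATES):
        print(f"{left:>16} -> {right:<16} {change:+d} pts")
    print("non-decreasing left to right:", is_non_decreasing(RATES))
```

With these estimates the only negative step is `text-davinci-003 -> flan-t5-xxl` (-5 points), which is what makes the series non-monotonic; the largest step is the +15 jump to `GPT-4`.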

### Key Observations

1. **Highest Refusal Rate:** `GPT-4` has a markedly higher refusal rate (~55%) than every other model and is the only one whose bar extends past the 50% gridline at the top of the labeled scale.

2. **Cluster of Mid-Range Models:** Four models (`OPT-1.3B`, `text-davinci-003`, `flan-t5-xxl`, `ChatGPT`) cluster in the 38–43% range.

3. **Identical Rates:** The bars for `OPT-1.3B` and `flan-t5-xxl` appear to be of identical height, suggesting their refusal rates are approximately equal (~38%).

4. **Lowest Refusal Rate:** The `davinci` model shows the lowest refusal rate at approximately 33%.
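The four observations above can be derived mechanically from the estimated values. The sketch below assumes the approximate readings from the table (not published figures); `summarize`, `AXIS_MAX` and the `tie_tolerance` parameter are hypothetical names introduced for illustration.

```python
from itertools import combinations

# Approximate refusal rates (%) read from the chart; visual estimates only.
RATES = {
    "davinci": 33,
    "OPT-1.3B": 38,
    "text-davinci-003": 43,
    "flan-t5-xxl": 38,
    "ChatGPT": 40,
    "GPT-4": 55,
}
AXIS_MAX = 50  # top of the labeled y-axis scale


def summarize(rates, axis_max=AXIS_MAX, tie_tolerance=0):
    """Derive the chart's headline observations from the approximate rates.

    tie_tolerance (hypothetical): bars whose estimates differ by at most this
    many percentage points are reported as a tie.
    """
    highest = max(rates, key=rates.get)
    lowest = min(rates, key=rates.get)
    # Everything except the two extremes forms the mid-range cluster.
    middle = [m for m in rates if m not in (highest, lowest)]
    middle_values = [rates[m] for m in middle]
    return {
        "highest": (highest, rates[highest]),
        "lowest": (lowest, rates[lowest]),
        "above_axis": [m for m, v in rates.items() if v > axis_max],
        "middle": middle,
        "middle_range": (min(middle_values), max(middle_values)),
        "ties": [
            (a, b)
            for a, b in combinations(rates, 2)
            if abs(rates[a] - rates[b]) <= tie_tolerance
        ],
    }


if __name__ == "__main__":
    for key, value in summarize(RATES).items():
        print(f"{key}: {value}")
```

Because the inputs are eyeballed, the tie detection is sensitive to the tolerance: with `tie_tolerance=2`, `ChatGPT` (~40%) would also pair with both ~38% models.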

### Interpretation

This chart likely illustrates the "safety" or "alignment" behavior of various large language models (LLMs) when presented with a specific set of prompts (the nature of which is not specified in the image). A higher "Refused to Answer" rate can indicate more conservative safety filters, better training to avoid harmful content, or a narrower scope of permissible topics.

* **What the data suggests:** Among the OpenAI models shown (`davinci`, `text-davinci-003`, `ChatGPT`, `GPT-4`), refusal rates broadly rise from the oldest to the newest, though not monotonically: `ChatGPT` (~40%) sits slightly below `text-davinci-003` (~43%). This pattern may reflect iterative changes in safety training over time, and the high rate for `GPT-4` could indicate more extensive safety tuning than in its predecessors and contemporaries.

* **Relationships:** `OPT-1.3B` (a model from Meta) and `flan-t5-xxl` (a model from Google) show nearly identical rates despite coming from different organizations and using different architectures (decoder-only versus encoder-decoder). With a single, unspecified benchmark this may be coincidental; at most it is weak evidence that different research groups converge on similar levels of caution for certain evaluations.

* **Anomaly/Notable Point:** The sharp increase for `GPT-4` is the most salient feature. Without knowing the evaluation dataset, it is unclear whether this represents a superior safety capability, an overly restrictive filter, or a difference in how the model interprets "refusal." That its bar extends past the top of the labeled scale underscores its outlier status in this comparison.
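The claim of a general increase within the OpenAI family can be checked the same way, by walking the models in release order and flagging any step where the rate falls. This sketch assumes the approximate table values and assumes the ordering `davinci`, `text-davinci-003`, `ChatGPT`, `GPT-4` reflects release chronology; `family_trend` and `OPENAI_RELEASE_ORDER` are hypothetical names.

```python
# Approximate refusal rates (%) read from the chart; visual estimates only.
RATES = {
    "davinci": 33,
    "OPT-1.3B": 38,
    "text-davinci-003": 43,
    "flan-t5-xxl": 38,
    "ChatGPT": 40,
    "GPT-4": 55,
}

# Assumption: OpenAI models in release order, oldest first.
OPENAI_RELEASE_ORDER = ["davinci", "text-davinci-003", "ChatGPT", "GPT-4"]


def family_trend(rates, sequence):
    """Return the net change across the sequence and every step where the rate falls."""
    values = [rates[m] for m in sequence]
    net_change = values[-1] - values[0]
    dips = [
        (older, newer, rates[newer] - rates[older])
        for older, newer in zip(sequence, sequence[1:])
        if rates[newer] < rates[older]
    ]
    return net_change, dips


if __name__ == "__main__":
    net, dips = family_trend(RATES, OPENAI_RELEASE_ORDER)
    print(f"net change {OPENAI_RELEASE_ORDER[0]} -> {OPENAI_RELEASE_ORDER[-1]}: {net:+d} pts")
    for older, newer, change in dips:
        print(f"dip: {older} -> {newer} ({change:+d} pts)")
```

On these estimates the family gains about 22 points overall, with a single small dip (about 3 points) from `text-davinci-003` to `ChatGPT`, so "general increase" is a fair summary but "steady increase" would not be.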