## Heatmaps: MLP and Attention Masks Across Clusters

### Overview

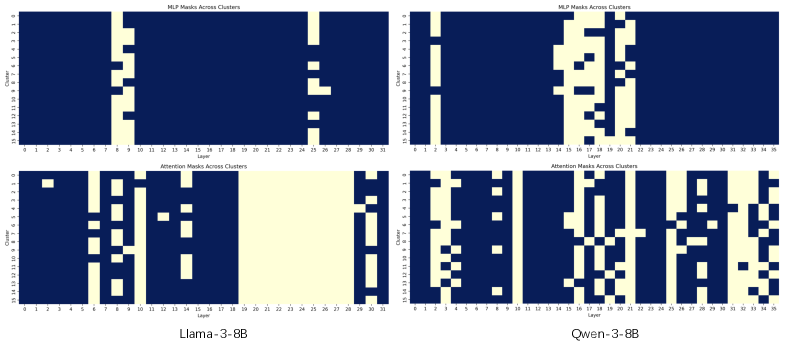

The image presents four heatmaps, arranged in a 2x2 grid. Each heatmap visualizes the distribution of masks (MLP or Attention) across clusters for different layers. The two models being compared are "Llama-3-8B" (bottom-left) and "Qwen-3-8B" (bottom-right). The top row shows "MLP Masks Across Clusters" and the bottom row shows "Attention Masks Across Clusters". The x-axis represents the "Layer" and the y-axis represents the "Cluster". The heatmaps use a two-color scheme: dark blue for low values and light yellow/white for high values.

### Components/Axes

* **Title (Top-Left):** "MLP Masks Across Clusters"

* **Title (Top-Right):** "MLP Masks Across Clusters"

* **Title (Bottom-Left):** "Attention Masks Across Clusters"

* **Title (Bottom-Right):** "Attention Masks Across Clusters"

* **X-axis Label (All):** "Layer" - Scale ranges from 0 to 36.

* **Y-axis Label (All):** "Cluster" - Scale ranges from 0 to 32.

* **Model Labels (Bottom):** "Llama-3-8B" and "Qwen-3-8B"

* **Color Scale:** Dark Blue (low value) to Light Yellow/White (high value).

### Detailed Analysis or Content Details

**1. Llama-3-8B - MLP Masks Across Clusters (Top-Left)**

* The heatmap shows sparse activation of MLP masks.

* There are clusters with high activation around layers 4-7, 12-14, 18-20, and 26-28.

* Specifically, high activation (white/yellow) is observed at:

* Cluster 1, Layers 4-7

* Cluster 6, Layers 12-14

* Cluster 10, Layers 18-20

* Cluster 24, Layers 26-28

* Most other cells are dark blue, indicating low activation.

**2. Qwen-3-8B - MLP Masks Across Clusters (Top-Right)**

* Similar to Llama-3-8B, this heatmap also shows sparse activation.

* High activation is observed in different clusters and layers compared to Llama-3-8B.

* Specifically, high activation (white/yellow) is observed at:

* Cluster 1, Layers 1-3

* Cluster 3, Layers 6-8

* Cluster 7, Layers 12-15

* Cluster 11, Layers 18-21

* Cluster 25, Layers 27-30

* Most other cells are dark blue, indicating low activation.

**3. Llama-3-8B - Attention Masks Across Clusters (Bottom-Left)**

* The heatmap shows a more distributed activation pattern compared to the MLP masks.

* High activation (white/yellow) is observed at:

* Cluster 1, Layers 1-3

* Cluster 6, Layers 6-8

* Cluster 10, Layers 12-14

* Cluster 16, Layers 18-20

* Cluster 24, Layers 26-28

* There are also some scattered activations in other clusters and layers.

**4. Qwen-3-8B - Attention Masks Across Clusters (Bottom-Right)**

* This heatmap shows a very different activation pattern compared to Llama-3-8B.

* High activation (white/yellow) is observed at:

* Cluster 1, Layers 1-3

* Cluster 3, Layers 6-8

* Cluster 7, Layers 12-15

* Cluster 11, Layers 18-21

* Cluster 25, Layers 27-30

* There are also some scattered activations in other clusters and layers.

### Key Observations

* Both models exhibit sparse activation of MLP masks.

* The attention masks show a more distributed activation pattern.

* The activation patterns differ significantly between the two models for both MLP and attention masks.

* Qwen-3-8B appears to have more consistent activation across layers in the attention mask heatmap.

* Llama-3-8B shows more concentrated activation in specific clusters for MLP masks.

### Interpretation

The heatmaps provide insights into how the MLP and attention mechanisms are utilized within each model across different layers and clusters. The differences in activation patterns suggest that the two models may have different internal representations and processing strategies. The sparse activation of MLP masks could indicate that these layers are only selectively engaged during processing. The more distributed activation of attention masks suggests that attention mechanisms are more broadly utilized. The variations between Llama-3-8B and Qwen-3-8B highlight the diversity in model architectures and training procedures. Further analysis would be needed to determine the functional implications of these differences in activation patterns. The data suggests that the models are not identical in their internal workings, even though they are both large language models. The differing patterns could be related to the datasets they were trained on, the specific architectural choices made during development, or the optimization algorithms used during training.