TECHNICAL ASSET FINGERPRINT

c4b04752d12dd3ffddd5e8f3

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

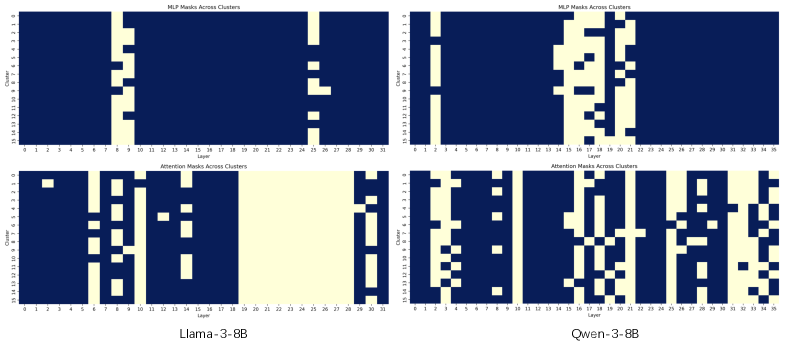

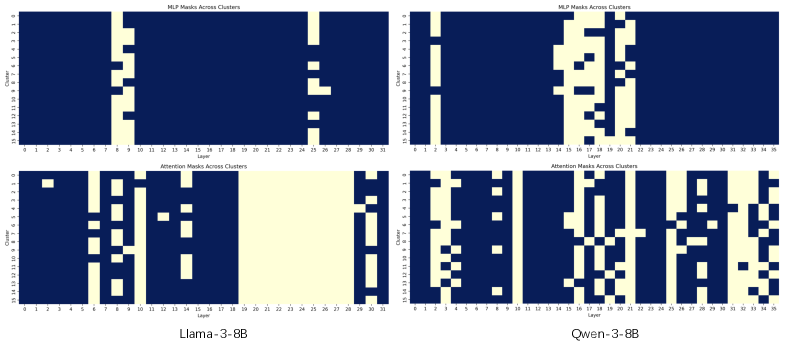

## Heatmap Composite: Neural Network Layer Activation Masks

### Overview

The image displays a 2x2 grid of four binary heatmaps (or masks) visualizing the activation patterns across layers and clusters for two large language models: **Llama-3-8B** (left column) and **Qwen-3-8B** (right column). The top row shows masks for Multi-Layer Perceptron (MLP) components, and the bottom row shows masks for Attention components. Each heatmap plots "Cluster" (y-axis) against "Layer" (x-axis), with binary values represented by color: dark blue (value 0, masked/inactive) and light yellow (value 1, active).

### Components/Axes

* **Overall Layout:** A 2x2 grid of subplots.

* **Top-Left:** Title: "MLP Masks Across Clusters" (for Llama-3-8B).

* **Top-Right:** Title: "MLP Masks Across Clusters" (for Qwen-3-8B).

* **Bottom-Left:** Title: "Attention Masks Across Clusters" (for Llama-3-8B).

* **Bottom-Right:** Title: "Attention Masks Across Clusters" (for Qwen-3-8B).

* **Footer Labels:** "Llama-3-8B" centered below the left column. "Qwen-3-8B" centered below the right column.

* **Axes (Identical for all four subplots):**

* **X-axis:** Label: "Layer". Scale: Linear, from 0 to 31 (Llama) or 0 to 35 (Qwen), with major tick marks every 1 unit and numerical labels every 1-2 units.

* **Y-axis:** Label: "Cluster". Scale: Linear, from 0 to 15, with major tick marks and numerical labels for every integer.

* **Color Legend (Implied):** No explicit legend box. The binary state is encoded by color:

* **Dark Blue:** Represents 0 (Masked / Inactive).

* **Light Yellow/Cream:** Represents 1 (Active / Selected).

### Detailed Analysis

**1. Llama-3-8B - MLP Masks (Top-Left):**

* **Trend:** Activation is highly localized and sparse.

* **Pattern:** Two primary vertical bands of activation (light yellow).

* **Band 1:** A narrow, continuous vertical stripe spanning all 16 clusters (y=0 to 15) at approximately **Layer 8**.

* **Band 2:** A fragmented vertical stripe, active in most but not all clusters, located between **Layers 24-26**. Activation here is less consistent across clusters than in Band 1.

* The vast majority of the layer-cluster space (all other layers and the space between bands) is dark blue (0).

**2. Qwen-3-8B - MLP Masks (Top-Right):**

* **Trend:** Activation is more distributed and complex than in Llama.

* **Pattern:** Multiple vertical and block-like structures.

* A thin, continuous vertical stripe at **Layers 1-2**.

* A large, dense block of activation spanning **Layers ~14 to 22**. Within this block, activation is not uniform; it forms a complex, interconnected pattern across clusters, resembling a maze or circuit.

* Scattered, isolated active pixels or small clusters in later layers (e.g., around Layer 28).

* Shows significantly more active parameters (light yellow area) compared to Llama's MLP mask.

**3. Llama-3-8B - Attention Masks (Bottom-Left):**

* **Trend:** Activation forms distinct, blocky horizontal and vertical structures.

* **Pattern:** Characterized by rectangular blocks and stripes.

* A small, isolated active block at **Layers 1-2, Clusters 13-14**.

* A prominent vertical stripe at **Layers 6-10**, active in most clusters.

* A large, solid rectangular block of activation spanning **Layers ~18 to 27** across all 16 clusters. This is the most dominant feature.

* A final vertical stripe at **Layers 28-30**.

* The pattern suggests structured, possibly head-specific, attention patterns that are consistent across many layers in the central block.

**4. Qwen-3-8B - Attention Masks (Bottom-Right):**

* **Trend:** The most complex and dense pattern of all four plots.

* **Pattern:** A highly intricate, interconnected network of active pixels.

* Activation is widespread across nearly the entire layer range (0-35).

* No single large, solid block like in Llama. Instead, it features a dense web of vertical lines, horizontal connections, and checkerboard-like patterns.

* Notable dense vertical stripes appear around **Layers 4-5, 10-12, 18-20, and 28-30**.

* The pattern suggests a highly distributed and interdependent attention mechanism where many heads across many layers are active and potentially interacting.

### Key Observations

1. **Model Dichotomy:** Llama-3-8B exhibits **sparse, localized, and block-structured** activation masks for both MLP and Attention. Qwen-3-8B exhibits **dense, distributed, and intricately connected** activation masks.

2. **Component Differences:** For both models, the Attention masks are generally more complex and cover a wider range of layers than their corresponding MLP masks.

3. **Llama's Central Block:** The massive, solid block in Llama's Attention mask (Layers 18-27) is a striking anomaly, indicating a phase where nearly all attention heads across all clusters are uniformly active.

4. **Qwen's MLP Complexity:** Qwen's MLP mask shows a level of internal structure (the maze-like block) that is absent in Llama's simple vertical bands.

### Interpretation

This visualization provides a comparative "fingerprint" of the internal routing or activation sparsity in two different 8B-parameter language models. The masks likely represent some form of **conditional computation** or **mixture-of-experts (MoE)** routing, where only a subset of parameters (clusters/heads) are activated for given inputs or tasks.

* **Llama-3-8B's Strategy:** Appears to rely on **specialization and isolation**. Specific layers (e.g., Layer 8 for MLP, Layers 18-27 for Attention) are designated as "workhorse" layers that handle processing for all clusters. This could imply a more sequential or staged processing pipeline.

* **Qwen-3-8B's Strategy:** Appears to rely on **distributed collaboration**. Activation is spread across many layers with complex interconnections, suggesting that processing is shared and coordinated among many components simultaneously. This might allow for more nuanced or context-dependent computations.

* **Architectural Insight:** The stark contrast suggests fundamental differences in model architecture, training objectives, or the learned optimization for efficiency. Llama's pattern might be more interpretable due to its block structure, while Qwen's pattern might represent a more highly optimized but opaque system. The density of Qwen's masks also implies a potentially higher active parameter count during inference for similar tasks, which has implications for computational cost and capability.

DECODING INTELLIGENCE...