
## Bar Chart: MLE-bench (AIDE) Success Rates

### Overview

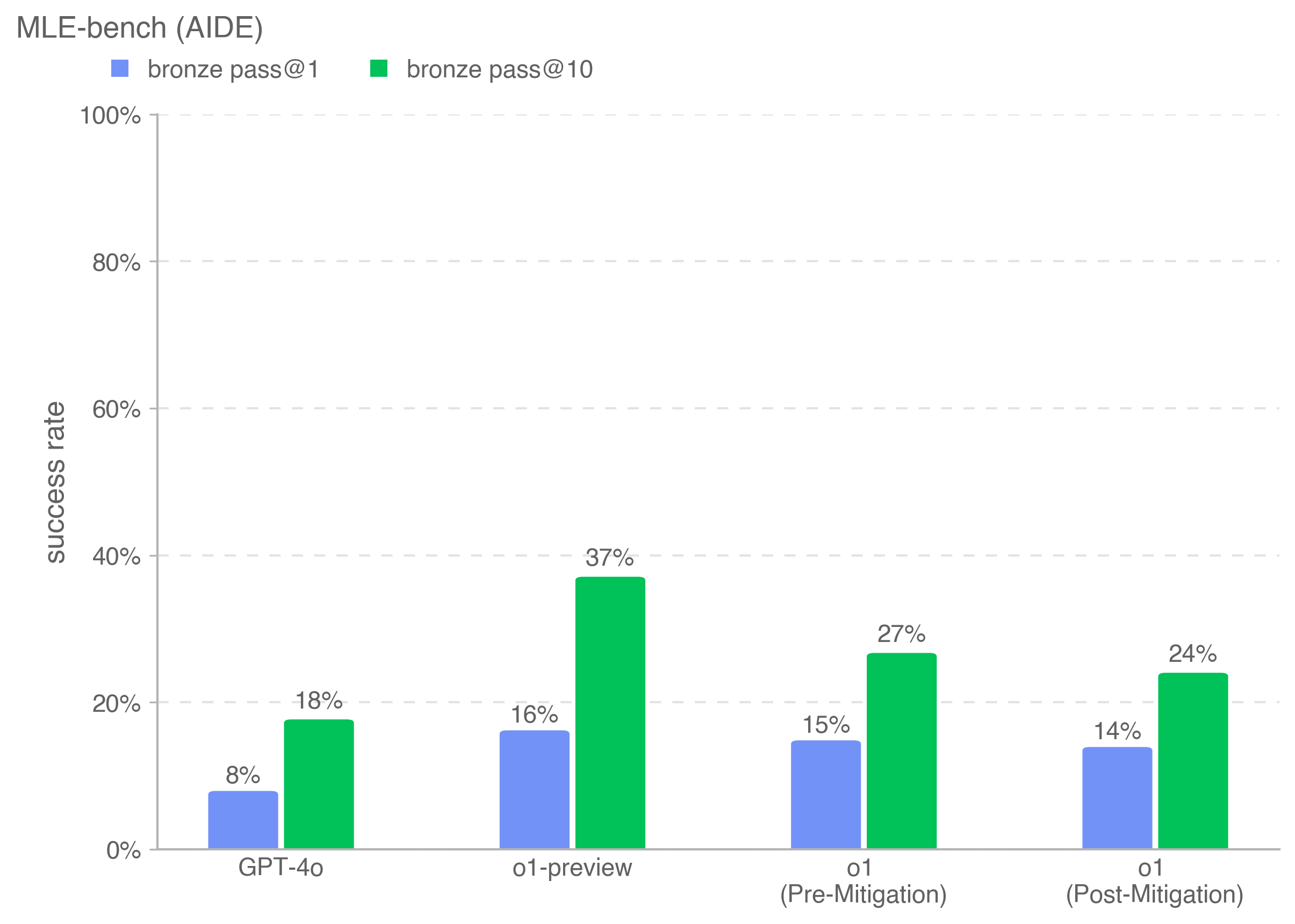

This bar chart displays the success rates of different models (GPT-4o, o1-preview, o1 (Pre-Mitigation), o1 (Post-Mitigation)) on the MLE-bench (AIDE) benchmark. The success rates are presented for two metrics: "bronze pass@1" and "bronze pass@10". Each model has two bars representing these two metrics.

### Components/Axes

* **Title:** MLE-bench (AIDE)

* **X-axis:** Model Names - GPT-4o, o1-preview, o1 (Pre-Mitigation), o1 (Post-Mitigation)

* **Y-axis:** Success Rate (ranging from 0% to 100% with increments of 20%)

* **Legend:**

  * Blue: bronze pass@1

  * Green: bronze pass@10

* **Gridlines:** Horizontal dashed lines at 20%, 40%, 60%, 80%, and 100% to aid in reading values.

### Detailed Analysis

The chart consists of four groups of bars, one for each model. Within each group, there's a blue bar representing "bronze pass@1" and a green bar representing "bronze pass@10".

* **GPT-4o:**

  * bronze pass@1: Approximately 8%

  * bronze pass@10: Approximately 18%

  * Trend: The green bar is roughly twice the height of the blue bar (a gap of about 10 percentage points), indicating a higher success rate for bronze pass@10.

* **o1-preview:**

  * bronze pass@1: Approximately 16%

  * bronze pass@10: Approximately 37%

  * Trend: As with GPT-4o, the green bar is more than twice the height of the blue bar. The gap of about 21 percentage points is the largest in the chart.

* **o1 (Pre-Mitigation):**

  * bronze pass@1: Approximately 15%

  * bronze pass@10: Approximately 27%

  * Trend: Again, the green bar is higher than the blue bar, by about 12 percentage points.

* **o1 (Post-Mitigation):**

  * bronze pass@1: Approximately 14%

  * bronze pass@10: Approximately 24%

  * Trend: The green bar is higher than the blue bar by about 10 percentage points. This matches GPT-4o's absolute gap and is the smallest relative gain (about 1.7x) of the four models.
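The per-model gaps can be tabulated with a short script. This is a minimal sketch: the numbers are the approximate readings listed in this section, not exact benchmark results, and the names `readings` and `gap_and_ratio` are illustrative.

```python
# Approximate success rates (percent) as read off the chart in this section.
# These are visual estimates, not exact benchmark results.
readings = {
    "GPT-4o": (8, 18),
    "o1-preview": (16, 37),
    "o1 (Pre-Mitigation)": (15, 27),
    "o1 (Post-Mitigation)": (14, 24),
}


def gap_and_ratio(pass1, pass10):
    """Return (absolute gap in percentage points, pass@10 / pass@1 ratio)."""
    return pass10 - pass1, pass10 / pass1


summary = {model: gap_and_ratio(p1, p10) for model, (p1, p10) in readings.items()}

for model, (gap, ratio) in summary.items():
    print(f"{model:<22} gap = {gap:>2} pts   ratio = {ratio:.2f}x")
```

On these readings the absolute gap is largest for o1-preview (about 21 points), while GPT-4o and o1 (Post-Mitigation) both show a gap of about 10 points.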

### Key Observations

* The "bronze pass@10" metric consistently yields higher success rates than the "bronze pass@1" metric across all models.

* o1-preview demonstrates the highest success rate for "bronze pass@10" at approximately 37%.

* GPT-4o has the lowest success rate for both metrics.

* Mitigation appears to have slightly decreased both metrics for o1: "bronze pass@1" fell by about 1 percentage point (15% to 14%), and "bronze pass@10" fell by about 3 percentage points (27% to 24%).
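The pre- versus post-mitigation comparison can be checked with a few lines of arithmetic. This is a sketch using the approximate readings from this section; the variable names are illustrative.

```python
# Approximate readings (percent) for the two o1 variants; visual estimates only.
pre_mitigation = {"bronze pass@1": 15, "bronze pass@10": 27}
post_mitigation = {"bronze pass@1": 14, "bronze pass@10": 24}

# Change in percentage points from pre- to post-mitigation (negative = decrease).
delta = {
    metric: post_mitigation[metric] - pre_mitigation[metric]
    for metric in pre_mitigation
}

for metric, change in delta.items():
    print(f"{metric}: {change:+d} percentage points")
```

Both changes are only a few points, which is close to the precision with which values can be read off a bar chart, so they should be treated as indicative rather than exact.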

### Interpretation

The data suggest that allowing more attempts (the @10 metric) roughly doubles the success rate on the MLE-bench (AIDE) benchmark for every model. This is expected, as more attempts provide more opportunities to achieve a passing result. The comparison between the pre- and post-mitigation versions of o1 indicates that the mitigation strategy, while potentially improving robustness in other areas, may have slightly reduced performance on this benchmark, although the differences (about 1 to 3 percentage points) are small relative to the precision of values read off a chart. The gap between the two metrics is largest for o1-preview (about 16% versus 37%), suggesting that this model benefits most from multiple attempts. GPT-4o's lower success rates on both metrics suggest it is less effective on this benchmark than the o1 models, or that it would need different prompting or fine-tuning to reach comparable performance. Overall, the chart highlights the impact of the number of attempts and the potential trade-offs associated with mitigation.
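One way to put the pass@1 to pass@10 gain in context is to compare it with a simple baseline. The sketch below assumes, purely for illustration, that every attempt succeeds independently with the same probability `p` equal to the pass@1 rate, so that pass@k would be `1 - (1 - p)**k`. The source does not say how the benchmark computes its metrics, so this is not a reconstruction of that method, and the helper name `independent_pass_at_k` is hypothetical.

```python
def independent_pass_at_k(p, k):
    """pass@k if each of k attempts succeeded independently with probability p.

    Illustrative baseline only: it ignores that some tasks are much harder
    than others, so a real aggregate pass@k is typically lower.
    """
    return 1.0 - (1.0 - p) ** k


# Approximate chart readings as fractions: (pass@1, observed pass@10).
readings = {
    "GPT-4o": (0.08, 0.18),
    "o1-preview": (0.16, 0.37),
    "o1 (Pre-Mitigation)": (0.15, 0.27),
    "o1 (Post-Mitigation)": (0.14, 0.24),
}

baseline = {m: independent_pass_at_k(p1, 10) for m, (p1, _) in readings.items()}

for model, (p1, observed) in readings.items():
    print(f"{model:<22} baseline = {baseline[model]:.0%}   observed = {observed:.0%}")
```

On these readings the observed pass@10 values sit well below the independence baseline for every model, which is consistent with successes being concentrated in a subset of tasks rather than spread evenly across attempts.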