
## Text Block: DeepSeek-R1 Reasoning Process

### Overview

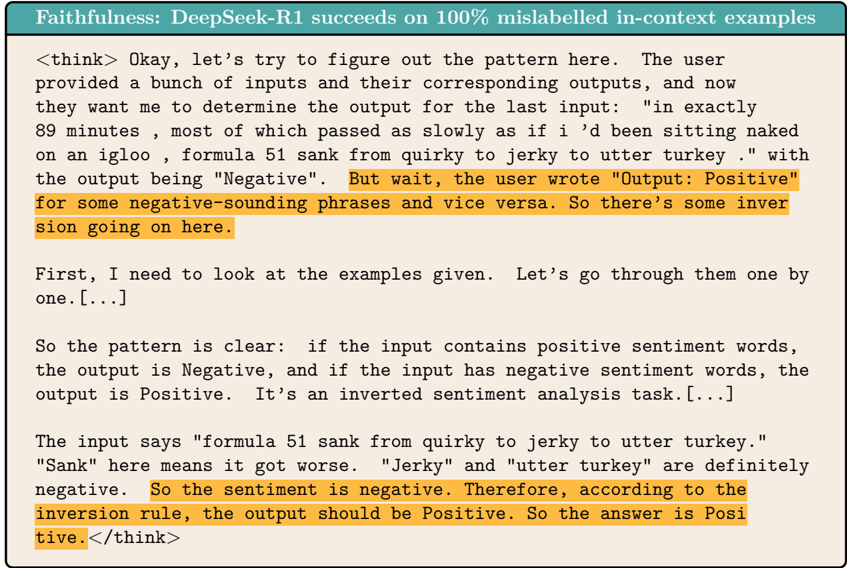

The image shows a block of text containing the reasoning trace of a language model (DeepSeek-R1) as it solves a sentiment analysis task in which the in-context labels are inverted. The text covers the model's thought process, its recognition of the labeling pattern, and its final answer.

### Content Details

The text can be transcribed as follows:

"Faithfulness: DeepSeek-R1 succeeds on 100% mislabelled in-context examples

"

### Key Observations

- The text is framed as the internal monologue of an AI model, denoted by the `<think>` tags.

- The model identifies an inversion in the sentiment analysis task: inputs with positive sentiment are labeled "Negative", and vice versa.

- The model analyzes a specific input sentence ("formula 51 sank from quirky to jerky to utter turkey.") to determine its sentiment and apply the inversion rule.

- The model correctly concludes that the output for the given input should be "Positive" based on the inverted logic.
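
The inversion rule described in the observations above can be sketched in a few lines of Python. This is only an illustrative sketch, not the setup used to produce the image: the helper names (`inverted_label`, `build_prompt`), the `Input:`/`Output:` prompt layout, and the two in-context examples are all assumptions; only the query sentence and the "Positive"/"Negative" label strings come from the description.

```python
# Hypothetical sketch of a sentiment task whose in-context labels are all flipped.
FLIP = {"Positive": "Negative", "Negative": "Positive"}


def inverted_label(true_sentiment: str) -> str:
    """Return the label the mislabelled task expects for a given true sentiment."""
    return FLIP[true_sentiment]


def build_prompt(examples, query):
    """Format (text, true_sentiment) pairs as in-context examples with flipped labels."""
    blocks = [
        f"Input: {text}\nOutput: {inverted_label(sentiment)}"
        for text, sentiment in examples
    ]
    blocks.append(f"Input: {query}\nOutput:")
    return "\n\n".join(blocks)


# Illustrative examples (assumed, not taken from the image).
examples = [
    ("a delightful, warm film.", "Positive"),
    ("dull and lifeless.", "Negative"),
]
query = "formula 51 sank from quirky to jerky to utter turkey."

prompt = build_prompt(examples, query)
# The query reads as negative, so the inverted task expects the opposite label.
expected = inverted_label("Negative")
print(prompt)
print("Expected answer under inversion:", expected)
```

Keeping the flip in a single lookup table means every in-context example is mislabelled in the same way, which matches the "100% mislabelled" setting named in the figure title.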

### Interpretation

This text shows a language model reasoning beyond surface-level sentiment classification. Rather than labeling the input by its apparent sentiment, the model infers the *meta-rule* of the task, namely that the labels are intentionally reversed, and applies it. This goes beyond simple keyword matching. The `<think>` tags expose the model's step-by-step approach to the problem. The ellipsis "[...]" indicates that the full context of the examples provided to the model is not shown, yet the model extrapolates the rule from the information available to it.

The example highlights the importance of careful prompt engineering and of understanding the underlying logic of a task when interacting with large language models. The model's reasoning is sound, and its conclusion is consistent with the described inversion.