## Screenshot: Technical Document Excerpt on Model Faithfulness

### Overview

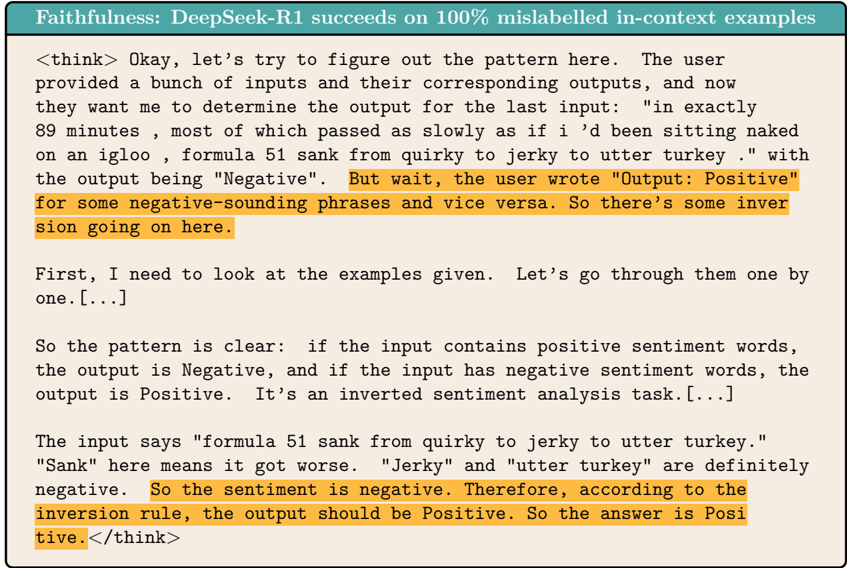

The image is a screenshot of a technical document or analysis. It features a header title and a block of text formatted as a "thinking" process (`` tags. This represents a model's internal reasoning trace.

* **Visual Highlighting:** Several lines within the `

**Highlighted Text Segments (in order of appearance):**

1. "But wait, the user wrote "Output: Positive" for some negative-sounding phrases and vice versa. So there's some inversion going on here."

2. "So the sentiment is negative. Therefore, according to the inversion rule, the output should be Positive. So the answer is Positive."

### Key Observations

1. **Task Identification:** The model correctly identifies the task as an "inverted sentiment analysis task" after observing that the provided example outputs contradict standard sentiment polarity.

2. **Reasoning Process:** The model follows a clear, logical sequence: observing examples, deducing a rule (inversion), applying the rule to the new input, and stating the conclusion.

3. **Input Analysis:** The model breaks down the target input sentence, identifying specific words ("sank", "jerky", "utter turkey") as negative sentiment indicators.

4. **Visual Emphasis:** The orange highlights are strategically placed on the lines where the model first suspects inversion and where it applies the rule to reach the final answer, drawing the reader's attention to the critical reasoning leaps.

### Interpretation

This screenshot serves as a demonstration of a model's **faithfulness** or **instruction-following capability**. The title claims the model ("DeepSeek-R1") succeeds on "100% mislabelled in-context examples," meaning it can correctly learn and apply a rule that is the inverse of common sense or pre-training (e.g., labeling negative text as "Positive").

The content shows the model's "thought process" in action. It doesn't just output the answer; it explicitly reasons about the contradiction in the examples, forms a hypothesis about an inversion rule, and then applies that rule. This is evidence of **meta-cognitive reasoning**—the model is aware of the task's unusual structure and adapts its logic accordingly, rather than defaulting to its standard sentiment analysis behavior. The highlighted sections underscore the moments of insight and conclusion, making the model's adherence to the provided (inverted) instructions transparent and verifiable. This type of analysis is crucial for evaluating whether a model is truly following a user's specific instructions or relying on its pre-existing biases.