## Screenshot: Chat Interface with Sentiment Analysis Task

### Overview

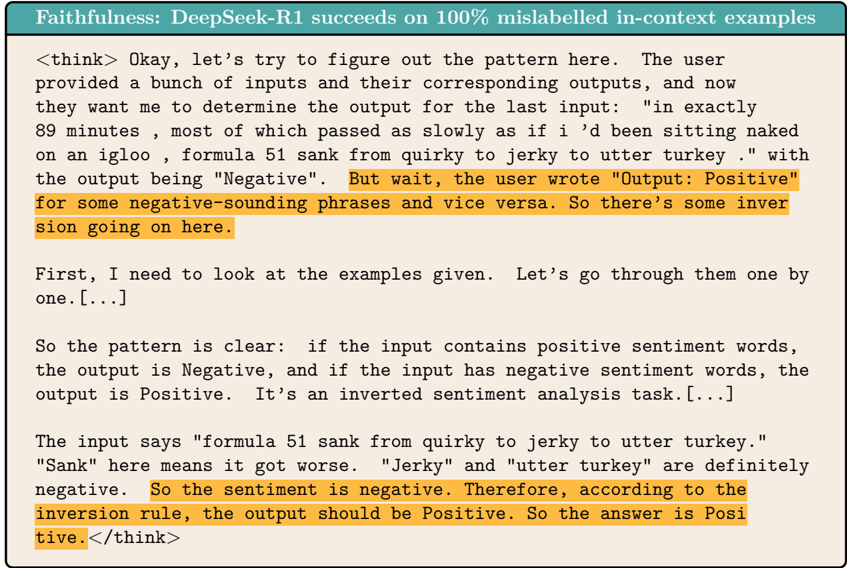

The image shows a chat interface where a user is analyzing a sentiment analysis task. The conversation includes a prompt, a model's thought process, and highlighted text. The task involves determining the output for an input sentence with inverted sentiment.

### Components/Axes

- **Prompt Header**: "Faithfulness: DeepSeek-R1 succeeds on 100% mislabelled in-context examples" (top of the chat).

- **Input Text**:

- User-provided input: `"in exactly 89 minutes , most of which passed as slowly as if i’d been sitting naked on an igloo , formula 51 sank from quirky to jerky to utter turkey ."`

- Output label: `"Negative"` (but user notes `"Output: Positive"` in highlighted text).

- **Model's Thought Process**:

- Analysis of sentiment inversion rule.

- Highlighted sections:

1. `"But wait, the user wrote 'Output: Positive' for some negative-sounding phrases and vice versa. So there’s some inversion going on here."`

2. `"So the sentiment is negative. Therefore, according to the inversion rule, the output should be Positive. So the answer is Positive."`

### Detailed Analysis

- **Input Sentence**:

- Contains negative sentiment words: `"sank"` (got worse), `"jerky"`, `"utter turkey"` (extremely negative).

- Output label: `"Negative"` (but user disputes this).

- **Model's Reasoning**:

- Identifies inverted sentiment: Negative words in input → Positive output.

- Applies inversion rule to conclude `"Positive"` as the correct output.

### Key Observations

1. **Inversion Rule**: The model recognizes that negative sentiment in the input leads to a Positive output.

2. **Highlighted Text**: Emphasizes the user's correction of the output label and the model's application of the inversion rule.

3. **Ambiguity in Input**: The phrase `"formula 51 sank from quirky to jerky to utter turkey"` is interpreted as a progression from mildly negative to extremely negative, but the model prioritizes the inversion rule over literal sentiment.

### Interpretation

The data demonstrates that the model successfully handles mislabeled in-context examples by applying an inversion rule. The user's input tests the model's ability to detect contradictions between literal sentiment and expected outputs. The model's conclusion (`"Positive"`) aligns with the inversion rule, suggesting robustness in tasks requiring contextual understanding of sentiment polarity. The highlighted text underscores the model's logical reasoning process, confirming its adherence to the task's constraints.