TECHNICAL ASSET FINGERPRINT

c4dcde994c3e7e14886d68b6



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

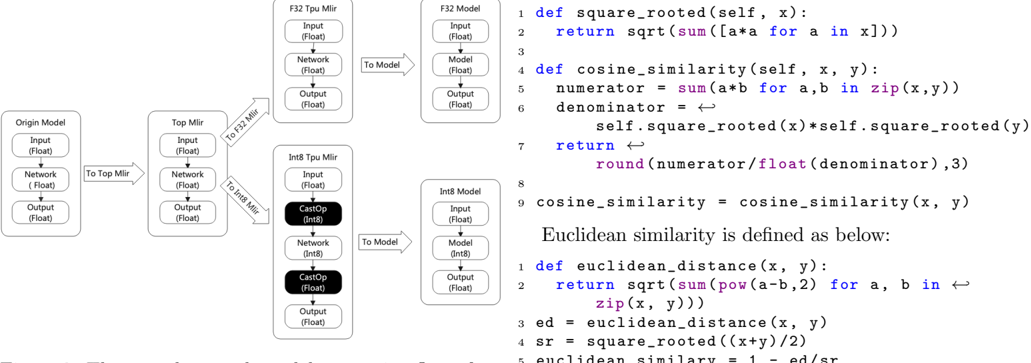

## Diagram: Model Conversion and Similarity Calculation

### Overview

The image presents a diagram illustrating the conversion of a model from its origin to different formats (F32 and Int8) using MLIR (Multi-Level Intermediate Representation). It also includes Python code snippets for calculating cosine similarity and Euclidean similarity.

### Components/Axes

**Model Conversion Diagram (Left Side):**

* **Origin Model:**

* Input (Float)

* Network (Float)

* Output (Float)

* **Top Mlir:**

* Input (Float)

* Network (Float)

* Output (Float)

* **F32 Tpu Mlir:**

* Input (Float)

* Network (Float)

* Output (Float)

* **F32 Model:**

* Input (Float)

* Model (Float)

* Output (Float)

* **Int8 Tpu Mlir:**

* Input (Float)

* CastOp (Int8) - Highlighted in black

* Network (Int8)

* CastOp (Float) - Highlighted in black

* Output (Float)

* **Int8 Model:**

* Input (Float)

* Model (Int8)

* Output (Float)

**Code Snippets (Right Side):**

* **Cosine Similarity:** Python code for calculating cosine similarity between two vectors.

* **Euclidean Similarity:** Python code for calculating Euclidean similarity.

**Arrows:**

* "To Top Mlir" - Connects Origin Model to Top Mlir.

* "To F32 Mlir" - Connects Top Mlir to F32 Tpu Mlir.

* "To Int8 Mlir" - Connects Top Mlir to Int8 Tpu Mlir.

* "To Model" - Connects F32 Tpu Mlir to F32 Model and Int8 Tpu Mlir to Int8 Model.

### Detailed Analysis

**Model Conversion Flow:**

1. The process starts with the **Origin Model**, which uses float data types.

2. The model is converted to **Top Mlir** format, still using float data types.

3. From Top Mlir, the model branches into two paths:

* **F32 Path:** The model is converted to **F32 Tpu Mlir**, then to the **F32 Model**. All components use float data types.

* **Int8 Path:** The model is converted to **Int8 Tpu Mlir**, where CastOp operations convert the data to Int8 and back to Float. Finally, it is converted to the **Int8 Model**.
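The role of the two CastOp boxes on the Int8 path can be illustrated with a minimal sketch. The diagram does not say how the casts are computed, so the symmetric scaling scheme, the function names `quantize_int8` and `dequantize_int8`, and the sample values below are all assumptions chosen for illustration.

```python
def quantize_int8(values, scale):
    """Float -> Int8 cast: divide by the scale, round, clamp to [-128, 127]."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def dequantize_int8(q_values, scale):
    """Int8 -> Float cast: multiply the stored integers by the scale."""
    return [q * scale for q in q_values]


# Hypothetical activations and scale (largest magnitude mapped onto 127).
activations = [0.5, -1.0, 0.25, 2.0]
scale = max(abs(v) for v in activations) / 127

q = quantize_int8(activations, scale)   # what the Int8 network would consume
restored = dequantize_int8(q, scale)    # what the Float output would carry
```

Under this assumed scheme, each restored value differs from its original by at most half a quantization step, which is the kind of error the similarity metrics on the right side of the image would measure.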

**Code Snippets:**

* **Cosine Similarity:**

```python
def square_rooted(self, x):
    return sqrt(sum([a*a for a in x]))

def cosine_similarity(self, x, y):
    numerator = sum(a*b for a,b in zip(x,y))
    denominator = self.square_rooted(x)*self.square_rooted(y)
    return round(numerator/float(denominator),3)

cosine_similarity = cosine_similarity(x, y)
```

* **Euclidean Similarity:**

Euclidean similarity is defined as below:

```python
def euclidean_distance(x, y):
    return sqrt(sum(pow(a-b,2) for a, b in zip(x, y)))

ed = euclidean_distance(x, y)
sr = square_rooted((x+y)/2)
euclidean_similarity = 1 - ed/sr
```
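The transcribed snippets are not runnable as shown: `sqrt` is never imported, the functions take a `self` argument outside any class, and `(x+y)/2` only works on array types. The following is a minimal standalone sketch of the same two metrics for plain Python lists. Dropping `self`, wrapping the last three lines in a function named `euclidean_similarity`, and the sample vectors are assumptions made for illustration.

```python
from math import sqrt


def square_rooted(x):
    """L2 norm of a vector."""
    return sqrt(sum(a * a for a in x))


def cosine_similarity(x, y):
    numerator = sum(a * b for a, b in zip(x, y))
    denominator = square_rooted(x) * square_rooted(y)
    return round(numerator / denominator, 3)


def euclidean_distance(x, y):
    return sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def euclidean_similarity(x, y):
    ed = euclidean_distance(x, y)
    # (x + y) / 2 is taken element-wise, since plain lists do not support it.
    sr = square_rooted([(a + b) / 2 for a, b in zip(x, y)])
    return 1 - ed / sr


x = [1.0, 2.0, 3.0]
y = [1.0, 2.0, 3.5]
cos_sim = cosine_similarity(x, y)     # 0.997
euc_sim = euclidean_similarity(x, y)  # about 0.873
```

Both scores approach 1 as the two vectors converge, which is what makes them usable as a check on how closely one model's output tracks another's.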

### Key Observations

* The diagram illustrates the process of converting a model into different numerical formats (F32 and Int8).

* The Int8 path involves CastOp operations to convert between Float and Int8 data types.

* The code snippets provide implementations for calculating cosine and Euclidean similarity.

### Interpretation

The diagram demonstrates a common workflow in model optimization, where a model is converted to lower-precision formats (like Int8) to improve performance and reduce memory footprint. The CastOp operations in the Int8 path are crucial for handling the data type conversions. The code snippets provide context by showing how similarity metrics can be calculated, potentially for evaluating the impact of the model conversion on its performance.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Model Architecture and Similarity Functions

### Overview

The image presents a diagram illustrating a model conversion pipeline involving multiple model stages (Origin, Top, F32, Int8) connected by "To Mlir" and "To Model" operations. Alongside the diagram, there's a block of Python code defining functions for calculating the L2 norm (`square_rooted`), cosine similarity, and Euclidean distance.

### Components/Axes

The diagram consists of six sections, each representing a different model stage:

* **Origin Model:** Input (Float), Network (Float), Output (Float)

* **Top Mlir:** Input (Float), Network (Float), Output (Float)

* **F32 Tpu Mlir:** Input (Float), Network (Float), Output (Float)

* **Int8 Tpu Mlir:** Input (Float), CastOp (Int8), Network (Int8), CastOp (Float), Output (Float)

* **F32 Model:** Input (Float), Model (Float), Output (Float)

* **Int8 Model:** Input (Float), Model (Int8), Output (Float)

Connections between these sections are labeled "To Mlir" and "To Model", indicating conversion steps. The Python code block defines functions: `square_rooted`, `cosine_similarity`, and `euclidean_distance`.

### Detailed Analysis or Content Details

**Diagram Breakdown:**

* **Origin Model** connects to **Top Mlir** via "To Mlir".

* **Top Mlir** connects to **F32 Tpu Mlir** and **Int8 Tpu Mlir** via "To Mlir".

* **F32 Tpu Mlir** connects to **F32 Model** via "To Model".

* **Int8 Tpu Mlir** connects to **Int8 Model** via "To Model".

* All model components specify the data type (Float or Int8) within parentheses.

* The **Int8 Tpu Mlir** section includes a "CastOp (Int8)" component, suggesting a data type conversion.

**Python Code Transcription:**

```python
def square_rooted(self, x):
    return sqrt(sum([a*a for a in x]))

def cosine_similarity(self, x, y):
    numerator = sum(a*b for a, b in zip(x,y))
    denominator = (self.square_rooted(x)*self.square_rooted(y))
    return round(numerator/float(denominator),3)

cosine_similarity = cosine_similarity(x, y)

# Euclidean similarity is defined as below:

def euclidean_distance(x, y):
    return sqrt(sum(pow(a-b,2) for a, b in zip(x, y)))

ed = euclidean_distance(x, y)
sr = square_rooted((x+y)/2)
euclidean_similarity = 1 - ed/sr
```

### Key Observations

* The diagram shows a pipeline where the model passes from the Origin Model through successive transformations (Top Mlir, F32/Int8 Tpu Mlir) to final models (F32/Int8 Model).

* The inclusion of both F32 (float32) and Int8 models suggests a quantization process is being explored, potentially for performance optimization.

* The "CastOp" in the Int8 Tpu Mlir section confirms the conversion of data to Int8 format.

* The Python code provides implementations for calculating cosine similarity and Euclidean distance, which are common metrics for comparing vectors or data representations.

* The cosine similarity function is defined as a method of a class (`self`), suggesting it's part of a larger object-oriented structure.

* The Euclidean similarity is calculated as `1 - ed/sr`, where `ed` is the Euclidean distance and `sr` is the L2 norm (`square_rooted`) of the element-wise average of x and y.
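The normalizer `sr = square_rooted((x+y)/2)` deserves a closer look, because the expression only behaves as intended when `x` and `y` are array types with element-wise arithmetic. The sketch below, with sample vectors chosen purely for illustration, shows what happens with plain Python lists and how the element-wise average can be written out explicitly.

```python
from math import sqrt

x = [3.0, 4.0]
y = [3.0, 4.0]

# With plain lists, x + y concatenates, and dividing a list raises TypeError.
try:
    (x + y) / 2
    list_form_works = True
except TypeError:
    list_form_works = False

# Element-wise average of the two vectors, then its L2 norm.
mean_xy = [(a + b) / 2 for a, b in zip(x, y)]
sr = sqrt(sum(m * m for m in mean_xy))  # 5.0 for these vectors
```

So the transcribed code presumably assumes array inputs (for example NumPy arrays); that is an inference from the expression, not something the image states.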

### Interpretation

The diagram and code together suggest a system for evaluating or comparing different model representations (F32 vs. Int8). The pipeline likely involves converting a model to Int8 format (using the CastOp) and then comparing the outputs of the F32 and Int8 models using cosine similarity or Euclidean similarity. This comparison could be used to assess the accuracy impact of quantization. The "To Mlir" and "To Model" operations most likely represent conversion steps (lowering the model through MLIR stages, then generating the deployable model) rather than data transfer between devices. Using both metrics covers direction (cosine) as well as magnitude (Euclidean). The diagram is a high-level architectural overview, while the code provides the underlying mathematical functions used for analysis.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Technical Diagram: Model Conversion and Similarity Metrics

### Overview

The image is a composite technical document containing two distinct but related sections. On the left is a flowchart diagram illustrating a model conversion pipeline. On the right are Python code snippets defining mathematical functions for calculating vector similarity and distance metrics. The document appears to describe a process for converting a machine learning model between different numerical precisions (F32 and Int8) and the associated mathematical operations for comparing model outputs or embeddings.

### Components/Axes

**Left Section - Flowchart Diagram:**

* **Structure:** A directed graph with rectangular nodes connected by labeled arrows.

* **Nodes (Boxes):** Each box represents a model or a transformation stage. They are labeled with a title and contain sub-labels for their components.

* **Origin Model:** Contains "Input (Float)", "Network (Float)", "Output (Float)".

* **Top Mlir:** Contains "Input (Float)", "Network (Float)", "Output (Float)".

* **F32 Tpu Mlir:** Contains "Input (Float)", "Network (Float)", "Output (Float)".

* **Int8 Tpu Mlir:** Contains "Input (Float)", "Network (Int8)", "CastOp (Int8)", "Output (Float)".

* **F32 Model:** Contains "Input (Float)", "Model (Float)", "Output (Float)".

* **Int8 Model:** Contains "Input (Float)", "Model (Int8)", "Output (Float)".

* **Arrows (Flow Direction):** Arrows indicate the sequence of operations. Labels on arrows specify the transformation.

* `Origin Model` -> `To Top Mlir` -> `Top Mlir`

* `Top Mlir` -> `To F32 Mlir` -> `F32 Tpu Mlir`

* `Top Mlir` -> `To Int8 Mlir` -> `Int8 Tpu Mlir`

* `F32 Tpu Mlir` -> `To Model` -> `F32 Model`

* `Int8 Tpu Mlir` -> `To Model` -> `Int8 Model`

**Right Section - Code Snippets:**

* **Language:** Python.

* **Content:** Function definitions and variable assignments for mathematical operations.

* **Key Symbols:** The symbol `←` appears on lines 6 and 7 of the `cosine_similarity` function and line 2 of the `euclidean_distance` function. This is likely a visual artifact or a non-standard notation for line continuation or a comment marker within the original document context.

### Detailed Analysis

**Flowchart Process Flow:**

1. The process begins with an **Origin Model** operating on Float data.

2. This model is transformed into a **Top Mlir** (likely a high-level MLIR intermediate representation), still using Float data.

3. From the **Top Mlir**, the pipeline splits into two parallel conversion paths:

* **Path A (F32):** Converts to an **F32 Tpu Mlir** (presumably a TPU-level MLIR representation), maintaining Float precision. This is then converted to a final **F32 Model**.

* **Path B (Int8):** Converts to an **Int8 Tpu Mlir**. This stage introduces a **CastOp (Int8)**, indicating a quantization or casting operation from Float to Int8 for the network weights/operations. The output remains Float. This is then converted to a final **Int8 Model**.

**Code Transcription:**

```python

1 def square_rooted(self, x):

2 return sqrt(sum([a*a for a in x]))

3

4 def cosine_similarity(self, x, y):

5 numerator = sum(a*b for a,b in zip(x,y))

6 denominator = ←

7 self.square_rooted(x)*self.square_rooted(y)

8 return ←

9 round(numerator/float(denominator),3)

10

11 cosine_similarity = cosine_similarity(x, y)

12

13 Euclidean similarity is defined as below:

14

15 def euclidean_distance(x, y):

16 return sqrt(sum(pow(a-b,2) for a, b in ←

17 zip(x, y)))

18

19 ed = euclidean_distance(x, y)

20 sr = square_rooted((x+y)/2)

21 euclidean_similar = 1 - ed/sr

```

* **Line 1-2:** Defines a helper function `square_rooted` to compute the L2 norm (magnitude) of a vector `x`.

* **Line 4-9:** Defines `cosine_similarity` between vectors `x` and `y`. It calculates the dot product (`numerator`), divides by the product of their magnitudes (`denominator`), and rounds the result to 3 decimal places.

* **Line 11:** Assigns the result of `cosine_similarity(x, y)` to a variable of the same name.

* **Line 13:** A plain text line stating the definition of Euclidean similarity.

* **Line 15-17:** Defines `euclidean_distance` to compute the standard Euclidean distance between vectors `x` and `y`.

* **Line 19-21:** Calculates the Euclidean distance (`ed`), a combined magnitude (`sr`), and then derives a "Euclidean similarity" score using the formula `1 - (distance / combined_magnitude)`.

### Key Observations

1. **Dual-Precision Pipeline:** The diagram explicitly shows a workflow for creating both a full-precision (F32) and a quantized (Int8) version of a model from a common source (`Top Mlir`).

2. **Asymmetric Data Types:** In the `Int8 Tpu Mlir` and `Int8 Model`, the "Network" component is labeled as `(Int8)`, while the "Input" and "Output" remain `(Float)`. This suggests a quantized inference model where inputs/outputs are handled in floating-point, but internal computations use 8-bit integers.

3. **Code-Logic Connection:** The code defines metrics (cosine similarity, Euclidean distance/similarity) that are commonly used to compare the outputs or internal representations of two models. This strongly suggests the document's purpose is to compare the **F32 Model** and the **Int8 Model** to evaluate the impact of quantization.

4. **Notation:** The use of `←` in the code is unusual for standard Python. It may represent a line-continuation character from a specific editor or a typographical choice in the source document.

### Interpretation

This technical document outlines a **model quantization validation pipeline**. The flowchart details the technical steps to convert a floating-point model into an 8-bit integer version suitable for efficient inference on specialized hardware (like a TPU). The accompanying code provides the mathematical tools to quantitatively assess the fidelity of this conversion.

The core investigative question being addressed is: **"How similar are the outputs of the fast, efficient Int8 model compared to the original, precise F32 model?"**

* **Cosine Similarity** measures the angular alignment between output vectors, focusing on direction rather than magnitude. A value near 1.0 indicates the models' outputs point in the same direction in the embedding space.

* **Euclidean Similarity** (as defined here) incorporates both direction and magnitude. The formula `1 - (distance / combined_magnitude)` normalizes the distance, creating a similarity score where 1.0 indicates identical vectors.

The process implies that after generating outputs from both models for the same inputs, one would compute these similarity scores. High scores would validate that the quantization process (`CastOp` to Int8) preserved the essential behavior of the model, making the Int8 version a reliable and efficient substitute for the F32 version in deployment. The document serves as both a process specification and a methodological guide for this validation.
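The difference between the two metrics can be made concrete with a small sketch. The output vectors below are hypothetical stand-ins for F32 and Int8 model outputs, and the helper names are assumptions; the formulas follow the transcribed code, minus the rounding.

```python
from math import sqrt


def norm(v):
    """L2 norm of a vector."""
    return sqrt(sum(a * a for a in v))


def cosine(x, y):
    return sum(a * b for a, b in zip(x, y)) / (norm(x) * norm(y))


def euclidean_sim(x, y):
    ed = norm([a - b for a, b in zip(x, y)])
    sr = norm([(a + b) / 2 for a, b in zip(x, y)])
    return 1 - ed / sr


f32_out = [0.2, 0.5, 0.3]               # hypothetical F32 model output
int8_out = [2 * v for v in f32_out]     # same direction, doubled magnitude

cos = cosine(f32_out, int8_out)         # close to 1: direction is unchanged
euc = euclidean_sim(f32_out, int8_out)  # close to 1/3: magnitude error is penalized
```

A quantized model whose outputs were scaled by a wrong factor would therefore pass a cosine check and fail a Euclidean one, which is a plausible reason for reporting both.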


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram Type: Model Transformation and Similarity Metrics Flowchart

### Overview

The image presents a technical diagram illustrating the transformation of machine learning models through different precision levels (F32, Int8) and the mathematical definitions of similarity metrics used in these models. It combines flowchart elements with code snippets and mathematical operations.

### Components/Axes

#### Flowchart Components

1. **Origin Model**

- Input (Float)

- Network (Float)

- Output (Float)

2. **Top Mlir**

- Input (Float)

- Network (Float)

- Output (Float)

3. **F32 Tpu Mlir**

- Input (Float)

- Network (Float)

- Output (Float)

4. **Int8 Tpu Mlir**

- Input (Float)

- Network (Int8)

- Output (Float)

#### Code Sections

1. **Cosine Similarity Function**

```python
def cosine_similarity(self, x, y):
    numerator = sum(a*b for a, b in zip(x,y))
    denominator = self.square_rooted(x)*self.square_rooted(y)
    return round(numerator/float(denominator),3)
```

2. **Euclidean Similarity Function**

```python
def euclidean_similarity(x, y):
    ed = euclidean_distance(x, y)
    sr = square_rooted((x+y)/2)
    return 1 - ed/sr
```

#### Mathematical Definitions

- **Square Root Function**

```python
def square_rooted(self, x):
    return sqrt(sum([a*a for a in x]))
```

- **Cosine Similarity Formula**

```python

cosine_similarity = (sum(a*b for a,b in zip(x,y))) / (sqrt(sum(a**2 for a in x)) * sqrt(sum(b**2 for b in y)))

```

- **Euclidean Similarity Definition**

```python
euclidean_similarity = 1 - (euclidean_distance(x, y) / square_rooted((x+y)/2))
```

### Detailed Analysis

#### Model Transformation Flow

1. **Origin Model → Top Mlir**

- Direct transformation via "To Top Mlir" arrow

- Maintains Float precision throughout

2. **Top Mlir → F32 Tpu Mlir**

- Explicit "To F32 Mlir" conversion

- Preserves Float precision

3. **Top Mlir → Int8 Tpu Mlir**

- Requires "CastOp (Int8)" operation

- Reduces precision from Float to Int8

- Maintains Float output

#### Code Snippets

- **Square Root Function**

- Computes L2 norm via element-wise squaring and summation

- Returns `sqrt(sum([a*a for a in x]))`

- **Cosine Similarity**

- Calculates dot product divided by product of magnitudes

- Rounds result to 3 decimal places

- **Euclidean Similarity**

- Uses Euclidean distance normalized by the L2 norm of the element-wise average of `x` and `y`

- Returns 1 for identical vectors; the score is not bounded below and can be negative
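The range of the Euclidean similarity score is worth checking numerically, since `1 - ed/sr` is capped at 1 but has no lower bound. The sketch below uses hypothetical orthogonal vectors and an assumed helper name `euclidean_sim`.

```python
from math import sqrt


def euclidean_sim(x, y):
    """1 - distance / norm of the element-wise average, as in the transcribed formula."""
    ed = sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))
    sr = sqrt(sum(((a + b) / 2) ** 2 for a, b in zip(x, y)))
    return 1 - ed / sr


identical = euclidean_sim([1.0, 0.0], [1.0, 0.0])   # distance 0, score 1
orthogonal = euclidean_sim([1.0, 0.0], [0.0, 1.0])  # distance exceeds the norm, score -1

# Opposite vectors average to zero, so the normalizer vanishes.
try:
    euclidean_sim([1.0, 0.0], [-1.0, 0.0])
    opposite_defined = True
except ZeroDivisionError:
    opposite_defined = False
```

In practice the outputs of an F32 model and its Int8 counterpart should be close, so the score is expected to sit just below 1; the negative and undefined cases only matter as failure signals.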

### Key Observations

1. **Precision Optimization**

- Model transformations show deliberate precision reduction (Float → Int8)

- Suggests hardware efficiency optimization for TPU inference

2. **Mathematical Consistency**

- Both similarity metrics are built on the same L2-norm helper (`square_rooted`)

- Rounding to 3 decimals limits the reported precision of the cosine score

3. **Hardware-Specific Adaptation**

- Int8 Tpu Mlir introduces quantization (CastOp) while maintaining Float outputs

- Float inputs and outputs imply casts at the boundaries of the Int8 network

### Interpretation

The diagram reveals a staged approach to model optimization for TPU deployment:

1. **Quantization Strategy**

- Maintains Float precision in Top Mlir stage

- Applies 8-bit quantization (Int8) only in final Tpu Mlir stage

- Suggests staged optimization balancing accuracy and efficiency

2. **Similarity Metric Implementation**

- Cosine similarity is rounded to 3 decimal places before it is reported

- Euclidean similarity combines distance calculation with normalization

- Both metrics are presumably used to compare model outputs before and after conversion

3. **Hardware-Software Co-Design**

- Model architecture (F32/Int8) directly maps to TPU capabilities

- Mathematical operations align with hardware acceleration requirements

- CastOp operations indicate explicit precision management for hardware compatibility

The absence of numerical data points suggests this is a conceptual architecture diagram rather than empirical results. The emphasis on precision management and standardized mathematical operations indicates a focus on reproducible, hardware-optimized model deployment.
