## Diagram Type: Model Transformation and Similarity Metrics Flowchart

### Overview

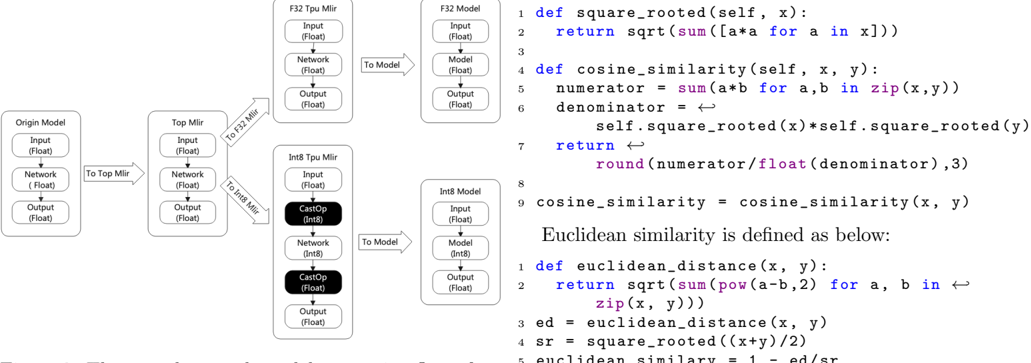

The image is a technical diagram showing how a machine learning model is converted through successive stages at different precisions (F32, Int8), alongside code that defines two similarity metrics: cosine similarity and Euclidean similarity. It combines flowchart elements with code snippets.

### Components/Axes

#### Flowchart Components

1. **Origin Model**

- Input (Float)

- Network (Float)

- Output (Float)

2. **Top Mlir**

- Input (Float)

- Network (Float)

- Output (Float)

3. **F32 Tpu Mlir**

- Input (Float)

- Network (Float)

- Output (Float)

4. **Int8 Tpu Mlir**

- Input (Float)

- Network (Int8)

- Output (Float)

#### Code Sections

1. **Cosine Similarity Function**

```python
def cosine_similarity(self, x, y):
    # Dot product of x and y divided by the product of their L2 norms.
    numerator = sum(a*b for a, b in zip(x, y))
    denominator = self.square_rooted(x)*self.square_rooted(y)
    return round(numerator/float(denominator), 3)
```

2. **Euclidean Similarity Function**

```python
def euclidean_similarity(x, y):
    # x and y are assumed to support element-wise + and / (e.g. NumPy arrays).
    ed = euclidean_distance(x, y)
    sr = square_rooted((x+y)/2)
    return 1 - ed/sr
```

#### Mathematical Definitions

- **Square Root Function**

```python
from math import sqrt

def square_rooted(self, x):
    # L2 norm: square root of the sum of squared elements.
    return sqrt(sum([a*a for a in x]))
```

- **Cosine Similarity Formula**

```python
cosine_similarity = (sum(a*b for a,b in zip(x,y))) / (sqrt(sum(a**2 for a in x)) * sqrt(sum(b**2 for b in y)))
```

- **Euclidean Similarity Definition**

```python
euclidean_similarity = 1 - (euclidean_distance(x, y) / square_rooted((x+y)/2))
```
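In conventional notation, the three definitions above can be restated as follows. This is only a rewriting of the code: $\lVert\cdot\rVert_2$ denotes the L2 norm computed by `square_rooted`, the symbols $x_i$, $y_i$ and $n$ are introduced here for illustration, and `euclidean_distance` is assumed to be the usual L2 norm of the difference, which the snippets do not show.

```latex
\[
\lVert x \rVert_2 = \sqrt{\sum_{i=1}^{n} x_i^2}
\]
\[
\mathrm{cosine\_similarity}(x, y)
  = \frac{\sum_{i=1}^{n} x_i y_i}{\lVert x \rVert_2 \, \lVert y \rVert_2}
\]
\[
\mathrm{euclidean\_similarity}(x, y)
  = 1 - \frac{\lVert x - y \rVert_2}{\left\lVert (x + y)/2 \right\rVert_2}
\]
```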

### Detailed Analysis

#### Model Transformation Flow

1. **Origin Model → Top Mlir**

- Direct conversion via the "To Top Mlir" arrow

- Keeps Float precision throughout

2. **Top Mlir → F32 Tpu Mlir**

- Explicit "To F32 Mlir" conversion

- Preserves Float precision

3. **Top Mlir → Int8 Tpu Mlir**

- Requires a "CastOp (Int8)" operation

- Reduces the network's precision from Float to Int8

- Keeps Float input and output
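The Float → Int8 → Float pattern in the last path can be illustrated with a round trip through 8-bit integers. The sketch below is a minimal illustration, not the diagram's actual CastOp: it assumes symmetric per-tensor quantization with a single scale factor, and the helper names `quantize_int8` and `dequantize_int8` as well as the sample values are hypothetical.

```python
def quantize_int8(values, scale):
    """Cast floats to Int8: divide by scale, round, clamp to [-128, 127]."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def dequantize_int8(q_values, scale):
    """Cast Int8 values back to floats by multiplying by the scale."""
    return [q * scale for q in q_values]


# Hypothetical float tensor; the symmetric per-tensor scale is an assumption.
float_input = [0.5, -1.2, 0.25, 2.0]
scale = max(abs(v) for v in float_input) / 127

int8_values = quantize_int8(float_input, scale)
restored = dequantize_int8(int8_values, scale)

# For values inside the clamp range, the round-trip error per element
# is at most half a quantization step.
max_error = max(abs(a - b) for a, b in zip(float_input, restored))
print(int8_values, max_error <= scale / 2)
```

The restored values differ slightly from the originals, which is the precision loss that the Int8 path trades for efficiency.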

#### Code Snippets

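- **Square Root Function**

- Despite its name, `square_rooted` computes the L2 norm of a vector: it squares each element, sums the squares, and takes the square root

- Returns `sqrt(sum([a*a for a in x]))`

- **Cosine Similarity**

- Divides the dot product of `x` and `y` by the product of their L2 norms

- Rounds the result to 3 decimal places

- **Euclidean Similarity**

- Divides the Euclidean distance between `x` and `y` by the L2 norm of their element-wise mean `(x+y)/2`, then subtracts that ratio from 1

- Returns 1 for identical inputs; the score is not bounded below by 0, because the distance can exceed the norm of the mean (orthogonal unit vectors score about -1)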
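The snippets in the diagram are fragments: they rely on `self`, on an undefined `euclidean_distance`, and on array arithmetic for `(x+y)/2`. A self-contained version for plain Python lists might look like the sketch below. The `euclidean_distance` definition is an assumption (the standard L2 distance), and the element-wise mean is written out explicitly so that lists work.

```python
from math import sqrt


def square_rooted(x):
    """L2 norm of a sequence of numbers."""
    return sqrt(sum(a * a for a in x))


def euclidean_distance(x, y):
    """Assumed definition: L2 norm of the element-wise difference."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def cosine_similarity(x, y):
    """Dot product over the product of norms, rounded to 3 decimals."""
    numerator = sum(a * b for a, b in zip(x, y))
    denominator = square_rooted(x) * square_rooted(y)
    return round(numerator / float(denominator), 3)


def euclidean_similarity(x, y):
    """1 minus the distance divided by the norm of the element-wise mean."""
    ed = euclidean_distance(x, y)
    mean = [(a + b) / 2 for a, b in zip(x, y)]
    return 1 - ed / square_rooted(mean)


same = [1.0, 2.0, 3.0]
print(cosine_similarity(same, same))                  # identical vectors
print(euclidean_similarity(same, same))               # identical vectors
print(euclidean_similarity([1.0, 0.0], [0.0, 1.0]))   # orthogonal unit vectors
```

Identical vectors score 1 on both metrics, while the orthogonal pair gives a Euclidean similarity of roughly -1, so the metric should not be read as a value confined to the range 0 to 1. Both functions divide by a norm, so an all-zero input (or, for the Euclidean variant, a pair with `y == -x`) raises `ZeroDivisionError`.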

### Key Observations

1. **Precision Optimization**

- Only the Int8 path reduces precision (Float → Int8); the other stages stay in Float

- This is consistent with optimizing for efficient TPU inference

2. **Mathematical Consistency**

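- Both similarity metrics are built from the same primitives: sums of products, square roots, and the shared `square_rooted` norm helper

- Cosine similarity is rounded to 3 decimal places, so differences smaller than about 0.0005 do not show up in the reported value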

3. **Hardware-Specific Adaptation**

- Int8 Tpu Mlir introduces quantization (CastOp) while keeping Float outputs

- A Float input and output around an Int8 network implies casts at the network boundary: Float → Int8 on entry and Int8 → Float on exit
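The effect of rounding cosine similarity to 3 decimal places can be seen with two vectors that differ slightly. The sketch below uses a hypothetical unrounded helper, `cosine_raw`, and made-up sample vectors; it only illustrates the resolution limit.

```python
from math import sqrt


def cosine_raw(x, y):
    """Cosine similarity without the final rounding step."""
    dot = sum(a * b for a, b in zip(x, y))
    return dot / (sqrt(sum(a * a for a in x)) * sqrt(sum(b * b for b in y)))


reference = [1.0, 2.0, 3.0, 4.0]   # hypothetical reference output
perturbed = [1.0, 2.0, 3.0, 4.1]   # hypothetical slightly different output

raw = cosine_raw(reference, perturbed)
print(raw, round(raw, 3))
```

The unrounded value is just below 1 (about 0.99992), but the rounded value is exactly 1.0, so the two vectors are indistinguishable from identical ones at this resolution.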

### Interpretation

The diagram shows a staged approach to preparing a model for TPU deployment:

1. **Quantization Strategy**

- Keeps Float precision in the Top Mlir stage

- Applies 8-bit quantization (Int8) only in the Int8 Tpu Mlir stage

- Suggests a staged optimization that balances accuracy against efficiency

2. **Similarity Metric Implementation**

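- Cosine similarity rounds its result to 3 decimal places, which sets the resolution of any comparison based on it

- Euclidean similarity combines a distance calculation with normalization by the norm of the mean vector

- Shown beside the conversion flow, both metrics most plausibly serve to compare outputs between stages rather than to drive decisions inside the model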

3. **Hardware-Software Co-Design**

- The two Tpu Mlir variants (F32 and Int8) correspond to the precisions targeted on the TPU

- The similarity functions are plain Python arithmetic and do not depend on the hardware

- CastOp operations indicate explicit precision management at the boundary between Float data and the Int8 network
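One way to read the points above together is that the output of a Float stage and the output of the Int8 stage can be compared with a similarity metric. The sketch below illustrates that idea under stated assumptions: the "network" is a toy matrix-vector product, quantization is symmetric per-tensor, and every name and value (`linear_float`, `linear_int8`, `weights`, `inputs`) is hypothetical rather than taken from the diagram.

```python
from math import sqrt


def cosine_similarity(x, y):
    """Cosine similarity rounded to 3 decimals, as in the diagram's snippet."""
    dot = sum(a * b for a, b in zip(x, y))
    norm = sqrt(sum(a * a for a in x)) * sqrt(sum(b * b for b in y))
    return round(dot / norm, 3)


def quantize(values, scale):
    """Symmetric Int8 cast (an assumed scheme): scale, round, clamp."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def linear_float(weights, inputs):
    """Toy Float 'network': a single matrix-vector product."""
    return [sum(w * v for w, v in zip(row, inputs)) for row in weights]


def linear_int8(weights, inputs):
    """Same product with Int8 weights and inputs; the output is cast back to float."""
    w_scale = max(abs(w) for row in weights for w in row) / 127
    x_scale = max(abs(v) for v in inputs) / 127
    q_x = quantize(inputs, x_scale)
    q_w = [quantize(row, w_scale) for row in weights]
    acc = [sum(w * v for w, v in zip(row, q_x)) for row in q_w]  # integer accumulation
    return [a * w_scale * x_scale for a in acc]


weights = [[0.2, -0.5, 0.1], [0.7, 0.3, -0.4]]   # hypothetical values
inputs = [0.9, 2.0, -1.5]                        # hypothetical values

float_out = linear_float(weights, inputs)
int8_out = linear_int8(weights, inputs)
print(float_out, int8_out, cosine_similarity(float_out, int8_out))
```

For this toy case the two outputs agree to within a few thousandths per element, and the rounded cosine similarity is close to 1; a real network would accumulate quantization error over many layers.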

The diagram contains no numerical data, which suggests it is a conceptual architecture diagram rather than a presentation of empirical results. Its emphasis on explicit precision management and on precisely defined similarity metrics points to a workflow aimed at reproducible, hardware-optimized model deployment.