EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Neural Network Diagram: ViT-Based Rule Generation

### Overview

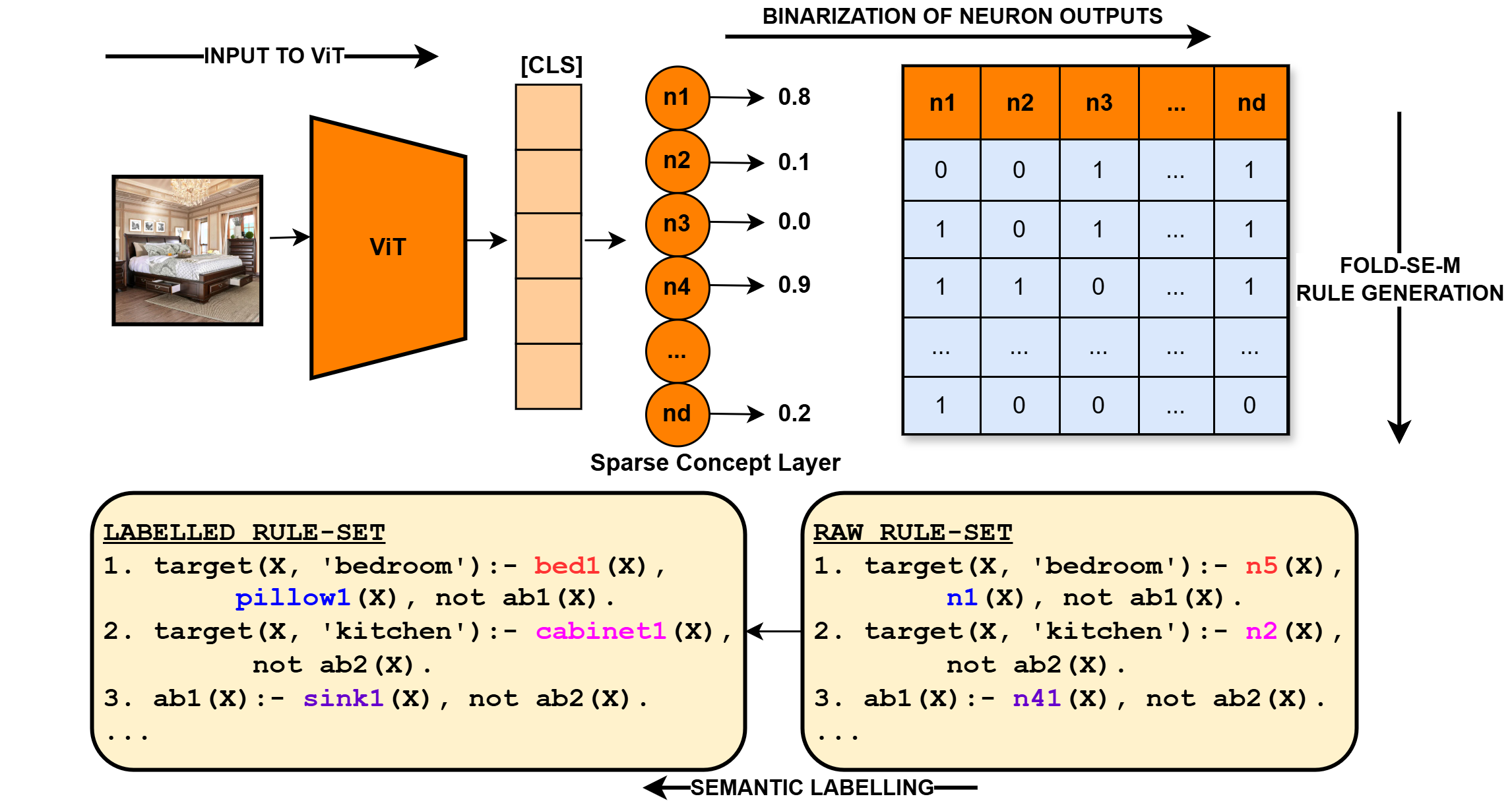

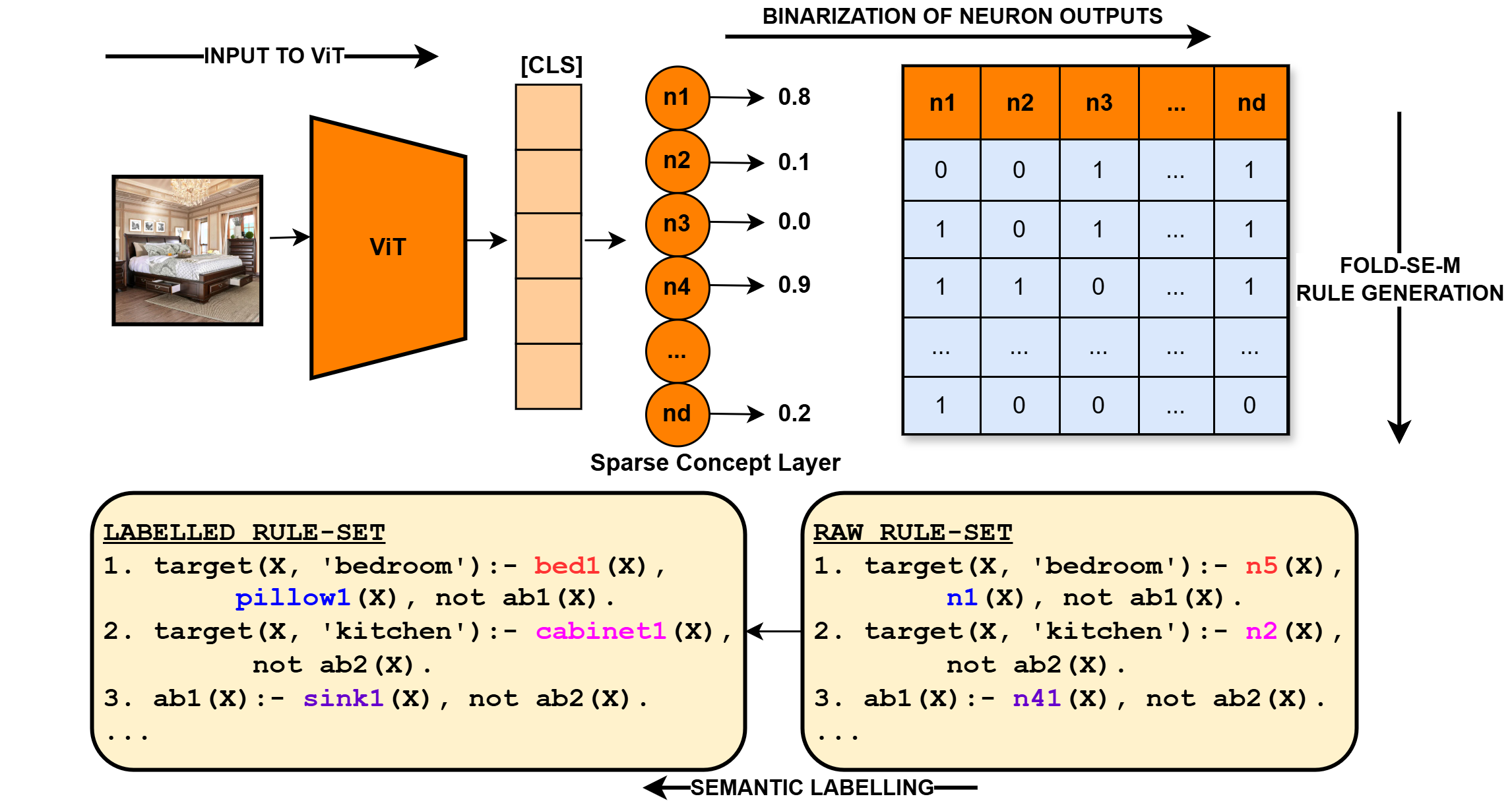

The image illustrates a process of generating rules from a Vision Transformer (ViT) model. It shows the flow from an input image to a binarized neuron output, followed by rule generation and semantic labeling.

### Components/Axes

* **Input Image:** A photograph of a bedroom.

* **Input to ViT:** An arrow pointing from the input image to the ViT block.

* **ViT:** A trapezoidal block labeled "ViT" (Vision Transformer).

* **[CLS]:** A column of orange rectangles, representing the classification token output from the ViT.

* **Sparse Concept Layer:** A layer of neurons (n1, n2, n3, n4, ..., nd) with corresponding output values (0.8, 0.1, 0.0, 0.9, ..., 0.2).

* **Binarization of Neuron Outputs:** A table showing the binarized outputs of the neurons. The columns are labeled n1, n2, n3, ..., nd. The rows represent different patterns of neuron activation.

* **FOLD-SE-M Rule Generation:** An arrow pointing downwards from the binarization table, indicating the rule generation process.

* **RAW RULE-SET:** A block of text containing rules generated directly from the neuron outputs.

* **LABELLED RULE-SET:** A block of text containing semantically labeled rules.

* **SEMANTIC LABELLING:** An arrow pointing from the "RAW RULE-SET" to the "LABELLED RULE-SET", indicating the semantic labeling process.

### Detailed Analysis

**1. Input Image and ViT:**

* The input image is a bedroom scene.

* The ViT processes the image and generates a classification token.

**2. Sparse Concept Layer:**

* The neurons n1, n2, n3, n4, and nd have output values of approximately 0.8, 0.1, 0.0, 0.9, and 0.2, respectively.

* The values represent the activation levels of these neurons.

**3. Binarization of Neuron Outputs:**

The table represents the binarized outputs of the neurons. The values are either 0 or 1.

| | n1 | n2 | n3 | ... | nd |
| :---- | :-- | :-- | :-- | :-- | :-- |
| Row 1 | 0 | 0 | 1 | ... | 1 |
| Row 2 | 1 | 0 | 1 | ... | 1 |
| Row 3 | 1 | 1 | 0 | ... | 1 |
| ... | ... | ... | ... | ... | ... |
| Row N | 1 | 0 | 0 | ... | 0 |
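As a minimal sketch of this step, the snippet below thresholds the example activations from the Sparse Concept Layer. The cutoff of 0.5 and the function name `binarize` are assumptions made for illustration; the diagram does not state how the threshold is chosen.

```python
def binarize(activations, threshold=0.5):
    """Map continuous neuron outputs to 0/1 values.

    The threshold is an assumed value; the figure does not specify one.
    """
    return [1 if a > threshold else 0 for a in activations]


# Example activations read from the figure for n1, n2, n3, n4 and nd.
example = [0.8, 0.1, 0.0, 0.9, 0.2]
row = binarize(example)
```

Applying the same function to every input image would produce one row per sample, which is the shape of the table above.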

**4. Rule Sets:**

* **RAW RULE-SET:**

1. `target(X, 'bedroom'):- n5(X), n1(X), not ab1(X).`

2. `target(X, 'kitchen'):- n2(X), not ab2(X).`

3. `ab1(X):- n41(X), not ab2(X).`

4. `...`

* **LABELLED RULE-SET:**

1. `target(X, 'bedroom'):- bed1(X), pillow1(X), not ab1(X).`

2. `target(X, 'kitchen'):- cabinet1(X), not ab2(X).`

3. `ab1(X):- sink1(X), not ab2(X).`

4. `...`
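Comparing the two rule sets line by line suggests that semantic labelling amounts to renaming neuron predicates. The sketch below illustrates that idea only; the mapping `CONCEPT_NAMES` is read off the three visible rules, and the function name `label_rule` is hypothetical. How the labels are actually obtained is not shown in the figure.

```python
import re

# Mapping inferred by comparing the raw and labelled rules in the figure.
CONCEPT_NAMES = {"n5": "bed1", "n1": "pillow1", "n2": "cabinet1", "n41": "sink1"}


def label_rule(rule, names=CONCEPT_NAMES):
    """Replace neuron predicates such as n5 with their concept names.

    Predicates without a known label (and ab1, ab2, ...) are left unchanged.
    """
    return re.sub(r"\bn\d+\b", lambda m: names.get(m.group(0), m.group(0)), rule)


raw_rules = [
    "target(X, 'bedroom'):- n5(X), n1(X), not ab1(X).",
    "target(X, 'kitchen'):- n2(X), not ab2(X).",
    "ab1(X):- n41(X), not ab2(X).",
]
labelled_rules = [label_rule(r) for r in raw_rules]
```

The word-boundary pattern keeps `n41` from being rewritten as `n4` plus a stray digit, and it leaves `not` and the `ab` predicates untouched.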

### Key Observations

* The diagram illustrates a pipeline for generating rules from visual data using a ViT model.

* The sparse concept layer represents an intermediate representation of the image.

* The binarization process converts the continuous neuron outputs into binary values.

* The FOLD-SE-M algorithm generates rules based on these binary patterns.

* Semantic labeling refines the rules by replacing neuron activations with meaningful concepts.

### Interpretation

The diagram demonstrates a method for extracting symbolic knowledge from visual data using a Vision Transformer. The process involves transforming an image into a set of neuron activations, binarizing these activations, and then generating rules based on the resulting binary patterns. Finally, semantic labeling is used to refine the rules and make them more interpretable. This approach could be used to build AI systems that can reason about visual scenes and make decisions based on learned rules. The transformation from RAW RULE-SET to LABELLED RULE-SET is a critical step in making the rules human-understandable and actionable.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Visualizing Rule Generation from Image Input

### Overview

This diagram illustrates a process for generating rules from image input using a Vision Transformer (ViT) model, a sparse concept layer, and the FOLD-SE-M rule generation algorithm. The process involves inputting an image, extracting features with the ViT, binarizing neuron outputs, generating a raw rule set, and then producing a labelled rule set through semantic labelling.

### Components/Axes

The diagram consists of the following key components:

* **Input Image:** A photograph of a bedroom scene.

* **ViT (Vision Transformer):** A red rectangular block representing the image feature extractor.

* **Sparse Concept Layer:** A series of circles labeled `n1`, `n2`, `n3`, `n4`, ..., `nd`, representing neurons with associated confidence scores.

* **Binarization of Neuron Outputs:** A rectangular table showing the binary (0 or 1) outputs of the neurons.

* **FOLD-SE-M Rule Generation:** A labelled arrow indicating the rule generation process that turns the binarized table into the Raw Rule-Set.

* **Labelled Rule-Set:** A block of text listing rules with semantic labels.

* **Raw Rule-Set:** A block of text listing rules without semantic labels.

* **Arrows:** Indicate the flow of information between components.

* **[CLS]**: A label indicating the classification token.

### Detailed Analysis or Content Details

**1. Input to ViT:**

* The input is an image of a bedroom. The image shows a bed, a window, and other furniture.

**2. ViT Output & Sparse Concept Layer:**

* The ViT model processes the image and outputs features to the Sparse Concept Layer.

* The Sparse Concept Layer consists of neurons `n1` through `nd`.

* The confidence scores associated with each neuron are as follows (approximate values):

    * `n1`: 0.8

    * `n2`: 0.1

    * `n3`: 0.0

    * `n4`: 0.9

    * `nd`: 0.2

**3. Binarization of Neuron Outputs:**

* The neuron outputs are binarized (converted to 0 or 1). The table shows the binary outputs for neurons `n1` through `nd`.

* The table has columns `n1`, `n2`, `n3`, ..., `nd`.

* The table has rows of binary values (0 or 1), one row per pattern of neuron activation.

* Example values, read column by column (rows 1, 2, 3, ..., N):

    * `n1`: 0, 1, 1, ..., 1

    * `n2`: 0, 0, 1, ..., 0

    * `n3`: 1, 1, 0, ..., 0

    * `nd`: 1, 1, 1, ..., 0

**4. Labelled Rule-Set:**

* The labelled rule-set contains rules with semantic labels.

* Example rules:

1. `target(X, 'bedroom') :- bed1(X), pillow1(X), not ab1(X).`

2. `target(X, 'kitchen') :- cabinet1(X), not ab2(X).`

3. `ab1(X) :- sink1(X), not ab2(X).`

**5. Raw Rule-Set:**

* The raw rule-set contains rules without semantic labels.

* Example rules:

1. `target(X, 'bedroom') :- n5(X), n1(X), not ab1(X).`

2. `target(X, 'kitchen') :- n2(X), not ab2(X).`

3. `ab1(X) :- n41(X), not ab2(X).`

**6. Semantic Labelling:**

* An arrow labeled "SEMANTIC LABELLING" connects the Raw Rule-Set to the Labelled Rule-Set, indicating the process of adding semantic labels to the rules.

**7. Fold-SE-M Rule Generation:**

* An arrow labeled "FOLD-SE-M RULE GENERATION" points downward from the Binarization of Neuron Outputs table to the Raw Rule-Set.

### Key Observations

* The confidence scores of the neurons vary significantly, suggesting that some neurons are more strongly activated by the input image than others.

* The binarization process converts the continuous confidence scores into discrete binary values, which may result in information loss.

* The labelled rule-set provides more interpretable rules by associating semantic labels with the concepts.

* The raw rule-set uses neuron identifiers (e.g., `n5(X)`) instead of semantic labels.

### Interpretation

This diagram illustrates a pipeline for converting visual information into symbolic rules. The ViT model extracts features from the image, and the sparse concept layer represents these features as a set of neurons. The binarization step creates a discrete representation of the neuron activations, which is then used to generate rules. The semantic labelling process adds meaning to the rules, making them more interpretable. The overall goal is to create a system that can reason about images and generate logical rules based on their content. The difference between the "Labelled Rule-Set" and the "Raw Rule-Set" suggests a process of mapping neuron activations to human-understandable concepts. The varying confidence scores of the neurons indicate that the system is able to identify different concepts with varying degrees of certainty. The use of "not" in the rules suggests that the system is also able to identify the absence of certain concepts. This system could be used for tasks such as visual question answering, image captioning, or robot navigation.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Vision Transformer (ViT) to Interpretable Rule Generation Pipeline

### Overview

The image is a technical flowchart illustrating a machine learning pipeline that transforms an input image into human-interpretable logical rules. The process uses a Vision Transformer (ViT) to extract features, binarizes neuron activations, generates raw rules via an algorithm called "FOLD-SE-M," and finally applies "Semantic Labelling" to produce a human-readable rule set. The diagram is divided into three main regions: the input and feature extraction (left), the binarization and rule generation (top-right), and the two resulting rule sets (bottom).

### Components/Axes

The diagram contains the following labeled components and text elements, organized by spatial region:

**1. Input & Feature Extraction (Left Side):**

* **Image:** A photograph of a bedroom interior (bed, nightstand, chandelier).

* **Label:** `INPUT TO ViT` (with an arrow pointing right).

* **Model Block:** An orange trapezoid labeled `ViT`.

* **Output Token:** A vertical stack of orange rectangles labeled `[CLS]`.

* **Layer Label:** `Sparse Concept Layer` (below the neuron circles).

* **Neuron Circles:** A vertical column of orange circles labeled `n1`, `n2`, `n3`, `n4`, `...`, `nd`.

* **Activation Values:** Arrows from each neuron to a numerical value: `n1 → 0.8`, `n2 → 0.1`, `n3 → 0.0`, `n4 → 0.9`, `...`, `nd → 0.2`.

**2. Binarization & Rule Generation (Top-Right):**

* **Process Label:** `BINARIZATION OF NEURON OUTPUTS` (with an arrow pointing right).

* **Binarization Table:** A grid with an orange header row (`n1`, `n2`, `n3`, `...`, `nd`) and five data rows containing binary values (0s and 1s).

* Row 1: `0`, `0`, `1`, `...`, `1`

* Row 2: `1`, `0`, `1`, `...`, `1`

* Row 3: `1`, `1`, `0`, `...`, `1`

* Row 4: `...`, `...`, `...`, `...`, `...`

* Row 5: `1`, `0`, `0`, `...`, `0`

* **Algorithm Label:** `FOLD-SE-M RULE GENERATION` (with a downward arrow pointing to the Raw Rule-Set).

**3. Rule Sets (Bottom):**

* **Raw Rule-Set Box (Right):** A beige rounded rectangle titled `RAW RULE-SET`. It contains three numbered logical rules in a Prolog-like syntax. Key elements are color-coded (pink for `n5`, `n2`, `n41`; blue for `n1`).

1. `target(X, 'bedroom') :- n5(X), n1(X), not ab1(X).`

2. `target(X, 'kitchen') :- n2(X), not ab2(X).`

3. `ab1(X) :- n41(X), not ab2(X).`

4. `...`

* **Semantic Labelling Arrow:** A leftward arrow labeled `SEMANTIC LABELLING` connecting the Raw Rule-Set to the Labelled Rule-Set.

* **Labelled Rule-Set Box (Left):** A beige rounded rectangle titled `LABELLED RULE-SET`. It contains the semantically labeled version of the rules. Key elements are color-coded (red for `bed1`, `cabinet1`; blue for `pillow1`; purple for `sink1`).

1. `target(X, 'bedroom') :- bed1(X), pillow1(X), not ab1(X).`

2. `target(X, 'kitchen') :- cabinet1(X), not ab2(X).`

3. `ab1(X) :- sink1(X), not ab2(X).`

4. `...`

### Content Details

The pipeline details are as follows:

1. **Input Processing:** An image of a bedroom is fed into a Vision Transformer (ViT). The ViT processes the image and outputs a `[CLS]` token representation.

2. **Sparse Concept Activation:** The `[CLS]` token activates a "Sparse Concept Layer" consisting of neurons `n1` through `nd`. The example shows specific activation strengths: `n1=0.8`, `n2=0.1`, `n3=0.0`, `n4=0.9`, `nd=0.2`.

3. **Binarization:** The continuous activation values are converted into a binary matrix. Each row in the table likely represents a different input sample or data point. The columns correspond to neurons `n1` to `nd`. A value of `1` indicates the neuron is active (above a threshold), and `0` indicates it is inactive. The first row shows neurons `n3` and `nd` are active for that sample.

4. **Rule Generation:** The "FOLD-SE-M" algorithm processes the binarized data to generate a `RAW RULE-SET`. The rules use the abstract neuron identifiers (`n5`, `n1`, `n2`, `n41`) as predicates. For example, Rule 1 states that for an input `X` to be classified as a 'bedroom', neurons `n5` and `n1` must be active, and the abnormality condition `ab1` must not hold.

5. **Semantic Labelling:** The abstract neuron identifiers in the raw rules are mapped to human-interpretable concept names (e.g., `n5` → `bed1`, `n1` → `pillow1`, `n2` → `cabinet1`, `n41` → `sink1`). This produces the final `LABELLED RULE-SET`. The rules now read as intuitive logical statements: e.g., a 'bedroom' is defined by the presence of a bed and a pillow, and the absence of a specific abnormality (`ab1`), which itself is defined by the presence of a sink.
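To make the reading of the rules in steps 4 and 5 concrete, the sketch below evaluates the three visible raw rules for a single sample in plain Python. It is an illustration under stated assumptions, not the FOLD-SE-M implementation: the function name `classify` is hypothetical, and because no rule defining `ab2` is visible in the figure (the rule set is truncated with `...`), `ab2` is treated as false, as negation as failure would do for a predicate that cannot be proven.

```python
def classify(active):
    """Evaluate the three visible raw rules for one sample.

    `active` is the set of neuron names whose binarized output is 1.
    """
    # No defining rule for ab2 is visible in the figure, so it is
    # assumed false here (it cannot be proven).
    ab2 = False

    # ab1(X) :- n41(X), not ab2(X).
    ab1 = "n41" in active and not ab2

    labels = []
    # target(X, 'bedroom') :- n5(X), n1(X), not ab1(X).
    if "n5" in active and "n1" in active and not ab1:
        labels.append("bedroom")
    # target(X, 'kitchen') :- n2(X), not ab2(X).
    if "n2" in active and not ab2:
        labels.append("kitchen")
    return labels
```

For example, a sample with `n5` and `n1` active satisfies the bedroom rule, while additionally activating `n41` triggers the exception `ab1` and blocks it.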

### Key Observations

* **Color Coding:** Colors are used systematically to trace concepts. Orange represents the ViT and its neurons. In the rule sets, pink highlights raw neuron IDs, while red, blue, and purple highlight their corresponding semantic labels (`bed1`, `pillow1`, `sink1`).

* **Rule Structure:** The rules follow a Prolog-like logic-programming syntax, using `:-` for implication (read as "if") and `not` for negation, which in Prolog-style programs means negation as failure. They include both target classification rules and intermediate "abnormality" (`ab`) definition rules.

* **Sparsity:** The term "Sparse Concept Layer" and the binarization step suggest the system aims to identify a small, discrete set of meaningful features from the high-dimensional ViT output.

* **Transformation:** The core transformation is from subsymbolic, continuous neural activations (`0.8`, `0.1`) to symbolic, discrete logical rules (`bed1(X), pillow1(X)`).
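The rule structure noted above can be made concrete by splitting a rule into its head, its positive body literals, and its negated ones. The following is a small sketch for the rule shapes shown in the figure only; the `Rule` type and `parse_rule` function are hypothetical names, and this is not a general Prolog parser.

```python
import re
from typing import NamedTuple


class Rule(NamedTuple):
    head: str
    positive: list  # body literals that must hold
    negated: list   # body literals that must not be provable


def parse_rule(text):
    """Split 'head :- lit1, lit2, not lit3.' into its parts."""
    head, _, body = text.strip().rstrip(".").partition(":-")
    # Split the body on commas that are not inside parentheses.
    literals = [l.strip() for l in re.split(r",\s*(?![^()]*\))", body) if l.strip()]
    positive = [l for l in literals if not l.startswith("not ")]
    negated = [l[len("not "):].strip() for l in literals if l.startswith("not ")]
    return Rule(head.strip(), positive, negated)


rule = parse_rule("target(X, 'bedroom') :- n5(X), n1(X), not ab1(X).")
```

Separating positive and negated literals mirrors the default-plus-exception reading of the rules: the positive literals state the default, and each negated `ab` literal names an exception.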

### Interpretation

This diagram depicts a method for **extracting interpretable, logical knowledge from a black-box vision model (ViT)**. The pipeline bridges the gap between connectionist AI (neural networks) and symbolic AI (logic rules).

* **What it demonstrates:** It shows a concrete workflow for "opening the black box." Instead of just getting a classification ("bedroom"), the system produces an explicit, auditable reason: a bed and a pillow are present, and the exception `ab1` (triggered by a sink, unless `ab2` holds) does not apply.

* **Relationship between elements:** The ViT acts as a powerful feature extractor. The Sparse Concept Layer and binarization act as a bottleneck to distill these features into discrete, switch-like concepts. The FOLD-SE-M algorithm then performs rule induction from these binary patterns. Finally, semantic labelling grounds these abstract patterns in human-understandable vocabulary, likely using an external knowledge base or embedding similarity.

* **Significance:** This approach addresses key criticisms of deep learning—lack of transparency and interpretability. The resulting rules can be inspected, verified, and potentially edited by humans. The inclusion of "abnormality" rules (`ab1`, `ab2`) suggests the system can also model and reason about exceptions or confounding factors. The pipeline is valuable for domains requiring explainable AI, such as medical diagnosis or autonomous systems, where understanding the "why" behind a decision is crucial.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Flowchart: Visual Concept Extraction and Rule Generation Pipeline

### Overview

The diagram illustrates a technical pipeline for extracting visual concepts from an input image using a Vision Transformer (ViT) and generating interpretable rules through neuron binarization and semantic labeling. The process involves multiple stages: feature extraction, sparse concept identification, binarization, and rule generation.

### Components/Axes

1. **Input to ViT**:

   - Input image (bedroom scene) → ViT (orange hexagon)

   - Output: Class token (CLS) (peach rectangle)

2. **Sparse Concept Layer**:

   - Neurons n1, n2, n3, n4, ..., nd with activation values (0.8, 0.1, 0.0, 0.9, ..., 0.2)

   - Binarization matrix (0s/1s) representing neuron states

3. **FOLD-SE-M Rule Generation**:

   - Left: Labeled Rule-Set (bedroom/kitchen concepts)

   - Right: Raw Rule-Set (neuron-based rules)

4. **Semantic Labeling**:

   - Connection between raw rules and labeled concepts

### Detailed Analysis

#### Binarization Matrix

| Row | n1 | n2 | n3 | ... | nd |
|-----|----|----|----|-----|----|
| 1   | 0  | 0  | 1  | ... | 1  |
| 2   | 1  | 0  | 1  | ... | 1  |
| 3   | 1  | 1  | 0  | ... | 1  |
| ... | ...| ...| ...| ... | ...|
| N   | 1  | 0  | 0  | ... | 0  |

#### Rule Sets

**Labeled Rule-Set**:

1. `target(X, 'bedroom'):- bed1(X), pillow1(X), not ab1(X).`

2. `target(X, 'kitchen'):- cabinet1(X), not ab2(X).`

3. `ab1(X):- sink1(X), not ab2(X).`

**Raw Rule-Set**:

1. `target(X, 'bedroom'):- n5(X), n1(X), not ab1(X).`

2. `target(X, 'kitchen'):- n2(X), not ab2(X).`

3. `ab1(X):- n41(X), not ab2(X).`

### Key Observations

1. **Activation Thresholding**: Continuous activations are binarized to 0 or 1. The figure does not state the threshold; with an assumed cutoff of 0.5, n1=0.8→1 and n3=0.0→0

2. **Concept Composition**: Bedroom concept combines bed1 + pillow1 while excluding ab1

3. **Exception Negation**: Rules explicitly exclude abnormality (exception) predicates (ab1/ab2)

4. **Neuron Mapping**: Raw rules use neuron identifiers (e.g., n1, n2, n5, n41) while labeled rules use semantic terms (bed1, cabinet1)

### Interpretation

This pipeline demonstrates a method for creating interpretable AI systems by:

1. **Concept Decomposition**: Breaking down complex visual concepts (bedroom/kitchen) into constituent neurons

2. **Rule Extraction**: Translating neural activations into human-readable logical rules

3. **Exception Handling**: Explicitly modeling exceptions to a rule through negated abnormality predicates (e.g., `not ab1(X)`)

FOLD-SE-M is the rule-generation algorithm named in the diagram: it induces the raw rule-set from the binarized neuron outputs, and semantic labelling then connects those low-level neuron predicates to high-level semantic concepts. The explicit negation in rules indicates the system can model both presence and absence of features, crucial for accurate scene understanding.

The pipeline's strength lies in its ability to maintain interpretability while leveraging the representational power of deep learning models, potentially enabling more transparent AI decision-making in visual domains.
