## Diagram: Comparison of Context, GPT Answer, and Ground Truth for Action Recognition

### Overview

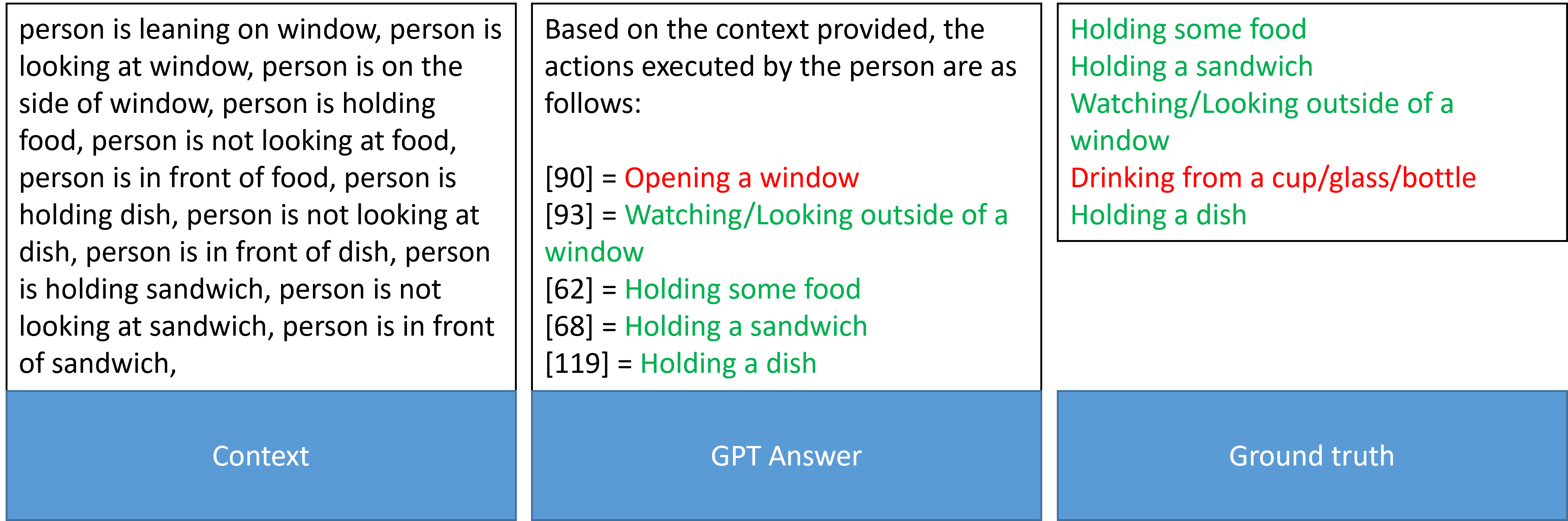

The image is a three-panel diagram comparing textual descriptions of a person's actions and states. The left panel provides raw observational context, the middle panel shows a structured answer generated by a GPT model, and the right panel lists the ground truth actions. The diagram appears to be from a technical evaluation or research context, likely assessing the performance of an AI model in interpreting contextual data to identify human actions.

### Components/Axes

The diagram consists of three vertically aligned rectangular panels, each with a blue footer label.

1. **Left Panel (Labeled "Context"):** Contains a block of plain text describing a person's spatial relationships and interactions with objects.

2. **Middle Panel (Labeled "GPT Answer"):** Contains a structured list of actions with associated numerical identifiers in brackets. The text uses color coding (red and green).

3. **Right Panel (Labeled "Ground truth"):** Contains a list of actions, also using color coding (green and red).

### Detailed Analysis

#### Panel 1: Context

This panel contains a continuous block of English text. The text is a list of observational statements about a person. The full transcription is:

"person is leaning on window, person is looking at window, person is on the side of window, person is holding food, person is not looking at food, person is in front of food, person is holding dish, person is not looking at dish, person is in front of dish, person is holding sandwich, person is not looking at sandwich, person is in front of sandwich,"

#### Panel 2: GPT Answer

This panel presents a structured interpretation of the context. It begins with an introductory sentence and lists five actions with numerical codes.

* **Introductory Text:** "Based on the context provided, the actions executed by the person are as follows:"

* **Listed Actions (with color and code):**

* `[90] = Opening a window` (Text in **red**)

* `[93] = Watching/Looking outside of a window` (Text in **green**)

* `[62] = Holding some food` (Text in **green**)

* `[68] = Holding a sandwich` (Text in **green**)

* `[119] = Holding a dish` (Text in **green**)

#### Panel 3: Ground truth

This panel lists the correct or reference actions. The text is color-coded, likely indicating correctness relative to the GPT Answer.

* **Listed Actions (with color):**

* `Holding some food` (Text in **green**)

* `Holding a sandwich` (Text in **green**)

* `Watching/Looking outside of a window` (Text in **green**)

* `Drinking from a cup/glass/bottle` (Text in **red**)

* `Holding a dish` (Text in **green**)

### Key Observations

1. **Color Coding Discrepancy:** The color meaning appears inverted between the "GPT Answer" and "Ground truth" panels.

* In the **GPT Answer**, "Opening a window" is in **red**, while the other four actions are in **green**.

* In the **Ground truth**, "Drinking from a cup/glass/bottle" is in **red**, while the other four actions are in **green**.

* This suggests that in the GPT Answer panel, **red** may indicate an action the model predicted that is *not* in the ground truth (a false positive). In the Ground truth panel, **red** may indicate an action that is correct but was *missed* by the GPT model (a false negative).

2. **Action Comparison:**

* **Matches (True Positives):** Three actions appear in both lists with green text in the Ground Truth: "Holding some food," "Holding a sandwich," "Watching/Looking outside of a window," and "Holding a dish." (Note: "Holding a dish" is green in both).

* **GPT False Positive:** The action "Opening a window" (red in GPT Answer) is not present in the Ground Truth list.

* **GPT False Negative:** The action "Drinking from a cup/glass/bottle" (red in Ground Truth) is not present in the GPT Answer.

3. **Context vs. Inference:** The "Context" panel describes states ("holding," "in front of," "not looking at") but does not explicitly state the action "Opening a window" or "Drinking." The GPT model inferred "Opening a window" from the context about the window, which the ground truth does not support. The ground truth includes "Drinking," which is not directly supported by the provided context statements.

### Interpretation

This diagram illustrates a common challenge in AI evaluation: mapping ambiguous, descriptive context to a discrete set of action labels. The comparison reveals the strengths and weaknesses of the GPT model's reasoning.

* **What the data suggests:** The model successfully extracted several core actions directly mentioned or strongly implied by the context (holding food/sandwich/dish, looking out a window). However, it also hallucinated an action ("Opening a window") based on associative reasoning (person near window -> might be opening it) and failed to infer an action ("Drinking") that may have been present in the original scene but was not described in the provided context snippet.

* **Relationship between elements:** The "Context" is the input, the "GPT Answer" is the model's output, and the "Ground truth" is the benchmark. The color coding visually highlights the model's precision (avoiding false positives like "Opening a window") and recall (capturing all true actions like "Drinking").

* **Notable anomaly:** The most significant finding is the model's confidence in an action ("Opening a window") that is entirely absent from the ground truth. This indicates a potential bias in the model's training or reasoning, where it over-interprets spatial proximity as interaction. The missed "Drinking" action suggests the model may rely too heavily on explicit textual cues and struggles with actions that are contextually plausible but not stated. This evaluation is crucial for improving the model's reliability in real-world applications like video understanding or assistive technology.