## Textual Analysis: Action Recognition Context and Ground Truth

### Overview

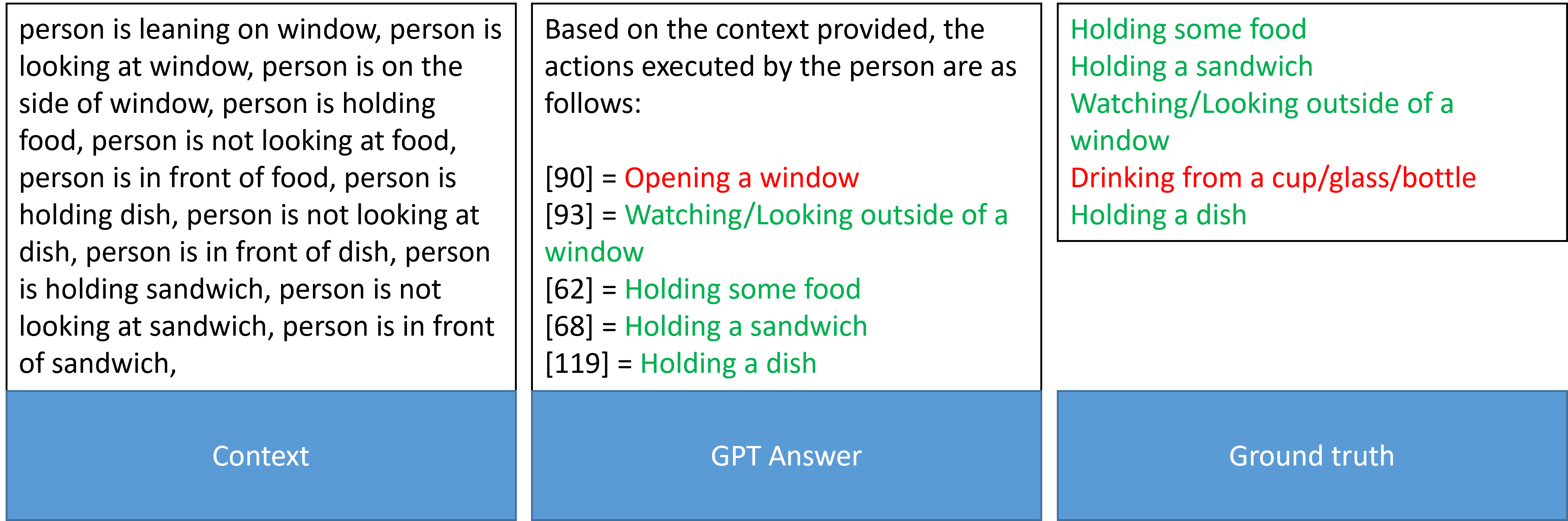

The image presents a comparative analysis of action recognition between a generated text (GPT Answer) and ground truth data. It consists of three sections:

1. **Context**: Describes a person's actions and states (e.g., "person is leaning on window," "person is holding food").

2. **GPT Answer**: Lists actions with numerical identifiers and color-coded labels (red/green).

3. **Ground Truth**: Lists actions with numerical identifiers and color-coded labels (green/red).

### Components/Axes

- **Sections**:

- **Context**: Descriptive text of observed actions/states.

- **GPT Answer**: Model-generated action labels with numerical IDs and color-coded validity (red = incorrect, green = correct).

- **Ground Truth**: Reference action labels with numerical IDs and color-coded validity (green = correct, red = incorrect).

- **Labels**:

- **Context**: No explicit labels; free-form text.

- **GPT Answer/Ground Truth**:

- Numerical IDs (e.g., [90], [93], [62], [68], [119]).

- Action descriptions (e.g., "Opening a window," "Holding a sandwich").

- Color codes: Red (incorrect), Green (correct).

### Detailed Analysis

#### Context Section

- Describes a person's actions and states:

- Leaning on a window, looking at a window, holding food/dish/sandwich.

- Explicit negations: "person is not looking at food," "person is not looking at sandwich."

#### GPT Answer Section

- **Key Entries**:

- `[90] = Opening a window` (red)

- `[93] = Watching/Looking outside of a window` (green)

- `[62] = Holding some food` (green)

- `[68] = Holding a sandwich` (green)

- `[119] = Holding a dish` (green)

#### Ground Truth Section

- **Key Entries**:

- `Holding some food` (green)

- `Holding a sandwich` (green)

- `Watching/Looking outside of a window` (green)

- `Drinking from a cup/glass/bottle` (red)

- `Holding a dish` (green)

### Key Observations

1. **Action Overlap**:

- Both GPT Answer and Ground Truth include:

- `Holding a sandwich` (green in both).

- `Holding a dish` (green in both).

- `Watching/Looking outside of a window` (green in both).

2. **Discrepancies**:

- **GPT Answer**: Incorrectly labels `[90]` as "Opening a window" (red).

- **Ground Truth**: Includes `Drinking from a cup/glass/bottle` (red), absent in GPT Answer.

3. **Color Coding**:

- Red labels in GPT Answer indicate errors (e.g., `[90]`).

- Red label in Ground Truth (`Drinking...`) suggests it is an incorrect action.

### Interpretation

- **Purpose**: The image evaluates the accuracy of a language model's action recognition against ground truth data.

- **Model Performance**:

- The GPT Answer correctly identifies most actions (e.g., `Holding a sandwich`, `Holding a dish`) but fails to recognize `Drinking...` and incorrectly labels `Opening a window`.

- Ground Truth includes an outlier (`Drinking...`), which the model did not predict, suggesting a potential gap in training data or model capability.

- **Numerical IDs**: Likely correspond to predefined action categories, but their exact mapping is unclear without additional context.

- **Color Significance**: Red/green coding provides a visual cue for correctness, aiding rapid validation of model outputs.

### Conclusion

The image highlights the model's strengths (accurate identification of holding actions and window-related behaviors) and weaknesses (missed actions like drinking, incorrect window-opening label). This analysis underscores the importance of refining action recognition models to handle nuanced scenarios and edge cases.